ASUS Radeon EAH5850

If we had an award for the “best bang for the buck”, it would require little thinking to give it to ATI’s Radeon HD 5850. For the price, it offers incredible performance, superb power consumption, and of course, DirectX 11 support. We’re taking a look at ASUS’ version here, which, along with Dirt 2, includes a surprisingly useful overclocking tool.

Page 2 – Test System & Methodology

At Techgage, we strive to make sure our results are as accurate as possible. Our testing is rigorous and time-consuming, but we feel the effort is worth it. In an attempt to leave no question unanswered, this page contains not only our testbed specifications, but also a fully-detailed look at how we conduct our testing. For an exhaustive look at our methodologies, even down to the Windows Vista installation, please refer to this article.

Test Machine

The table below lists our testing machine’s hardware, which remains unchanged throughout all GPU testing, apart from the graphics card. Each card used for comparison is also listed here, along with the driver version used. Each one of the URLs in this table can be clicked to view the respective review of that product, or if a review doesn’t exist, it will bring you to the product on the manufacturer’s website.

| Component | Model |
| --- | --- |
| Processor | Intel Core i7-975 Extreme Edition – Quad-Core, 3.33GHz, 1.33v |
| Motherboard | Gigabyte GA-EX58-EXTREME – X58-based, F7 BIOS (05/11/09) |
| Memory | Corsair DOMINATOR – DDR3-1333 7-7-7-24-1T, 1.60 |
| ATI Graphics | Radeon HD 5870 1GB (Sapphire) – Catalyst 9.10; Radeon HD 5850 1GB (ASUS) – Catalyst 9.10; Radeon HD 5770 1GB (Reference) – Beta Catalyst (10/06/09); Radeon HD 4890 1GB (Sapphire) – Catalyst 9.8; Radeon HD 4870 1GB (Reference) – Catalyst 9.8; Radeon HD 4770 512MB (Gigabyte) – Catalyst 9.8 |
| NVIDIA Graphics | GeForce GTX 295 1792MB (Reference) – GeForce 186.18; GeForce GTX 285 1GB (EVGA) – GeForce 186.18; GeForce GTX 275 896MB (Reference) – GeForce 186.18; GeForce GTX 260 896MB (XFX) – GeForce 186.18; GeForce GTS 250 1GB (EVGA) – GeForce 186.18 |
| Audio | On-Board Audio |
| Storage | |
| Power Supply | |
| Chassis | |
| Display | |
| Cooling | |
| Et cetera | |

When preparing our testbeds for any type of performance testing, we follow these guidelines:

- General Guidelines

- No power-saving options are enabled in the motherboard’s BIOS.

- Internet is disabled.

- No Virus Scanner or Firewall is installed.

- The OS is kept clean; no scrap files are left in between runs.

- Hard drives affected are defragged with PerfectDisk 10 prior to a fresh benchmarking run.

- Machine has proper airflow and the room temperature is 80°F (27°C) or less.

To aid the goal of keeping results accurate and repeatable, we prevent certain services in Windows Vista from starting up at boot. These services tend to start up in the background without notice, potentially causing slightly inaccurate results. Disabling “Windows Search”, for example, turns off the OS’ indexing, which can at times utilize the hard drive and memory more than we’d like.

- Services Disabled Prior to Benchmarking

- PerfectDisk 10

- Windows Defender

- Windows Error Reporting Service

- Windows Event Log

- Windows Firewall

- Windows Search

- Windows Update

For more robust information on how we tweak Windows, please refer once again to this article.
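As a rough illustration of how the service list above could be applied, here is a minimal Python sketch that builds `sc config` commands for the Windows `sc` tool. This is not necessarily how we disable them; the mapping from display names to service short names is an assumption that should be checked with `sc query` on the target machine, and PerfectDisk 10 is left out because its service name isn't given here. The function only builds the commands and does not run them.

```python
# Assumed mapping from the display names in the list above to Windows
# service short names; verify each with `sc query` before relying on it.
SERVICE_NAMES = {
    "Windows Defender": "WinDefend",
    "Windows Error Reporting Service": "WerSvc",
    "Windows Event Log": "EventLog",
    "Windows Firewall": "MpsSvc",
    "Windows Search": "WSearch",
    "Windows Update": "wuauserv",
}


def build_disable_commands(display_names):
    """Return one `sc config` argument list per service, without running it."""
    commands = []
    for name in display_names:
        short_name = SERVICE_NAMES[name]
        # `sc` expects "start=" and its value as separate tokens.
        commands.append(["sc", "config", short_name, "start=", "disabled"])
    return commands


if __name__ == "__main__":
    # Print the commands for review instead of executing them.
    for command in build_disable_commands(SERVICE_NAMES):
        print(" ".join(command))
```

Each printed line would need to be run from an elevated command prompt, and a service disabled this way stays disabled until its start type is changed back.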

Game Titles

At this time, we benchmark all of our games using three popular resolutions: 1680×1050, 1920×1080 and 2560×1600. 1680×1050 was chosen as it’s one of the most popular resolutions for gamers sporting ~20″ displays. 1920×1080 might stand out, since we’ve always used 1920×1200 in the past, but we didn’t make this change without some serious thought. After taking a look at the current landscape for desktop monitors around ~24″, we noticed that 1920×1200 is definitely on the way out, as more and more models are coming out as native 1080p. It’s for this reason that we chose it. Finally, for high-end gamers, we also benchmark using 2560×1600, a resolution with just about twice the pixels of 1080p.
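To put the resolutions above side by side, this short Python sketch works out the pixel count of each one and its size relative to 1080p. The names `RESOLUTIONS` and `pixel_count` are made up for the example.

```python
# Resolutions mentioned in the paragraph above, as (width, height) in pixels.
RESOLUTIONS = {
    "1680x1050": (1680, 1050),
    "1920x1080": (1920, 1080),
    "1920x1200": (1920, 1200),
    "2560x1600": (2560, 1600),
}


def pixel_count(width, height):
    """Total number of pixels rendered per frame at a given resolution."""
    return width * height


if __name__ == "__main__":
    baseline = pixel_count(*RESOLUTIONS["1920x1080"])
    for name, (width, height) in RESOLUTIONS.items():
        pixels = pixel_count(width, height)
        # Ratio shows how much more (or less) work each frame is versus 1080p.
        print(f"{name}: {pixels:,} pixels ({pixels / baseline:.2f}x 1080p)")
```

2560×1600 comes out at 4,096,000 pixels against 2,073,600 for 1920×1080, a ratio of roughly 1.98.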

For graphics cards that include less than 1GB of GDDR, we omit Grand Theft Auto IV from our testing, as our chosen detail settings require at least 800MB of available graphics memory. Also, if the card we’re benchmarking doesn’t offer the performance to handle 2560×1600 across most of our titles reliably, only 1680×1050 and 1920×1080 will be utilized.
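The two rules above can be sketched as a small Python function that returns the title and resolution combinations to run for a given card. The function name, its parameters and the `handles_2560x1600` flag are hypothetical; whether a card can handle 2560×1600 remains a judgment call made per card, not something computed.

```python
RESOLUTIONS = ["1680x1050", "1920x1080", "2560x1600"]

TITLES = [
    "Call of Duty: World at War",
    "Call of Juarez: Bound in Blood",
    "Crysis Warhead",
    "F.E.A.R. 2: Project Origin",
    "Grand Theft Auto IV",
    "Race Driver: GRID",
    "World in Conflict: Soviet Assault",
]


def build_test_plan(memory_mb, handles_2560x1600):
    """Return (title, resolution) pairs to benchmark for one card."""
    # Cards with less than 1GB of memory skip Grand Theft Auto IV.
    titles = [
        title for title in TITLES
        if title != "Grand Theft Auto IV" or memory_mb >= 1024
    ]
    # Cards that can't reliably handle 2560x1600 run only the two lower resolutions.
    resolutions = RESOLUTIONS if handles_2560x1600 else RESOLUTIONS[:2]
    return [(title, res) for title in titles for res in resolutions]


if __name__ == "__main__":
    # Example: a 512MB card judged too slow for 2560x1600.
    for title, res in build_test_plan(memory_mb=512, handles_2560x1600=False):
        print(f"{title} @ {res}")
```

With these inputs, a 512MB card gets six titles at two resolutions, while a 1GB card that handles 2560×1600 gets all seven titles at all three.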

Because we value results generated by real-world testing, we don’t utilize timedemos whatsoever. The one possible exception is Futuremark’s 3DMark Vantage. Though it’s not a game, it essentially acts as a robust timedemo. We choose to use it as it’s a standard where GPU reviews are concerned, and we don’t want to deprive our readers of results they expect to see.

All of our results are captured with the help of Beepa’s FRAPS 2.98, while stress-testing and temperature-monitoring are handled by OCCT 3.1.0 and GPU-Z, respectively.

Call of Duty: World at War

Call of Juarez: Bound in Blood

Crysis Warhead

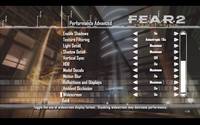

F.E.A.R. 2: Project Origin

Grand Theft Auto IV

Race Driver: GRID

World in Conflict: Soviet Assault
