ATI HD 4870 1GB vs. NVIDIA GTX 260/216 896MB

In the $250 – $300 price range, two graphics cards are vying for your dollar, but which one deserves it most? To find out, we’re taking a thorough look at each. Beyond general performance, we’re also looking at which card comes out ahead in power consumption, temperatures and overall pricing.

Page 6 – 3DMark Vantage, Power Consumption

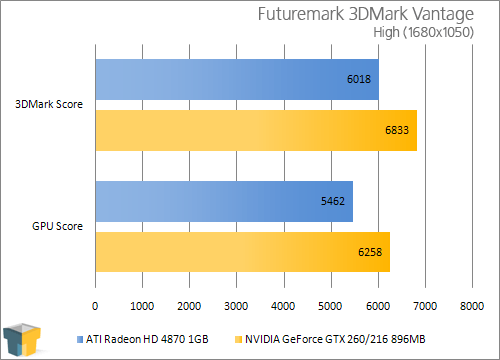

Although we generally shun automated gaming benchmarks, we do like to run at least one to see how our GPUs scale in a ‘timedemo’-type scenario. Futuremark’s 3DMark Vantage is without question the best such test on the market, and it’s a joy to both use and watch. The folks at Futuremark are experts at what they do, and they really know how to push that hardware of yours to its limit.

The company started out as MadOnion and released a GPU-benchmarking tool called XLR8R, which was soon replaced with 3DMark 99. Since that time, we’ve seen seven different versions of the software, including two major updates (3DMark 99 Max, 3DMark 2001 SE). With each new release, the graphics and the capabilities improve, and the urge to get down and dirty with overclocking hits fast.

Similar to a real game, 3DMark Vantage offers many configuration options, although most users (including us) prefer to stick to the profiles, which include Performance, High and Extreme. Depending on which one you choose, the graphics options and the resolution are adjusted accordingly. As you’d expect, the higher the profile, the more intensive the test.

Performance is the stock mode that most people use when benchmarking, but it runs at only 1280×1024, which isn’t representative of what today’s gamers use. Extreme is more appropriate, as it runs at 1920×1200 and does well to push any single or multi-GPU configuration currently on the market, and will do so for some time to come.
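To put the two profile resolutions in perspective, here is a minimal Python sketch that compares how many pixels each one renders per frame. The `pixel_count` helper is our own illustrative name, and pixel count is only a rough proxy for load, since the profiles also change the graphics settings.

```python
def pixel_count(width, height):
    """Number of pixels rendered per frame at the given resolution."""
    return width * height


performance_pixels = pixel_count(1280, 1024)  # Performance profile
extreme_pixels = pixel_count(1920, 1200)      # Extreme profile
ratio = extreme_pixels / performance_pixels

print(performance_pixels)  # 1310720
print(extreme_pixels)      # 2304000
print(round(ratio, 2))     # 1.76
```

In other words, Extreme pushes roughly 76% more pixels per frame than Performance before any of its heavier detail settings are taken into account.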

Nothing too surprising here. Our largest gain was seen in the “High” test, where the GTX 260 scored 13.5% higher than the HD 4870 1GB. In the “Extreme” test, its lead was 11.8%.
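For anyone wanting to run the same comparison on their own scores, the percentage gain is simply the difference between the two scores divided by the baseline. The sketch below uses a hypothetical `percent_gain` helper, and the scores passed to it are made-up values chosen only to illustrate the arithmetic. They are not our measured 3DMark results.

```python
def percent_gain(baseline_score, new_score):
    """Percentage by which new_score exceeds baseline_score."""
    if baseline_score <= 0:
        raise ValueError("baseline_score must be positive")
    return round((new_score - baseline_score) * 100 / baseline_score, 1)


# Hypothetical scores for illustration only, not taken from our graphs.
print(percent_gain(10000, 11350))  # 13.5
print(percent_gain(5000, 5590))    # 11.8
```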

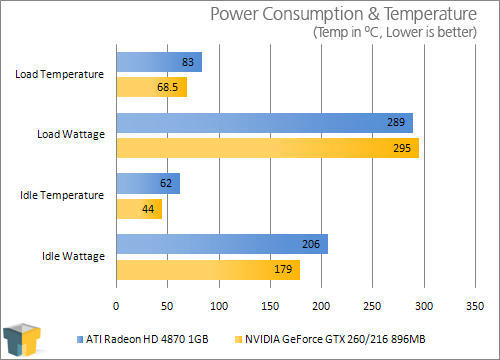

Power Consumption & Temperatures

Since we’ve had so many graphs already, the power consumption and temperature results are being condensed into this last one. For power consumption, we use a Kill-a-Watt with nothing but the PC plugged into it, while for GPU temperature tracking, we use GPU-Z, which does a fantastic job of exporting various bits of information to a clean text file.

To begin, the PC is left turned off for at least five minutes, and is then booted up and left to sit at the Windows desktop for another five minutes. At that point, both the idle wattage and temperature are recorded. To stress the GPU for load information, 3DMark Vantage’s “Extreme” test is executed at 2560×1600. The space flight test is used exclusively here in a loop of three, with the results being recorded at a specific sequence in the run where the GPU appears to be stressed the most.
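If you log temperatures to a text file yourself, pulling out idle and load figures can be scripted. The following is a rough Python sketch, not the exact process we use: it assumes the log is comma-separated with a header row, and that one column name contains the text "GPU Temperature". Both are assumptions about the file layout, and the function names are hypothetical. It also takes the lowest and highest readings as stand-ins for idle and load, which is a simplification of reading the values at specific points in the run.

```python
import csv
import io


def temperature_readings(log_text, column_hint="GPU Temperature"):
    """Return every reading from the first column whose name contains column_hint.

    Assumes a comma-separated log with a single header row.
    """
    reader = csv.reader(io.StringIO(log_text))
    header = [name.strip() for name in next(reader)]
    index = next(i for i, name in enumerate(header) if column_hint in name)
    readings = []
    for row in reader:
        # Skip short or blank rows rather than failing on them.
        if index < len(row) and row[index].strip():
            readings.append(float(row[index]))
    return readings


def idle_and_load(readings):
    """Approximate idle and load as the lowest and highest readings."""
    return min(readings), max(readings)
```

With a log loaded into a string, `idle_and_load(temperature_readings(log_text))` returns the two temperatures as a pair.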

Overall, it seems NVIDIA’s card doesn’t just excel in performance, but in power consumption and temperatures as well. It does lose to the HD 4870 1GB at full load, but since its performance advantage has generally been larger than that 2% deficit, the trade-off seems fair. On top of that, its idle wattage (where the PC will likely sit most of the time) is 14.5W lower.
