Intel Details Nehalem, Dunnington, Tukwila & Larrabee

At a press briefing, Intel revealed much more about its numerous upcoming technologies. In this article, we take a look at Nehalem, Dunnington, Tukwila and Larrabee, along with the new QuickPath Interconnect.

Page 3 – Larrabee, Final Thoughts

Larrabee – Sure to Bee Interesting?

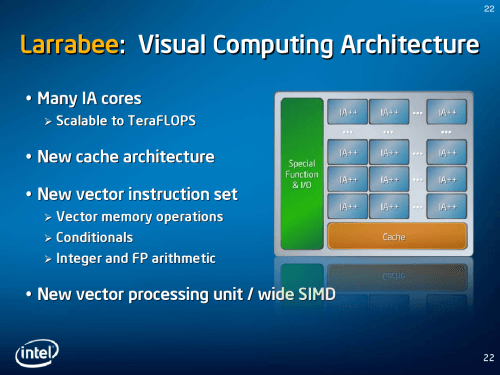

Sure, the title there is one of the lamest I’ve ever come up with, but luckily, the technology doesn’t seem so lame. Larrabee will be a completely scalable GPU that can contain many cores, implements a new cache architecture, and utilizes a new vector processing unit.

Not too much was revealed about Larrabee overall, but Pat made it perfectly clear that it would not be developed as a competitor to ATI’s or NVIDIA’s high-end offerings. He explained that, as an integrated solution, there is simply not enough surface area to support such a powerful beast, not to mention that it would be impossible to develop one that competes with those cards while retaining a reasonable power envelope. This assumption seems fair, as current high-end GPUs can consume as much as 150W.

What it will compete with are the low-end to mid-range GPU offerings. Because Larrabee can scale to include many cores, though, the end result will be a chip designed with other features in mind, not only graphics.

Beyond regular 3D graphics use, Larrabee can also handle audio and video processing, which, alongside the SSE instruction sets, could increase performance dramatically. How well the architecture will be put to use, however, remains to be seen.

Although likely unrelated to Intel’s acquisition of Havok last September, Larrabee will also be able to handle physics processing, and given the number of cores available to a Larrabee chip, the potential is enormous. Other benefits would include life-like rendering and global illumination, improved ray-tracing, superb AI… the possibilities are great.

So while Larrabee will not be a “killer” GPU in itself when compared to high-end offerings, it can do much more than spit out spiffy graphics, which can be put to extremely good use when combined with your primary graphics.

Intel Software – Helping to Get The Show On the Road

The last thing Pat touched on was new and improved Intel development software, meant both to aid developers and to encourage them to support all of Intel’s current and upcoming technologies.

When questioned as to how difficult it would be to develop for Larrabee and the IA++, Pat gave a general answer that I didn’t quite catch, but it seems that if you are a programmer of any sort, developing around these new technologies should be similar to learning how to develop around any new library or programming language. Before wrapping up, though, Pat mentioned that Larrabee would be fully compatible with OpenGL and DirectX, so interoperability should be rather straightforward.

Final Thoughts

There’s not much to be said that hasn’t already been said, but the future is certainly going to be interesting. Every product discussed here is exciting in its own right, but Nehalem will be the chip that many people will be looking forward to by year’s end, although things are sure to get off to a slow start. By the middle of next year, Nehalem should be in full swing and will be in many enthusiasts’ rigs.

Larrabee is also something to pay attention to, because the possibilities are great. If game developers and software developers alike take full advantage of what the technology offers, we could be seeing some incredible things. Looking back at the 45nm launch, I remember how much the SSE4 instruction set impressed me. It boosted encoding performance by more than 50%, but that’s an instruction set for one purpose. Imagine a full chip that can be manipulated in many different ways.

Although we will not be at IDF in early April, we will continue to report on anything newsworthy that comes out of the show. Things should really become interesting at this fall’s IDF in August, where demos of all the products discussed here should be on display.

