
Intel Opens Up About Larrabee

Intel today lifts part of the veil on its upcoming Larrabee architecture, giving us a better look at its implementation, how it differs from a typical GPU, why it benefits from taking the ‘many cores’ route, how its performance scales and, of course, what else it has in store.

Page 3 – Graphics Support, Performance Scaling, Final Thoughts

Though we can’t publish slides, the thing to take away from Larrabee is that it’s ultra-scalable and opens up many parts of the graphics pipeline that used to be fixed. Development should be made easier, and a Larrabee chip should be extremely efficient and fast. Just how fast, we don’t know. Given that IDF takes place in two weeks, it would be surprising not to see hands-on demos there.

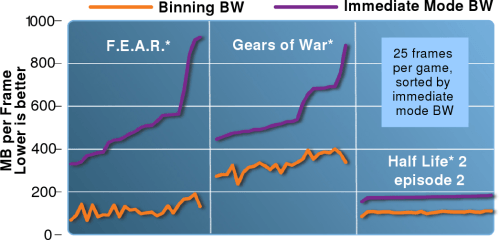

Not surprisingly, no raw performance data has been revealed yet, but Intel did give us a few graphs that show how well binned rendering compares to immediate mode per frame, and also how game performance can scale with Larrabee.

Using a technique called ‘binned rendering’, Intel’s benchmarking results show that it’s possible for a game to use much less memory bandwidth than when aspects of the game are rendered with traditional methods. As you can see, using the new binned mode, each game was able to use substantially less memory bandwidth overall, sometimes half as much.
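To give a rough feel for why binning can cut bandwidth, here is a minimal toy model in Python. It is not Intel’s implementation: the tile size, the function names and the use of axis-aligned rectangles in place of triangles are all assumptions made for illustration, and it counts only framebuffer writes, ignoring depth, texture and bin-storage traffic. The idea is that immediate mode writes every covered pixel of every primitive out to memory, while a binned renderer sorts primitives into screen tiles, shades each tile in on-chip cache, and writes the finished tile out once.

```python
from collections import defaultdict

TILE = 64  # hypothetical tile edge in pixels, chosen only for this sketch


def bin_rects(rects, tile=TILE):
    """Assign each rectangle (x0, y0, x1, y1), max-exclusive, to every tile it overlaps."""
    bins = defaultdict(list)
    for rect in rects:
        x0, y0, x1, y1 = rect
        for ty in range(y0 // tile, (y1 - 1) // tile + 1):
            for tx in range(x0 // tile, (x1 - 1) // tile + 1):
                bins[(tx, ty)].append(rect)
    return bins


def immediate_traffic(rects):
    """Pixels written to memory when every primitive is drawn straight to the framebuffer."""
    return sum((x1 - x0) * (y1 - y0) for x0, y0, x1, y1 in rects)


def binned_traffic(rects, tile=TILE):
    """Pixels written to memory when each touched tile is finished on-chip and flushed once."""
    return len(bin_rects(rects, tile)) * tile * tile


# Three fully overlapping 128x128 primitives (heavy overdraw).
rects = [(0, 0, 128, 128)] * 3
print(immediate_traffic(rects))  # 49152
print(binned_traffic(rects))     # 16384
```

In this contrived case the binned path writes a third as many pixels, because the overdraw is resolved on-chip before anything leaves the tile. Real savings depend on how much overdraw a scene has, which is why Intel’s figures vary from game to game.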

As far as scalability is concerned, Intel also showed in another graph just how much gaming performance could be enhanced as the number of cores is increased. These numbers aren’t the result of typical benchmarks, as their tests were specific in nature.

It’s interesting to note the stark increases, which are almost completely linear in nature. The largest variation was 10%, which is rather modest compared to the larger variations we see when running these kinds of tests on our desktop quad-cores. Intel continued by noting that while the typical GPU has to ‘assume’ what typical workloads will be like in the future, Larrabee is completely scalable and ready to tackle whatever workload comes at it.

What does that mean for the consumer? Instead of seeing certain games excel on certain GPUs, all games should scale similarly on Larrabee, regardless of the game engine. The three games shown above all use different engines, yet all scaled on a linear path. If only most desktop applications took such great advantage of our desktop CPUs…

Final Thoughts

There was a lot more discussed during this press briefing, but most of it is specific to game developers and has little relevance to the end consumer or enthusiast. During the briefing, though, we were able to learn a lot more about the architecture and what benefits it holds, and overall, it’s all rather impressive. Still, until we see real-world examples of all its benefits, it’s going to be difficult to form much of an opinion.

The architecture itself is fascinating, because it differs substantially from typical GPUs. Instead of a single massive GPU core on-board, Larrabee will feature numerous Pentium-derived cores that are redesigned to better handle visual computing applications of all sorts. Just how effective this method of scaling will prove to be in real-world applications is yet to be seen, and it’s sure going to be hard to wait.

Intel did provide performance scaling information, as seen in the chart above, and while it gives us a rough idea of how performance scales, it in no way gives us a real-world idea of what to expect. Eight cores might have delivered 25% of the performance of thirty-two cores, but that doesn’t mean much if the eight cores mustered 4 FPS in a randomly selected title.
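The arithmetic behind that caveat is simple enough to sketch. The snippet below is only an illustration: the function name and the `efficiency` parameter are hypothetical, and the 4 FPS baseline is the made-up figure from the paragraph above, not a measured result.

```python
def scaled_fps(base_fps, base_cores, target_cores, efficiency=1.0):
    """Project a frame rate to a different core count.

    efficiency=1.0 means perfectly linear scaling; lower values model
    a shortfall from linear. Both inputs are assumptions, not Intel data.
    """
    return base_fps * (target_cores / base_cores) * efficiency


# Perfectly linear scaling from 8 to 32 cores is a 4x gain...
print(scaled_fps(4, 8, 32))  # 16.0
```

Going from eight to thirty-two cores quadruples the frame rate under perfect scaling, yet a hypothetical 4 FPS baseline still lands at only 16 FPS. A relative scaling chart says nothing about that starting point.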

For those interested in even deeper specifics regarding how Larrabee can improve graphics rendering (transparency, handling an irregular Z-buffer, etc.), Intel will be giving an in-depth presentation at SIGGRAPH next week in Los Angeles, and I’m sure many game development websites will be publishing lots of information on that.

With Intel’s Developer Forum occurring in just two weeks, we can be sure that there will be some form of a Larrabee demo on display. I’m sure at that time we’ll learn even more about the new architecture and potentially see just how life-changing it could prove to be, when the processor is released in 2009/2010.

We will once again be at this year’s IDF, so stay tuned, as we’ll be sure to pass along any of the cool happenings to you as they take place.
