OCZ Vertex 2 100GB

Are you interested in equipping yourself with one of the fastest SSDs on the planet? If so, then OCZ’s Vertex 2 is the one you want to keep an eye on. Thanks to its tweaked SandForce SF-1200 controller, the Vertex 2 is the fastest SSD we’ve ever tested, dominating almost every single one of our tests.

Page 4 – Synthetic: Iometer & AS SSD

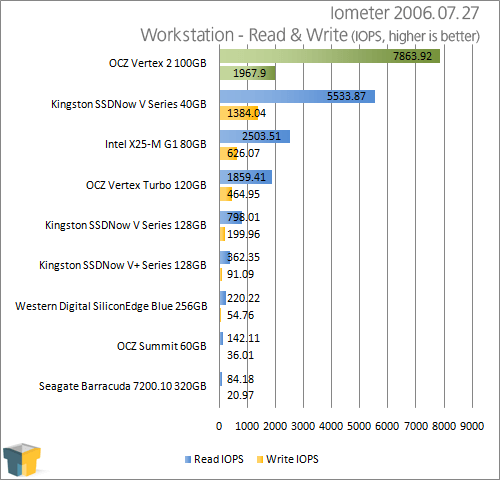

Iometer 2006.07.27

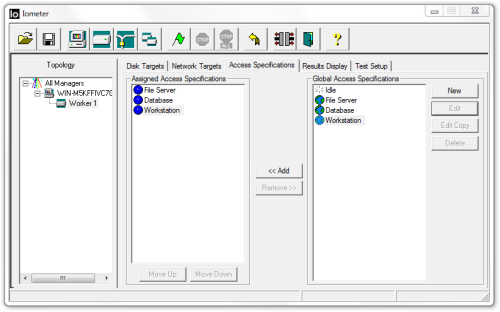

Originally developed by Intel, and since given to the open-source community, Iometer (pronounced “eyeawmeter”, like thermometer) is one of the best storage-testing applications available, for a couple of reasons. The first, and primary, is that it’s completely customizable, and if you have a specific workload you need to hit a drive with, you can easily accomplish it here. Also, the program delivers results in IOPS (input/output operations per second), a common metric used in enterprise and server environments.
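Since IOPS counts operations rather than bytes, it only translates into throughput once the request size is known. The sketch below shows that conversion; the function name and the sample figures are ours for illustration and are not taken from Iometer or from our results.

```python
def iops_to_mb_per_s(iops: float, block_bytes: int) -> float:
    """Convert an IOPS figure into throughput in MB/s (1 MB = 1,000,000 bytes)."""
    return iops * block_bytes / 1_000_000


# Hypothetical example: 1,000 operations per second at 8KB (8,192 bytes) each.
example_throughput = iops_to_mb_per_s(1000, 8 * 1024)
print(f"{example_throughput:.3f} MB/s")
```

The same IOPS number can therefore mean very different things at 512B and at 64KB, which is why the chunk size is always stated alongside each profile.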

The level of customization cannot be overstated. Aside from choosing the obvious figures, like chunk sizes, you can choose the percentage of the time that each respective chunk size will be used in a given test. You can also alter the percentages for read and write, as well as how often either the reads or writes will be random (as opposed to sequential). I’m just scratching the surface here, but what’s most important is that we’re able to deliver a consistent test on all of our drives, which increases the accuracy of our results.

Because of the level of control Iometer offers, we’ve created profiles for three of the most popular workloads out there: Database, File Server and Workstation. Database uses chunk sizes of 8KB, with 67% read, along with 100% random coverage. File Server is the more robust of the group, as it features chunk sizes ranging from 512B to 64KB, in varying levels of access, but again with 100% random coverage. Lastly, Workstation focuses on 8KB chunks with 80% read and 80% random coverage.
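To make the profile parameters concrete, here is a rough sketch of how the Database and Workstation mixes described above could be modeled. This is not Iometer's .icf format or its internals; the `Profile` class and `generate_requests` function are hypothetical names of our own. File Server is left out because its exact chunk-size mix isn't spelled out in the text.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    name: str
    block_bytes: int        # chunk size used for every request
    read_fraction: float    # share of requests that are reads
    random_fraction: float  # share of requests issued at a random offset


# Values taken from the profile descriptions above.
DATABASE = Profile("Database", 8 * 1024, 0.67, 1.00)
WORKSTATION = Profile("Workstation", 8 * 1024, 0.80, 0.80)


def generate_requests(profile: Profile, count: int, seed: int = 0):
    """Return a list of (operation, pattern, size) tuples following the profile mix."""
    rng = random.Random(seed)  # fixed seed so every drive sees the same sequence
    requests = []
    for _ in range(count):
        op = "read" if rng.random() < profile.read_fraction else "write"
        pattern = "random" if rng.random() < profile.random_fraction else "sequential"
        requests.append((op, pattern, profile.block_bytes))
    return requests


sample = generate_requests(WORKSTATION, 5)
for request in sample:
    print(request)
```

Seeding the generator is the part that matters for benchmarking: it mirrors the goal of hitting every drive with an identical workload so the results stay comparable.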

Because these profiles aren’t easily found on the Web, with the same being said about the exact structure of each, we’re hosting the software here for those who want to benchmark their own drives with the exact same profiles we use. That ZIP archive (~3.5MB) includes the application and the three profiles in an .icf file.

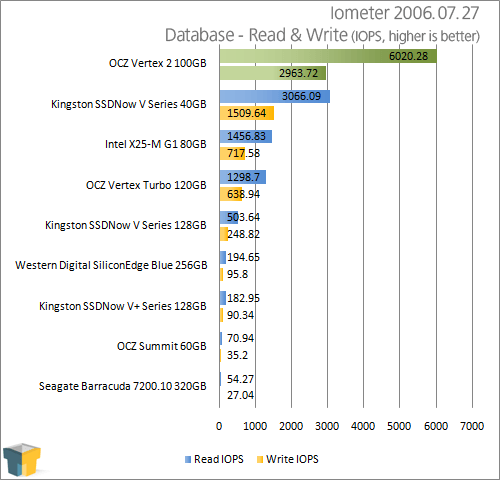

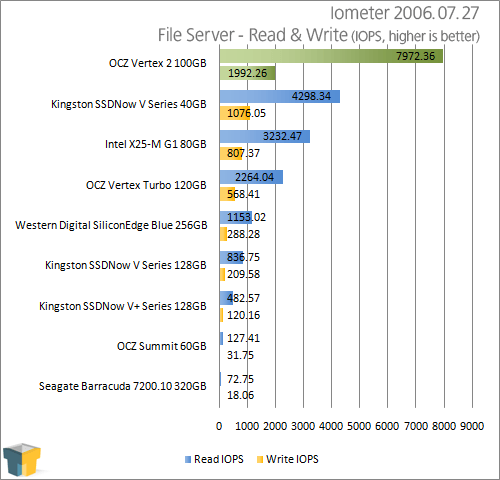

Scenarios such as these are pretty much the bane of hard drives and illustrate why 15,000RPM SCSI drives exist. Ironically, despite Iometer and these tests being created well before SSDs began entering the desktop market, the nature of the tests is going to inherently favor them due to their rapid, near-instantaneous random access times. While we do not have a 15,000RPM or VelociRaptor drive to use for reference, the numbers generated by them would only be a little better than the desktop drive here, due to the large number of tiny reads and writes that have to be made, often randomly, across the platter surface.

If you're looking for a perfect test to illustrate the potential strengths of random access, this would be it. That said, the large number of 512B to 4KB mixed reads and writes in the File Server scenario will also be anathema to any SSD controller that was designed primarily for large sequential read and write performance. We can gauge which candidates have the highest potential to suffer from performance stuttering with these tests. Very small, random file operations are the worst offenders, as they stress the controller the most.

If the SSD controller becomes backlogged, it will exacerbate the stuttering, causing file operations that should take milliseconds to instead take up to a full second or more to complete, thereby only compounding the problem. Unfortunately, the Summit happens to use a Samsung controller optimized solely for large, single sequential writes and makes for a good example as a warning of what to look for.

While we were expecting the Vertex 2 to prove less adept at numerous small file operations, we can easily say we were proven wrong here. The performance results are rather scary, to put it mildly. In the Database test, for example, the mechanical disk reaches 54.27 read IOPS, yet the Vertex 2 is capable of 6,020.28 read IOPS. That’s roughly 111 times the performance! If percentages are your thing, that’s an approximately 10,993% increase.
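For anyone who wants to check the math, the two figures come from the same pair of Database read results: the multiplier is the plain ratio, while the percentage increase subtracts the baseline first. The helper names below are ours.

```python
def speedup(baseline: float, measured: float) -> float:
    """How many times faster the measured result is than the baseline."""
    return measured / baseline


def percent_increase(baseline: float, measured: float) -> float:
    """Increase over the baseline, expressed as a percentage."""
    return (measured - baseline) / baseline * 100.0


hdd_read_iops = 54.27     # mechanical disk, Database test
ssd_read_iops = 6020.28   # Vertex 2, Database test

print(f"{speedup(hdd_read_iops, ssd_read_iops):.1f}x")            # 110.9x
print(f"{percent_increase(hdd_read_iops, ssd_read_iops):.0f}%")   # 10993%
```

The percentage is always 100 points lower than the multiplier times 100, because a drive that merely matches the baseline is "1x" but a "0% increase".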

Differences this large are extremely abnormal in computer technology today. New CPUs and GPUs are often anywhere from 10-30% faster per generation, and with RAM the gains are often lower. With results so drastically higher, it is clear that storage technology is undergoing a revolution, or perhaps more accurately, a paradigm shift. Of course, this is something SSD proponents have been claiming ever since Intel released its first SSDs in 2008!

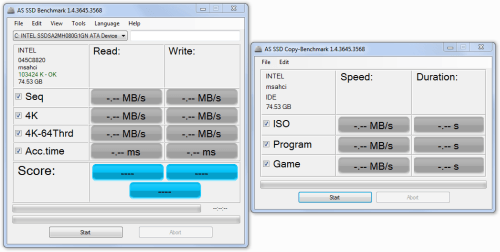

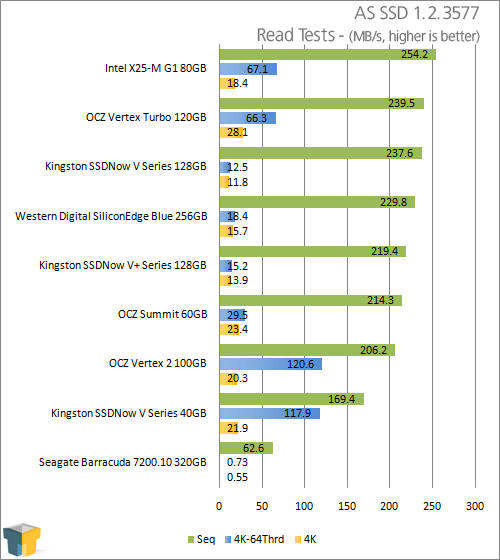

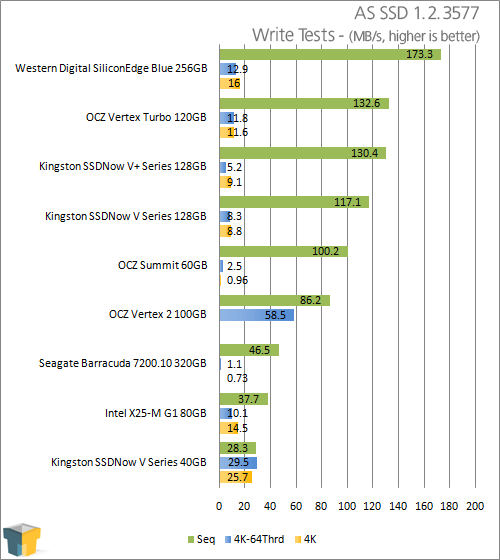

AS SSD

As the name might hint, AS SSD is a nifty little program written exclusively for SSDs. It can be run on mechanical hard drives, but be warned that what should take minutes will take over an hour to benchmark! This handy little tool provides several read/write tests at important file sizes, but also includes a benchmark to simulate the transfer of three types of large files.

We selected this program for its precision, its ability to generate large file sizes on the fly, and the fact that it is written to bypass Windows 7’s automatic caching system. The tool does not bypass any onboard cache.

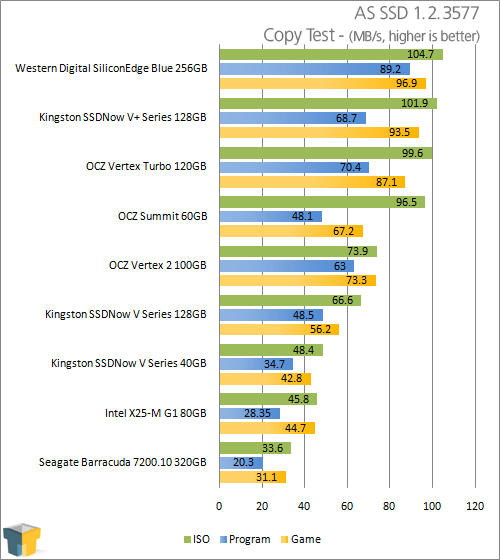

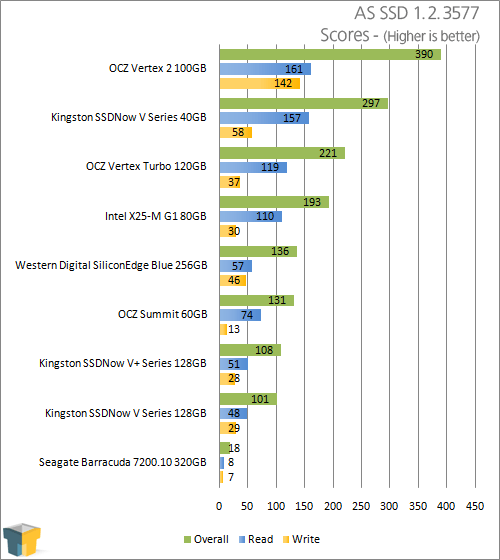

Curiously, AS SSD doesn’t seem to favor the Vertex 2 at all, and we aren’t altogether sure why. We will chalk this one up as an exception, but it does go to show that no single test by itself is always capable of determining an SSD’s performance. Or perhaps I just spoke too soon, as inexplicably the AS SSD benchmark gives the Vertex 2 the top spot in all three scoring metrics, and by a wide margin at that. While we can’t explain how these final scores were reached, the AS SSD program is available as a free download if you wish to try it for yourself.

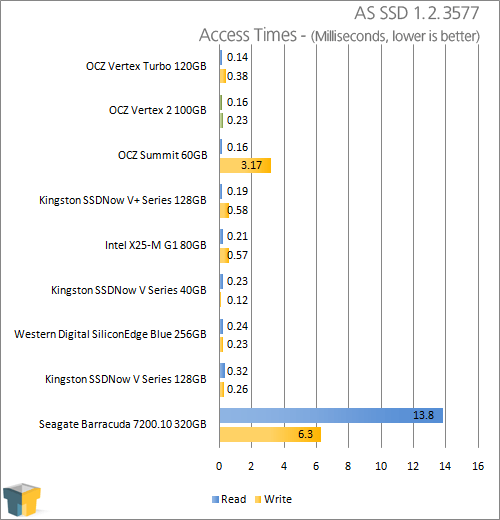

The access times for the Vertex 2 place it second only to the Vertex Turbo. Access times like these are the reason why a mechanical disk RAID array can never be the equal of any good-quality SSD: adding mechanical drives together in RAID does not decrease the initial access latency involved. Concurrent file accesses in RAID can better hide the latency penalty, but they don’t actually remove it.
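A deliberately idealized model shows why. It assumes each request is served by exactly one drive and ignores queueing, controller overhead and striping effects. The 12 ms latency and four-drive array below are hypothetical figures chosen for illustration, not measurements from this review.

```python
def single_request_latency_ms(drive_latency_ms: float, drives: int) -> float:
    """Latency of one isolated request: it still waits on a single drive,
    so the number of drives in the array makes no difference."""
    return drive_latency_ms


def peak_random_iops(drive_latency_ms: float, drives: int) -> float:
    """Best-case aggregate IOPS when enough requests are in flight
    to keep every drive in the array busy at once."""
    return drives * 1000.0 / drive_latency_ms


hdd_latency_ms = 12.0  # hypothetical mechanical drive access time

for drives in (1, 4):
    latency = single_request_latency_ms(hdd_latency_ms, drives)
    iops = peak_random_iops(hdd_latency_ms, drives)
    print(f"{drives} drive(s): {latency:.1f} ms per request, up to {iops:.0f} IOPS")
```

In this model the array's throughput scales with the drive count under concurrent load, but the wait for any single request never shrinks, which is the penalty an SSD avoids outright.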
