Sapphire Radeon R9 280 Dual-X 3GB Graphics Card Review

When we took a look at EVGA’s GeForce GTX 760 Superclocked a couple of weeks ago, we lacked the ideal card to compare it to. With Sapphire’s Radeon R9 280 Dual-X in the house, retailing for just $10 shy of EVGA’s, we’ve managed to remedy that problem. So, let’s dive in, and see how both fare through our battery of tests.

Page 8 – Synthetic Tests: Futuremark 3DMark, 3DMark 11, Unigine Heaven 4.0

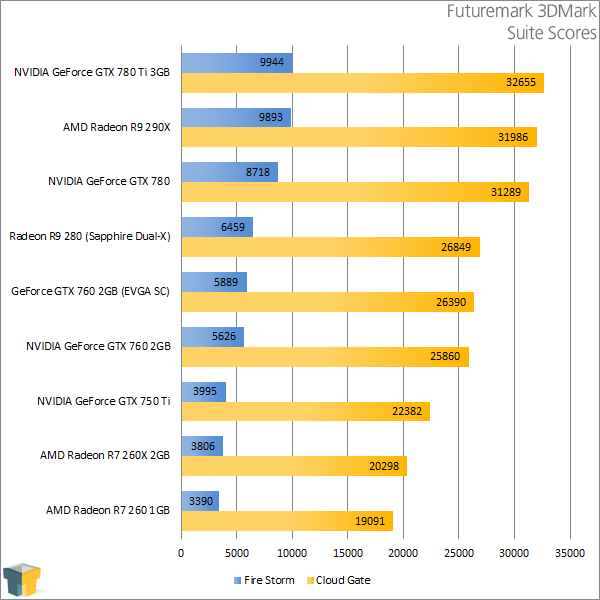

We don’t make it a point to seek out automated gaming benchmarks, but we do like to get a couple in that anyone reading this can run themselves. Of these, Futuremark’s name leads the pack, as its benchmarks have become synonymous with the activity. Plus, it does help that the company’s benchmarks stress PCs to their limit – and beyond.

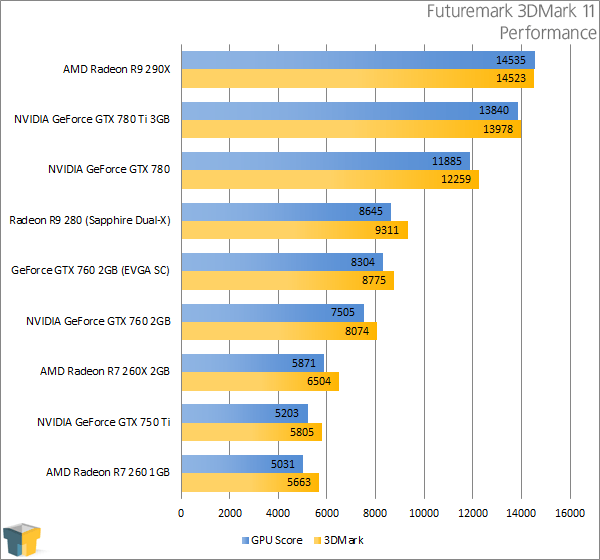

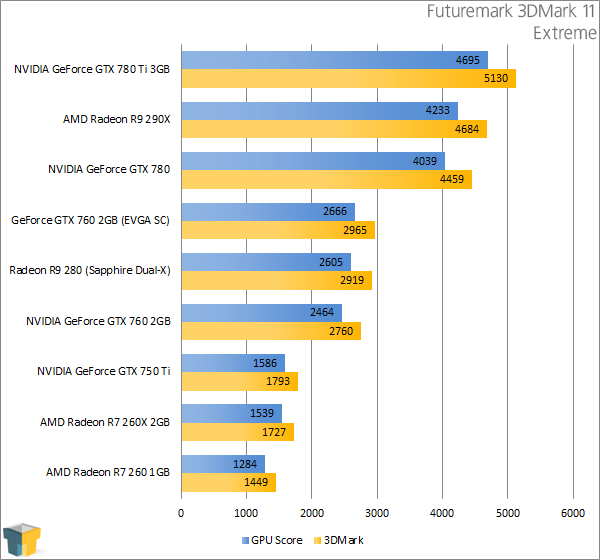

While Futuremark’s latest GPU test suite is 3DMark, I’m also including results from 3DMark 11 as it’s still a common choice among benchmarkers.

Interestingly, while Sapphire’s Dual-X did outperform EVGA’s Superclocked overall, it didn’t stretch its legs in our real-world tests the way it does in 3DMark, where it scores 6459 vs. 5889 in Fire Strike. We don’t see quite as large a delta with 3DMark 11, and in fact I’d say those results come closer to what we saw through the course of the review.
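For anyone who wants to put a number on that gap, here is a minimal sketch of the arithmetic, using the two 3DMark scores quoted above (6459 vs. 5889). The function name `percent_lead` is our own for illustration and isn't part of any benchmark tool.

```python
def percent_lead(score_a: float, score_b: float) -> float:
    """Return how far score_a leads score_b, as a percentage of score_b."""
    if score_b <= 0:
        raise ValueError("baseline score must be positive")
    return (score_a - score_b) / score_b * 100.0


# Scores quoted in the text: Sapphire R9 280 Dual-X vs. EVGA GTX 760 Superclocked
sapphire_score = 6459
evga_score = 5889

lead = percent_lead(sapphire_score, evga_score)
print(f"Sapphire leads by {lead:.1f}%")  # prints "Sapphire leads by 9.7%"
```

The same helper works for any pair of scores where higher is better, so you can plug in your own results to see how they compare.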

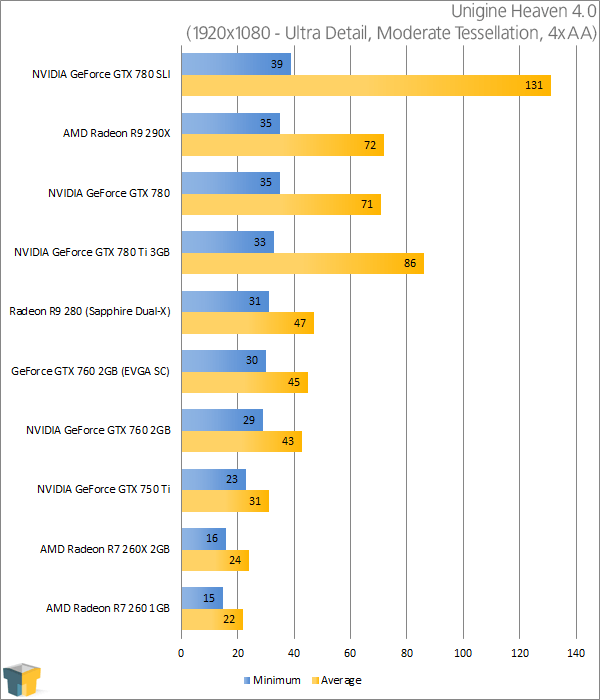

Unigine Heaven 4.0

Unigine might not have as established a name as Futuremark, but its products are nothing short of “awesome”. The company’s main focus is its game engine, but a by-product of that is its benchmarks, which both give benchmarkers another great tool to take advantage of and show off what its engine is capable of. It’s a win-win all-around.

The biggest reason that the company’s “Heaven” benchmark is so relied upon by benchmarkers is that both AMD and NVIDIA promote it for its heavy use of tessellation. Like 3DMark, the benchmark here is overkill by design, so results are not going to directly correlate with real gameplay. Rather, they showcase which cards can better handle DX11 and its GPU-bogging features.

Finishing up our synthetic tests, we see more of what to expect: AMD’s card coming out a few millimeters ahead of NVIDIA’s.
