Sapphire Radeon HD 5550 Ultimate

This past February, AMD quietly launched the Radeon HD 5550 alongside the much more touted HD 5570. At about $10 less than that card, the HD 5550 is an unusual breed. To help put all of the pieces together, Sapphire sent us its “Ultimate” edition of the card, which uses reference clock speeds, but features a very effective passive cooler.

Page 11 – Power & Temperatures

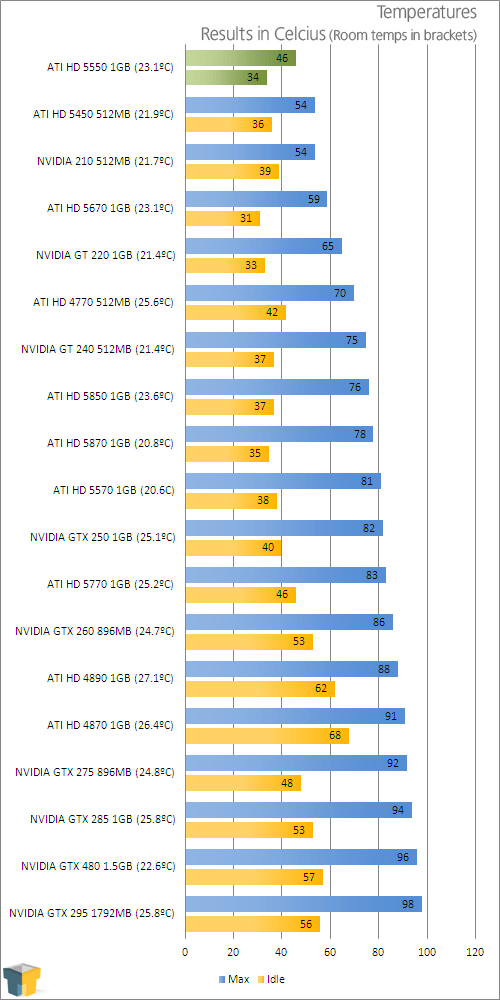

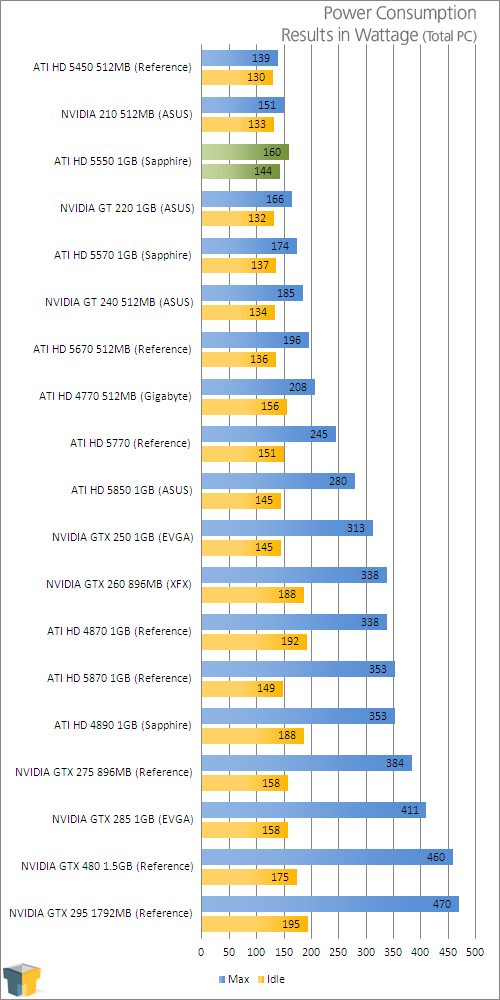

To test our graphics cards for both temperatures and power consumption, we use OCCT for stress-testing, GPU-Z for temperature monitoring, and a Kill-a-Watt for power monitoring. The Kill-a-Watt is plugged into its own socket, with only the PC connected to it.

As per our guidelines when benchmarking with Windows, when the room temperature is stable (and reasonable), the test machine is booted up and left to sit at the Windows desktop until things are completely idle. Once things are good to go, the idle wattage is noted, GPU-Z is started up to begin monitoring card temperatures, and OCCT is set up to begin stress-testing.

To push the cards we test to their absolute limit, we use OCCT in full-screen 2560×1600 mode, and allow it to run for 30 minutes, which includes a one-minute lull at the start and a three-minute lull at the end. After about 10 minutes, we begin to monitor our Kill-a-Watt to record the max wattage.
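For readers who want to repeat this procedure at home, here is a rough sketch of how the readings from such a run could be boiled down to a peak wattage and a peak temperature. It is not the tooling used for this review: the `Reading` record, the `summarize` function, and the idea of jotting readings down as (minute, watts, temperature) entries are all assumptions made for illustration, as is the choice to ignore readings taken during the two lulls.

```python
from dataclasses import dataclass

# Timings taken from the procedure described above.
RUN_MINUTES = 30          # total length of the OCCT run
START_LULL_MINUTES = 1    # lull at the start of the run
END_LULL_MINUTES = 3      # lull at the end of the run
WATCH_FROM_MINUTE = 10    # roughly when wattage monitoring begins


@dataclass
class Reading:
    """One hand-noted sample (hypothetical record layout)."""
    minute: float   # minutes since the run started
    watts: float    # whole-system draw read off the power meter
    temp_c: float   # GPU temperature as reported by the monitoring tool


def under_load(reading: Reading) -> bool:
    """True if the sample falls outside both lulls (an assumption of this sketch)."""
    return START_LULL_MINUTES <= reading.minute <= RUN_MINUTES - END_LULL_MINUTES


def summarize(readings: list[Reading]) -> dict:
    """Return peak wattage (from the 10-minute mark on) and peak load temperature."""
    load = [r for r in readings if under_load(r)]
    watched = [r for r in load if r.minute >= WATCH_FROM_MINUTE]
    return {
        "max_watts": max((r.watts for r in watched), default=None),
        "max_temp_c": max((r.temp_c for r in load), default=None),
    }


if __name__ == "__main__":
    # Illustrative numbers only, not measurements from this review.
    samples = [
        Reading(0.5, 90, 35),
        Reading(5, 130, 44),
        Reading(12, 128, 46),
        Reading(20, 131, 45),
        Reading(28.5, 95, 40),
    ]
    print(summarize(samples))
```

Filtering out the lulls keeps idle-like samples from muddying the load figures, and waiting until the 10-minute mark gives the card time to settle before the peak wattage is taken.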

As I mentioned in the intro, the cooler on this card works well, and that’s proven here. No matter how long I ran OCCT, the temperature would never go above 46°C. Even after our overclock, we only managed to get the card up to 48°C. This is definitely HTPC material!

I find the idle wattage on the HD 5550 to be a little higher than I would expect, given that it's more than the HD 5570's, but the card redeems itself at load, drawing 14W less than its bigger sibling.
