The Almost Titan: NVIDIA GeForce GTX 780 Review

When Titan was released in February, it seemed likely that NVIDIA's GeForce 700 series would be held off for some time. Well, with today's launch of the company's mini-Titan (ahem, the GTX 780), we've been proven wrong. Compared to the GTX 680, many of its specs are a full 50% higher, but is it worth its $649 price tag?

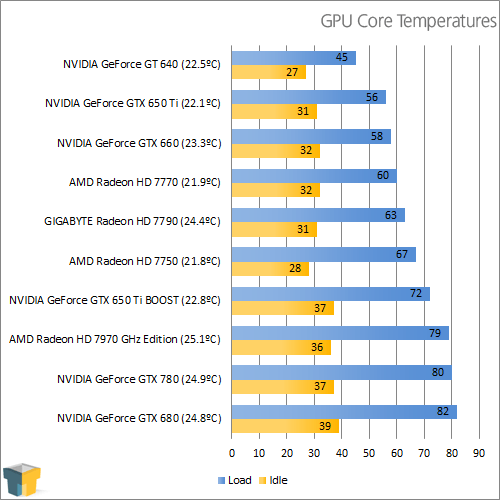

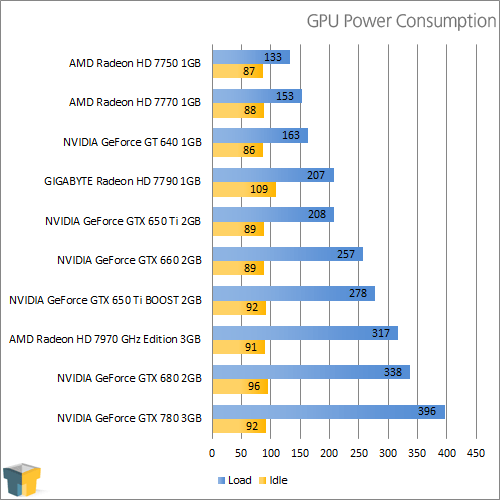

Page 8 – Temperatures & Power

To test graphics cards for both their power consumption and temperature at load, we utilize a couple of different tools. On the hardware side, we use a trusty Kill-a-Watt power monitor which our GPU testing machine plugs directly into. For software, we use Futuremark’s 3DMark 11 to stress-test the card, and techPowerUp’s GPU-Z to monitor and record the temperatures.

To test, the general area around the chassis is checked with a temperature gun, and the average temperature is recorded (and noted in brackets next to the card name in the first graph below). Once that's established, the PC is turned on and left to sit idle for five minutes. At this point, GPU-Z is opened along with 3DMark 11. We then kick off an Extreme run of 3DMark and immediately begin monitoring the Kill-a-Watt for the peak wattage reached. We only monitor the Kill-a-Watt during the first two tests, as we've found that's where the peak is always reached.
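The bookkeeping behind each result can be sketched as below, assuming the Kill-a-Watt readings and GPU-Z temperatures have been noted down as plain lists of numbers. The `LoadRun` class and `power_delta` function are hypothetical names for illustration, not the tooling actually used for this review; the sketch only shows how a peak value and the "card (ambient)" chart label fall out of one run.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class LoadRun:
    """One 3DMark 11 Extreme load run for a single card (hypothetical structure)."""
    card: str
    ambient_c: float            # temperature-gun reading around the chassis
    watt_samples: list[float]   # Kill-a-Watt readings noted during the first two tests
    temp_samples: list[float]   # GPU temperatures logged by GPU-Z during the run

    def peak_watts(self) -> float:
        # Only the highest wall reading is reported
        return max(self.watt_samples)

    def peak_temp(self) -> float:
        # Highest GPU temperature seen under load
        return max(self.temp_samples)

    def label(self) -> str:
        # Chart label style: card name followed by ambient temperature in brackets
        return f"{self.card} ({self.ambient_c:.1f}°C)"


def power_delta(baseline: LoadRun, other: LoadRun) -> float:
    """Difference in peak wall power between two cards (other minus baseline)."""
    return other.peak_watts() - baseline.peak_watts()
```

Because the Kill-a-Watt sits between the wall and the whole PC, these peaks are full-system figures; comparing two cards in the same machine is what isolates the GPU's share.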

Note: (xx.x°C) refers to ambient temperature in our charts.

NVIDIA's GTX 780, as mentioned in the intro, has a higher TDP than the GTX 680 (250W vs. 195W), an increase of 55W. Our testing reflected that almost perfectly: we saw +58W. It's rare for results to line up that closely with the TDP; there's definitely some truth to NVIDIA's marketing here.
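Worked out, the comparison looks like this. Keep in mind that the measured figure is taken at the wall for the whole system, so it also carries power supply losses; a small overshoot relative to the TDP gap is therefore expected.

$$
\Delta P_{\text{TDP}} = 250\,\text{W} - 195\,\text{W} = 55\,\text{W}, \qquad \Delta P_{\text{measured}} = 58\,\text{W}
$$

$$
\frac{\Delta P_{\text{measured}} - \Delta P_{\text{TDP}}}{\Delta P_{\text{TDP}}} = \frac{58 - 55}{55} \approx 5.5\%
$$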

AMD's HD 7970 GHz beats both the GTX 680 and GTX 780 in power draw and temperatures. AMD's cooler isn't nearly as cool-looking (in my opinion) as the one found on the GTX 780, but it does seem to be efficient.

With that, it’s onward to the final thoughts.
