The Future is Parallel, But that Future is a Long Way Off

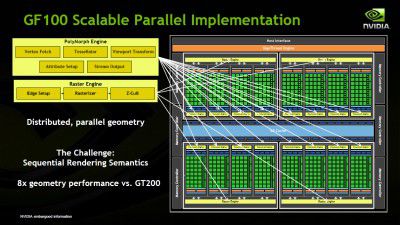

During an interview with Forbes last week, NVIDIA’s Chief Scientist, Bill Dally, stated that Moore’s Law’s time is running out, and now is a good time to start thinking in parallel. Such a statement is bold, but not surprising coming from NVIDIA: parallel processing is where its hardware excels, which the CUDA-based apps from the company and other developers demonstrate.

But as Ars Technica’s Jon Stokes points out, there’s a lot more to writing code that takes advantage of parallel processing than just having the right tools. Tools are one thing, but thinking is something else, and as it stands, programming for parallel workloads is difficult. It’s the exact reason that we’ve had multi-core processors for over five years now but are still lacking many truly multi-threaded applications and workloads.

The situation does continue to get better over time, but most processes on our PCs are designed for serial operation, and that looks set to remain the case for quite some time. As the Ars Technica article points out, even students diving into parallel programming get frustrated, and many drop out. Writing in parallel requires looking at the big picture and thinking in parallel… that’s tough.

Over the years, there have been many rumors and false hopes that a be-all and end-all compiler or workload translator could come along and automatically convert serial processes into parallel ones, but we’ve yet to see it. I don’t think such a thing is impossible, but as it stands, it’s likely a very long way off. By the time it could come into existence, we may well have this parallel thing down pat. At least we can hope.

As for NVIDIA, it’s easy to understand the company’s viewpoint, and even agree with it, but it will take a lot more than just words to overcome the challenge of moving into a truly parallel computing world.

Ultimately, NVIDIA’s fundamental problem boils down to this simple fact: you can do parallel tasks in a serial manner, but you can’t do serial tasks in a parallel manner. What this means for computing’s history up until now is that everyone started out making serial hardware, with the result that parallel tasks have tended to be done in serial because that’s the hardware that was available. Some percentage of those tasks can be rethought to work in parallel, but, as I said above, so far this percentage has been disappointingly low.
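To make the serial-versus-parallel distinction a little more concrete, here is a minimal Python sketch. It is purely illustrative and doesn't come from the interview or the Ars Technica piece. The function names and the choice of workloads (squaring a list, iterating the logistic map) are my own assumptions. The first task has no dependencies between elements, so it can be run either serially or across workers. The second needs each result before it can compute the next, so as written it can only run in order.

```python
from concurrent.futures import ThreadPoolExecutor


def square(x):
    # Each call depends only on its own input, so calls can run in any order.
    return x * x


def squares_serial(values):
    # A parallel-friendly task done in a serial manner: always possible.
    return [square(v) for v in values]


def squares_parallel(values, workers=4):
    # The same task spread across worker threads; pool.map keeps input order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(square, values))


def logistic_orbit(x0, steps, r=3.7):
    # Hypothetical example of a serial task: every step needs the previous
    # step's result (a loop-carried dependency), so this loop cannot simply
    # be split across workers as written.
    x = x0
    for _ in range(steps):
        x = r * x * (1.0 - x)
    return x
```

Threads are used here only to show the structure of the two kinds of work. In standard CPython they won't speed up a CPU-bound task like this one, and getting real gains means reaching for processes, native code, or a GPU, which is where the hard thinking begins.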