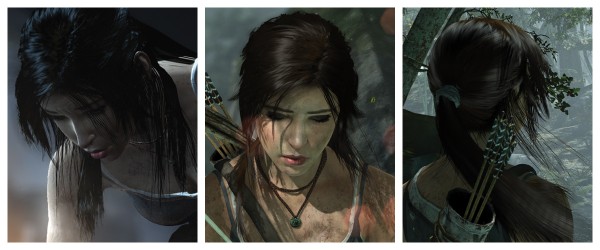

TressFX – For Salon-Beautiful Hair in Your Virtual Home

Have you ever wanted silky-smooth, volumized, independently rendered hair? Now you can have it, with Per-Pixel Linked-List Order-Independent Transparency DirectCompute Real-Time Physics-Simulated TressFX Hair, all without paying salon prices.

AMD and Crystal Dynamics have formulated a special blend of Silica and C-derived syntax from the Direct family to create a nourishing and invigorating extension that brings life back to poorly rendered, static hair. With careful application, hair can be instantly volumized.

Single-strand, textural, and polygonal enhancements allow for contextual dynamic animation over a broad range of environmental conditions.

Fortified with collision detection algorithms to prevent tangling and knotting, TressFX lets divas flick their hair with ferocity and confidence. Rain, sleet, or snow will only enhance the hair and add a pure, glossy sheen that even a salon professional would be proud of.

Liberal use of the Graphics Core Next architecture, available in select Radeon HD 7000 series GPUs, enables lifelike rendering. Virtual conditioners and shared memory management process and nourish hair from root to tip. To enhance your Tomb Raider experience, order TressFX now. Available while supplies last.

For more information about TressFX, please check out AMD’s detailed explanation of the science behind luxurious hair.