A Linux For Speed Hounds: A Look At Clear Linux Performance

We’ve heard lots about Clear Linux being a super-fast OS, so we’ve decided to put the claim to the test. With the help of our Core i9-9980XE test platform, we’re taking a look at performance in Intel’s Clear Linux, alongside five other distros, including the new Fedora 31 beta and Ubuntu 19.10. Even with the hardware remaining identical from distro to distro, some of the results are bound to surprise you.

At only a few years old, Clear Linux hasn't been around long enough to start appearing on top-ten distro lists, but it's an OS that offers enough uniqueness to warrant a fair look. At a basic level, it's a distribution focused largely on security and performance, and many of its design decisions follow from those two goals.

A notable Clear Linux fact is that it's an Intel creation, originally born in the company's open-source lab. Naturally, it's optimized for Intel's own processors, but many of the performance benefits Clear Linux brings to Intel hardware could apply to competing hardware as well. Clear Linux isn't just about Intel shoving its optimizations into Linux. It's about optimizing the entire Linux OS.

This article started out as a basic distro performance comparison, but we stopped adding distros once doing so became too much of a time sink. Among the distros we did test, Clear Linux separated itself from the crowd in multiple cases, and since we've been wanting to explore Intel's take on Linux for a while, this seemed like the perfect time to finally do that.

To be “clear”, this is not a review, but merely a performance look at an out-of-the-box Clear Linux (and others). At some point, we may extend testing to Clear Linux on AMD hardware, and add more distros for comparison's sake. For now, this is just a start. If you have particular performance interests, please feel free to leave a comment.

For this article, Clear Linux was tested in addition to Ubuntu 18.04.3 LTS, 19.04, and the just-released 19.10. For good measure, Fedora 31 beta and Linux Mint 19.2 have also been tested. Intel’s 18-core Core i9-9980XE CPU was used along with 32GB of DDR4-3200 memory. We plan to expand our Linux testing to the GPU in time, but for now, we’re sticking to only the CPU.

We ran close to fifty benchmarks across our six tested distros, but only a fraction of them are included here. Because we're testing different distros on the same PC, the majority of tests show no meaningful variance. We include a few results to highlight that lack of movement, but it's important to note that even though most of the tests on this page show noticeable differences between distros, most workloads will perform similarly on each one. We wanted to focus on the standout examples.
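One way to decide whether a result shows "meaningful variance" is to compare the spread across distros against the run-to-run noise you would expect from a single distro. The sketch below is our own illustration, not part of the Phoronix Test Suite; the 2% noise floor and the distro timings are hypothetical placeholders.

```python
def spread_pct(results: dict[str, float]) -> float:
    """Gap between the highest and lowest result, as a percentage of the lowest.

    Only the size of the gap matters here, so this works for both
    lower-is-better (seconds) and higher-is-better (fps, scores) metrics.
    """
    low = min(results.values())
    high = max(results.values())
    return (high - low) / low * 100.0


def is_meaningful(results: dict[str, float], noise_pct: float = 2.0) -> bool:
    """True when the cross-distro spread exceeds the assumed run-to-run noise."""
    return spread_pct(results) > noise_pct


if __name__ == "__main__":
    # Hypothetical render times in seconds; placeholders, not our measured data.
    times = {"Distro A": 100.0, "Distro B": 101.0, "Distro C": 100.5}
    print(f"spread {spread_pct(times):.1f}%, meaningful: {is_meaningful(times)}")
```

With those placeholder numbers the spread is 1.0%, under the assumed 2% noise floor, so that test would land in the "no meaningful variance" pile.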

Here’s the tested hardware and distro / kernel versions:

| Intel LGA2066 Test Platform | |
|---|---|
| Processor | Intel Core i9-9980XE (3.0GHz, 18C/36T) |
| Motherboard | ASUS ROG STRIX X299-E GAMING (BIOS 2002, October 4, 2019) |
| Memory | G.SKILL Flare X (F4-3200C14-8GFX) 8GB x 4, operating at DDR4-3200 14-14-14 (1.35V) |
| Graphics | NVIDIA TITAN RTX (24GB) |
| Storage | Kingston KC1000 960GB M.2 SSD (NVMe PCIe 3.0 x4) |
| Power Supply | Corsair Professional Series Gold AX1200 (1200W) |
| Chassis | Corsair Carbide 600C |
| Cooling | NZXT Kraken X62 AIO (280mm) |
| Distros (Kernel) | Clear Linux 31230 (5.3.6-849.native); Fedora 31 Beta (5.3.6-300.fc31); Linux Mint 19.2 (4.15.0-54-generic); Ubuntu 18.04.3 LTS (5.0.0-31-generic); Ubuntu 19.04 (5.0.0-32-generic); Ubuntu 19.10 (5.3.0-18-generic) |

Our Linux configurations are simple and close to “out-of-the-box”. Prior to any testing, the OS is fully updated and all software needed for testing is installed. Sleep is disabled, and the CPU frequency scaling governor is set to performance with this command (run as root):

```
echo performance | tee /sys/devices/system/cpu/cpu*/cpufreq/scaling_governor
```
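Since the command writes to every logical CPU at once (36 on our 18-core chip), it can be worth confirming afterward that none were missed. A minimal Python sketch, assuming the standard cpufreq sysfs layout shown in the command; `read_governors` and `misconfigured` are hypothetical helper names of our own, not part of PTS:

```python
import glob
import os


def read_governors(root: str = "/sys/devices/system/cpu") -> dict[str, str]:
    """Map each logical CPU (cpu0, cpu1, ...) to its current cpufreq scaling governor."""
    governors = {}
    # cpu[0-9]* skips sibling entries such as cpufreq/ and cpuidle/.
    pattern = os.path.join(root, "cpu[0-9]*", "cpufreq", "scaling_governor")
    for path in sorted(glob.glob(pattern)):
        cpu = os.path.basename(os.path.dirname(os.path.dirname(path)))
        with open(path) as f:
            governors[cpu] = f.read().strip()
    return governors


def misconfigured(governors: dict[str, str], wanted: str = "performance") -> list[str]:
    """Return the CPUs whose governor differs from the one we asked for."""
    return sorted(cpu for cpu, gov in governors.items() if gov != wanted)


if __name__ == "__main__":
    govs = read_governors()
    bad = misconfigured(govs)
    print(f"{len(govs)} CPUs checked; not on 'performance': {bad or 'none'}")
```

On a machine or VM without cpufreq support the sysfs files are absent, so the sketch simply reports zero CPUs checked rather than failing.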

A lot of our testing is done with the help of the open-source Phoronix Test Suite (PTS), and compared to our most recent Linux testing, we've augmented the suite with quite a few additional tests. Some of those don't appear here because their results didn't vary between distros at all, but they will certainly be included in content covering some upcoming CPU launches.

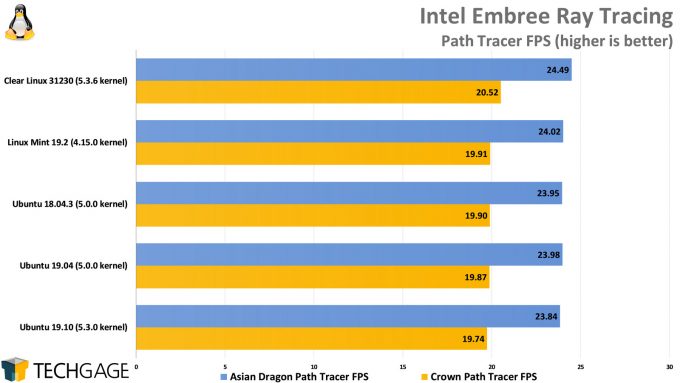

Notable additions featured in this article include GCC compiling, V-Ray Next, Intel Embree, IndigoBench, Intel SVT, and the return of single-threaded tests SciMark and HMMer.

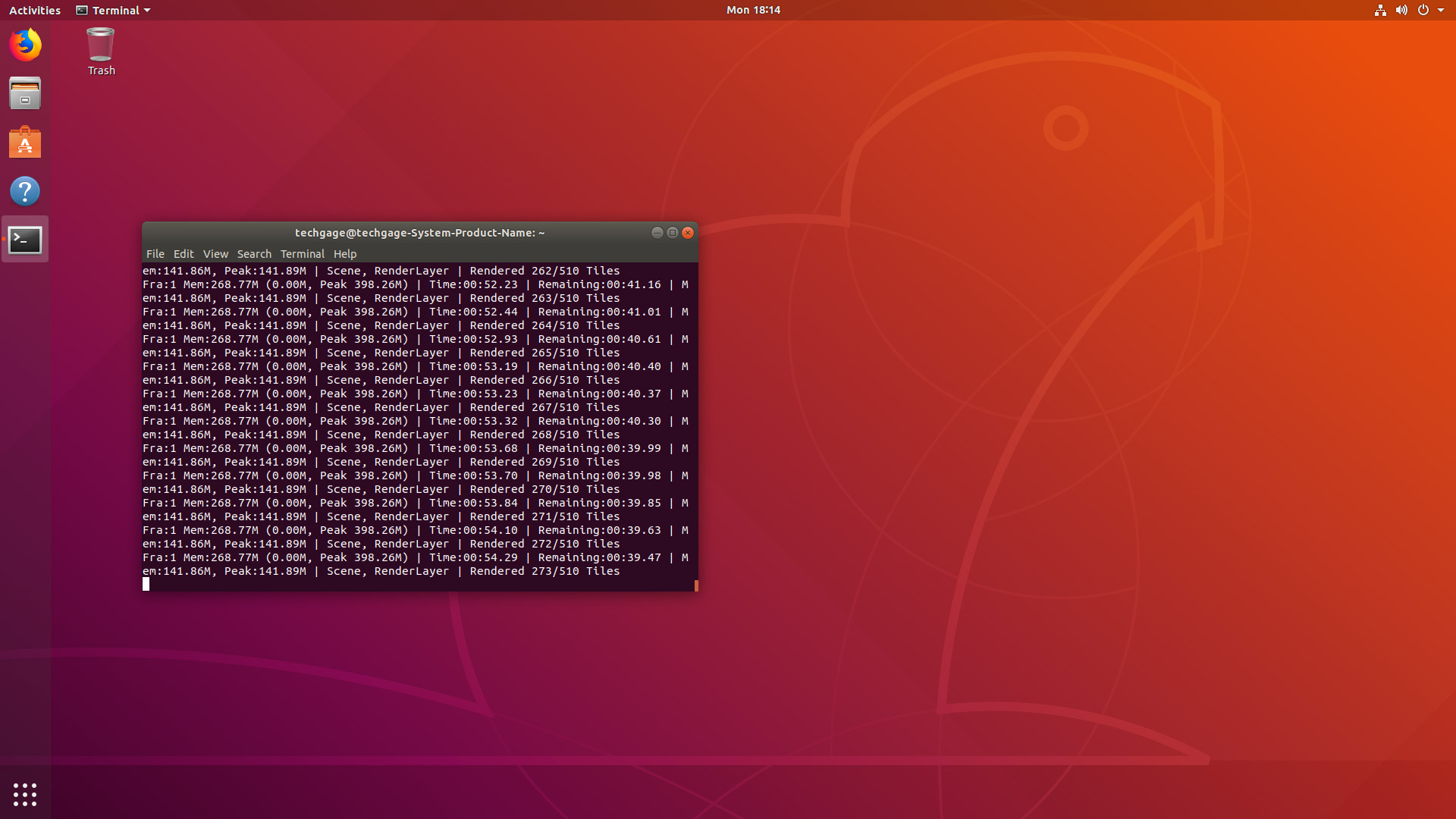

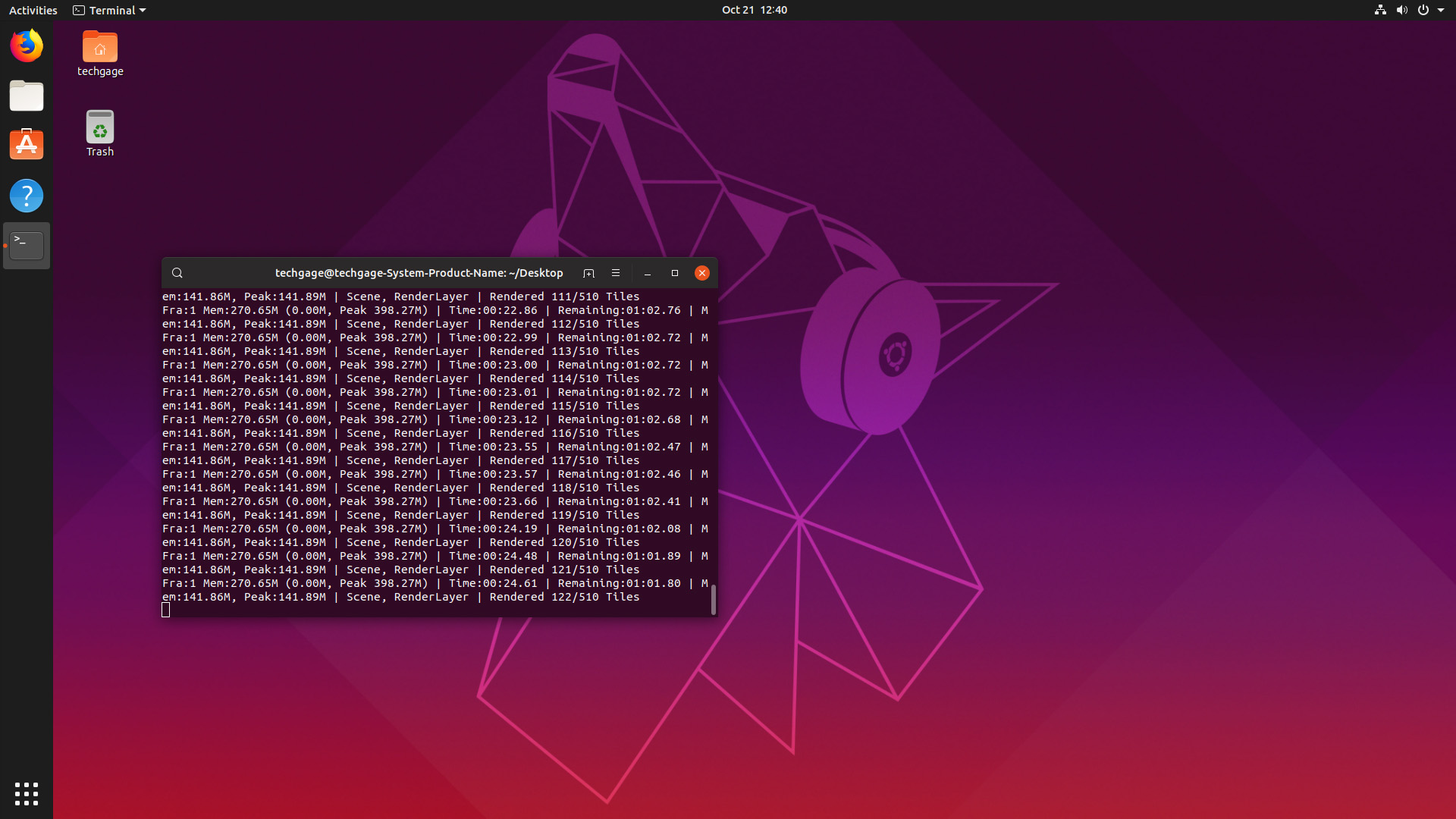

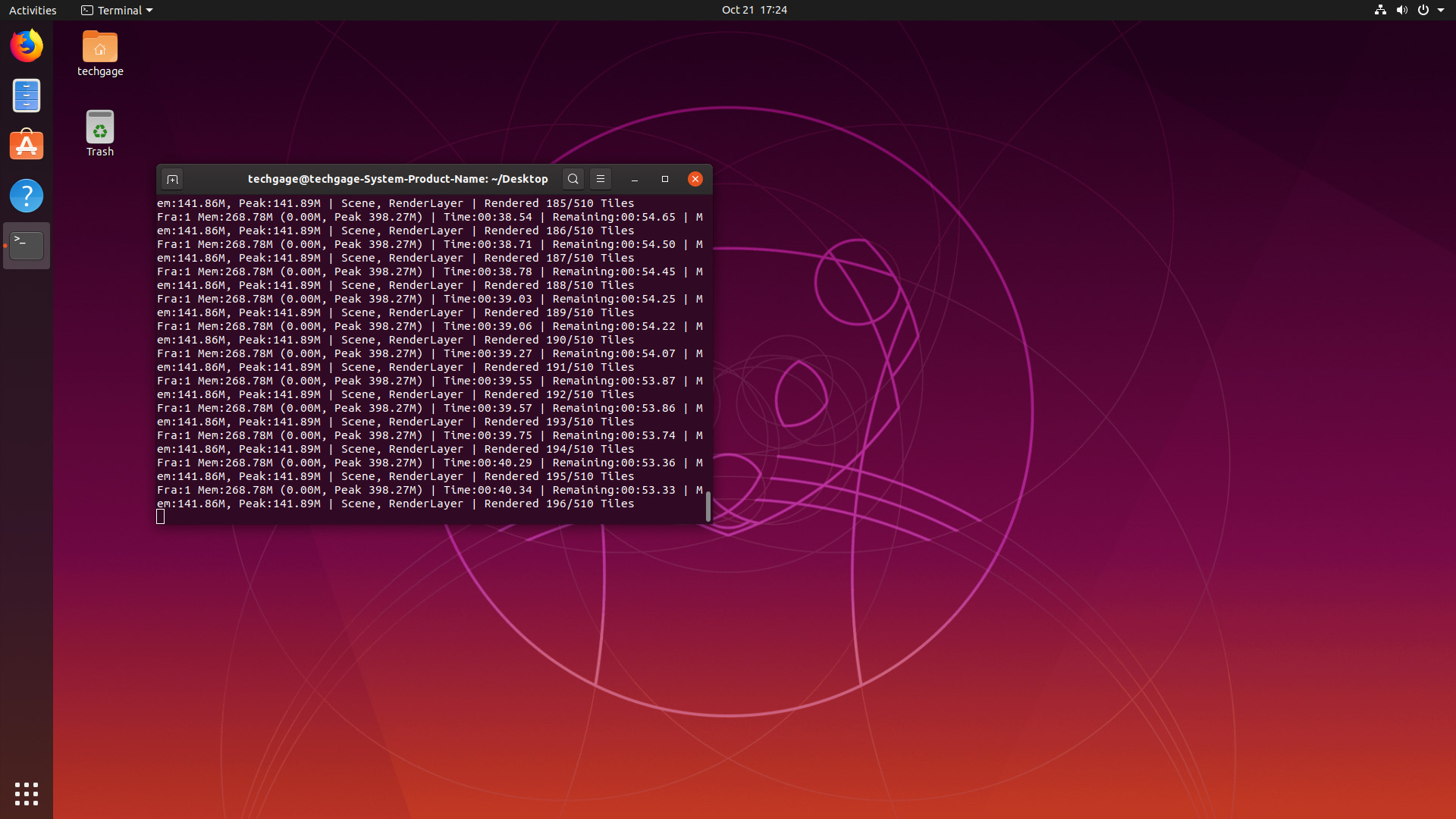

With PTS, benchmarks are run three times by default, and then averaged. We run the entire suite twice over, which means every test is run at least six times in total for its average. Our Blender testing runs each render three times, while our V-Ray Next results are made up of four runs. We wanted to include HandBrake testing here, but the 1.2.2 Flatpak required for some distros actually installs version 1.2.1, whose results are not at all comparable to 1.2.2's (despite the minor version bump).
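To make the averaging concrete: two suite passes of three runs each give six samples per test. The sketch below pools the raw runs, which yields the same mean as averaging the two pass averages when each pass has three runs, and also produces a coefficient of variation that can flag a noisy result for a second look. It illustrates the arithmetic only; it is not PTS's internal code, and `combine_passes` is our own name.

```python
from statistics import mean, stdev


def combine_passes(passes: list[list[float]]) -> tuple[float, float, int]:
    """Pool every run from every suite pass; return (mean, CV in percent, sample count)."""
    samples = [value for run in passes for value in run]
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate variation")
    avg = mean(samples)
    cv = stdev(samples) / avg * 100.0  # sample standard deviation relative to the mean
    return avg, cv, len(samples)


if __name__ == "__main__":
    # Hypothetical timings: two full suite passes, three PTS runs each.
    avg, cv, n = combine_passes([[41.9, 42.3, 42.1], [42.0, 42.4, 41.8]])
    print(f"mean {avg:.2f} s over {n} runs (CV {cv:.2f}%)")
```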

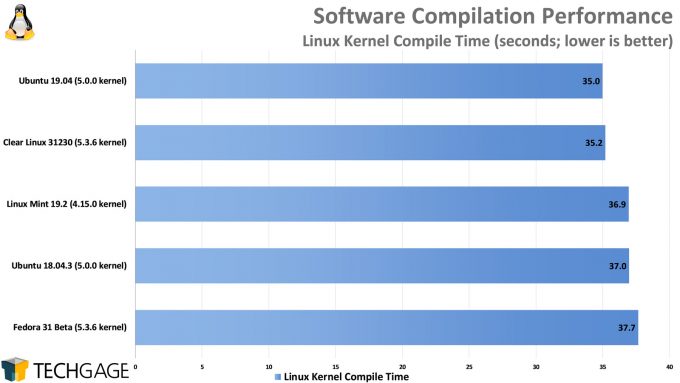

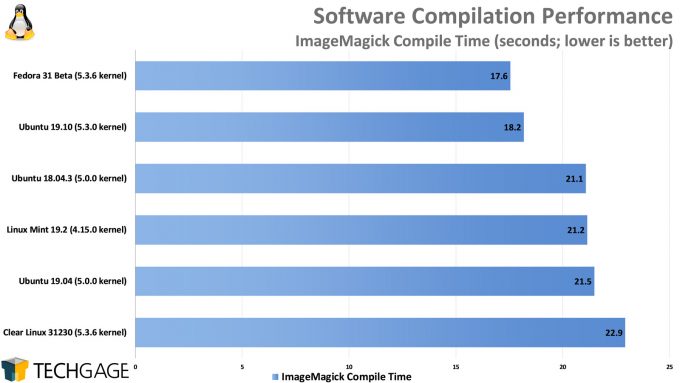

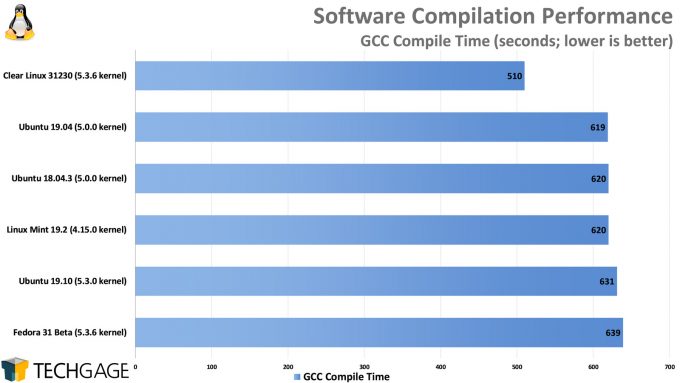

Compile

The GCC compile is new to our suite, and it's the only compile test here where Clear Linux shows any sort of improvement. That improvement is notable, though: it shaved 100 seconds off the compile compared to the other distros. It's hard to call Clear Linux a runaway winner, since it didn't dominate the other compile tests the same way; in fact, it lagged behind in the ImageMagick compile. Not all compile workloads are built alike, that's for sure.
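A fixed number of seconds saved only becomes comparable across tests once it's expressed relative to the baseline, and the direction flips between time-based results (lower is better) and throughput or score results (higher is better). A small helper of our own, with hypothetical baselines since the raw times live in the charts:

```python
def percent_better(baseline: float, candidate: float, lower_is_better: bool = True) -> float:
    """How much better `candidate` is than `baseline`, as a percentage of the baseline.

    Compile and render times are lower-is-better; encode fps and benchmark
    scores are higher-is-better, so the sign of the difference flips.
    """
    if lower_is_better:
        return (baseline - candidate) / baseline * 100.0
    return (candidate - baseline) / baseline * 100.0


if __name__ == "__main__":
    # Hypothetical baselines: the same 100 s saving matters more on a shorter job.
    for base in (600.0, 1200.0):
        print(f"{base:.0f} s -> {base - 100:.0f} s: {percent_better(base, base - 100):.1f}% faster")
```

A negative return value means the candidate was worse than the baseline.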

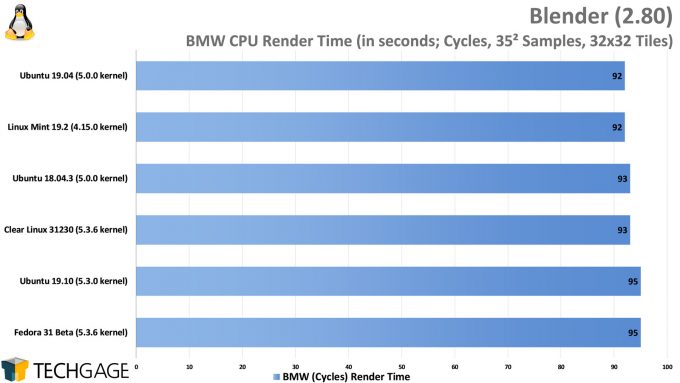

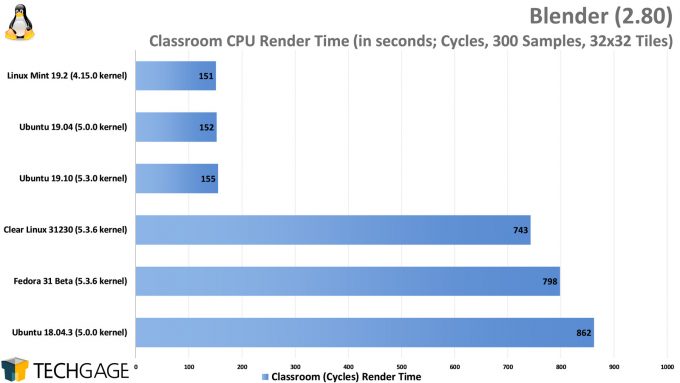

Rendering

With Blender, we run into our first oddity in testing. With the BMW project, all of the distros performed roughly the same, although the older distros surprisingly placed ahead of Ubuntu 19.10 and Fedora 31. With the far more complex Classroom project, certain distros get hung up on some stages of the render and take far longer than they should.

It’s important to note this bizarre performance behavior is repeatable, and reflects using Blender 2.80 straight from the official website, and the same projects we use for our regular Blender testing in Windows. The Classroom project has given us some undesirable variance in the past in our Windows testing, but nothing to this extent, and it was never definitively repeatable like this. We’re not really sure what to take away from this, but we feel confident enough to say that this Classroom project is probably an anomaly in this regard.
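Because the Classroom slowdown repeats on the same distros, a simple rule can separate it from ordinary noise: flag a distro only when every one of its runs lands well above the median of all runs. The 1.25x factor, the helper name `flag_slow`, and the sample numbers are assumptions chosen for illustration.

```python
from statistics import median


def flag_slow(results: dict[str, list[float]], factor: float = 1.25) -> dict[str, float]:
    """Return distros whose *every* run exceeds `factor` x the median of all runs.

    Requiring all runs to be slow separates a repeatable slowdown from a
    single noisy render. Values are the fastest run divided by the median.
    """
    overall = median(t for runs in results.values() for t in runs)
    limit = overall * factor
    return {
        distro: min(runs) / overall
        for distro, runs in results.items()
        if runs and min(runs) > limit
    }


if __name__ == "__main__":
    # Hypothetical render times in seconds (three runs each), not measured data.
    runs = {
        "Distro A": [300.0, 302.0, 301.0],
        "Distro B": [305.0, 299.0, 303.0],
        "Distro C": [420.0, 418.0, 425.0],
    }
    print(flag_slow(runs))
```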

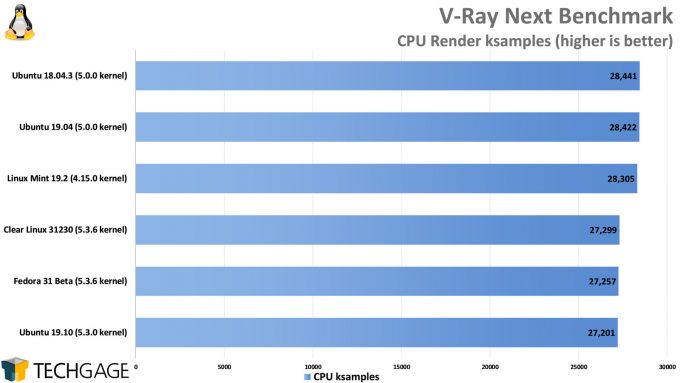

With Chaos Group’s V-Ray Next benchmark for Linux, we’re seeing another odd case where the most up-to-date distros are not performing as well as the older ones. The overall differences are minor in the grand scheme, but they’re worth noting, nonetheless.

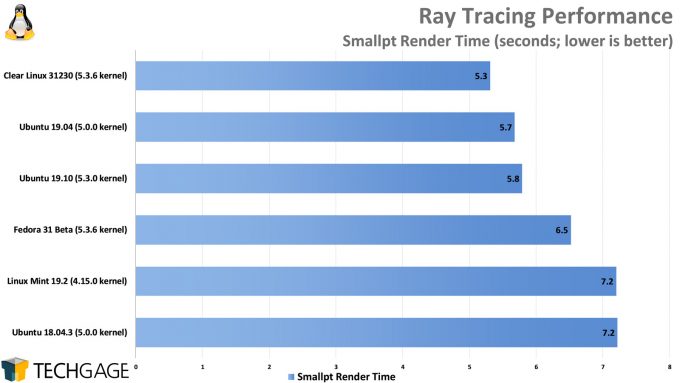

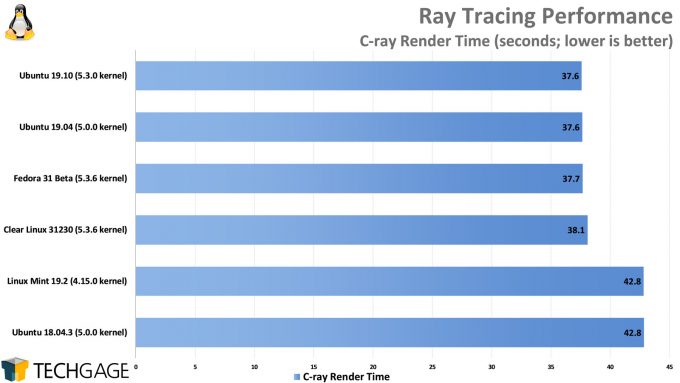

Clear Linux doesn't seem to have a commanding lead in rendering overall, but it definitely doesn't fall to the back of the pack. It takes the cake in the Smallpt test, while in C-Ray it sticks with most of the other distros, hovering around the 38-second mark. Not so strangely, the oldest kernels perform the weakest here.

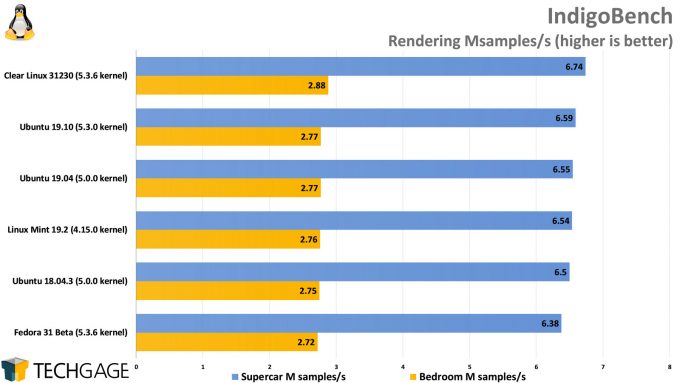

On an ordinary day, we wouldn’t find this IndigoBench performance all too interesting, but because the entire goal of this article is to see what Clear Linux can eke out of our hardware, the fact that it manages to place ahead of all the others in both rendering tests is impressive.

To wrap up our rendering tests, we introduce Intel Embree into the mix. It's not unusual for an Intel software library to run best on an Intel Linux distribution, but it's interesting that Clear Linux managed to pull it off here, as well as in Indigo Renderer (which, for the record, doesn't use Embree). The advantages are not extreme, but the fact that we're seeing notable gains at all on the same hardware is what's important.

Encode

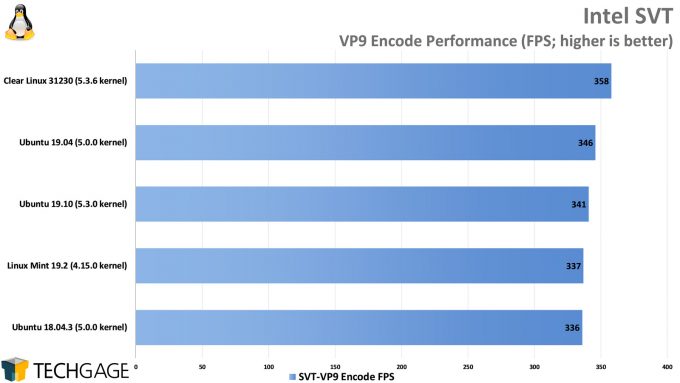

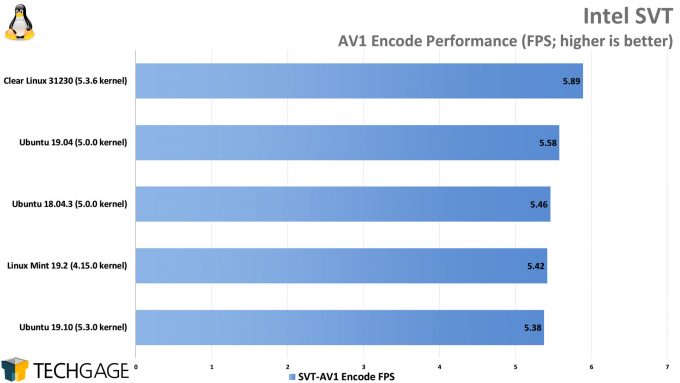

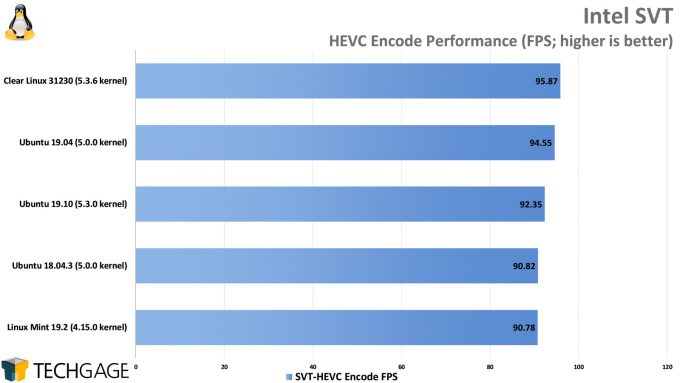

As mentioned before, we had planned to include HandBrake testing here, but half of our distros were inadvertently tested with version 1.2.1 even though the 1.2.2 Flatpak was used for installation. Fortunately, we had Intel SVT tests to act as a suitable backup, and they bode well once again for Clear Linux, which tops the charts for all three codecs.
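A version check before each run would have caught the HandBrake mismatch immediately. A minimal guard, assuming you can capture the version text the installed build reports; how you obtain that string depends on the packaging, so the sketch takes it as input, and `parse_version`/`require_version` are our own hypothetical helpers.

```python
import re


def parse_version(text: str) -> tuple[int, ...]:
    """Extract the first dotted version number (e.g. '1.2.1') from a tool's output."""
    match = re.search(r"\d+(?:\.\d+)+", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def require_version(reported: str, expected: str) -> None:
    """Stop before benchmarking if the installed build is not the one we meant to test."""
    if parse_version(reported) != parse_version(expected):
        raise RuntimeError(f"wanted {expected}, but the installed build reports: {reported.strip()}")


if __name__ == "__main__":
    require_version("HandBrake 1.2.2", "1.2.2")  # passes silently
    try:
        require_version("HandBrake 1.2.1", "1.2.2")
    except RuntimeError as err:
        print(f"refusing to benchmark: {err}")
```

Comparing parsed tuples rather than raw strings keeps "1.2.10" from sorting or matching incorrectly against "1.2.1".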

While these results might not seem too different overall, streaming companies will be keenly interested in knowing which configuration churns through encodes the quickest. If a machine has a single purpose, then it makes all the sense in the world to go with the software that's going to deliver the best overall performance (and efficiency). “Time is money.”
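To put "time is money" into numbers: encode time is simply frames divided by frames per second, so even a small fps edge compounds over a large backlog. A sketch with a hypothetical workload and hypothetical fps figures (not our SVT results); `hours_saved` is our own helper name.

```python
def hours_saved(frames: int, fps_baseline: float, fps_candidate: float) -> float:
    """Wall-clock hours saved encoding `frames` frames at the candidate's rate."""
    return (frames / fps_baseline - frames / fps_candidate) / 3600.0


if __name__ == "__main__":
    # Hypothetical backlog: 1,000 hours of 30 fps footage, encoded at 100 vs 105 fps.
    frames = 1000 * 3600 * 30
    print(f"{hours_saved(frames, 100.0, 105.0):.1f} hours saved")
```

On that hypothetical backlog, a 5% fps advantage works out to roughly 14 hours of machine time per encoding box.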

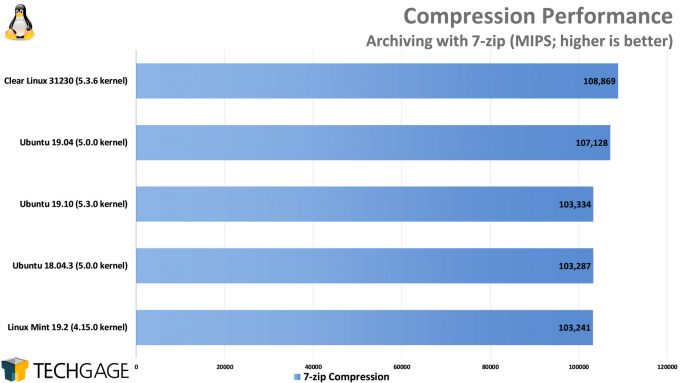

Compression

With our compression test, we’re not seeing major gains with Clear Linux, but that’s only when it’s compared to Ubuntu 19.04 and 19.10. Fedora unfortunately errored out on this test, so we’re not sure where it’d slide in. This is another interesting case where an older Ubuntu will crunch a test quicker than a newer one. This certainly isn’t anything an end-user should ever fuss over, but it’s fun to highlight.

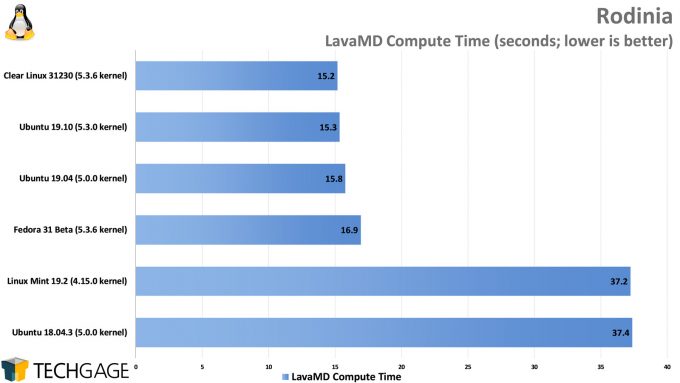

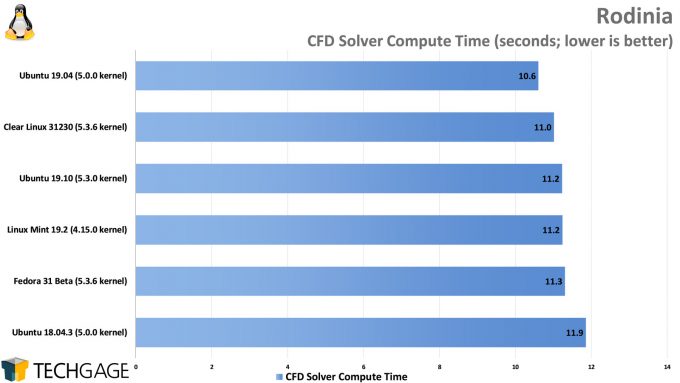

Scientific

As we saw with a couple of the ray tracing tests earlier, the older distros in this list didn't fare too well with Rodinia's LavaMD test. We're not sure what configuration change caused such a dramatic bump over time, but the takeaway is that unless you have a good reason not to update, you should, if only to avoid performance niggles like these. The CFD Solver test fared a lot better overall, with no distro really dominating.

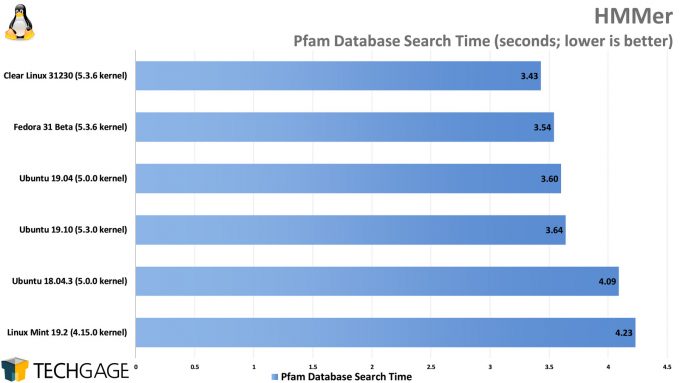

Single-threaded

HMMer returns to our suite after we absent-mindedly removed it, only to realize we were left with no (largely) single-threaded tests. We didn't expect to see such large differences here, but this is another test that works to Clear Linux's advantage. And, yet again, we see the caveats of sticking with an older Linux in the Ubuntu 18.04 and Mint 19.2 results.

And now, for some truly standout results:

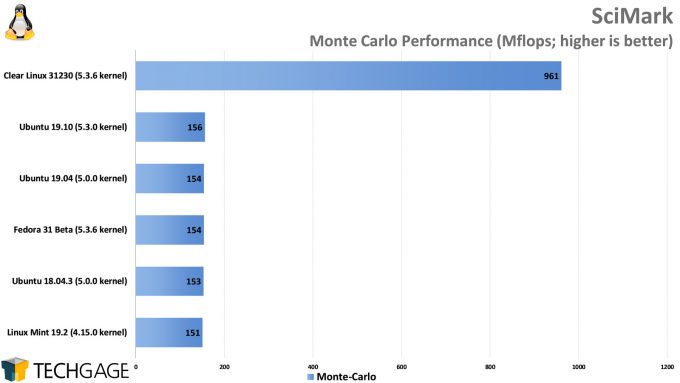

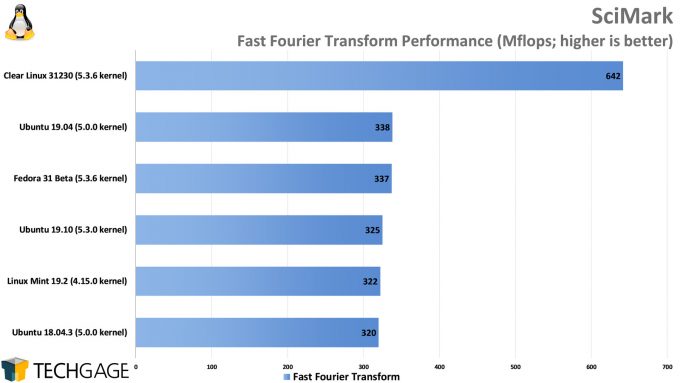

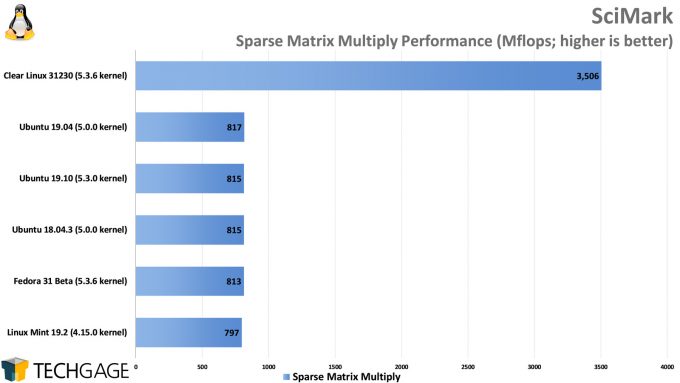

SciMark was dropped from our suite a while ago due to issues that arose, but we wanted to give it another go to reintroduce it alongside HMMer. Well, we sure didn’t expect the kinds of results we saw. In fact, we thought something was outright broken, but sure enough, Clear Linux dominates these SciMark results in a huge way.

What's interesting about these results is that SciMark is a completely single-threaded benchmark, so the gains can't come from how the OS spreads work across cores; they boil down entirely to compiler optimizations in the OS. Clear Linux does something that SciMark likes, but this is definitely an extreme case of (built-in) optimization. What it does highlight is that configuration changes can make an enormous impact on performance in some cases, so it pays to know your workload inside and out if performance truly matters.
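Since a single-threaded result like this hinges on how the benchmark binary was compiled, one quick thing to compare across distros is the default compiler flags exported in the shell environment, which many from-source build scripts pick up. Whether a given distro exports such defaults, and whether a given PTS test profile honors them, are assumptions to verify on your own systems; this sketch only reports what is set. The flag list and the `optimization_flags` name are illustrative.

```python
import os
import shlex
from collections.abc import Mapping
from typing import Optional

# Flag prefixes that commonly change generated code; illustrative, not exhaustive.
OPTIMIZATION_PREFIXES = ("-O", "-march", "-mtune", "-flto", "-ffast-math")


def optimization_flags(env: Optional[Mapping[str, str]] = None) -> dict[str, list[str]]:
    """List the optimization-related flags set in the usual compiler variables.

    Defaults to the current process environment (os.environ).
    """
    env = os.environ if env is None else env
    found = {}
    for var in ("CFLAGS", "CXXFLAGS", "FFLAGS", "LDFLAGS"):
        tokens = shlex.split(env.get(var, ""))
        found[var] = [tok for tok in tokens if tok.startswith(OPTIMIZATION_PREFIXES)]
    return found


if __name__ == "__main__":
    # Run this in a login shell on each distro and compare the output side by side.
    for var, flags in optimization_flags().items():
        print(f"{var}: {' '.join(flags) or '(none)'}")
```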

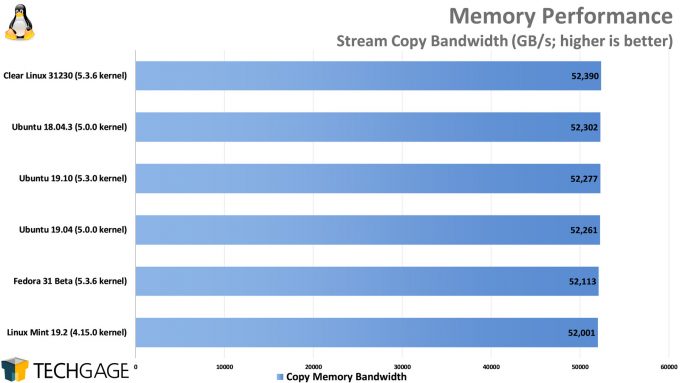

Memory

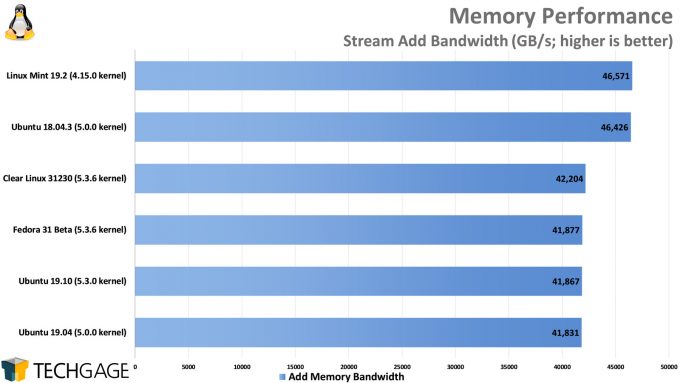

We're wrapping up our performance testing with memory bandwidth, as it's one of the simplest tests to run, and the results are important: if bandwidth falls short, the rig is not working at peak efficiency. For some reason, the older distros have an edge in the Add test, but all six of the distros pretty much align in the Copy test. Ultimately, there's nothing too interesting to see here, which is a good thing.
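Copy and Add do different amounts of memory traffic per element, which is why each is reported as bandwidth rather than raw time. Assuming STREAM-style kernels and byte accounting (the text doesn't name the exact benchmark, and other suites that use the same Copy/Add names may count bytes differently), the arithmetic looks like this; `bandwidth_gbs` is our own helper name.

```python
# Bytes counted per array element for STREAM's four kernels (8-byte doubles).
# STREAM does not count the extra read that a write-allocate cache performs.
#   Copy : c[i] = a[i]              -> 2 arrays touched
#   Scale: b[i] = q * c[i]          -> 2 arrays touched
#   Add  : c[i] = a[i] + b[i]       -> 3 arrays touched
#   Triad: a[i] = b[i] + q * c[i]   -> 3 arrays touched
ARRAYS_TOUCHED = {"copy": 2, "scale": 2, "add": 3, "triad": 3}
BYTES_PER_ELEMENT = 8


def bandwidth_gbs(kernel: str, n_elements: int, seconds: float) -> float:
    """Bandwidth in GB/s (10**9 bytes per second) for one timed pass of a kernel."""
    bytes_moved = ARRAYS_TOUCHED[kernel] * BYTES_PER_ELEMENT * n_elements
    return bytes_moved / seconds / 1e9


if __name__ == "__main__":
    # Hypothetical: 10 million elements per array, each kernel timed at 10 ms.
    for kernel in ("copy", "add"):
        print(f"{kernel}: {bandwidth_gbs(kernel, 10_000_000, 0.010):.1f} GB/s")
```

At equal elapsed time, Add is credited with 1.5x the bandwidth of Copy (24 versus 16 GB/s in this hypothetical), because it moves three arrays instead of two.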

Final Thoughts

As this is not a review of Clear Linux, there is not much we can say outside of what our performance results told us, and overall, we were pretty impressed with what we saw. We originally felt like we might complete testing and see very few interesting results, but we were pleasantly surprised to see just how big of a difference various distro configurations can make to a workload.

Clear Linux isn’t quite ready for mainstream audiences, and it may not ever plan to target them. In our usage of the OS so far, it’s clear to us that it’s an OS better-suited for those who already know a bit about Linux, and are not afraid to learn about slightly different ways of doing things.

Because Clear Linux requires Secure Boot to run, we originally ran into an issue where the OS would simply error on boot, and to this day, we're not sure what exactly caused it. However, the problem went away completely after we reflashed the PC's EFI, so if you run into a similar issue, that could be your solution. We also experienced graphics-related issues at the desktop with the Nouveau driver on our TITAN RTX, but that's become par for the course in our testing. We had a similar issue with Fedora 31 and Wayland, and both issues can be fixed by installing the proprietary NVIDIA driver.

If you're happy with your distro of choice already, Clear Linux is still worth checking out in case it grabs you and inspires you to use it for a server or secondary desktop box. We feel the OS has a somewhat steeper learning curve than most, but the end result is attractive. While we encountered some graphics issues, the OS itself performed extremely well, and with certain animations turned off by default, GNOME feels satisfyingly fast.

We plan to explore Clear Linux more over time, along with other expanded Linux testing. One of the reasons we dove into this kind of testing right now is that we were forced to take a break from Windows testing until some firmware and OS updates come along. This all means that we'll be retesting our stack of CPUs soon, and when we do, we'll put them through our new expanded Linux test suite. Our 9900KS look will come soon, while our Cascade Lake-X Core X and third-gen Threadripper looks will come next month. Stay tuned!
