AMD Ryzen 5 3600X & Ryzen 5 3400G CPU Performance Review

Having taken a look at Linux performance with AMD’s Ryzen 5 6-core 3600X and 4-core 3400G last week, we’re now turning our attention to Windows. We’re tackling everything from encoding to rendering and math to gaming, with the ultimate goal of finding out how these chips stack up and where their greatest strengths lie.

Page 4 – Rendering: Arnold, Blender, KeyShot, V-Ray Next

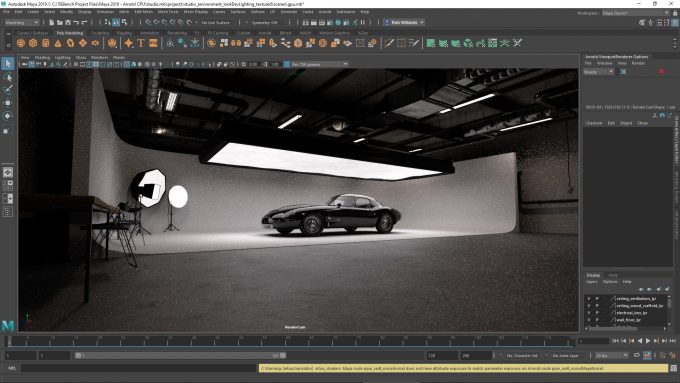

There are few things we find quite as satisfying as rendering: seeing a bunch of assets thrown into a viewport that turn into a beautiful scene. Rendering also happens to be one of the best possible examples of what can take advantage of as much PC hardware as you can throw at it. This is true both for CPUs and GPUs.

On this page and the next, we’re tackling many different renderers, because not all renderers behave the same way. That will be proven in a few cases. If you don’t see a renderer that applies to you, one of these could still become relevant in the future, should you decide to move to a different design suite or renderer. An example: V-Ray supports more than just 3ds Max; it also supports Cinema 4D, Maya, Rhino, SketchUp, and Houdini.

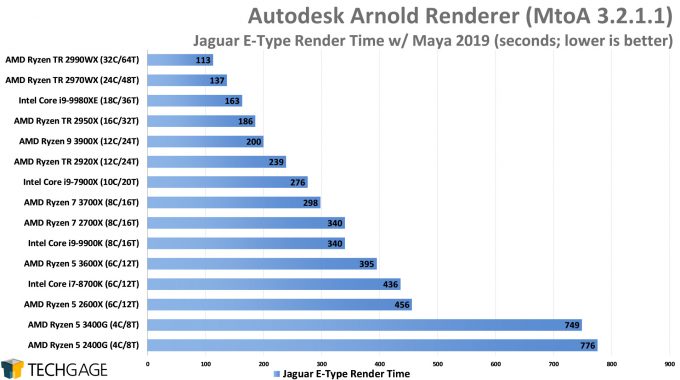

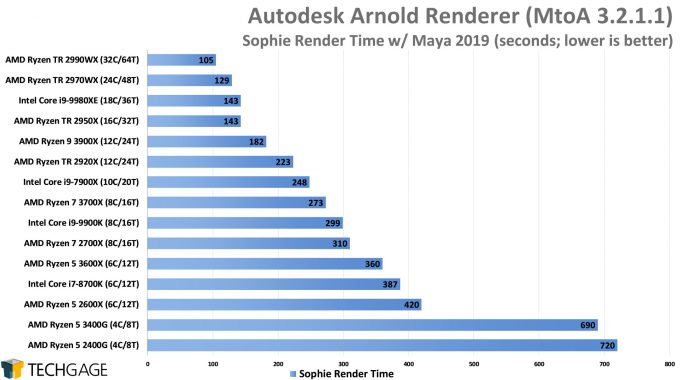

Autodesk Arnold

Ahh, now this is what we call satisfying scaling. Clearly, rendering is one of the most intensive tasks someone can do on a computer, but if we had to bet, we’d wager a lot of pixels that few people opting for the 3600X, and especially the 3400G, will be doing much rendering. However, the times are changing, and solutions like Blender are making it easier than ever to create cool content with modest hardware. That said, for those who render a lot on the CPU, the more cores, the better.

It’s great to see AMD perform well in Arnold overall, since the GPU version of the renderer supports only NVIDIA’s CUDA. At some point, RTX OptiX acceleration is going to be added in, and at some point later than that, we should see heterogeneous OptiX/CPU support available. Ultimately, if you can take advantage of both your CPU and GPU, the biggest gains should be possible.

On that note, let’s move on to a solution that has had CPU+GPU rendering built in for a little while: Blender.

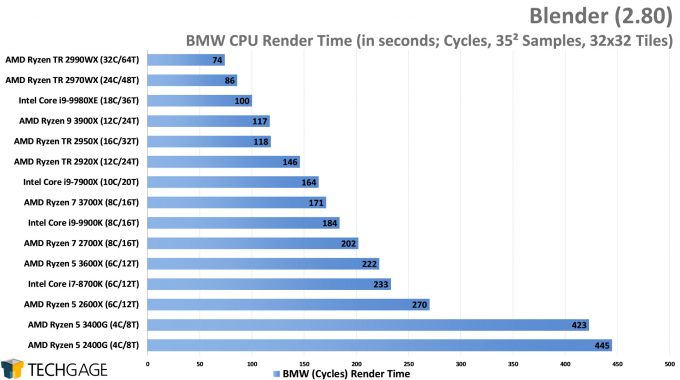

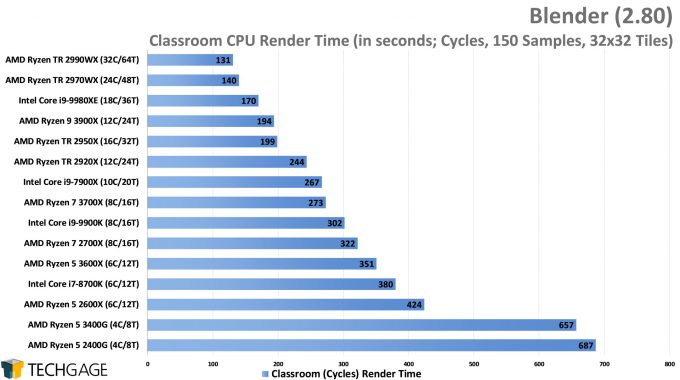

Blender

Blender never ceases to impress us with its ability to use both the CPU and GPU effectively, or rather we should specify “Cycles”, since the new Eevee renderer doesn’t use the CPU much at all for its actual rendering process. Meanwhile, Cycles is like fine wine that continues to get even better, and soon, that “better” will include NVIDIA OptiX support for seriously accelerated rendering.

Given its ability to scale well, Blender is giving us a similar picture to some of the other tests. The current-gen Zen 2 chips show good improvement over the last generation, while the G chips prove that they belong to a different price segment. That price is of course also bolstered by integrated graphics, which we’re not testing for this CPU-focused article.

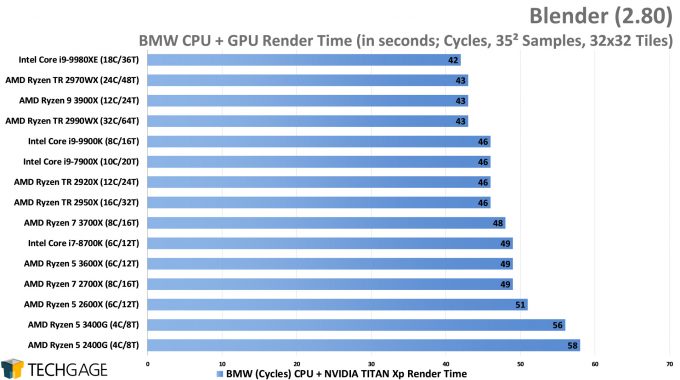

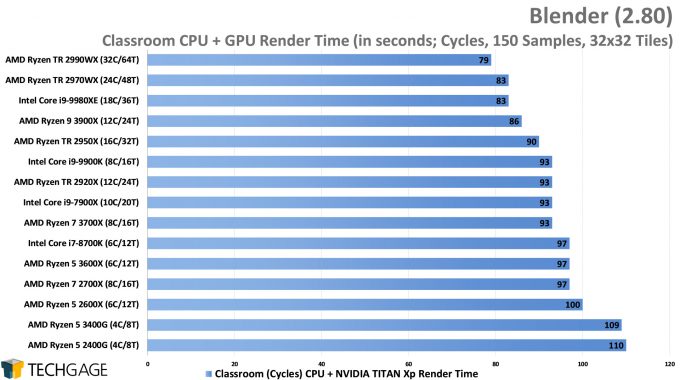

Speaking of GPUs, but those of a much larger sort, let’s see what changes once a TITAN Xp is introduced:

It’s immediately clear that Cycles really digs GPUs, with massive acceleration being seen when both the CPU and GPU are engaged in a render. If you’re a serious Blender user, you should check out our recent in-depth look at performance, which includes heterogeneous render tests with six sets of CPUs and GPUs all tested together, as well as viewport testing.

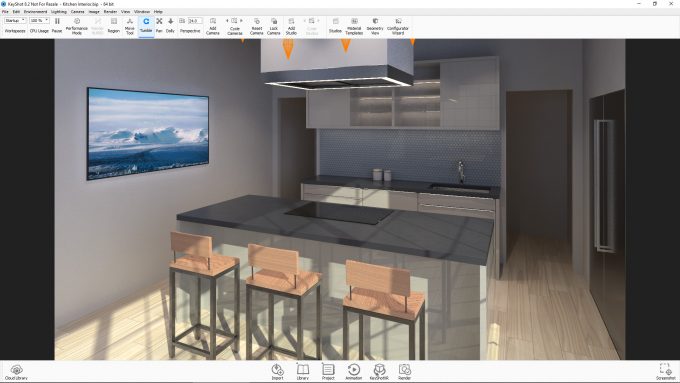

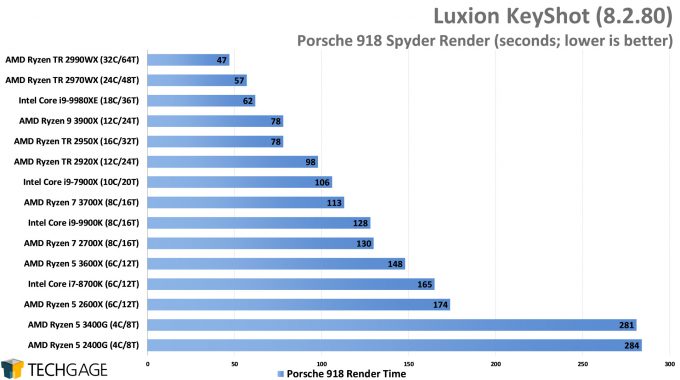

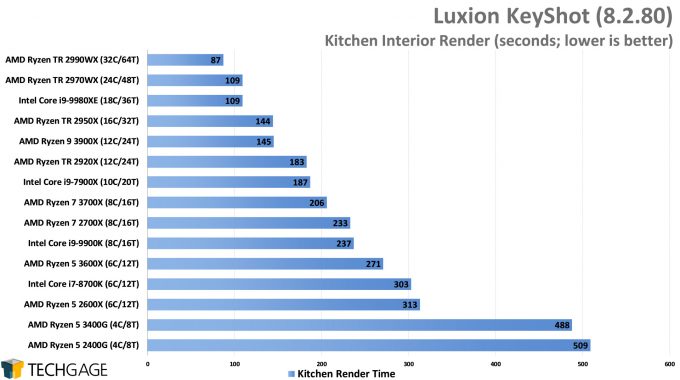

KeyShot

KeyShot continues to be one of our favorite tools to test with, primarily because it’s a lot of fun to use, and it also happens to scale really well. We’re not seeing much deviation from trends here in comparison to previous results, so we can take it as yet another form of proof that smaller CPUs are not ideal for CPU rendering. Again, 281s or so for the Porsche 918 model might not seem like a big deal, but that’s just one resolution, and one render. Since KeyShot is also a live renderer, that means your real-world interactions with the software will improve as your CPU’s capabilities grow.
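To put the “just one render” point in perspective, here is a minimal arithmetic sketch of how a per-frame render time adds up over a batch of frames. The 281-second figure is the one quoted above; the 240-frame count is purely a hypothetical of ours (think a 10-second turntable at 24 fps), and the function name is made up for illustration.

```python
def total_render_hours(seconds_per_frame: float, frame_count: int) -> float:
    """Wall-clock hours needed to render frame_count frames back to back."""
    return seconds_per_frame * frame_count / 3600


# 281 s per render is the figure quoted in the text.
# 240 frames is a hypothetical workload (10 seconds at 24 fps), not a tested one.
hours = total_render_hours(281, 240)
print(f"{hours:.1f} hours")  # prints "18.7 hours"
```

Under those assumptions, even a modest cut in per-frame time translates into hours saved across the whole job, which is why core count matters more as workloads grow.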

At the moment, KeyShot doesn’t have heterogeneous rendering capabilities, but it is set to gain OptiX support with KS9, and since OptiX can use the CPU as well, we’re hoping we’ll see OptiX + CPU rendering in the future. We’re not trying to be greedy, we swear!

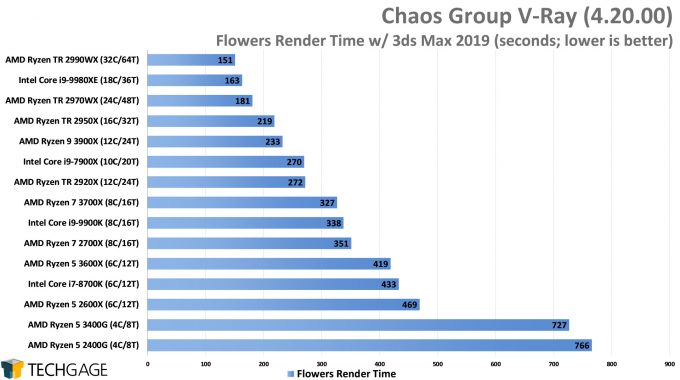

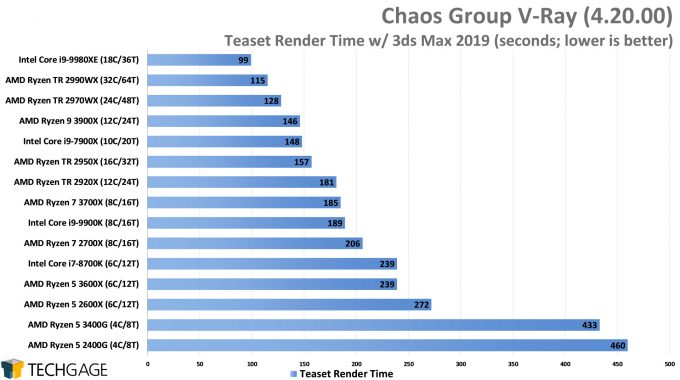

Chaos Group V-Ray Next

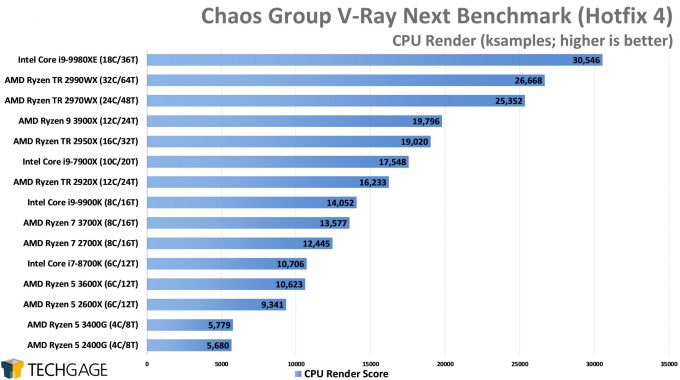

To help wrap up this page, V-Ray chimes in and effectively backs up the rest of the results by showing that more cores matter a lot in such an intense workload. Unlike the others, though, Intel’s chips seem to benefit from some optimization here, with the 18-core easily beating out the 32-core AMD chip in the more complex Teaset project, although AMD still manages to edge it out in the Flowers project.

Chaos Group offers a standalone benchmark, so we can’t help but compare our real-world results with it:

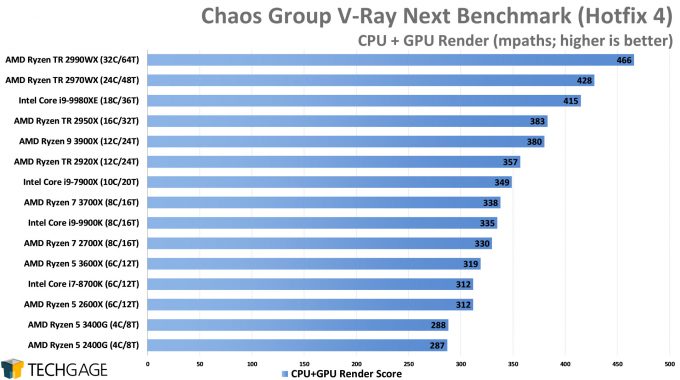

This test seems to agree that Intel has an advantage, but only with straight CPU rendering, and only in seemingly more complex projects. When the GPU is introduced, we return to the scaling that we’d expect to see. For our future V-Ray performance results, we’re going to test with both a mid-range and a high-end GPU so that we can better understand the advantages of certain combinations over others. Thankfully, those changes are coming soon, as some new CPUs are set to launch in the month ahead.
