An In-depth Look At AMD’s Ryzen 7 1800X, 1700X & 1700 Processors

To call AMD’s Ryzen family of processors highly anticipated would be an understatement. The market has been craving innovation in the CPU space for some time. With a trio of competitively priced desktop chips configured with 8 cores that have experienced major IPC boosts over past chips, all of that waiting has paid off.

Page 6 – Gaming: 3DMark, Ashes, Battlefield 1, GR: Wildlands, RotTR, TW: Attila & Watch Dogs 2

(All of our tests are explained in detail on page 2.)

It’s been easy to highlight the performance differences across our collection of CPUs on the previous pages, since most of the tests used take advantage of every thread we give them. But now, it’s time to move onto testing that’s a different beast entirely: gaming.

In order for a gaming benchmark to be useful in a CPU review, the workload on the GPU needs to be as mild as possible; otherwise, it could become a bottleneck. Since the entire point of a CPU review is to evaluate the performance of the CPU, running high detail and high resolutions in games won’t give us the most useful results.

As such, our game testing revolves around 1080p and 1440p, with games set to moderate graphics detail (not low-end, but not high-end, either). These settings shouldn’t prove to be much of a burden for the GeForce GTX 1080 GPU. For those interested in the settings used for each game, hit up page 2 (a link is found at the top of this page).

In addition to 3DMark, our gauntlet of tests includes six games: Ashes of the Singularity (CPU only), Battlefield 1 (Fraps), Ghost Recon: Wildlands (built-in benchmark), Rise of the Tomb Raider (built-in benchmark), Total War: ATTILA (built-in benchmark), and Watch Dogs 2 (Fraps).

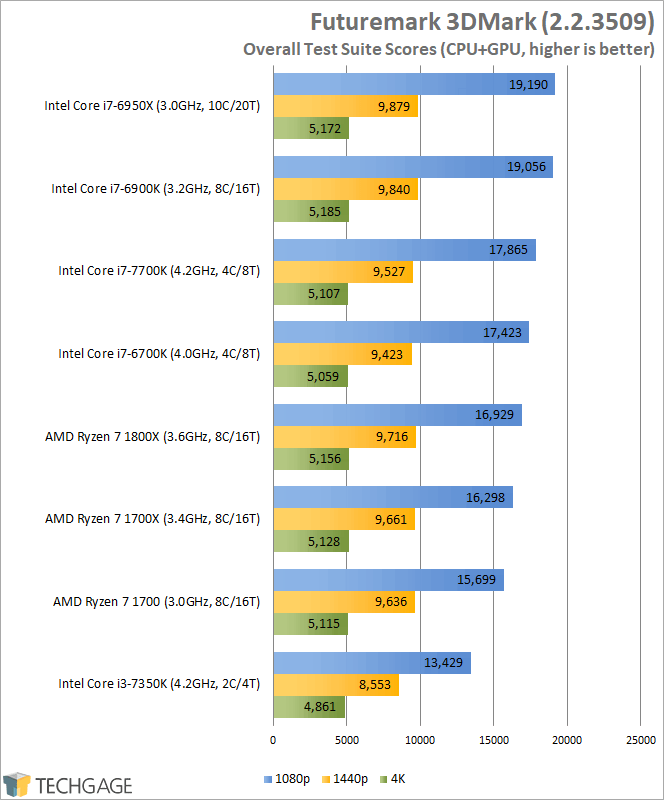

Futuremark 3DMark

As mentioned above, it’s going to be hard to see performance differences between CPUs if the GPU is a bottleneck, and these results explain why. At 1080p, there are grand differences between the bottom and top of the stack, but at 4K, where the GPU becomes the biggest bottleneck, there’s effectively no difference at all (except for the dual-core, but even that difference is mild).
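The bottleneck reasoning can be sketched with a toy model in which the slower of the two components sets the delivered framerate. This is a simplification for illustration, not how 3DMark or any game actually computes its results, and every number below is hypothetical rather than taken from our charts.

```python
def delivered_fps(cpu_limit_fps, gpu_limit_fps):
    """Toy model: whichever component is slower caps the framerate."""
    return min(cpu_limit_fps, gpu_limit_fps)


# Hypothetical limits, NOT measurements from this review.
cpu_limits = {"fast_cpu": 160, "slow_cpu": 110}
gpu_limits = {"1080p": 200, "4K": 60}

results = {
    resolution: {
        cpu: delivered_fps(cpu_limit, gpu_limit)
        for cpu, cpu_limit in cpu_limits.items()
    }
    for resolution, gpu_limit in gpu_limits.items()
}

for resolution, per_cpu in results.items():
    spread = max(per_cpu.values()) - min(per_cpu.values())
    print(resolution, per_cpu, "spread:", spread)
```

With these made-up limits, the two CPUs are 50 FPS apart at 1080p, while at 4K both are capped by the GPU and the spread drops to zero.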

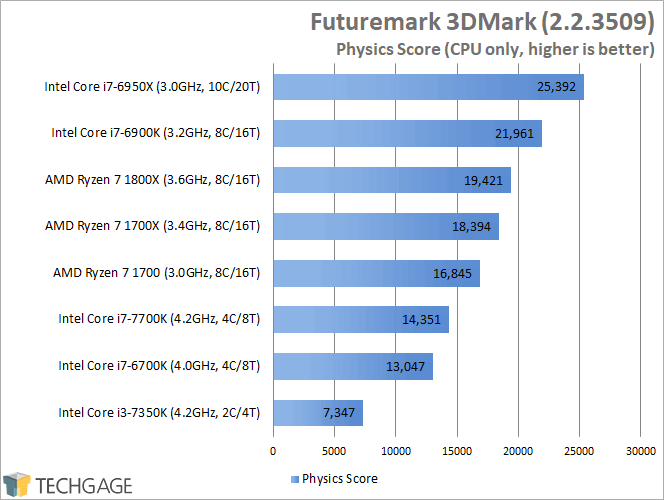

When we separate the physics score, which explicitly uses the CPU, we can see why the overall scores above managed to get their boost:

As with most of the tests prior to this page, huge differences can be seen when the CPU is specifically singled out in a gaming test. But just how useful is this data? It’s really only useful if you consider it to represent theoretical gaming performance. On this page, the 10-core places at the top of the chart in the CPU-specific tests, but in actual gaming, the higher-clocked (but lower-end) chips perform better.

So, theoretically, if a game can take advantage of 8- and 10-core chips, the graph above would represent the benefits of many-core CPUs. The closest I think we have to hitting the mark comes up next:

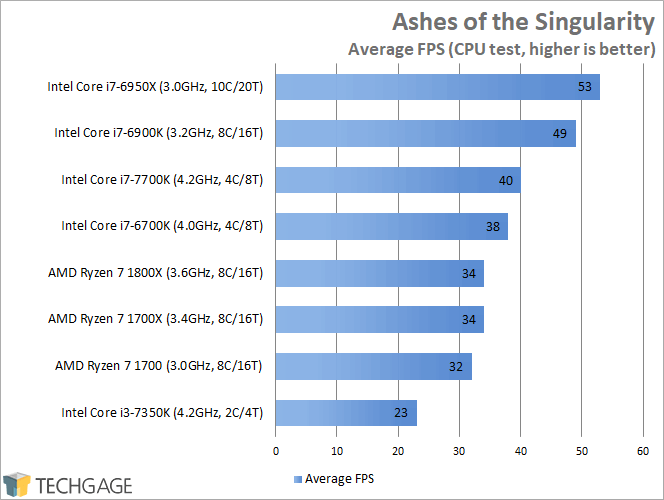

Ashes of the Singularity

Ashes is a game popular for benchmarking because it’s flexible, can run in either DX11 or DX12, and can take great advantage of the CPU it’s given. Above, we saw that 3DMark gave us great scaling in its physics test, and with Ashes, we see similar scaling, with the 10-core chip ruling the roost.

It’s interesting that AMD’s Ryzen 7 chips fall behind both the 6700K and 7700K in Ashes, whereas they didn’t in 3DMark, but that could be because 3DMark makes better use of the CPU than this version of Ashes does.

A couple of weeks ago, AMD’s Robert Hallock posted about an Ashes update that takes better advantage of Ryzen, but until the time came to write about these results, I had no idea that the specific version he was talking about was Escalation. As such, the results above are not as useful as ones from Escalation, because the original Ashes has clearly not been treated to this same core upgrade.

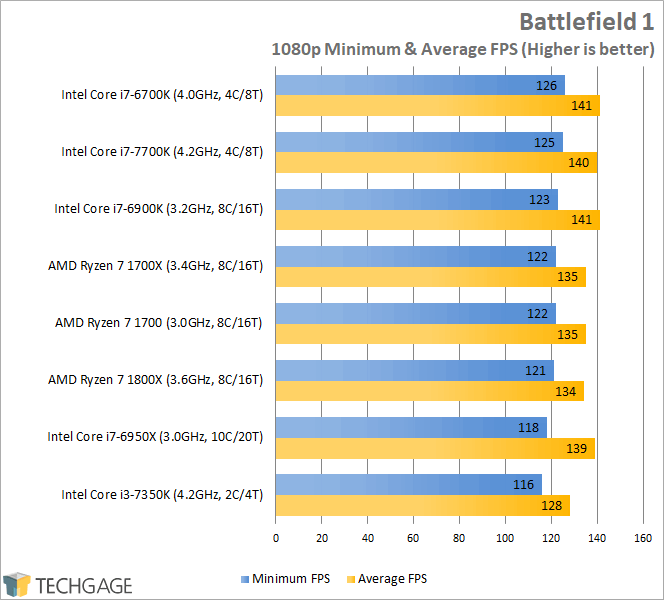

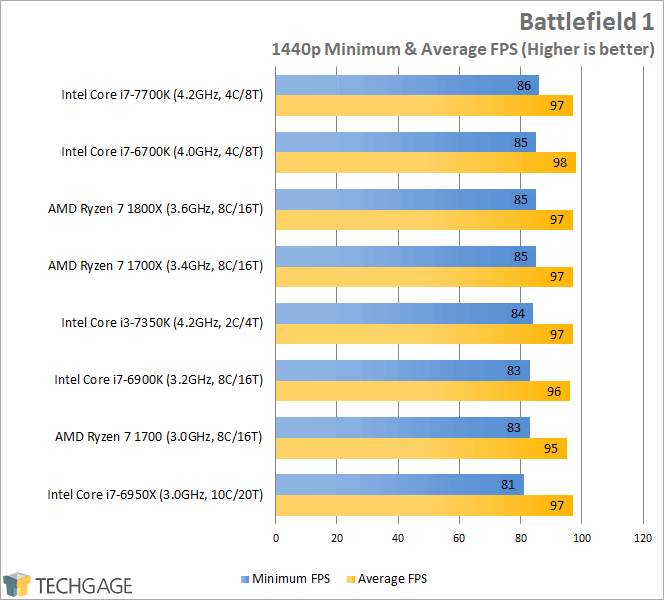

Battlefield 1

BF1 is one of the more recent examples of games that can actually take decent advantage of today’s processors, but as we see here, the overall differences are not that stark. At 1080p, the delta between the top and bottom of the stack is a mere 10 FPS (we’re talking 126 peak), and at 1440p, that gap tightens to just a 5 FPS difference.
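To put that 1080p delta in relative terms, here is a quick worked calculation. It assumes the 126 FPS peak is the top-of-stack average and that the bottom of the stack sits 10 FPS below it (116 FPS); the function name is purely illustrative.

```python
def relative_gap_percent(top_fps, bottom_fps):
    """Gap between two results, as a percentage of the faster one."""
    return (top_fps - bottom_fps) / top_fps * 100.0


# Assumed values: 126 FPS peak, and 126 - 10 = 116 FPS at the bottom.
gap = relative_gap_percent(126.0, 116.0)
print(f"{gap:.1f}%")  # prints "7.9%"
```

Under those assumptions, the slowest chip trails the fastest by roughly 8%.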

I should stress that this testing represents offline play, and that online play will likely give you different results. But because of the variable nature of the gameplay, real-world testing is complicated, especially for someone who’s not familiar with the game and would drag an unsuspecting team down. Thus, I stick to offline tests for the sake of sanity.

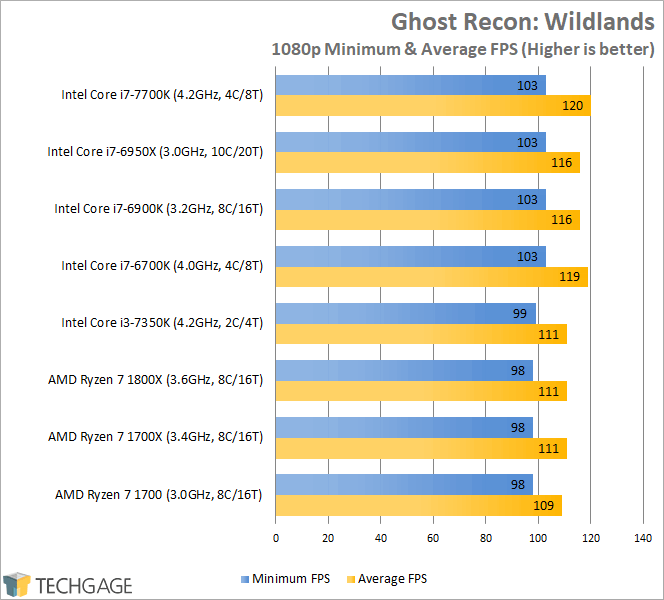

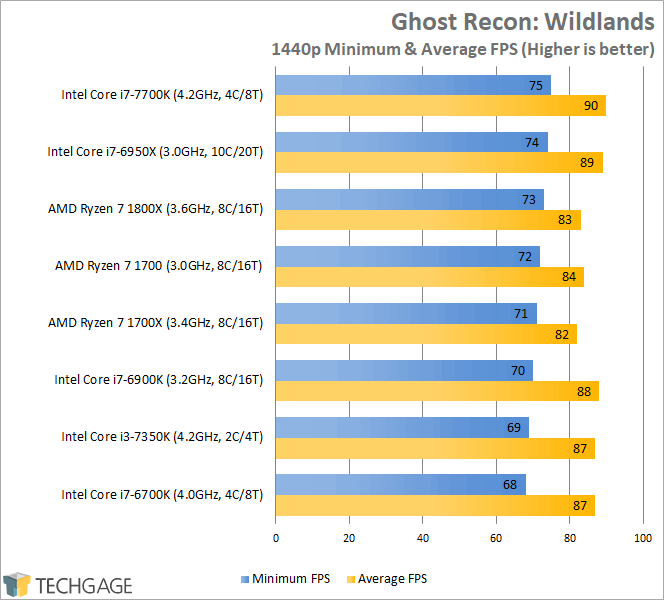

Ghost Recon: Wildlands

We’re seeing more of the same here, with very little overall difference between the top and bottom. At 1080p, Ryzen 7 struggles to get the extra few frames per second that would push it higher, but at 1440p, the gap tightens, with 7 FPS separating the 1800X and 7700K.

If it looks a bit strange that the 1700 comes ahead of the 1700X in the 1440p test, that’s just the result of variance, and doesn’t suggest that it’s actually somehow better. When results are this close, this kind of thing is just bound to happen.
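One rough way to sanity-check a flipped ordering like this is to compare the gap between two chips against the run-to-run spread of each. The sketch below is a simple heuristic, not a formal statistical test and not the method used for this review, and the run values are hypothetical placeholders.

```python
from statistics import mean, stdev


def gap_within_noise(runs_a, runs_b):
    """Return True if the gap between the two means is no larger than
    the bigger of the two run-to-run standard deviations."""
    gap = abs(mean(runs_a) - mean(runs_b))
    noise = max(stdev(runs_a), stdev(runs_b))
    return gap <= noise


# Hypothetical average-FPS results from three runs per chip.
chip_a_runs = [88.0, 90.0, 92.0]
chip_b_runs = [89.0, 91.0, 93.0]
chip_c_runs = [100.0, 101.0, 102.0]

print(gap_within_noise(chip_a_runs, chip_b_runs))  # True: 1 FPS gap, 2 FPS spread
print(gap_within_noise(chip_a_runs, chip_c_runs))  # False: 11 FPS gap, 2 FPS spread
```

If the check returns True, the ordering of the two chips could plausibly flip on the next run, which is all that a one-position swap in a tight chart really tells us.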

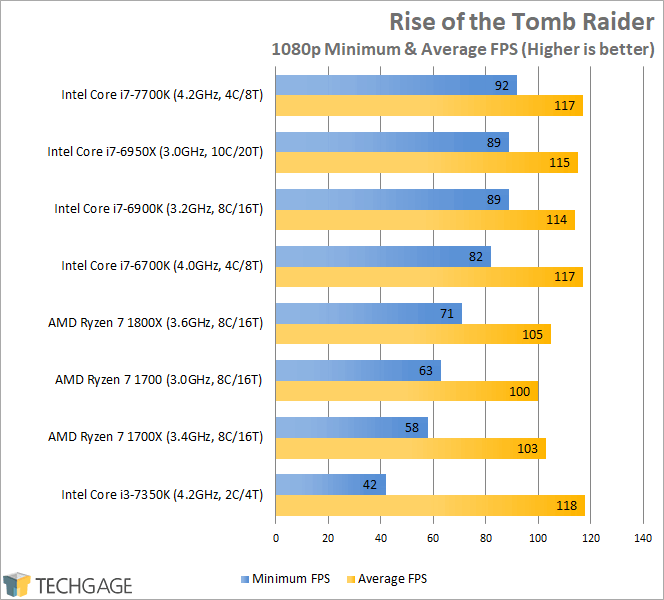

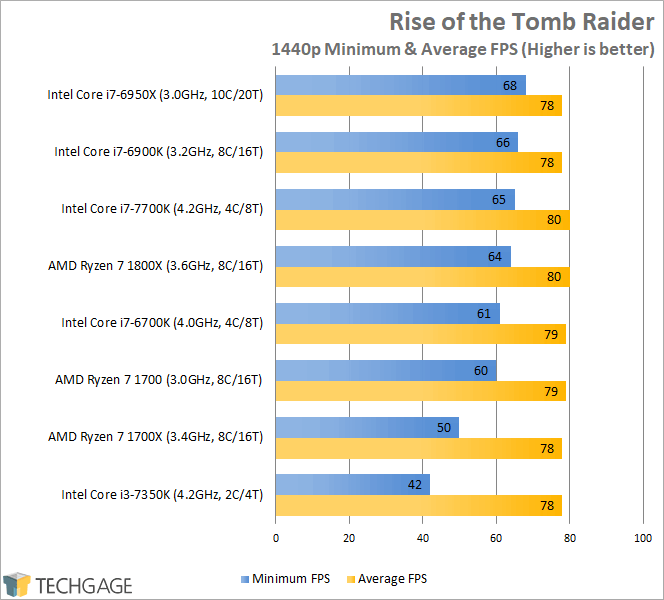

Rise of the Tomb Raider

So far, it’s been difficult to spot actually notable performance differences in gaming, but Rise of the Tomb Raider spices things up. While the average framerates are fair enough, the minimum framerates can take a real nose-dive on slower CPUs. Interestingly, while the i3-7350K has a high clock speed, that wasn’t enough to ensure that the minimum FPS kept close to 60 – despite delivering nearly 120 FPS overall (at 1080p).
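To illustrate how a healthy average can hide a poor minimum, here is a minimal sketch that derives both figures from per-frame render times. It assumes the minimum is the lowest frame count in any full second of the run, which is one common definition but not necessarily the one the game’s built-in benchmark uses. The function name and the sample data are hypothetical.

```python
def fps_summary(frame_times_ms):
    """Return (average_fps, minimum_fps) from per-frame times in whole ms.

    The minimum is taken as the lowest number of frames that started
    within any full second of the run (an assumed definition).
    """
    total_ms = sum(frame_times_ms)
    average_fps = len(frame_times_ms) * 1000.0 / total_ms

    full_seconds = int(total_ms // 1000)
    frames_per_second = [0] * full_seconds
    elapsed_ms = 0
    for frame_time in frame_times_ms:
        second = int(elapsed_ms // 1000)
        if second < full_seconds:  # ignore a trailing partial second
            frames_per_second[second] += 1
        elapsed_ms += frame_time

    minimum_fps = min(frames_per_second) if frames_per_second else average_fps
    return average_fps, minimum_fps


# Hypothetical run: one smooth second (100 frames at 10 ms each)
# followed by one rough second (50 frames at 20 ms each).
sample = [10] * 100 + [20] * 50
average, minimum = fps_summary(sample)
print(average, minimum)  # prints "75.0 50"
```

The made-up run averages 75 FPS, yet dips to 50 FPS during its rough second. That is the same pattern seen with slower CPUs here, just on a smaller scale.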

At 1440p, the gap once again tightens, with the entire stack of CPUs delivering the same average framerate. But, we do see another oddity: the 1700X falling 10 FPS short (at the minimum) against the slower 1700. What gives?

I wish I knew. All of the games were run with the same graphical settings, and the same motherboard settings (verified often), and yet the 1700X still delivered 10 FPS less at the minimum. Benchmarking inherently involves variables, but a gap like this isn’t seen too often without an actual reason for it. The 1700X was the final chip of the entire bunch I benchmarked, so I tested both resolutions multiple times, and the performance held. The reason escapes me.

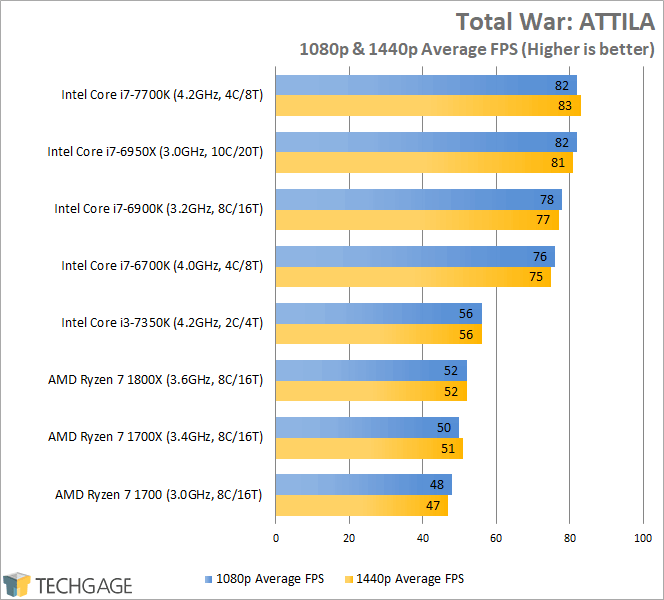

Total War: ATTILA

ATTILA’s built-in benchmark isn’t advertised as being a CPU test, but it acts as one. While it might not take advantage of 8 or more threads all that often, a good CPU can ensure that the framerate isn’t being held back. The i3-7350K, despite its high clock speed, doesn’t have enough threads to handle this game at its best, and conversely, the 8- and 10-core chips perform no better than the Intel quad-cores.

What about Ryzen, and its occupancy at the bottom? It seems glaringly clear that ATTILA was heavily optimized for Intel architectures, because there’s just no reason these 8-core Ryzen chips should fall so far behind Intel’s 4-core, IPC be damned.

With Ryzen being the most competitive processor AMD’s had in quite some time, it goes without saying that future games are going to be built with more focus on supporting the Zen architecture. It might just take time, and thankfully, if this small sampling is any gauge, performance degradation to this extent on Ryzen shouldn’t be common.

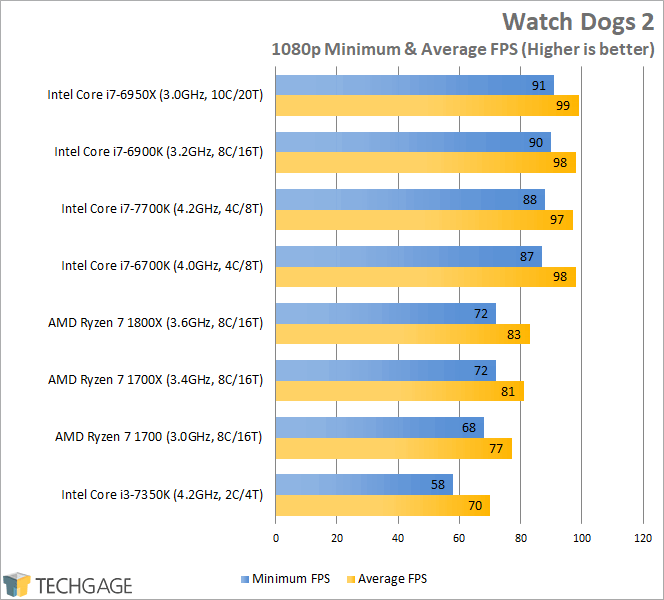

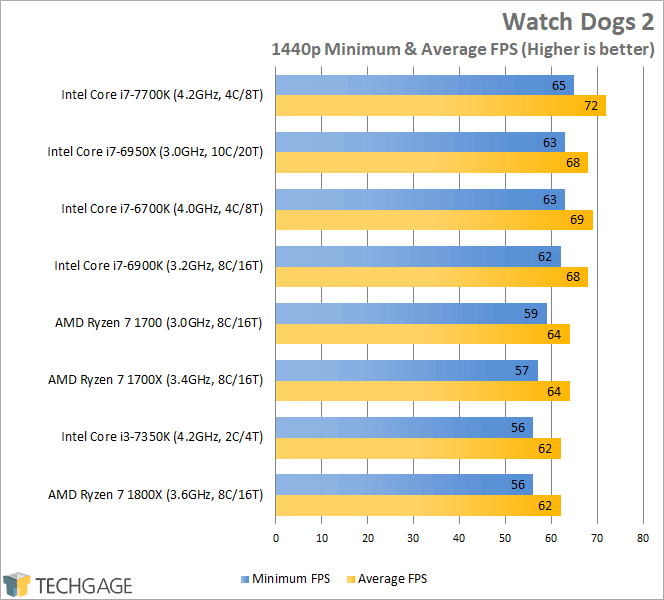

Watch Dogs 2

With Watch Dogs 2 at 1080p, we see one of the most notable drops in performance on this page, with the 1800X falling about 15 FPS short on both the minimum and average against Intel’s 7700K. Like ATTILA above, it seems Watch Dogs 2 doesn’t take too kindly to Ryzen’s unique architecture. Fortunately, at higher resolutions, the performance gap becomes less noticeable.