Exploring GPU Photogrammetry Performance With RealityCapture

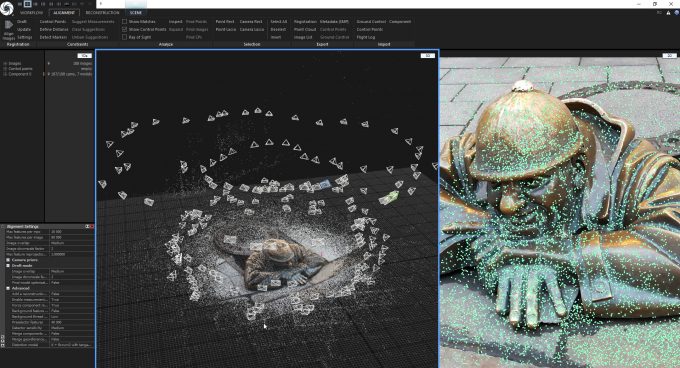

Turning a series of photographs into 3D models is a compute-intensive process, one that can make good use of CPUs and GPUs alike. Capturing Reality’s RealityCapture becomes our second photogrammetry benchmark, and we’re introducing it with a dedicated look at graphics card performance.

At Techgage, we strive to cover as many realistic workloads in our benchmarking as possible, but over time, it’s become obvious that our photogrammetry coverage has been a bit lacking. For a couple of years, we’ve tested with Agisoft’s Metashape, but thanks to reader feedback, we now welcome Capturing Reality’s aptly named RealityCapture to the suite.

Like Metashape, RealityCapture is designed to accelerate the process of turning a series of photographs into a high-quality model that can later be imported into design software. RealityCapture is meant to be as easy as possible to use, although out-of-the-box settings might not be ideal for everyone. In the simplest workflow, you drag and drop your photos in, hit “Start”, and wait for the process to finish so that you can fine-tune and export the model.

Photogrammetry can make good use of CPUs and GPUs, but the nature of the computation means scaling will not be as satisfying as what we might see with rendering, or possibly even encoding. If there’s one thing we’ve learned over our fifteen years of benchmarking, it’s that image manipulation tests are really hard to scale, especially throughout the entire process.

Performance Testing RealityCapture

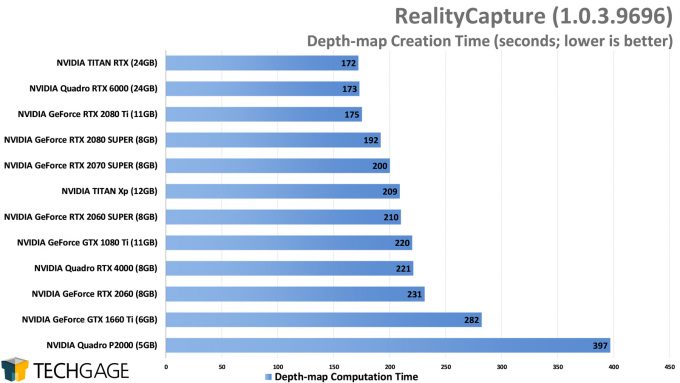

Turning photos into 3D models requires multiple steps, and if you monitor CPU and GPU usage during the entire process, you’ll notice that each of those steps uses the available processors differently. In some cases, you’ll even see forced lulls caused by steps that can’t be (or haven’t been) parallelized. This ultimately means that when we post an “Overall” time, it includes these lulls. Fortunately, the available depth-maps measurement is as close to a GPU-only result as we can get.
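To put a rough number on how those lulls cap the benefit of a faster GPU, Amdahl's law is a handy mental model. The sketch below is illustrative only: `gpu_fraction` (the share of the overall time that is GPU-bound) and `gpu_speedup` are hypothetical inputs, not values we measured in RealityCapture.

```python
def overall_speedup(gpu_fraction, gpu_speedup):
    """Amdahl's law: speedup of the whole job when only part of it gets faster.

    gpu_fraction -- assumed share of total time spent in GPU-bound steps (0..1)
    gpu_speedup  -- how many times faster the new GPU runs those steps
    """
    if not 0.0 <= gpu_fraction <= 1.0:
        raise ValueError("gpu_fraction must be between 0 and 1")
    if gpu_speedup <= 0:
        raise ValueError("gpu_speedup must be positive")
    # The non-GPU share is unchanged; the GPU share shrinks by gpu_speedup.
    return 1.0 / ((1.0 - gpu_fraction) + gpu_fraction / gpu_speedup)


# Hypothetical fractions, purely for illustration (not measured values).
for fraction in (0.25, 0.50, 0.75):
    gain = overall_speedup(fraction, gpu_speedup=2.0)
    print(f"GPU-bound share {fraction:.0%}: a 2x faster GPU gives {gain:.2f}x overall")
```

The smaller the GPU-bound share, the less a GPU upgrade moves the “Overall” time, which is why the depth-maps result is the more useful GPU comparison.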

We’re kicking off our look at RealityCapture with only GPUs in focus because our original CPU-based testing was flawed, so we don’t currently have useful data to share. RC stores a log file on the file system that we thought would contain accurate timings, but it doesn’t. Instead, the proper way to get accurate times is with the report option in the software itself.

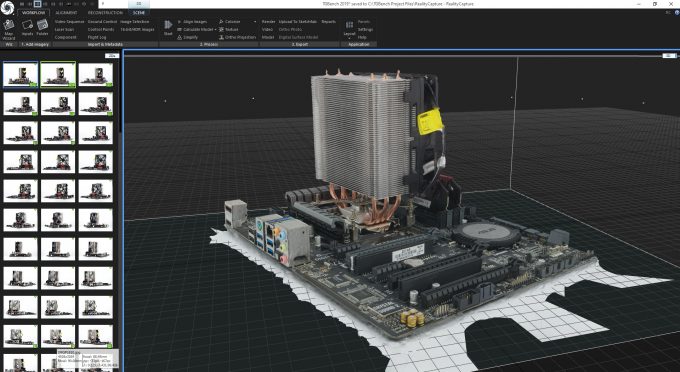

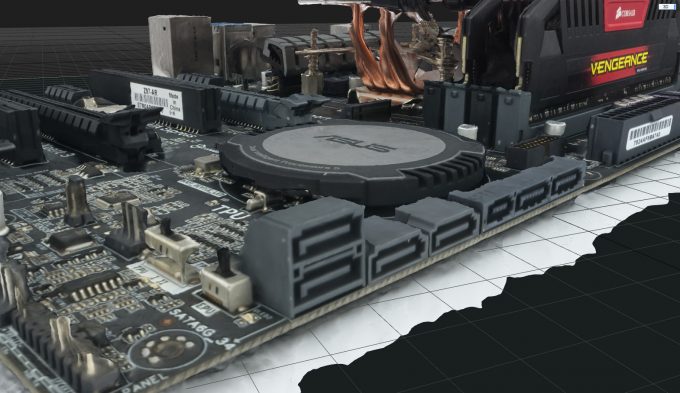

Given the lack of CPU performance data here, we feel like this article is a bit lacking, but it’s a start, and will improve the next time we do a full round of testing. In our test, we drop 123 photos that Techgage Senior Editor Jamie Fletcher took of an ATX motherboard and CPU cooler into the software, and hit start. That produces something similar to what you see above.

This isn’t a perfectly shot project, but that doesn’t impact the test in any way. One thing we goofed on was not testing texture generation performance, as we had not configured the settings correctly. That will be included in the future, along with CPU performance, in the next article.

With that preamble out of the way, here’s a look at the test system used:

| Techgage Workstation Test System | |
| --- | --- |
| Processor | Intel Core i9-10980XE (18-core; 3.0GHz) |
| Motherboard | ASUS ROG STRIX X299-E GAMING |
| Memory | G.SKILL Flare X (F4-3200C14-8GFX) 4x8GB; DDR4-3200 14-14-14 |
| Graphics | NVIDIA TITAN RTX (24GB, GeForce 441.66); NVIDIA TITAN Xp (12GB, GeForce 441.66); NVIDIA GeForce RTX 2080 Ti (11GB, GeForce 441.66); NVIDIA GeForce RTX 2080 SUPER (8GB, GeForce 441.66); NVIDIA GeForce RTX 2070 SUPER (8GB, GeForce 441.66); NVIDIA GeForce RTX 2060 SUPER (8GB, GeForce 441.66); NVIDIA GeForce RTX 2060 (6GB, GeForce 441.66); NVIDIA GeForce GTX 1080 Ti (11GB, GeForce 441.66); NVIDIA GeForce GTX 1660 Ti (6GB, GeForce 441.66); NVIDIA Quadro RTX 6000 (24GB, Quadro 441.66); NVIDIA Quadro RTX 4000 (8GB, Quadro 441.66); NVIDIA Quadro P2000 (5GB, Quadro 441.66) |
| Audio | Onboard |
| Storage | Kingston KC1000 960GB M.2 SSD |
| Power Supply | Corsair 80 Plus Gold AX1200 |
| Chassis | Corsair Carbide 600C Inverted Full-Tower |
| Cooling | NZXT Kraken X62 AIO Liquid Cooler |
| Et cetera | Windows 10 Pro build 18363 (1909) |

As the graphics card list above hints, RealityCapture is suited for those with NVIDIA graphics cards, as it has crucial operations that require CUDA. With non-CUDA GPUs, RealityCapture will generate a sparse point cloud, which is basically image alignment and feature detection on the images projected into 3D. Meshing, texturing, and shading all require a CUDA GPU, i.e. an NVIDIA card made in the last few years. Support for AMD GPUs has been requested by the community, but it is not available at this time.

RC is the seventh or eighth CUDA-only test in our suite, which is notable because we don’t have a single AMD-only test – they don’t really exist. Hopefully in time, companies will work harder to support their full feature sets through OpenCL or Vulkan. The fact we have so many CUDA-only tests has to be a testament to how easy CUDA is to develop with, but locking out competitor GPUs is unfortunate.

As we do with most tests, our RealityCapture benchmark was run three times per GPU, although in some cases, we ran an additional round in order to increase our confidence level. Photogrammetry can allocate resources slightly differently from run to run, sometimes resulting in a larger delta from run A to run B than is reasonable. In the end, all of these reported results were accomplished at least three times on the rig, with less than 1% variance between them.
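For anyone wanting to apply a similar sanity check to their own timings, here is a minimal sketch. It assumes "variance" means the spread between the fastest and slowest run relative to the mean, which is one reasonable reading rather than a formal definition; the function names and the sample timings are hypothetical.

```python
from statistics import mean


def relative_spread(times_s):
    """Return (slowest - fastest) / mean, as a percentage, for a list of run times."""
    if len(times_s) < 2:
        raise ValueError("need at least two runs to measure spread")
    return (max(times_s) - min(times_s)) / mean(times_s) * 100.0


def runs_agree(times_s, min_runs=3, tolerance_pct=1.0):
    """True when there are enough runs and they fall within the tolerance."""
    return len(times_s) >= min_runs and relative_spread(times_s) < tolerance_pct


# Hypothetical timings in seconds, for illustration only (not measured results).
example_runs = [300.0, 301.5, 299.4]
print(f"spread: {relative_spread(example_runs):.2f}%  agree: {runs_agree(example_runs)}")
```

If a set of runs fails the check, the simplest remedy is the one described above: run an additional round and re-evaluate.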

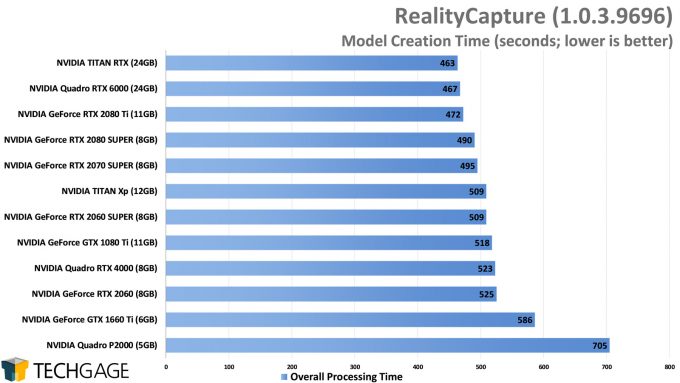

One thing is made immediately clear here: you don’t want to go too low-end with your GPU. While the overall process time is going to include a number of lulls, a faster GPU is ultimately going to help you get a project done quicker, and while the performance delta between some of the top models is modest, the gains at the low-end are enormous.

At the top-end of this chart, the gains, or supposed gains, are minimal. In reality, further runs could have changed the order of those top three just a bit. The Quadro RTX 6000 and TITAN RTX have similar hardware, but the 2080 Ti loses some cores and still manages to keep up.

When we break things down and single out the depth-map creation time, which is entirely GPU-based, we see slightly more interesting scaling, but only slightly:

We regret not having results for the texturing process here, but that will be rectified in the future. We don’t expect that adding it will change the outlook, but it will give us more data (which we happen to love). Again, lower-end GPUs should be avoided, with the Turing-based GeForce RTX 2060 being a good bare minimum.

From a value perspective, the RTX 2080 SUPER looks to be the best option overall. It trails the big three, but the least-expensive option of those is $1,000 SRP (more like $1,200 in reality), so at $700, the 2080S seems like a good compromise between mid-range and top-end.

All things considered, the GeForce GTX 1080 Ti deserves praise for where it lands. If you can find one of those on the cheap nowadays, that could be a really desirable option, especially since it has an 11GB framebuffer, rather than just 8GB – although this is something that will not likely impact RC, as it’s extremely memory efficient (our project peaks at 1.3GB VRAM, and 8GB system RAM).

Wrapping Up

At around the time we began our GPU testing with RealityCapture, the company had released version 1.0.3.10298, but the actual download would still give us the old version – thus we stuck to the previous build. The updated version includes the ability to save reports to the cloud, which is something we’re keenly interested in checking out.

Another perk with the new update: you can now run four instances of the software at once, in case you want to queue up a batch and then go chill doing something else for a while. There’s actually a lot of good stuff in the update, so you can read up on the rest at the release notes page. Nothing in it looks to change performance in any notable way vs. the version we tested.

We regret taking so long to get some RealityCapture performance posted, but as we have a better grasp of how to test it now, our future testing will be more elaborate. If you have feedback based on this first go, or are still not sure which GPU might suit you best, please feel free to hit up the comments.
