How Low Should You Go? ASUS Radeon R7 250X Graphics Card Review

After we finished benchmarking AMD’s $110 Radeon R7 260 last winter, we were impressed enough to call it a “console killer” (thanks in part to the ‘next-gen’ consoles having launched not long before). Will we be able to say the same about the R7 250X, a model just one step down? That’s what we’re going to find out.

Power & Temperatures

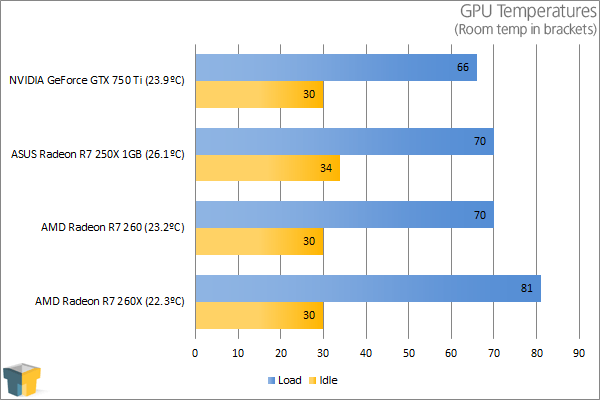

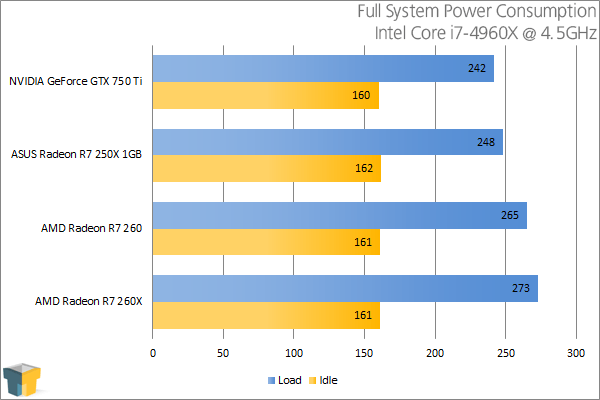

To test graphics cards for both their power consumption and temperature at load, we utilize a couple of different tools. On the hardware side, we use a trusty Kill-a-Watt power monitor which our GPU test machine plugs into directly. For software, we use Futuremark’s 3DMark to stress-test the card, and AIDA64 to monitor and record the temperatures.

Before testing, we check the area around the chassis with a temperature gun and record the average ambient temperature. Once that’s established, the PC is turned on and left to sit idle for ten minutes. At this point, we open AIDA64 along with 3DMark. We then kick off a full suite run and watch the Kill-a-Watt when the test reaches its most intensive interval (GT 1) to record the load wattage.

NVIDIA’s GeForce GTX 750 Ti is quite a bit faster than the R7 250X, yet it still runs cooler, and that’s with a bare-bones reference cooler. On the power side, the NVIDIA card continues to dominate, while the R7 250X predictably draws less power than the R7 260.

Final Thoughts

After having taken a look at the $110 R7 260 last December, I had figured that the R7 250X wouldn’t differ much in testing, but it didn’t take long after I started benchmarking to realize I was wrong. The 250X might not have lost many cores, but its drop in effective memory clock from 6000MHz to 4500MHz made a world of difference.
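The memory-clock gap is easier to appreciate as bandwidth. The following is a rough back-of-the-envelope sketch that converts the effective memory clocks quoted above into theoretical peak bandwidth. It assumes both cards use a 128-bit GDDR5 interface; that bus width is our assumption and is not a figure from this review.

```python
# Theoretical peak memory bandwidth = effective transfer rate x bus width / 8.
# Assumption: both the R7 260 and R7 250X use a 128-bit memory bus
# (not stated in the review text).
BUS_WIDTH_BITS = 128


def peak_bandwidth_gbs(effective_mhz: int, bus_width_bits: int = BUS_WIDTH_BITS) -> float:
    """Return theoretical peak bandwidth in GB/s (decimal gigabytes)."""
    transfers_per_second = effective_mhz * 1_000_000  # effective MHz -> transfers/s
    bytes_per_transfer = bus_width_bits / 8           # bits -> bytes per transfer
    return transfers_per_second * bytes_per_transfer / 1e9


r7_260 = peak_bandwidth_gbs(6000)   # 6000MHz effective, from the review
r7_250x = peak_bandwidth_gbs(4500)  # 4500MHz effective, from the review
drop_pct = (r7_260 - r7_250x) / r7_260 * 100  # loss relative to the R7 260

print(f"R7 260:  {r7_260:.1f} GB/s")
print(f"R7 250X: {r7_250x:.1f} GB/s")
print(f"Bandwidth drop: {drop_pct:.1f}%")
```

Under that assumption, the R7 250X gives up a quarter of the R7 260’s peak memory bandwidth while keeping most of its shader hardware.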

Compared to the R7 260, the 250X delivered almost half the overall framerate in AC IV: Black Flag, and while it didn’t fall as far behind in every test, our Best Playable results showed that far more settings than usual had to be dialed back. In Black Flag, we actually had to resort to the Low detail setting, and in Crysis 3 we had to use it across the board. The R7 260, by contrast, avoided that and still delivered better framerates in the end.

The big question for this little card is this: Is the R7 250X worth it? Ultimately, not really. Based on SRP alone, the R7 260 is well worth the extra $10 for the massive boost in memory performance and 20% more cores. We’re talking a mere 10% price increase for a performance gain of at least 25%, and more like 50%+ in most cases.
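To put the SRP argument in numbers, here is a small worked calculation. It uses the $110 SRP and $10 premium from this page (the $100 SRP for the R7 250X is inferred from those two figures) and the 25% to 50% performance range quoted above, and expresses the result as performance per dollar relative to the R7 250X. It is only a sketch of the arithmetic; the helper names are hypothetical, and real value depends on street pricing rather than SRP.

```python
# Figures from the review: R7 260 SRP of $110 and a $10 premium over the R7 250X.
# The $100 R7 250X SRP is inferred from those two numbers (an assumption).
SRP_R7_250X = 100.0
SRP_R7_260 = 110.0


def pct_increase(base: float, new: float) -> float:
    """Percentage increase when going from `base` to `new`."""
    return (new - base) / base * 100.0


def relative_perf_per_dollar(price_gain_pct: float, perf_gain_pct: float) -> float:
    """Performance per dollar of the pricier card relative to the cheaper one.

    1.0 means equal value; above 1.0 means the pricier card is the better deal.
    """
    return (1 + perf_gain_pct / 100) / (1 + price_gain_pct / 100)


price_gain = pct_increase(SRP_R7_250X, SRP_R7_260)

# 25% is the review's stated minimum gain; 50% is its "most cases" figure.
for perf_gain in (25.0, 50.0):
    value = relative_perf_per_dollar(price_gain, perf_gain)
    print(f"+{price_gain:.0f}% price, +{perf_gain:.0f}% performance -> "
          f"{value:.2f}x the performance per dollar")
```

Even at the low end of the range, the R7 260 comes out ahead on performance per dollar at SRP.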

Beyond SRPs, though, GPU pricing changes a lot, and it’s not uncommon to see overlapping products at the same price at etailers like Newegg. At the outset, I said that an R7 250X could be had for $75 after mail-in rebate, but the R7 260 can be had for exactly the same price, both before and after the rebate. The kicker? It’s the same brand, and on the surface, the same card. Admittedly, that could be a one-off deal, but it goes to show that the R7 250X, R7 260, and even the R7 260X are all priced very similarly at etail.

Speaking of the R7 260X, Newegg has many cards for $100 after mail-in rebate. That’s still $25 more than the post-rebate price of the R7 250X I mentioned before, but the performance boost is substantial. Not to mention, that card would bump us up to 2GB of VRAM.

In the end, if you’re looking for a ~$100 GPU, the Radeon R7 260 is the one to look at.
