Intel Core 2 Duo E7200 – The New Budget Superstar?

At 2.53GHz and $133 USD, the E7200 promises to become the new Dual-Core budget superstar. After taking a hard look at the upcoming offering, we would have to readily agree. Overclocking only sweetens the deal further, with 3.0GHz on stock voltages being more than possible. We have a winner!

Page 11 – Linux: GCC, Archiving, Image Suite

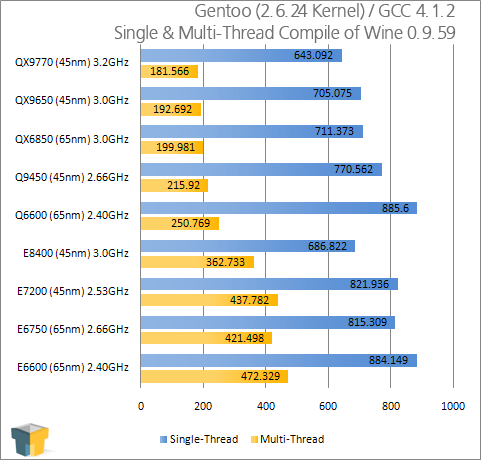

GCC Compiler

When thinking about faster processors or processors with more cores, multimedia projects immediately come to mind as the workloads that benefit most. However, anyone who regularly uses Linux knows that a faster processor can greatly speed up compiling applications with GCC. Programmers would see the greatest benefit here, but end-users who often compile large applications would also reap the rewards.

Even if you don’t use Linux, the results found here are relevant to all programmers, as long as your build process can run compile jobs in parallel. GCC builds driven by make scale well across cores, so the results found here should represent the average increase you would see in similar scenarios.

For our testing, we are using Gentoo 2007.0 under the 2.6.24-r3 Gentoo-patched kernel. The system is command-line-based, with no desktop environment installed, which helps to keep processes to an absolute minimum.

Our target is a copy of Wine 0.9.59 (with fontforge support). We are using GCC 4.1.2 as our compiler. For single-core testing, “time make” was used, while dual- and quad-core compilations used “time make -j 3” and “time make -j 5”, respectively.
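The job counts above follow a cores-plus-one pattern (3 jobs for two cores, 5 for four). As a rough sketch of how that could be scripted, the helper below derives the job count from a core count and times the build. The function names `jobs_for_cores` and `timed_build` are hypothetical and not part of our actual test scripts, and the sketch assumes the source tree has already been configured.

```shell
#!/bin/sh
# Hypothetical helper: map a core count to the make job count used above.
# One core -> 1 job (same as plain "make"); otherwise cores + 1.
jobs_for_cores() {
    cores=$1
    if [ "$cores" -le 1 ]; then
        echo 1
    else
        echo $((cores + 1))
    fi
}

# Hypothetical wrapper: time a build inside an already-configured tree.
# $1 = source directory, $2 = number of cores to target.
timed_build() {
    src_dir=$1
    jobs=$(jobs_for_cores "$2")
    ( cd "$src_dir" && time make -j "$jobs" )
}
```

For example, `timed_build ./wine-0.9.59 4` would run `time make -j 5` inside that directory; the path here is purely illustrative.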

45nm benefits aren’t just for Windows users, as seen with our dual Quad-Cores at 3.0GHz, and though the E7200 proved a smidgen slower than our E6750, the difference is quite small.

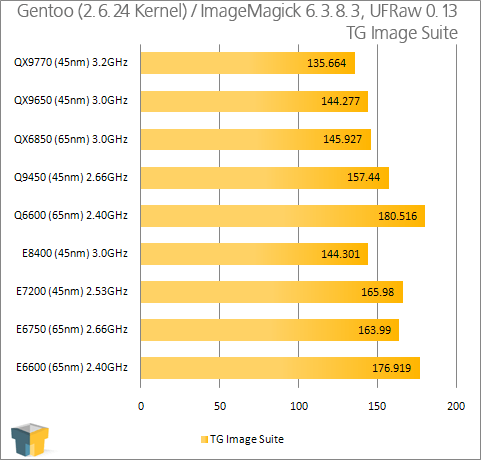

Image Suite

Even though multi-core processors are not new, it’s tricky finding a photo application that handles them properly. Lightroom is one, Photoshop is another. Because it’s difficult to write scripts for the more popular image manipulation applications, we are going to test the single-core benefit of ImageMagick and UFRaw, two command-line-based applications for Linux.

ImageMagick is a popular choice for those who run websites, as it does one thing well: altering images on the fly. Many websites and forums use ImageMagick in the background, which is why its performance is included here. UFRaw, on the other hand, is strictly a RAW manipulation tool that includes both a command-line and a GUI-based version of the application. The command-line version is ideal for converting many images at a time, which is why we use it here.

For our test, our script first calls on UFRaw to convert 100 10-megapixel .NEF camera files, using our settings, to JPEGs 1000×669 in resolution. ImageMagick is then called up to watermark all 100 new JPEGs and also to create thumbnails of each. This entire process is similar to how we convert/watermark our photos here. An example snippet is below.

ufraw-batch --exif --wb=auto --exposure=0.60 --size=1000,670 --gamma=0.40 --linearity=0.04 --compression=90 --out-type=jpeg --out-path=../files/ *.nef;

composite -gravity SouthEast -geometry 254x55+3+3 whitewatermark.png 001.jpg ~/Output/001.jpg;
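The composite command above handles a single file. A minimal sketch of how the watermark and thumbnail steps might be looped over a whole folder follows. The function names, the `_thumb` filename suffix, and the 200x200 thumbnail size are assumptions made for illustration, not our actual settings. Setting `DRY_RUN=1` prints the commands rather than running them.

```shell
#!/bin/sh
# Run a command, or just print it when DRY_RUN=1 (hypothetical helper).
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Derive a thumbnail filename, e.g. 001.jpg -> 001_thumb.jpg.
# The naming scheme is an assumption.
thumb_name() {
    base=$(basename "$1" .jpg)
    echo "${base}_thumb.jpg"
}

# Watermark every JPEG in $1 into $2, then create a thumbnail of each.
process_images() {
    in_dir=$1
    out_dir=$2
    for img in "$in_dir"/*.jpg; do
        [ -e "$img" ] || continue   # skip if the glob matched nothing
        name=$(basename "$img")
        thumb=$(thumb_name "$img")
        run composite -gravity SouthEast -geometry 254x55+3+3 \
            whitewatermark.png "$img" "$out_dir/$name"
        # Thumbnail size is an assumed value.
        run convert "$out_dir/$name" -thumbnail 200x200 "$out_dir/$thumb"
    done
}
```

Wrapping the whole call in `time` would then give a single figure for the batch, in the same spirit as the benchmark described here.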

Nothing too surprising here. Overall, the results scale in line with our expectations.

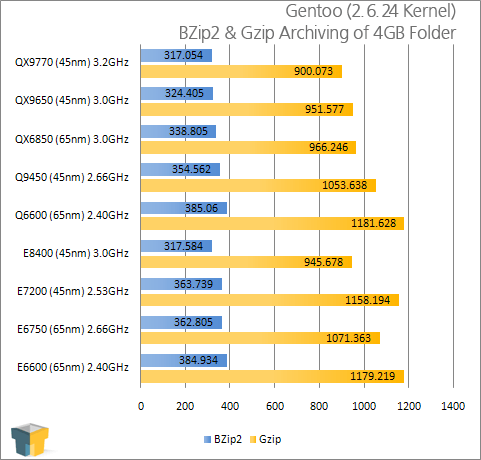

Tar Archiving

To help expand our Linux performance testing, we are now including Tar as a benchmark. For the test, we take a 4GB folder with numerous files within and compress it.

Because both Gzip and Bzip2 are popular solutions for Linux users, we are using both in our tests here. Default options are used for both compressors, with the simple syntax: tar zcf Archive.tar Archive/ for Gzip and tar jcf Archive.tar Archive/ for Bzip2.

Is it just me, or is Gzip slow? Regardless of that, Tar archiving comes down to a simple concept: the faster the CPU, the faster the process. If only multi-threading were possible!
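A minimal sketch of how both compressors could be timed with that syntax is shown below. The function names are hypothetical, elapsed time is measured in whole seconds with `date`, and the `.tar.gz` and `.tar.bz2` output names in the usage note are our own choice for illustration.

```shell
#!/bin/sh
# Hypothetical helper: compress directory $2 into archive $3.
# $1 is the tar compressor flag: "z" for Gzip, "j" for Bzip2.
compress_dir() {
    flag=$1
    src=$2
    archive=$3
    tar "${flag}cf" "$archive" "$src"
}

# Hypothetical helper: run compress_dir and report elapsed whole seconds.
time_compress() {
    start=$(date +%s)
    compress_dir "$1" "$2" "$3"
    end=$(date +%s)
    echo "$1: $((end - start))s"
}
```

Calling `time_compress z Archive/ Archive.tar.gz` followed by `time_compress j Archive/ Archive.tar.bz2` would print one elapsed-time line per compressor.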
