
Intel Opens Up About Larrabee

Intel today lifts part of the veil on its upcoming Larrabee architecture, giving us a better understanding of how it is implemented, how it differs from a typical GPU, why it benefits from taking the ‘many cores’ route, how its performance scales and, of course, what else it has in store.

Larrabee Breakdown

During the briefing, Intel also unveiled Larrabee diagrams and a good deal of information on what makes the architecture innovative. Unfortunately, we are unable to publish most of what we were given, but I expect that to change either next week at SIGGRAPH or later this month at Intel’s Developer Forum.

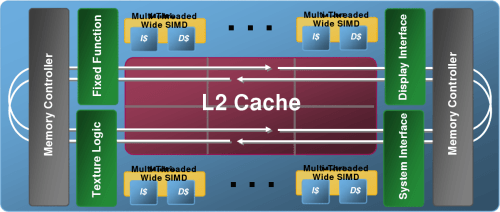

The block diagram shows how Larrabee is structured, though I should note that it is unlikely to reflect the actual scale of each component. The center of the processor is made up of the L2 cache, which is shared among all of the cores and will likely grow in capacity depending on how many cores are implemented.

The multi-threaded cores sit above and below the L2 cache, and are connected to one another, to the memory controller and to the fixed function logic via a 1024-bit wide bidirectional ring bus (512 bits in each direction). Each core offers four threads of execution and includes a 32KB instruction cache and a 32KB data cache.
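To put the ring bus figures in perspective, here’s a minimal Python sketch of a bidirectional ring. It assumes, purely for illustration, that each core, the memory controller and the fixed function logic occupies one stop on the ring; the function names and the 16-stop example are hypothetical, and Intel hasn’t disclosed hop latencies or clock speeds. The only figure taken from the briefing is the 512-bit width per direction.

```python
RING_BITS_PER_DIRECTION = 512  # from the briefing: 512 bits each way (1024 total)


def bytes_per_direction(bits: int = RING_BITS_PER_DIRECTION) -> int:
    """Bytes one direction of the ring can move in a single transfer."""
    return bits // 8


def ring_hops(src: int, dst: int, num_stops: int) -> int:
    """Fewest hops between two stops when traffic can travel either way round.

    Assumption: one stop per core / memory controller / fixed function block.
    """
    if not (0 <= src < num_stops and 0 <= dst < num_stops):
        raise ValueError("stop index out of range")
    clockwise = (dst - src) % num_stops
    return min(clockwise, num_stops - clockwise)


def worst_case_hops(num_stops: int) -> int:
    """Longest of the shortest paths on a bidirectional ring."""
    return num_stops // 2


if __name__ == "__main__":
    stops = 16  # hypothetical ring size, not a Larrabee figure
    print(bytes_per_direction(), ring_hops(0, 15, stops), worst_case_hops(stops))
```

Because traffic can travel either way, no two stops are ever more than half the ring apart: on a 16-stop ring, stop 0 reaches stop 15 in a single hop rather than fifteen.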

Larrabee’s L2 cache is designed a little differently from the one in a typical desktop CPU. Rather than being ‘banked’, it is divided into subsets, each directly connected to a specific core. When a core reads data that is not being written by other cores, that data is stored in the core’s local subset, which lowers latency and improves bandwidth.
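The local-subset behaviour can be illustrated with a toy model. This is a simplified sketch, not Intel’s design: it assumes a read copies a line into the reading core’s own subset, and a write keeps the line only in the writer’s subset while invalidating copies held elsewhere, a common write-invalidate approach to keeping caches coherent. The class and method names are hypothetical.

```python
from __future__ import annotations


class PartitionedL2:
    """Toy L2 split into one local subset per core (simplified, hypothetical)."""

    def __init__(self, num_cores: int) -> None:
        # Each core's subset is modelled as the set of cache-line addresses it holds.
        self.subsets: list[set[int]] = [set() for _ in range(num_cores)]

    def read(self, core: int, line: int) -> bool:
        """Return True on a hit in the core's own subset; on a miss, fill it locally."""
        hit = line in self.subsets[core]
        self.subsets[core].add(line)
        return hit

    def write(self, core: int, line: int) -> None:
        """Keep the line only in the writer's subset, invalidating other copies."""
        for other, subset in enumerate(self.subsets):
            if other != core:
                subset.discard(line)
        self.subsets[core].add(line)

    def holders(self, line: int) -> list[int]:
        """Cores whose local subset currently holds the line."""
        return [c for c, s in enumerate(self.subsets) if line in s]
```

Cores that only read shared data each end up with a local copy and hit in their own subset on later reads; a write by another core forces those copies to be dropped.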

We were given further information on the cores themselves but, as mentioned earlier, we are unable to share the slides. I can say, however, that each in-order x86 core has both a Scalar Unit and a Vector Unit fed by the instruction decoder. Each unit has its own register file, and both connect to the L1 and L2 caches.
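Since the slides can’t be shown, here’s a rough Python sketch of the arrangement described above: one decoder routing each instruction to either a scalar unit or a 16-lane vector unit, each with its own register file. The opcode names, the eight-register count and the ‘s’/‘v’ prefix scheme are invented for illustration and bear no relation to Larrabee’s actual instruction encoding; only the 16-lane width comes from Intel’s briefing.

```python
from __future__ import annotations

from operator import add, mul

VECTOR_LANES = 16  # from the briefing: each vector unit is 16 lanes wide
NUM_REGS = 8       # hypothetical register count, not a Larrabee figure

# Hypothetical opcodes: an 's' or 'v' prefix selects the unit, the rest the operation.
ALU_OPS = {"add": add, "mul": mul}
UNITS = {"s": "scalar", "v": "vector"}


class ToyCore:
    def __init__(self) -> None:
        # Separate register files for the scalar and vector units.
        self.scalar_regs = [0] * NUM_REGS
        self.vector_regs = [[0] * VECTOR_LANES for _ in range(NUM_REGS)]

    def decode(self, opcode: str) -> tuple[str, str]:
        """Split e.g. 'vadd' into ('vector', 'add')."""
        unit = UNITS.get(opcode[:1])
        op = opcode[1:]
        if unit is None or op not in ALU_OPS:
            raise ValueError(f"unknown opcode {opcode!r}")
        return unit, op

    def execute(self, opcode: str, dst: int, a: int, b: int) -> None:
        unit, op = self.decode(opcode)
        fn = ALU_OPS[op]
        if unit == "scalar":
            self.scalar_regs[dst] = fn(self.scalar_regs[a], self.scalar_regs[b])
        else:
            # One vector instruction applies the same operation to all 16 lanes.
            va, vb = self.vector_regs[a], self.vector_regs[b]
            self.vector_regs[dst] = [fn(x, y) for x, y in zip(va, vb)]
```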

Each core’s Vector Unit is one of Larrabee’s key features, and it works a little differently from other vector units out there. We won’t get into the nitty-gritty, but the features included in its ‘Vector complete instruction set’ give Larrabee high computational density. Each vector unit is 16 lanes wide, and because applications can take advantage of all 16 lanes, together with the other improvements in place, efficiency sits at a comfortable 85%.
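What taking advantage of all 16 lanes means in practice can be shown with some simple arithmetic. The sketch below assumes a loop over n independent elements is split into 16-wide vector instructions, with the unused lanes of the last instruction masked off, and counts how many lane slots do useful work. It only illustrates lane utilization and is not meant to reproduce Intel’s 85% figure; the function names are hypothetical.

```python
from __future__ import annotations

LANES = 16  # from the briefing: each vector unit is 16 lanes wide


def vector_instructions(n_elements: int, lanes: int = LANES) -> int:
    """16-wide instructions needed to cover n elements (ceiling division)."""
    if n_elements < 0:
        raise ValueError("n_elements must be non-negative")
    return -(-n_elements // lanes)


def lane_utilization(n_elements: int, lanes: int = LANES) -> float:
    """Fraction of issued lane slots that do useful work; the tail is masked off."""
    if n_elements <= 0:
        raise ValueError("n_elements must be positive")
    return n_elements / (lanes * vector_instructions(n_elements, lanes))


def tail_mask(n_elements: int, lanes: int = LANES) -> list[bool]:
    """Lane mask for the final instruction: True marks an active lane."""
    if n_elements <= 0:
        raise ValueError("n_elements must be positive")
    active = n_elements % lanes or lanes
    return [i < active for i in range(lanes)]
```

Arrays whose length is a multiple of 16 keep every lane busy, while ragged tails waste part of the final instruction: 100 elements need 7 instructions, or 112 lane slots, for roughly 89% utilization.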

How does Larrabee differ?

That’s as much of the technical detail as I’ll cover for now, so I’ll shift focus briefly to why Larrabee is fundamentally different from typical GPUs. As mentioned earlier, the architecture employs numerous cores that work together to deliver its overall performance, whereas a typical GPU is built around a single core that is large and elaborate in itself. Intel believes that bigger is not better; more is better.

Because each core is based on the x86 architecture, development should be much easier for programmers looking to use the processor to its full potential. C and C++ are widely used to develop all types of software, including games, so the shift to developing for IA (Intel Architecture) shouldn’t be too difficult.

Key features of a Larrabee core include context switching and preemptive multitasking, virtual memory support with page swapping, and fully coherent caches at every level of the cache hierarchy. Intel also touts efficient inter-block communication over the ring bus, which is 512 bits wide in each direction. In layman’s terms, the various components can communicate with each other quickly, resulting in lower latency and higher performance.
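Preemptive multitasking means a running task can be interrupted when its time slice expires, its context saved, and another task scheduled in its place. The textbook round-robin scheduler below illustrates the idea; it is a generic sketch and makes no claim about how Larrabee or its software actually schedules threads. The task names, work units and quantum are hypothetical.

```python
from __future__ import annotations

from collections import deque


def round_robin(tasks: dict[str, int], quantum: int) -> list[tuple[str, int]]:
    """Time-slice tasks preemptively; return the (task, units run) timeline."""
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    queue = deque(tasks.items())  # ready queue in arrival order
    timeline: list[tuple[str, int]] = []
    while queue:
        name, remaining = queue.popleft()
        ran = min(quantum, remaining)
        timeline.append((name, ran))
        remaining -= ran
        if remaining > 0:
            # Time slice expired: the task is preempted, its state (here just
            # the remaining work) is saved, and it rejoins the back of the queue.
            queue.append((name, remaining))
    return timeline
```

With tasks `{"a": 5, "b": 2}` and a quantum of 2, task `a` is preempted twice, so `b` gets its turn instead of waiting for `a` to finish.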

One of the most important features is that although fixed function logic exists, it doesn’t get in the way, which allows for excellent load balancing and general-purpose flexibility. Intel stressed that there is no such thing as a ‘typical workload’ and showed slides to back up the point: different games behave differently during gameplay, with one heavier on rasterization and another heavier on pixel shading. Because Larrabee was designed with this in mind, Intel says the architecture can handle all of these workloads effectively.
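The load-balancing argument can be made concrete with a back-of-the-envelope model. It compares a hypothetical design with units permanently dedicated to each stage against a pool of general-purpose cores that can split any mix of work perfectly. Work is measured in arbitrary units, real hardware has overheads this ignores, and the 8/8 split and both game workloads are invented for illustration.

```python
from __future__ import annotations


def dedicated_frame_time(work: dict[str, float], units: dict[str, int]) -> float:
    """Each stage runs only on units reserved for it, so the busiest stage sets the pace."""
    return max(work[stage] / units[stage] for stage in work)


def pooled_frame_time(work: dict[str, float], total_units: int) -> float:
    """Any unit can take any stage's work (ideal balancing, no overhead)."""
    return sum(work.values()) / total_units


# Hypothetical hardware split: 8 units reserved for each stage, 16 in total.
SPLIT = {"rasterization": 8, "pixel_shading": 8}

# Hypothetical per-frame work for two games with opposite bottlenecks.
GAME_A = {"rasterization": 80.0, "pixel_shading": 16.0}
GAME_B = {"rasterization": 16.0, "pixel_shading": 80.0}

if __name__ == "__main__":
    for name, work in (("Game A", GAME_A), ("Game B", GAME_B)):
        print(name, dedicated_frame_time(work, SPLIT),
              pooled_frame_time(work, sum(SPLIT.values())))
```

In both cases the dedicated split leaves half its units idle while waiting on one stage (10 time units per frame), whereas the pooled cores finish the same work in 6.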
