NVIDIA GeForce RTX 4070 Ti 12GB Creator Review

NVIDIA’s third Ada Lovelace-based GeForce has landed: the RTX 4070 Ti. This artist formerly known as RTX 4080 packs in 12GB of memory, effectively delivering a 50% boost to VRAM gen-over-gen. Our first look at the new GPU focuses on creator workloads: rendering, encoding, and more.

Page 2 – NVIDIA GeForce RTX 4070 Ti: Encoding, Viewport & Final Thoughts

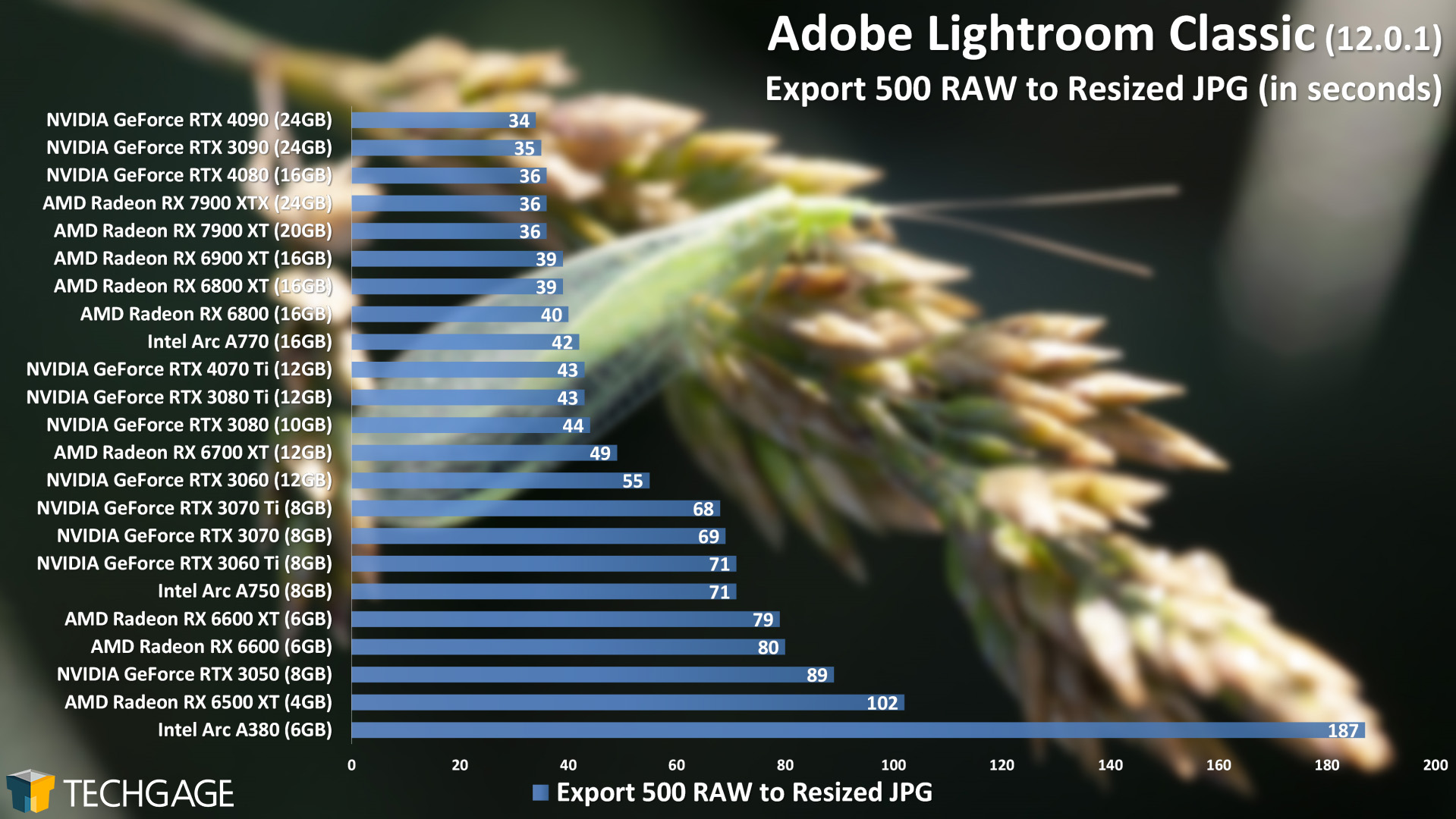

Adobe Lightroom Classic

We begin our look at the non-rendering performance of NVIDIA’s GeForce RTX 4070 Ti with Adobe’s Lightroom Classic, and right off the bat, we can see some interesting scaling. While you might assume the RTX 4070 Ti would place ahead of the RTX 3090 in this test, it actually falls behind GPUs that sit even lower in the stack, including Intel’s Arc A770.

With its 192-bit memory bus, it’s easy to single that out as the reason the RTX 4070 Ti places further behind than it’s expected to, but it could also be the frame buffer that’s acting as the limitation. Similar to how we see the RTX 3060 12GB place ahead of technically superior 8GB GeForces, maybe we would have seen the 4070 Ti jump ahead more if it had been equipped with 16GB.

Ultimately, Lightroom is one test where the scaling won’t matter quite as much as it would in rendering. After exporting 500 RAW images, the differences at the top are mere seconds from each other. It’s interesting to note the scaling differences nonetheless.

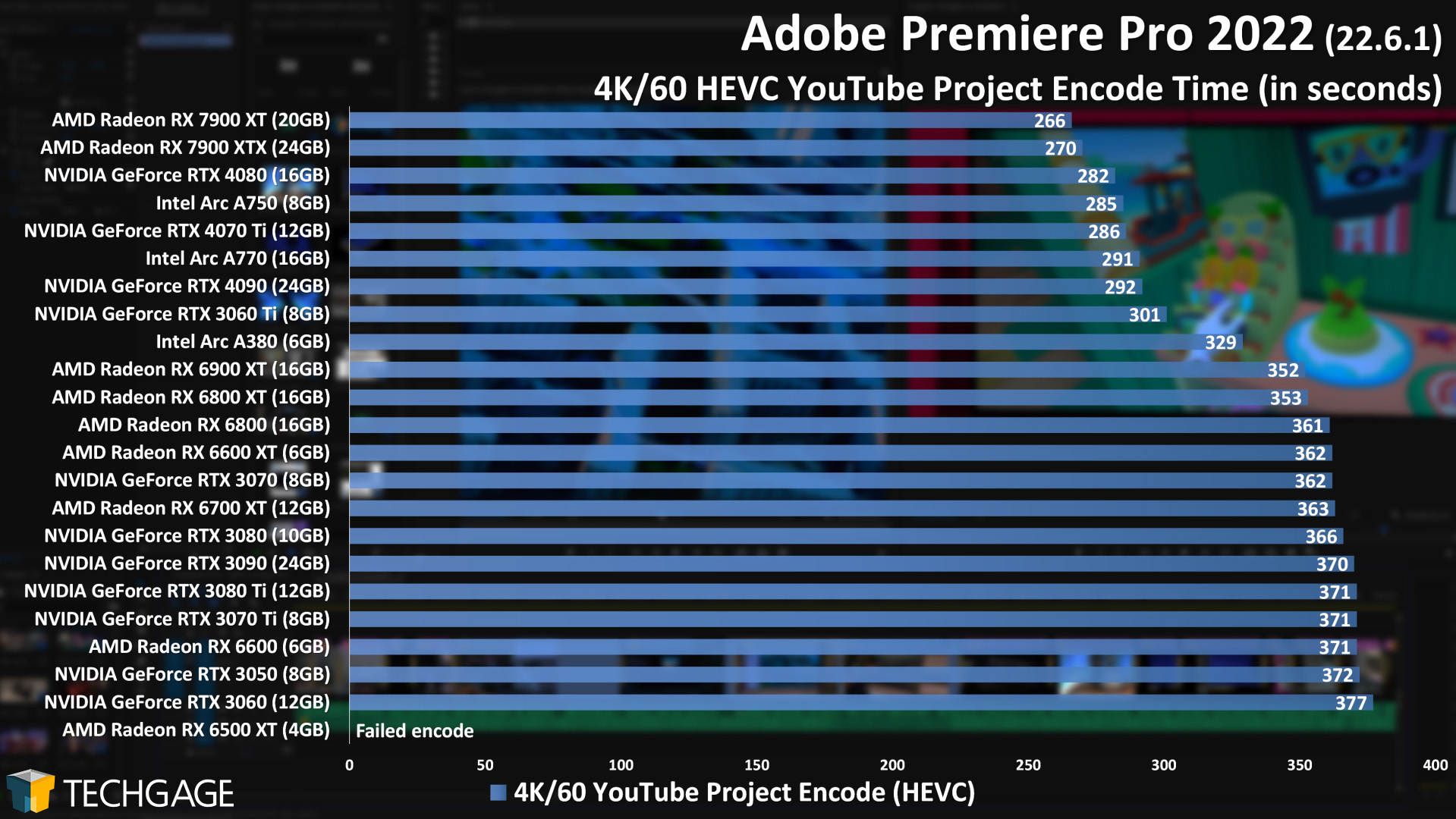

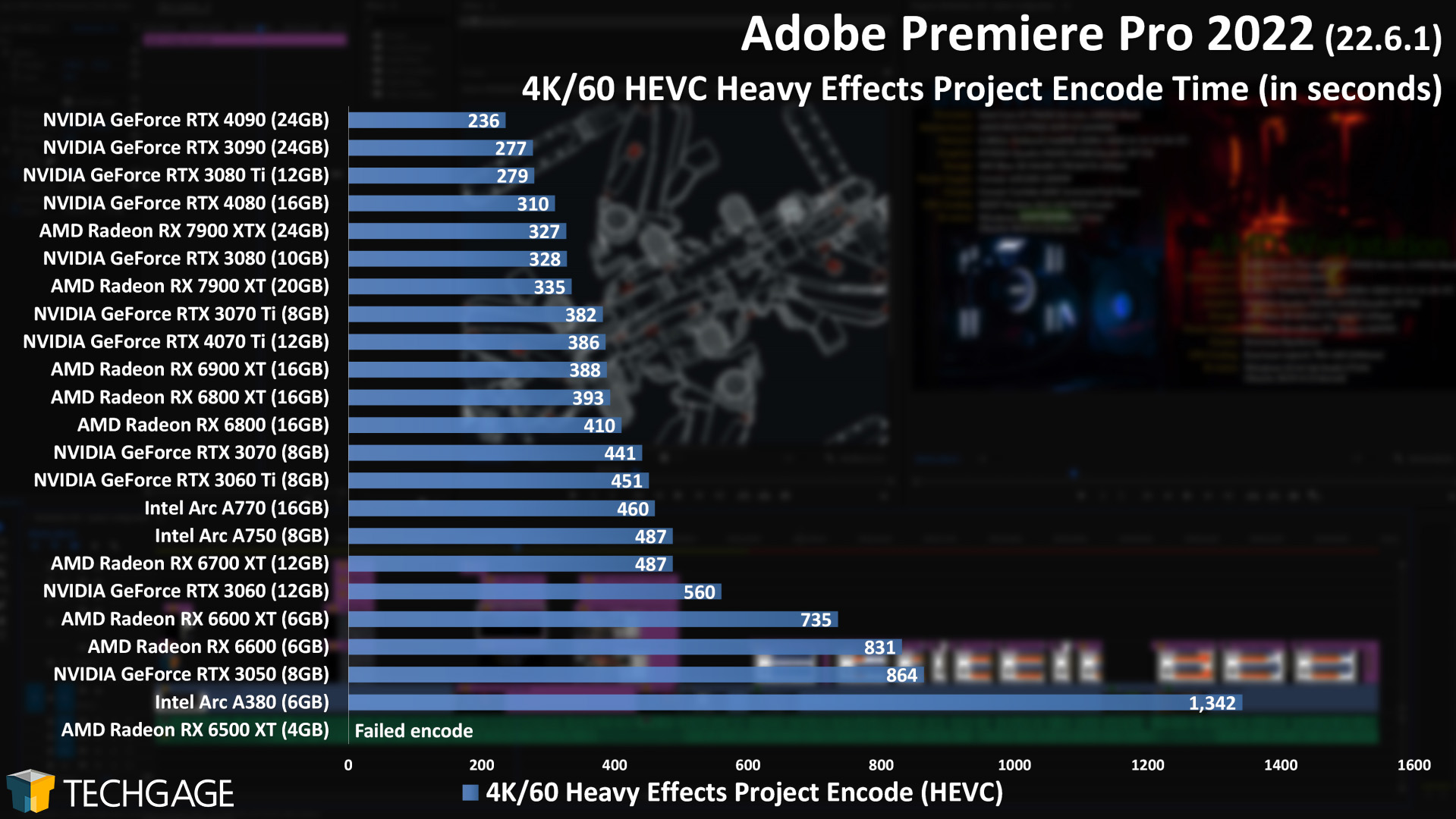

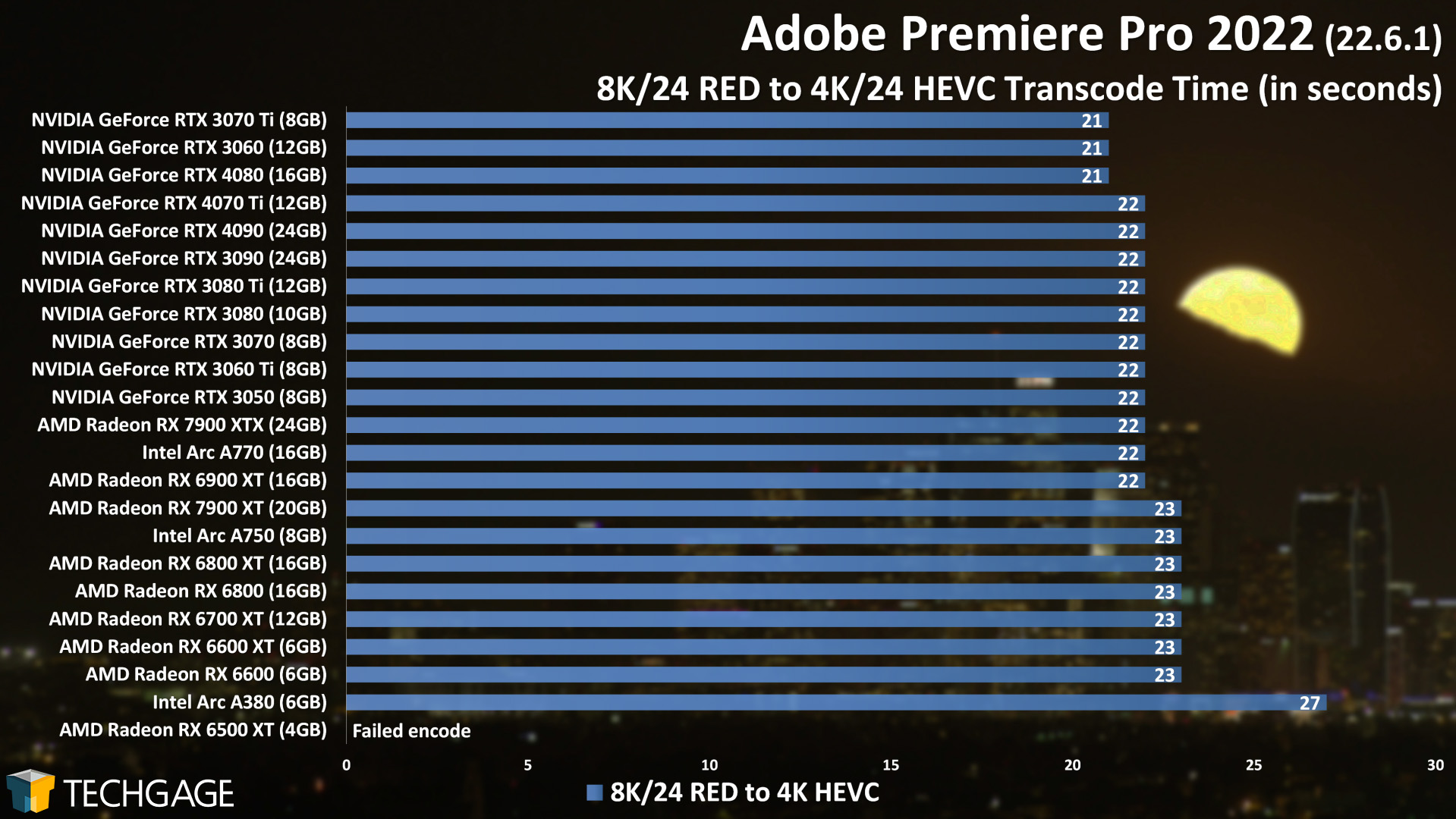

Adobe Premiere Pro

Across these first two Premiere Pro projects, we can glean that AMD’s latest-generation Radeons are really strong at encoding normal projects without many effects, while NVIDIA shows its strengths a bit more when heavier GPU effects are piled on. Even there, though, the $999 Radeon RX 7900 XTX goes toe-to-toe with NVIDIA’s $1,199 RTX 4080.

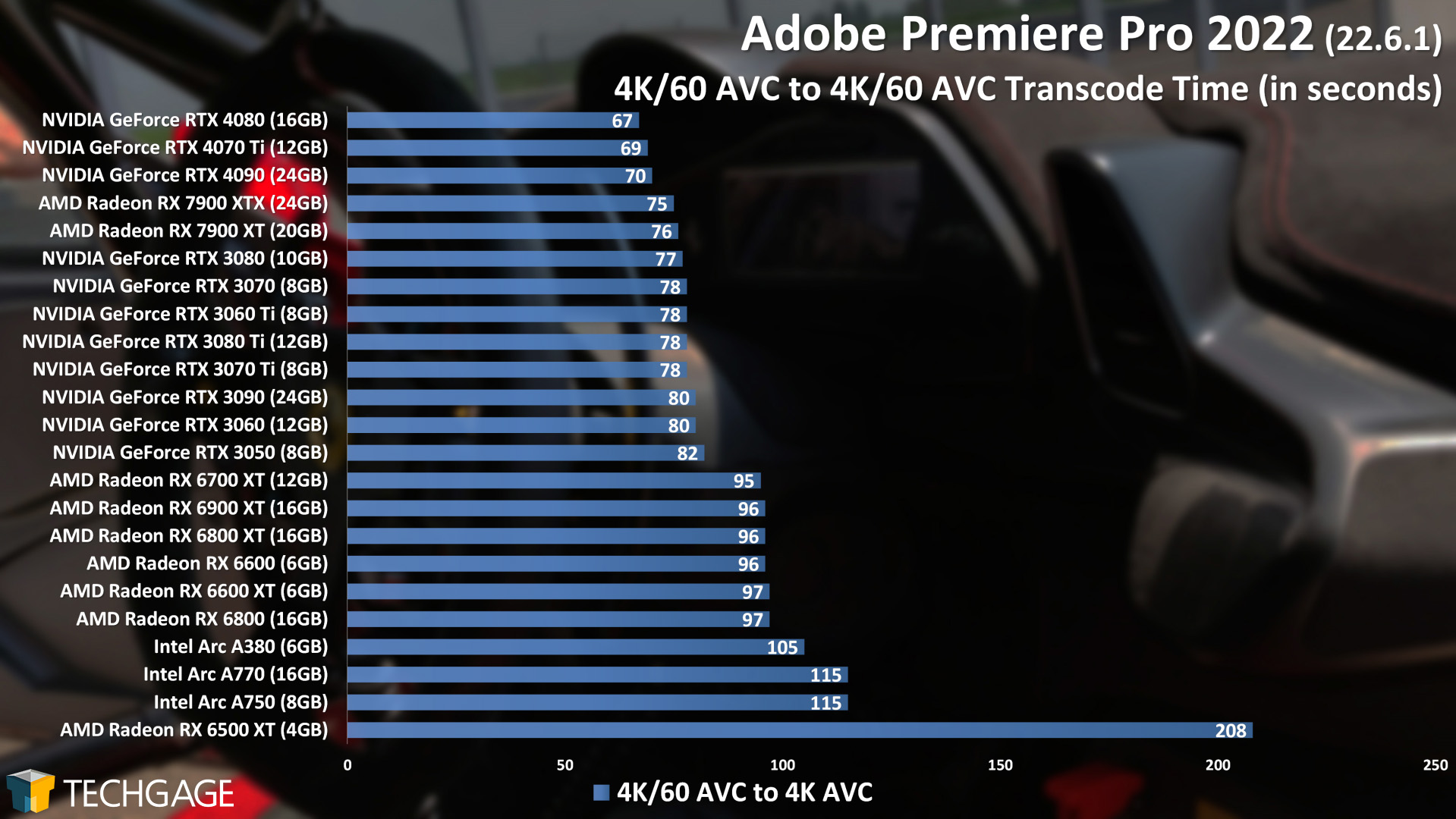

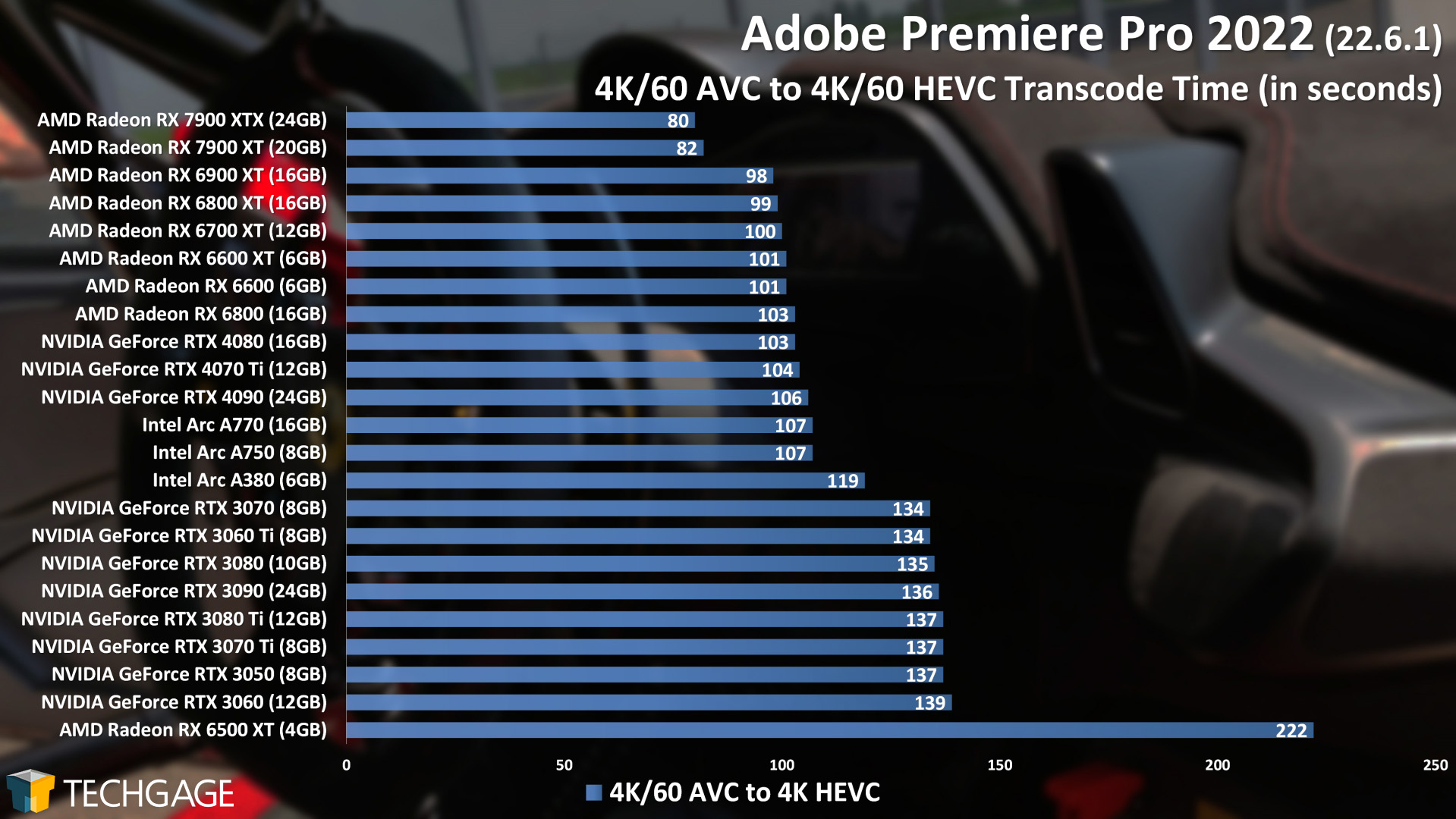

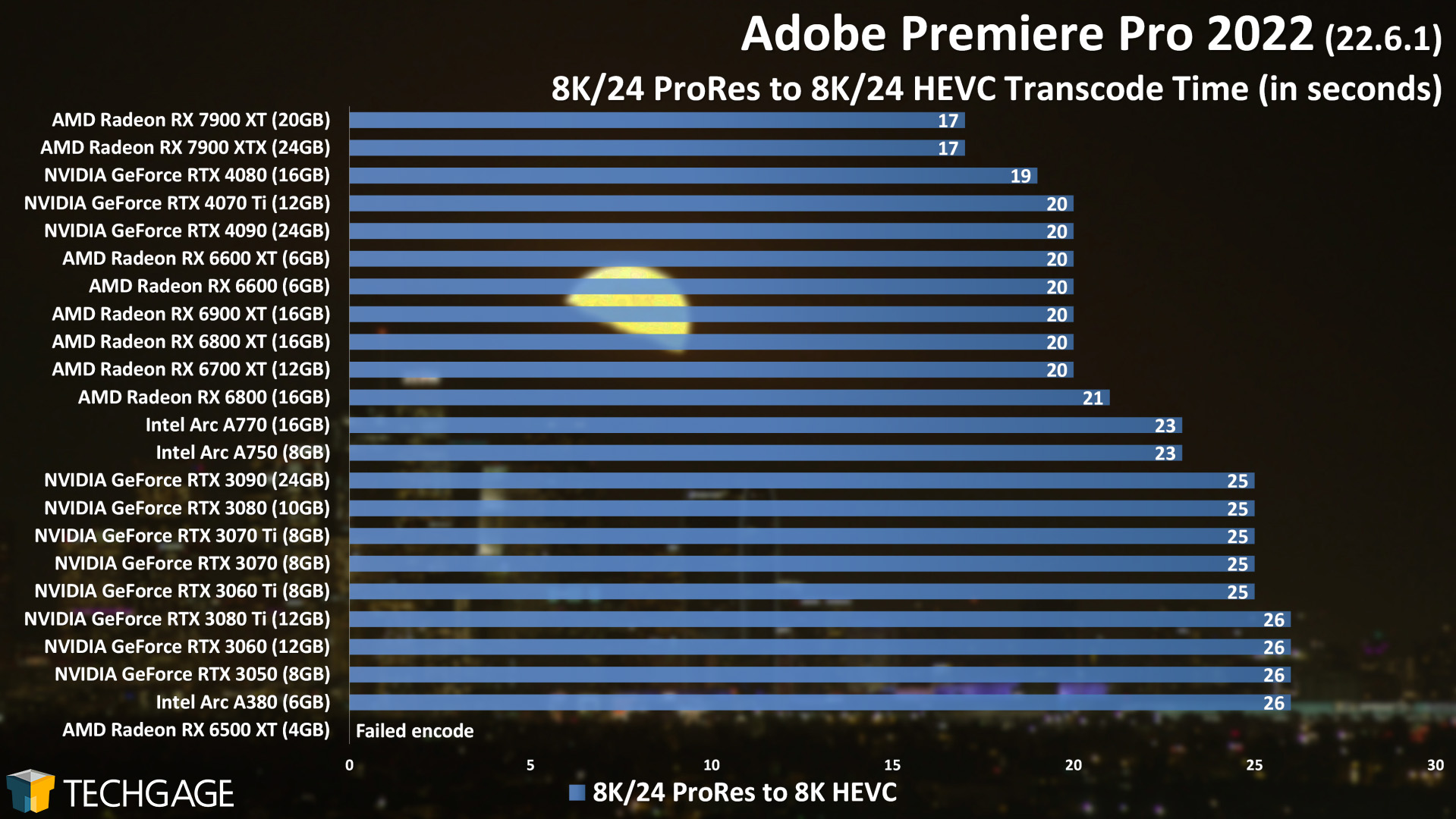

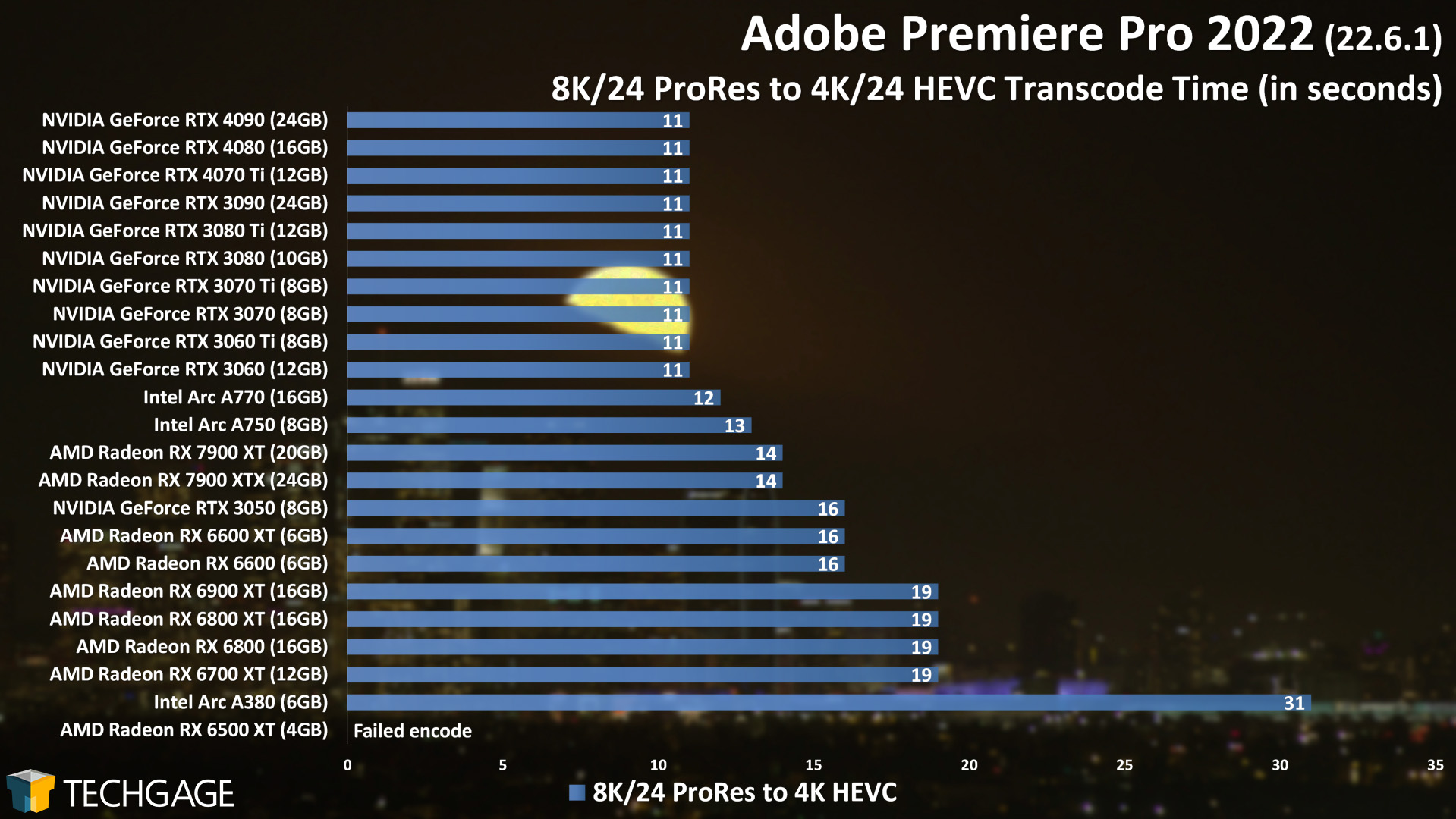

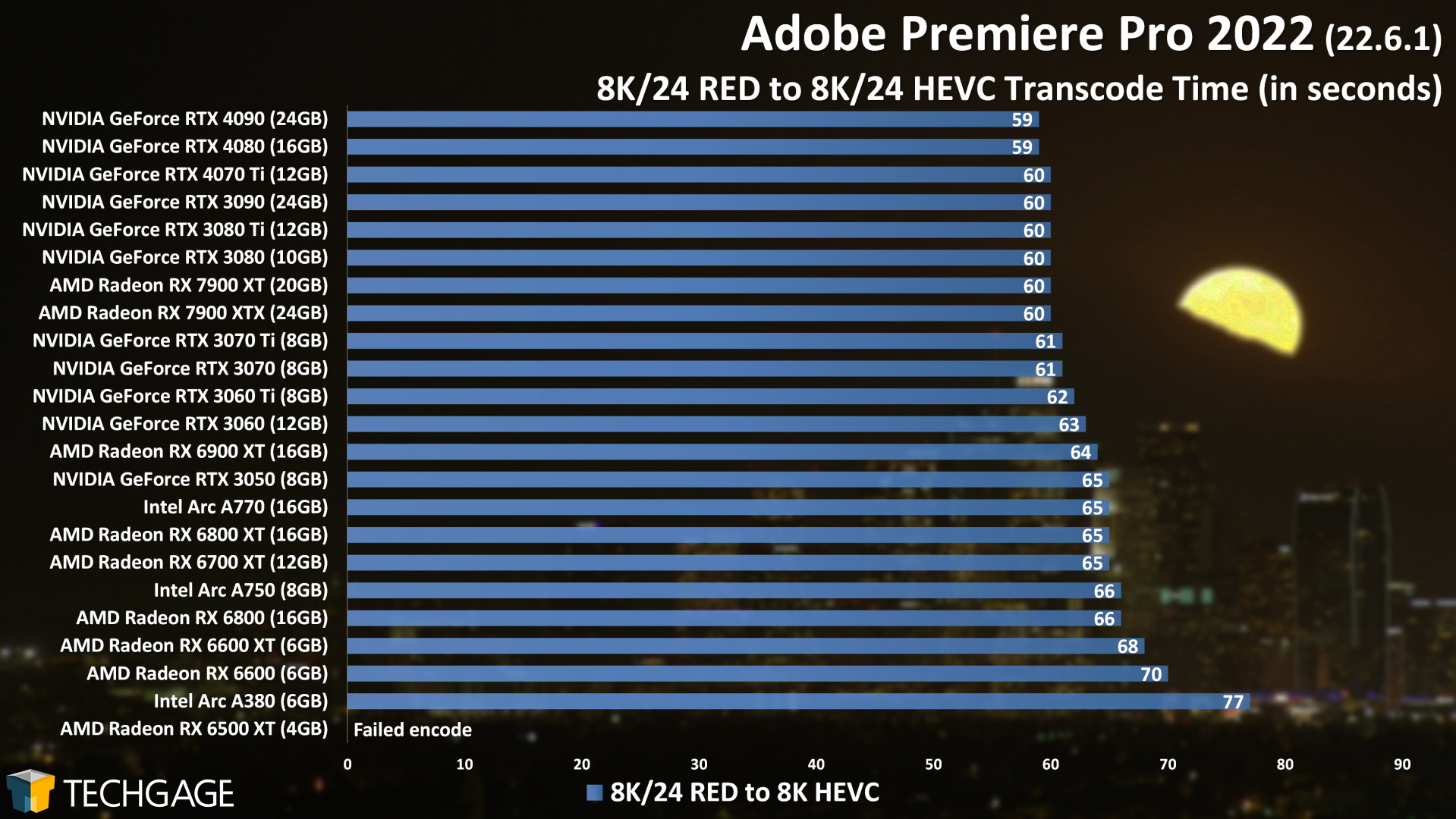

Here are some straightforward transcode tests involving AVC, HEVC, ProRes, and RED:

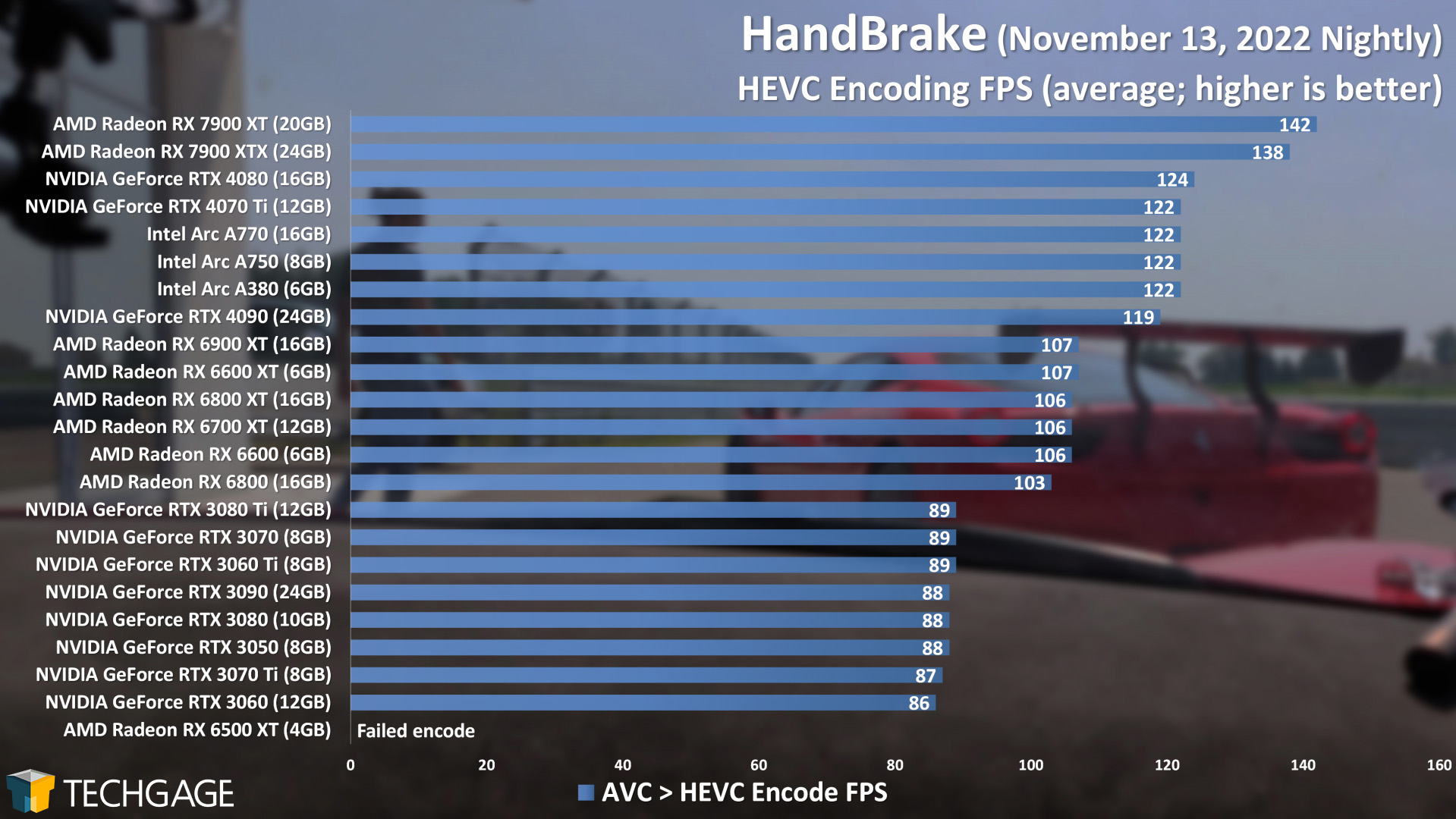

These transcode results highlight the fact that not all video encode tests are built alike. Even when we compare 8K to 8K and 8K to 4K tests with ProRes and RED, each behaves quite a bit differently. Overall, it seems AMD’s latest Radeons have particular strength in HEVC encoding, while NVIDIA’s latest-gen shows similar strength in AVC.

We’re running behind on planned AV1 encode testing across the three vendors, but plan to tackle it soon. The problem has been that so few solutions have offered support for all three vendors, and the concern is that any one of the vendors may not be as optimized as they could be. HandBrake just released a new stable version of the software after nearly a year, but unfortunately, it only includes AV1 support for Intel’s Arc GPUs (which, it must be said, is thanks largely to Intel’s own efforts).

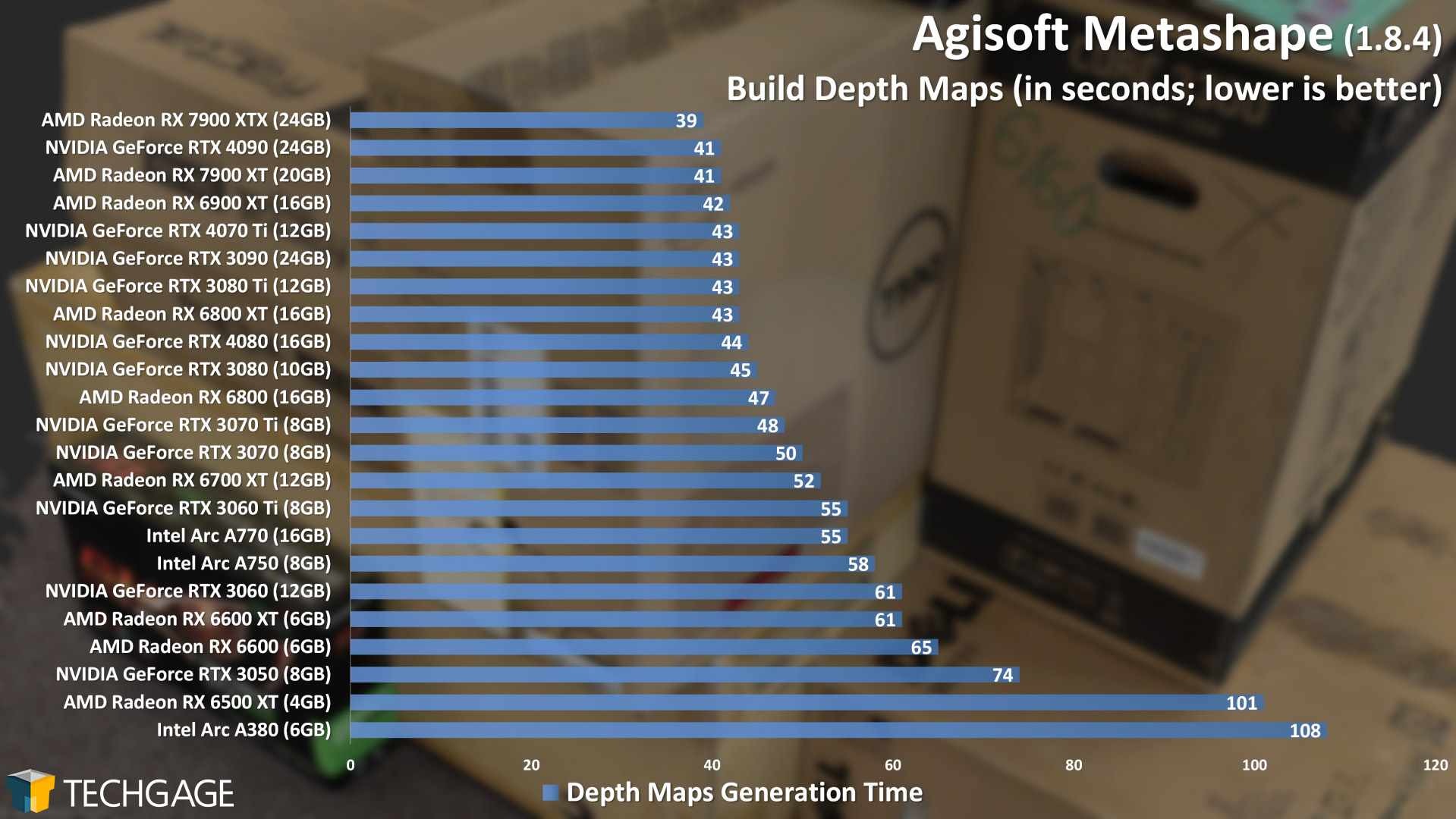

Agisoft Metashape

Image manipulation of any sort can lead to strange variability between GPU models, something we’ve seen in particular from Adobe Lightroom tests in the past. In Agisoft’s Metashape, the RTX 4070 Ti performs quite well… even well enough to surpass the RTX 4080. Of course, though, when the entire top-half of the graph represents differences of mere seconds, it’s not uncommon to see models trip over each other. The 7900 XTX still manages to stand out with a two-second gain over the RTX 4090.

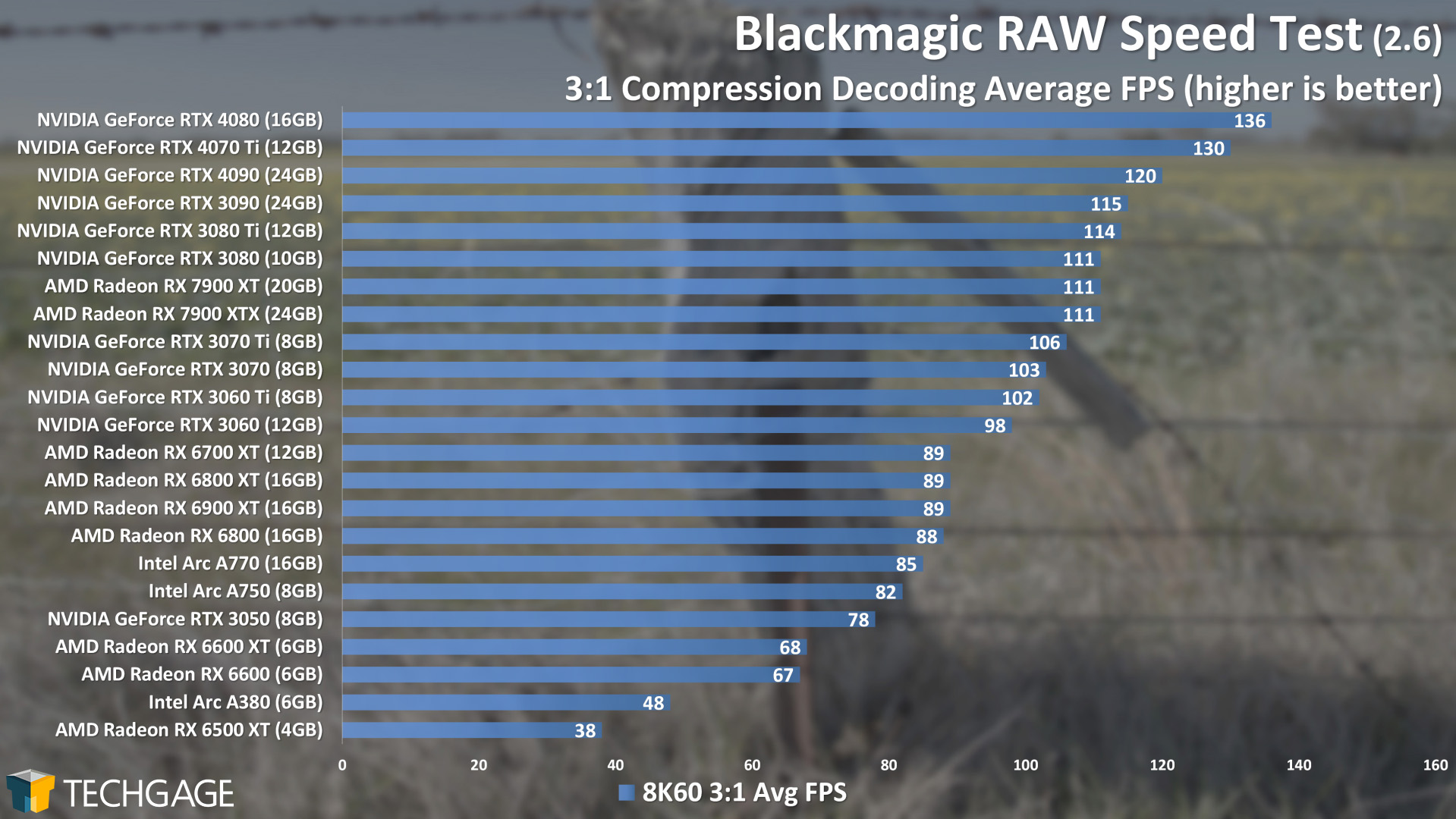

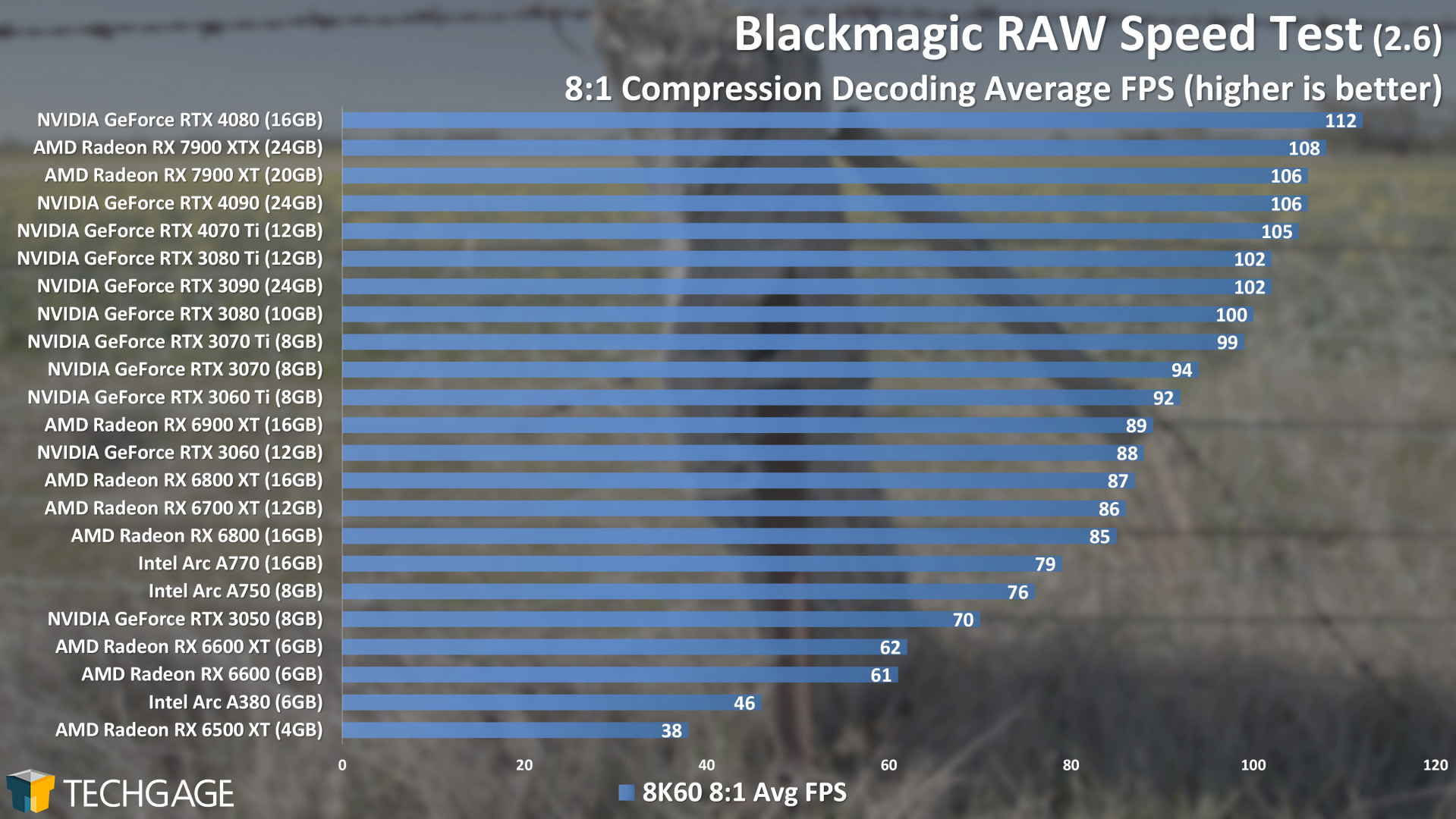

BRAW Speed Test

If we’re looking at 60 FPS footage specifically, then most of the GPUs in this lineup will handle the task fine – at least if we’re dealing with a simple decoding test. When playing back actual projects that include GPU-accelerated effects, faster GPUs are going to deliver notably better performance. For 8K content, a good GPU target seems to be any with more than 8GB of VRAM.

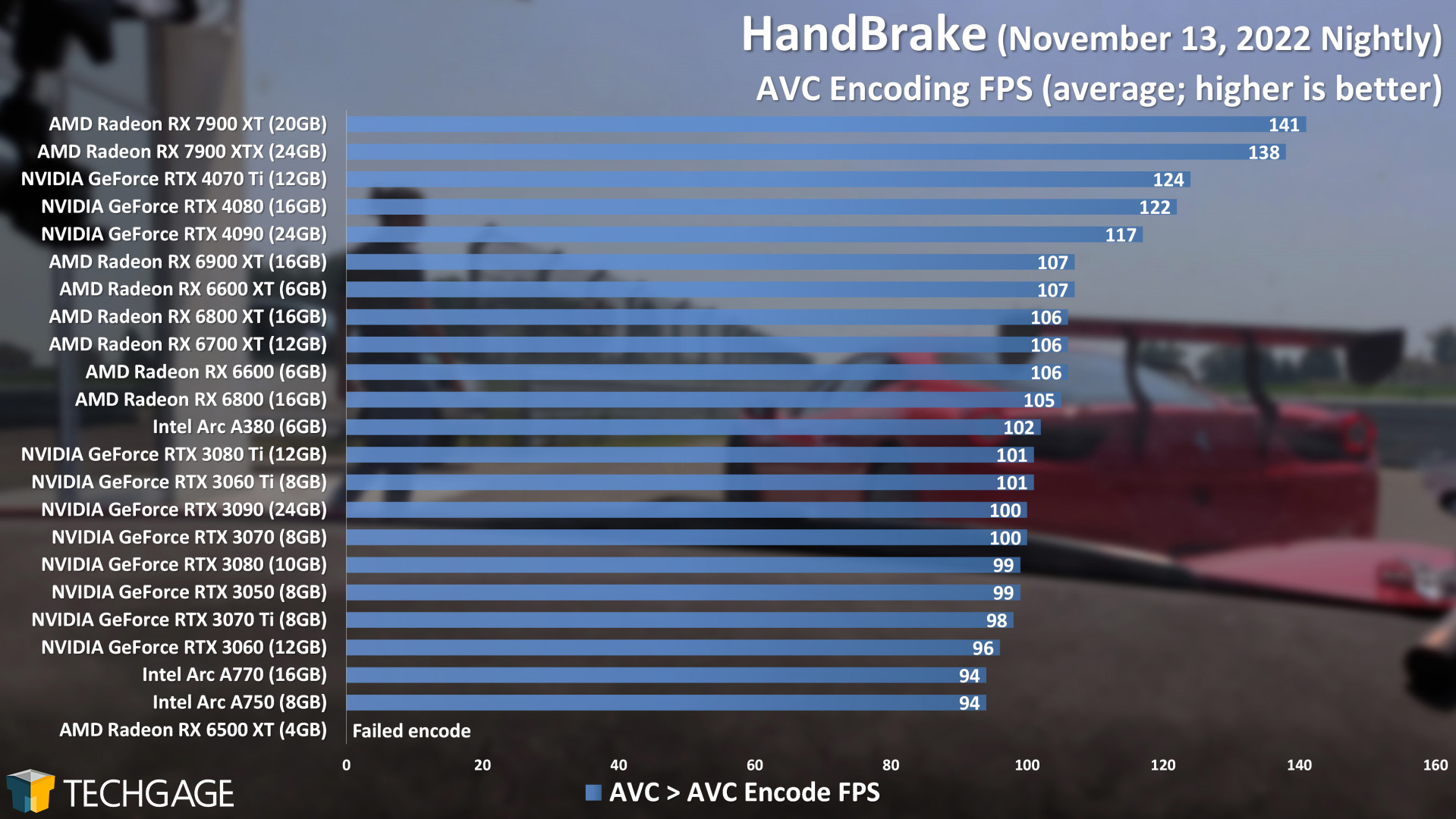

HandBrake

As mentioned earlier, HandBrake just gained a new stable version, but it only supports AV1 encoding on Intel Arc GPUs. So, for now, we’ll continue to test just AVC and HEVC. Looking at these results, it doesn’t take long for one set to stand out above the rest: that of AMD’s Radeon RX 7900-series GPUs. Both released RDNA3 cards soar to the top, while NVIDIA’s Ada Lovelace collection settles in just behind them.

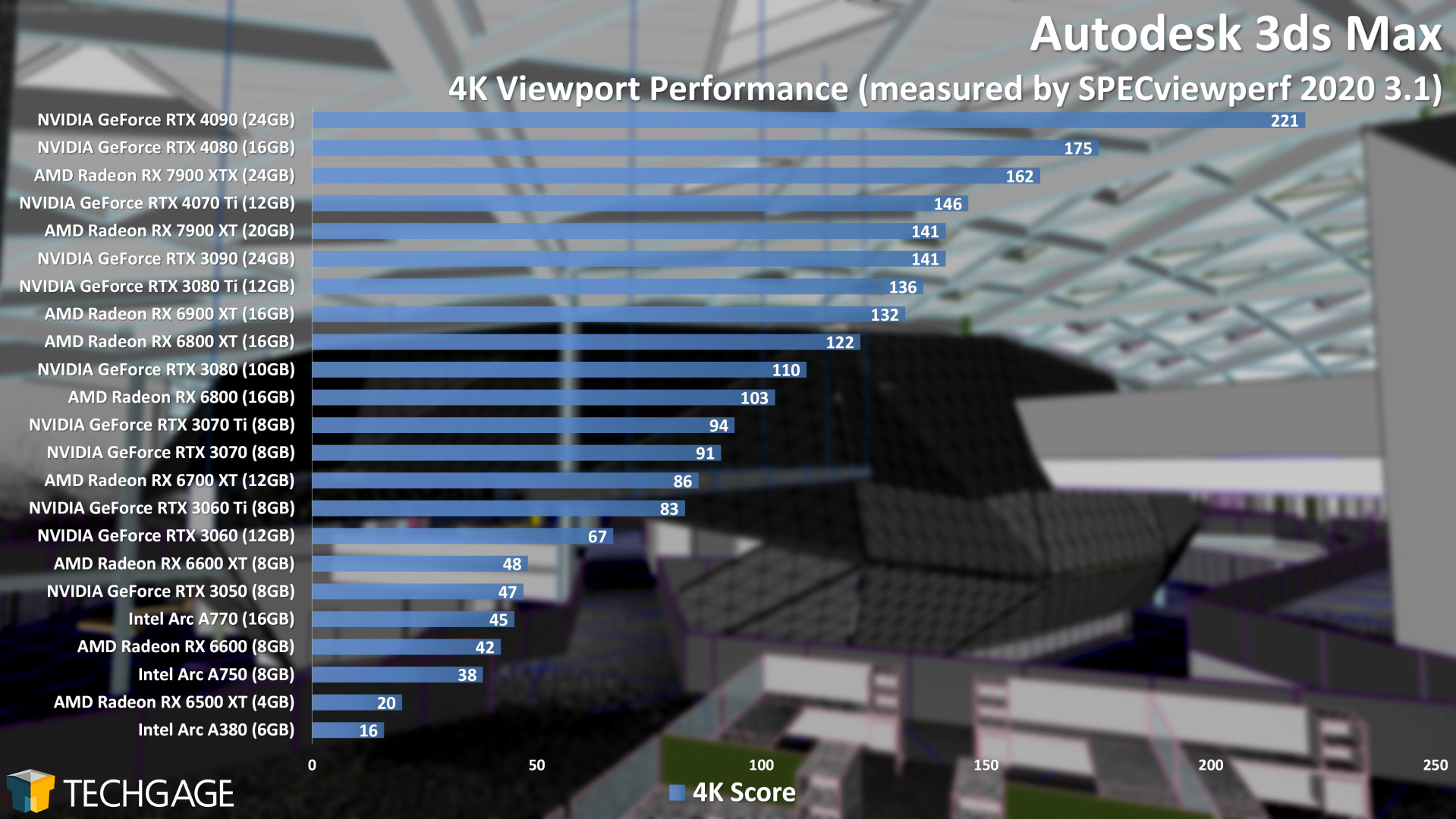

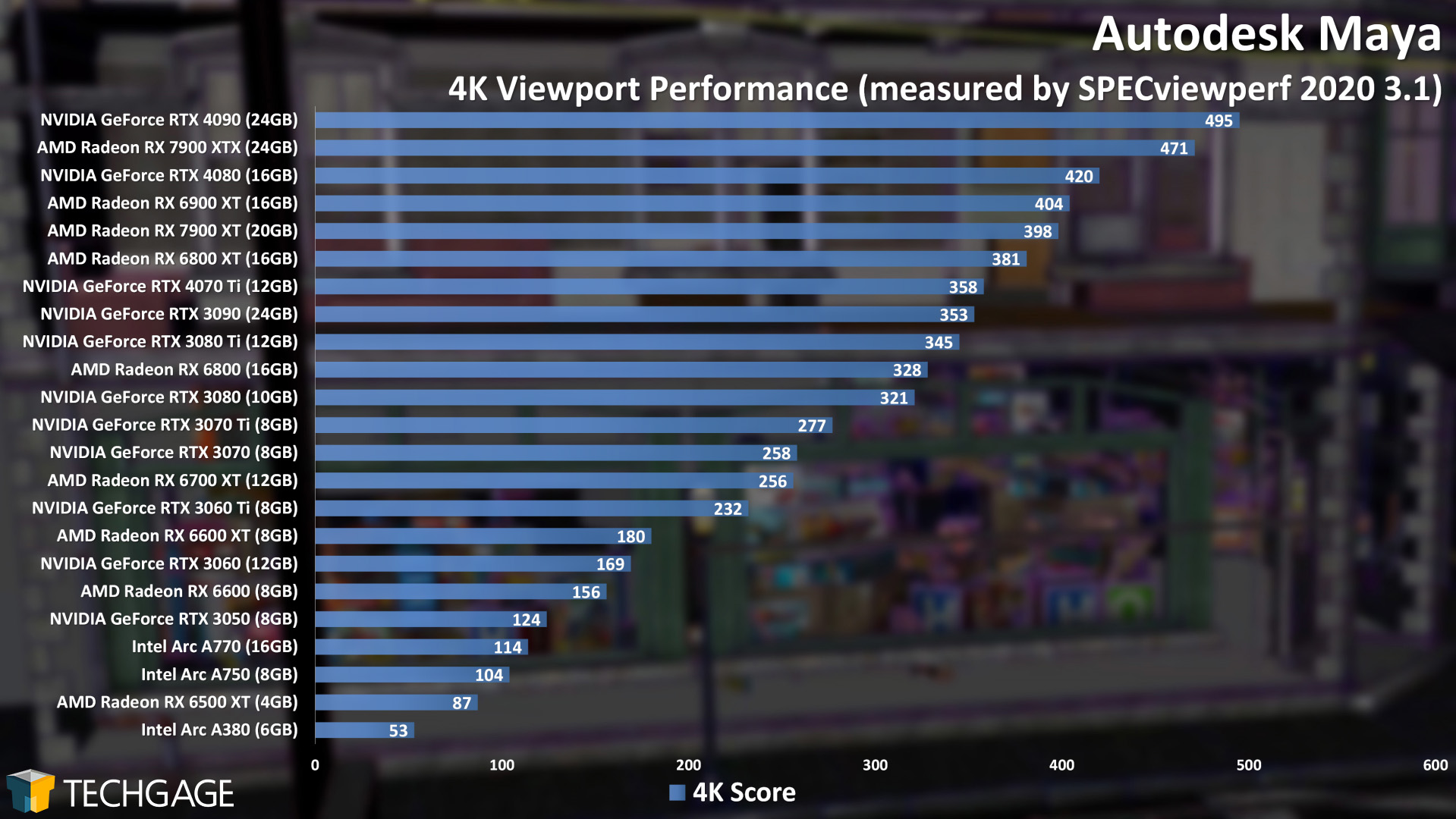

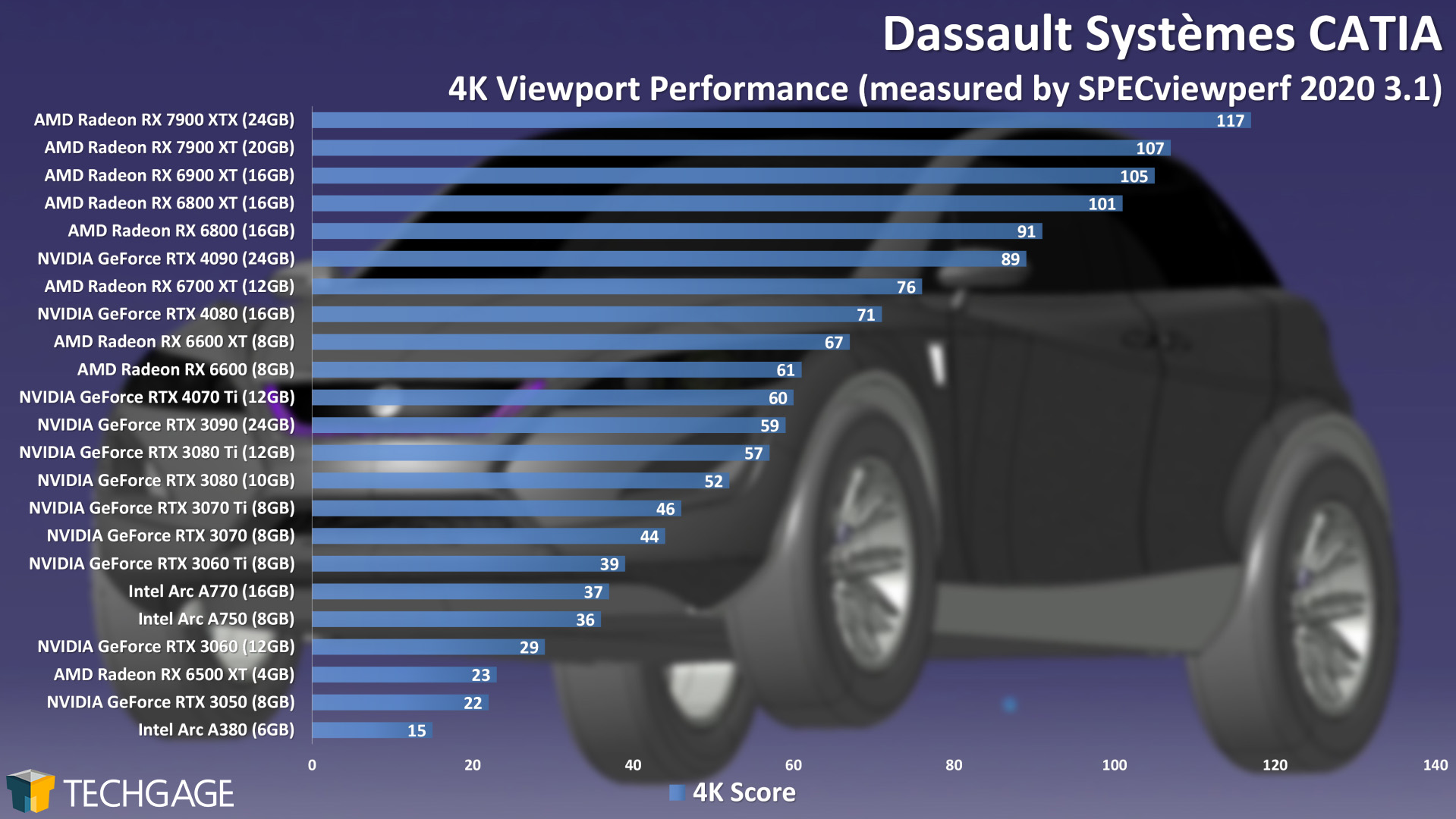

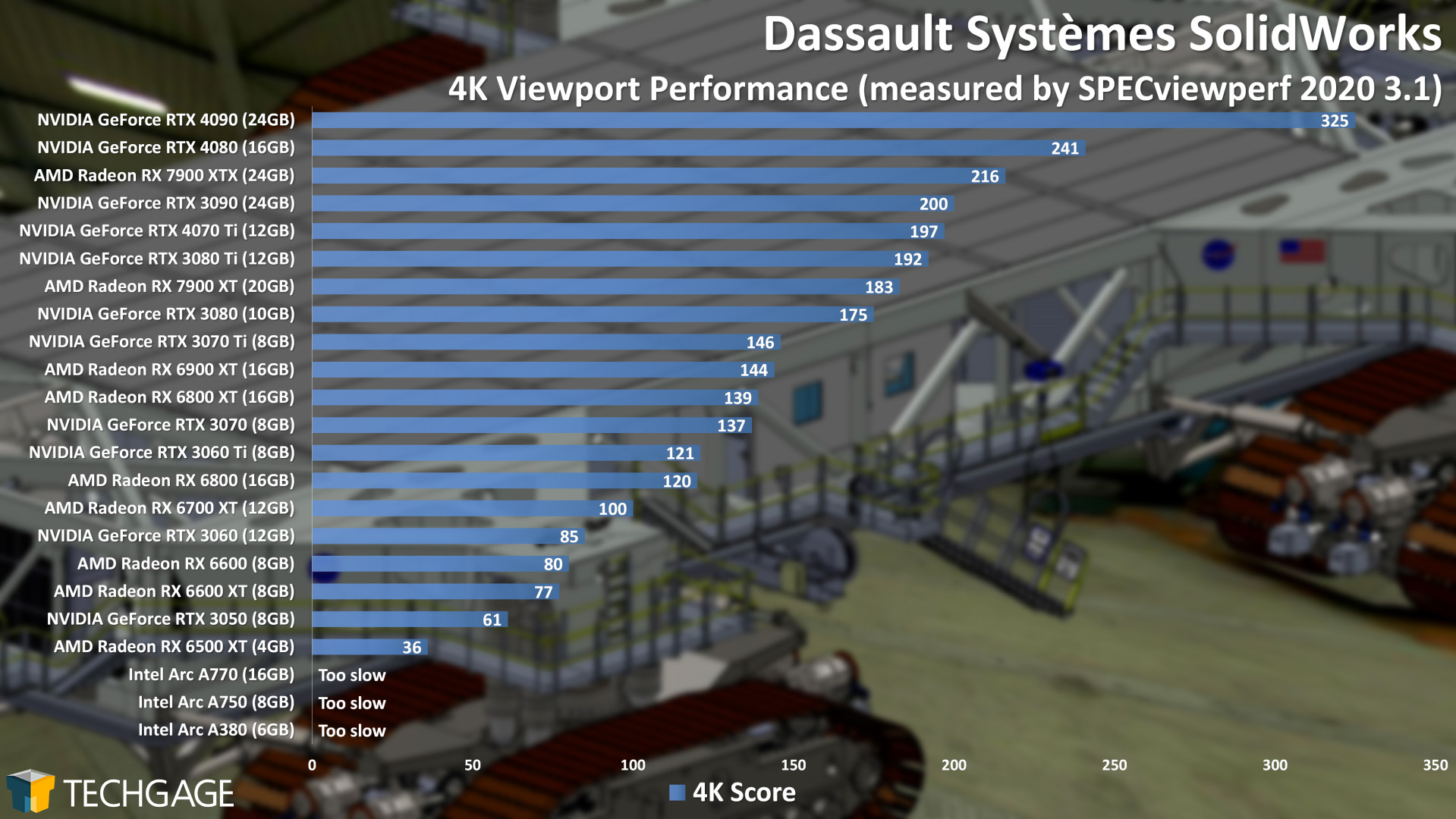

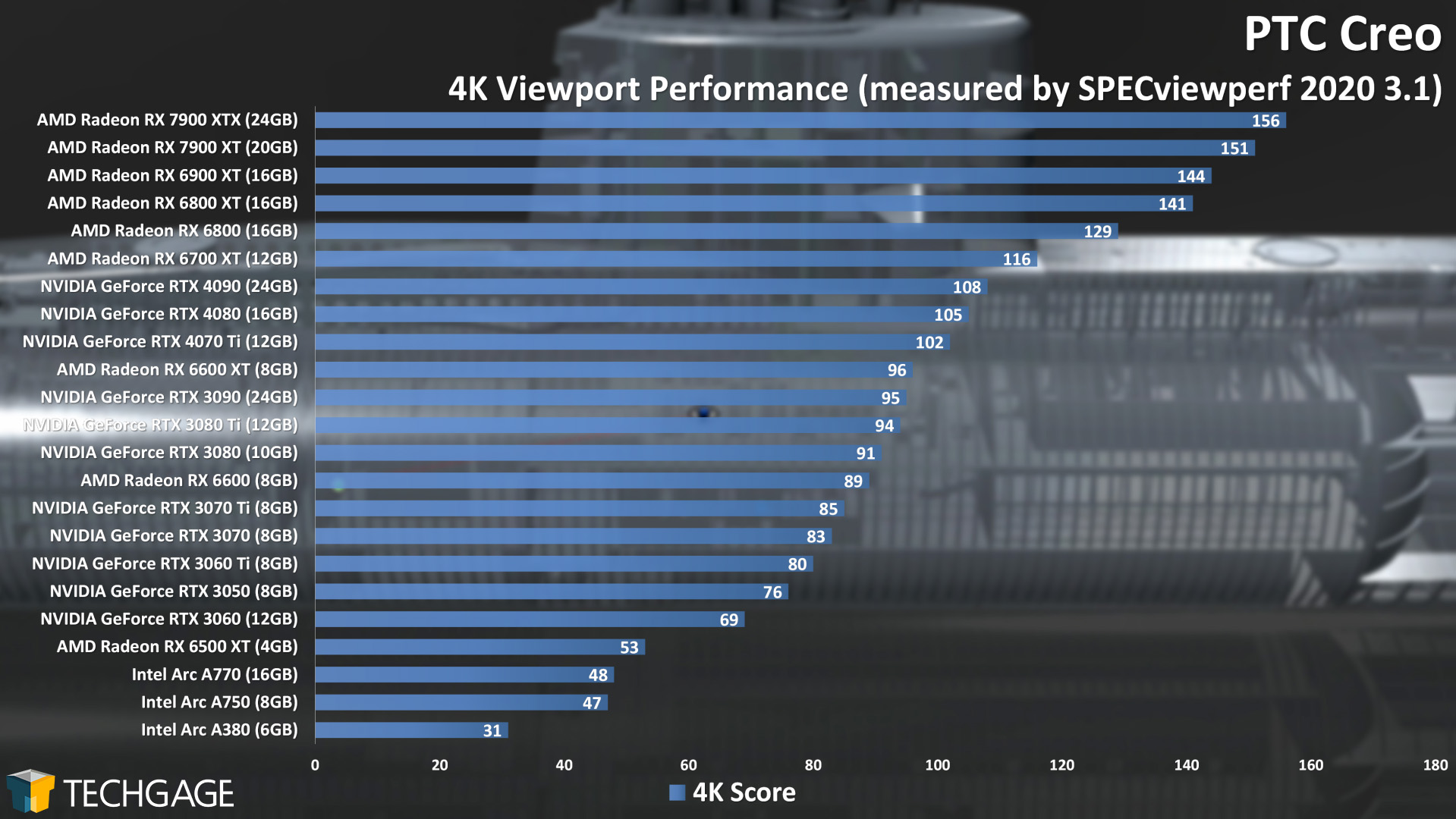

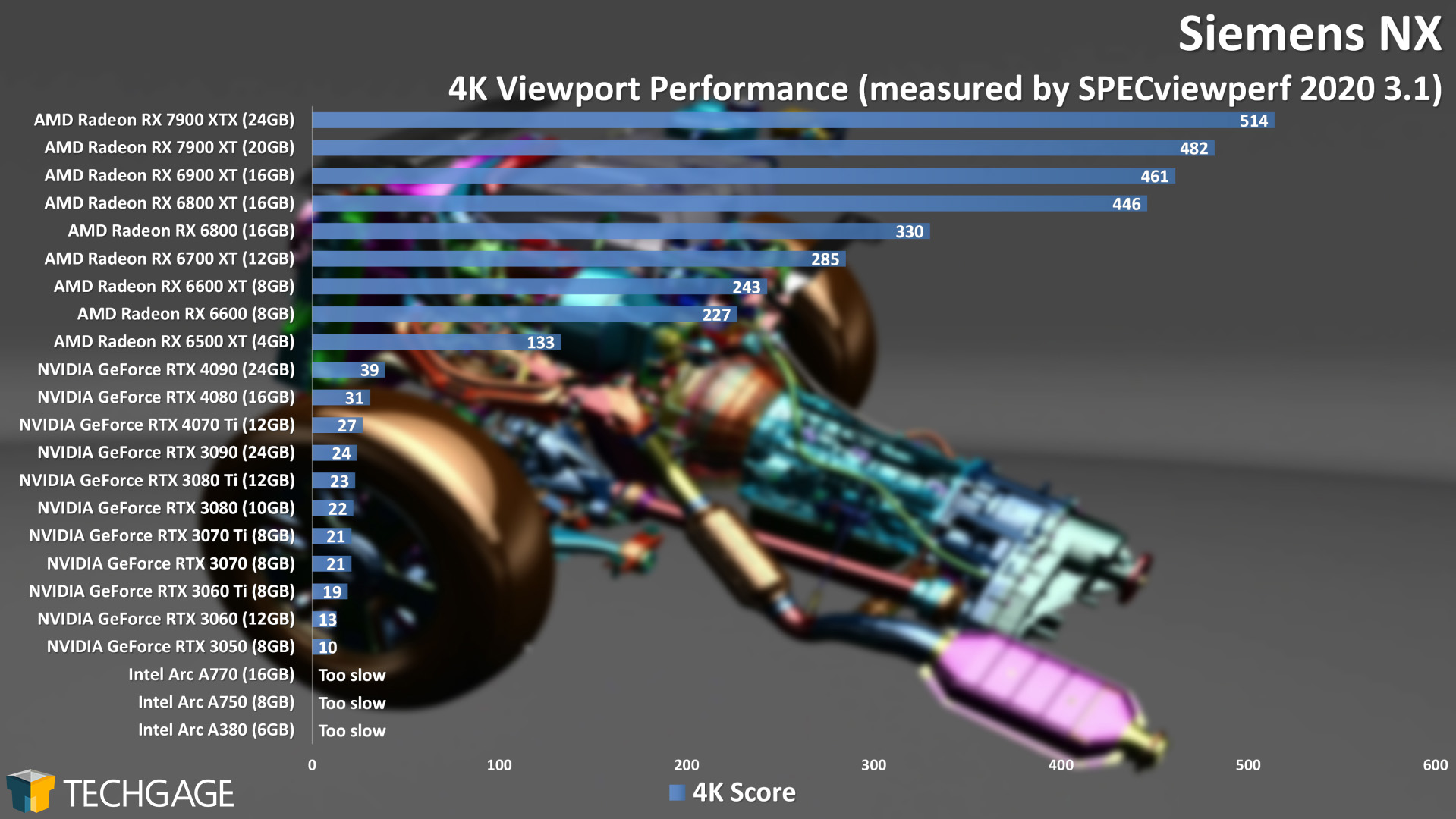

Viewport Performance

As with most of the tests found in this article, the RTX 4070 Ti largely scales as we’d expect it to across these viewport tests. As we discovered in our Radeon RX 7900-series creator article, AMD applied some obvious polish to its latest generation cards along with its Adrenalin drivers, helping both land at the top of multiple charts – like CATIA and Siemens NX. Overall, the RTX 4070 Ti performs well. Beyond viewport, the next concern for choosing a GPU will be rendering performance, which was well taken care of on the previous page.

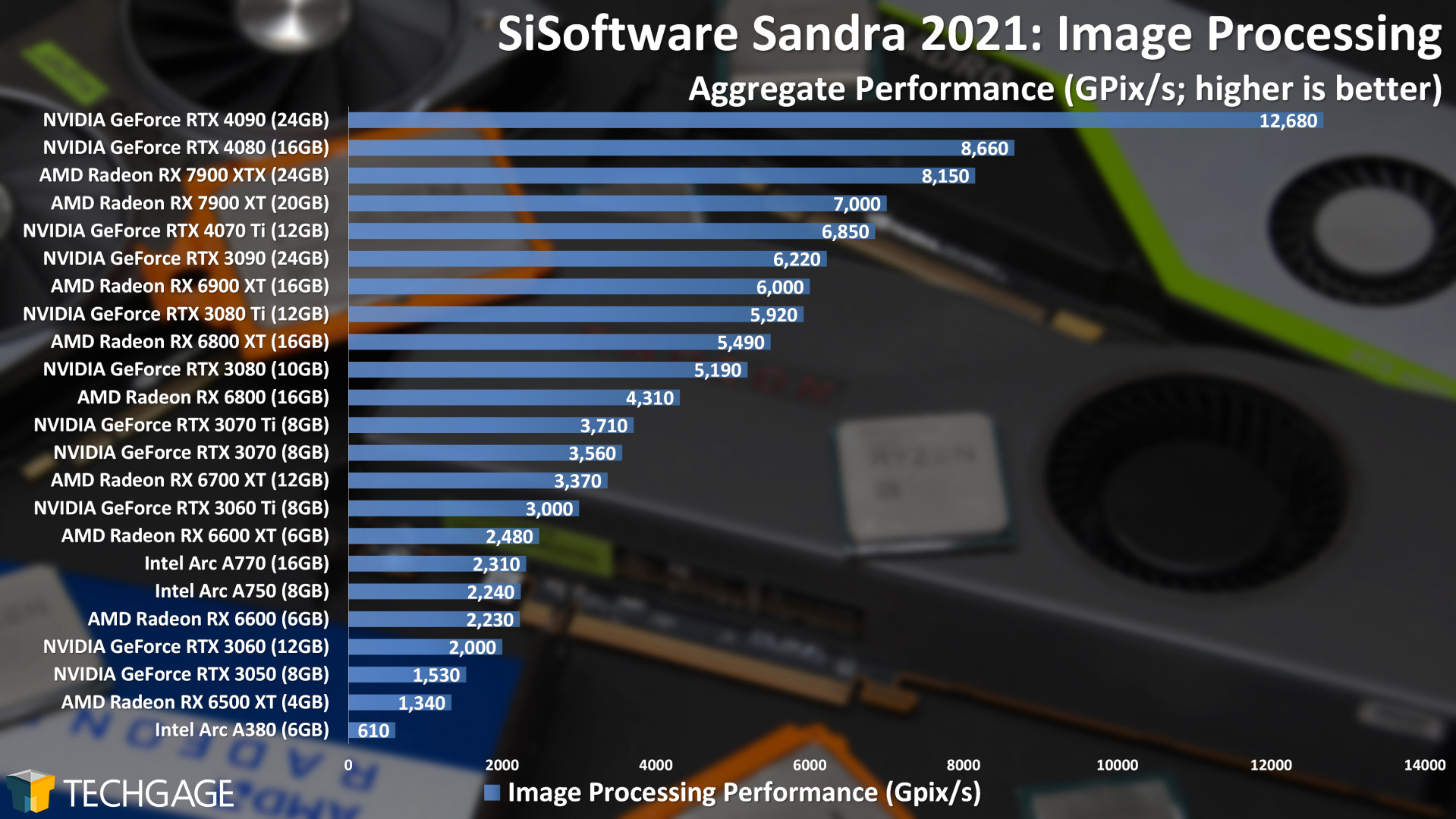

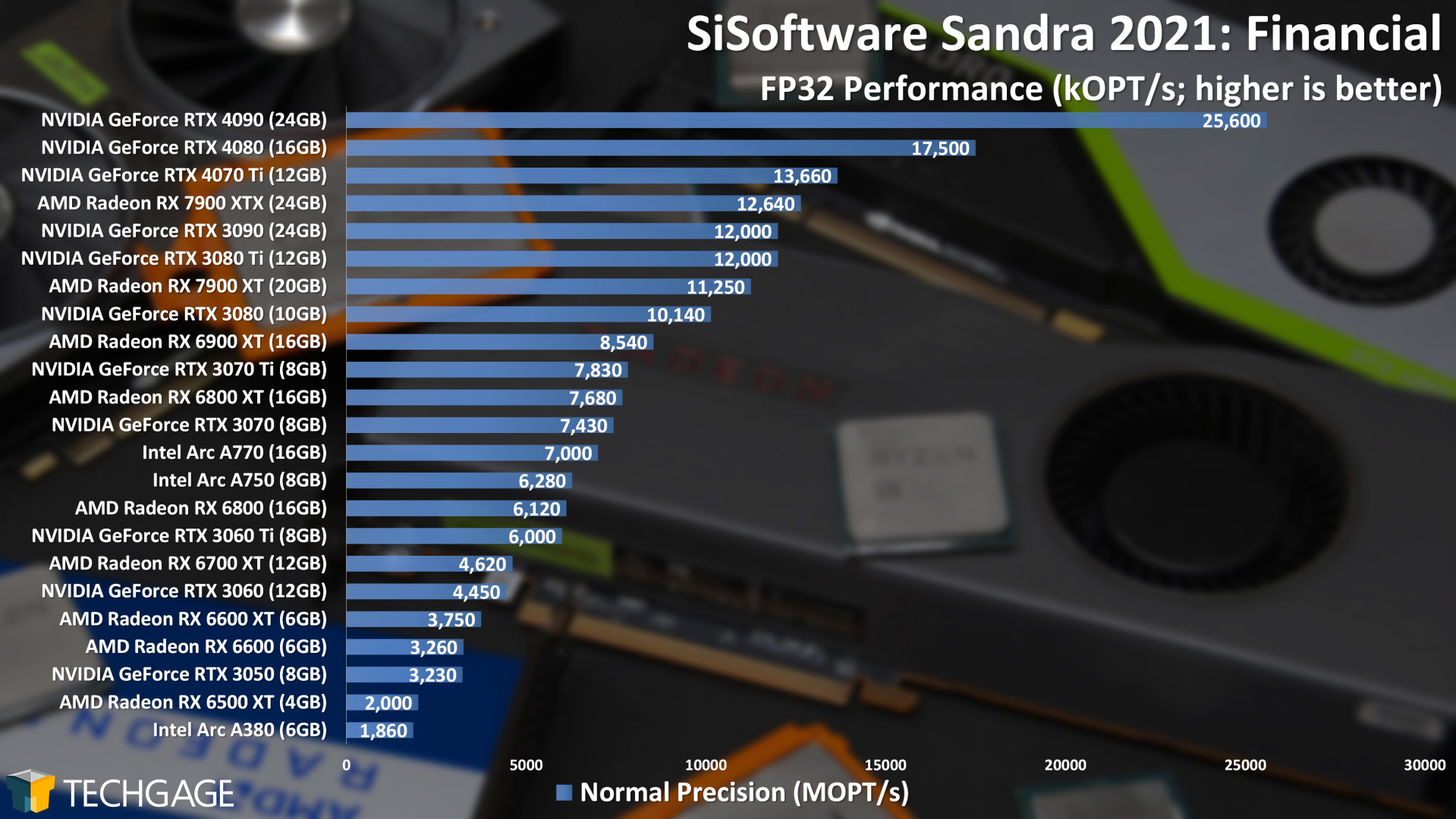

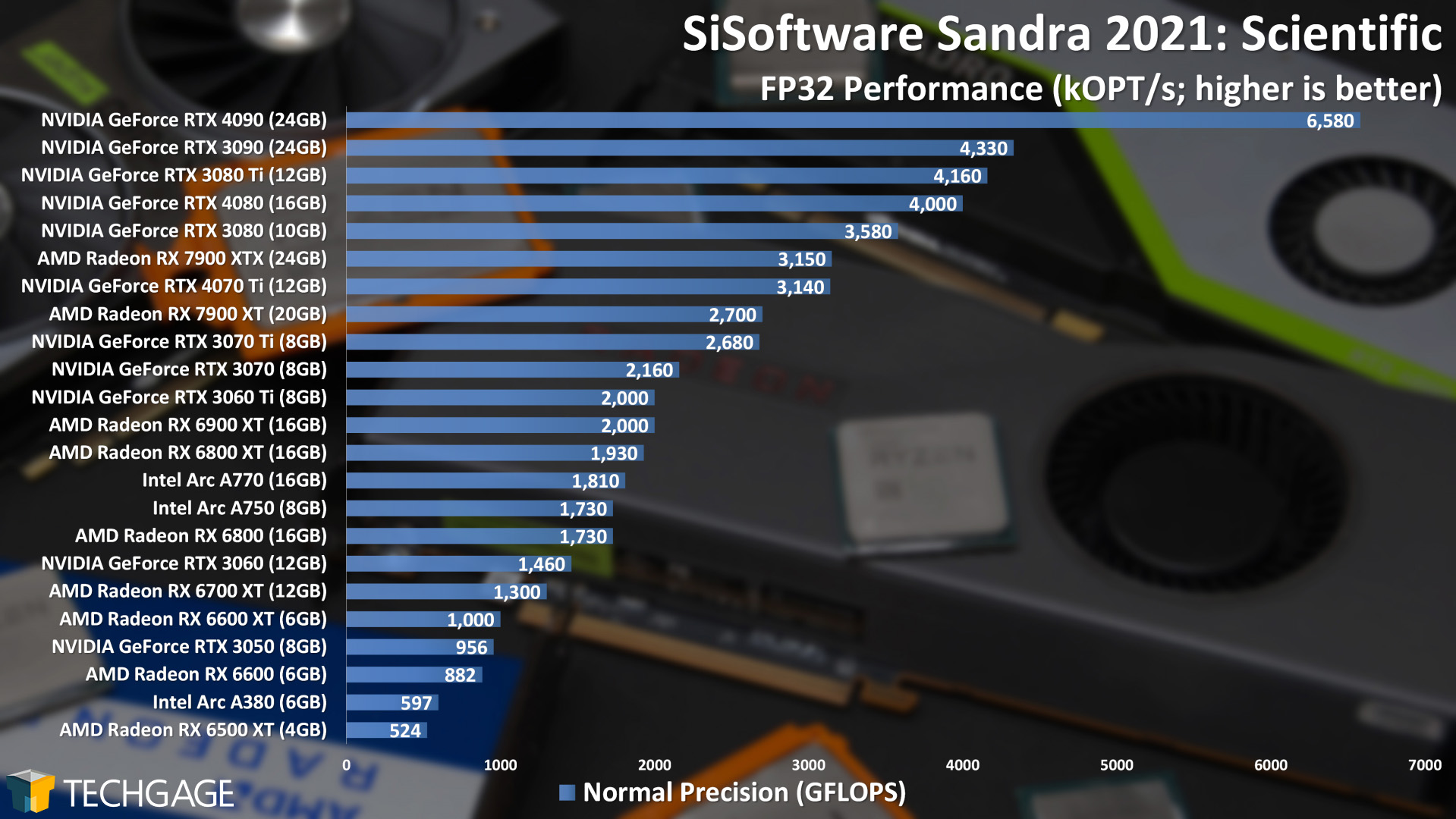

Synthetic Performance

Real-world tests are unquestionably more important than synthetic ones, but those synthetic tests can sometimes reveal performance differences that can’t be easily seen in other scenarios. Take a look at the Cryptography test, for example, where the RTX 4070 Ti fell behind the RTX 3070 Ti. Granted, the difference is minor, but the fact is, the 4070 Ti should leap ahead.

If we look at a breakdown of the crypto results, we can see that the RTX 3070 Ti had much better hashing performance (231 GB/s vs. 125 GB/s), whereas the RTX 4070 Ti excels with encryption/decryption (119 GB/s vs. 65 GB/s). These differences have flown under our radar up to this point, but when comparing the RTX 3090 to RTX 4090, we see similar scaling between them. It seems like it might be a good idea to begin breaking down the cryptography result to show these separate values instead.
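To make that split easier to see, here is a minimal Python sketch that turns the four throughput figures quoted above into gen-over-gen ratios. The dictionary layout and the `gen_over_gen` helper are our own illustrative names, not part of the benchmark, and we make no assumption about how the benchmark combines the two sub-results into its overall score.

```python
# Sub-test throughput in GB/s, taken from the breakdown quoted in the text.
crypto_results = {
    "hashing": {"RTX 3070 Ti": 231, "RTX 4070 Ti": 125},
    "encryption/decryption": {"RTX 3070 Ti": 65, "RTX 4070 Ti": 119},
}


def gen_over_gen(scores, new="RTX 4070 Ti", old="RTX 3070 Ti"):
    """Return new-card throughput divided by old-card throughput (hypothetical helper)."""
    return scores[new] / scores[old]


for sub_test, scores in crypto_results.items():
    ratio = gen_over_gen(scores)
    print(f"{sub_test}: RTX 4070 Ti runs at {ratio:.2f}x the RTX 3070 Ti")
```

A ratio below 1.0 means the newer card is slower in that sub-test. Reporting the two ratios separately shows the opposing trends that a single combined cryptography score hides.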

In the three other tests, the RTX 4070 Ti performs about where we’d expect, although in some cases, we can’t help but feel like the 192-bit memory bus is holding its performance back a wee bit. We can see no other obvious reason for the RTX 4070 Ti to fall behind the RTX 3080 in the Scientific test when it can beat the RTX 3090 in most other tests.

Final Thoughts

With those nearly 40 performance graphs behind us, what do we think of NVIDIA’s new GeForce RTX 4070 Ti? Well – it really depends on how you look at things. If you compare the 4070 Ti to the last-gen RTX 3090, the performance looks stellar. It’s a current-gen $799 card that bests the last-gen $1,499 option – ignoring the fact that the 3090 and 3090 Ti both had double the VRAM.

Speaking of VRAM, it’s one aspect of the RTX 4070 Ti that makes it look like an odd duck. This is an XX70 model that costs $100 more than last-gen’s XX80 model (RTX 3080), and despite having more memory, the bandwidth has been reduced. The RTX 3070 Ti, with its 8GB, clocked in at 608 GB/s. This RTX 4070 Ti, with its 12GB, meanwhile, scales back to 504 GB/s.
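Those two bandwidth figures follow directly from bus width and memory speed. As a rough sketch, peak bandwidth is the bus width in bits multiplied by the per-pin data rate, divided by eight to convert bits to bytes. The per-pin data rates below (19 Gbps for the RTX 3070 Ti, 21 Gbps for the RTX 4070 Ti) are not stated in this article; we take them from the cards’ published specifications. The 256-bit RTX 4070 Ti entry is purely hypothetical, included only to show what a wider bus would yield at the same memory speed.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width (bits) x per-pin rate (Gbps) / 8."""
    return bus_width_bits * data_rate_gbps / 8


# (bus width in bits, per-pin data rate in Gbps); data rates are assumed
# from published specs, and the last entry is a hypothetical configuration.
configs = {
    "RTX 3070 Ti (256-bit)": (256, 19.0),
    "RTX 4070 Ti (192-bit)": (192, 21.0),
    "Hypothetical 4070 Ti (256-bit)": (256, 21.0),
}

for name, (bus_width, data_rate) in configs.items():
    print(f"{name}: {memory_bandwidth_gbs(bus_width, data_rate):.0f} GB/s")
```

Faster memory only partly offsets the narrower bus, which is why the newer card lands below its predecessor on this spec. Raw bandwidth also doesn’t account for cache or other architectural changes between generations.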

NVIDIA might be able to justify the 192-bit memory bus, but from a consumer standpoint, it’s hard to make sense of why it wasn’t kept to at least 256-bit, because some tests do give us a hint that its lower memory bandwidth is holding back performance. The only GPU in the last-gen Ampere lineup to have a 192-bit memory bus was the RTX 3060, so to see that spec carried over to an $800 GPU is hard to grasp, even if the memory architecture has improved from gen-to-gen. To see a 192-bit memory bus in a GPU that takes up more than 2 PCI slots feels straight-up bizarre.

Nonetheless, as mentioned earlier in this article, what really matters at the end of the day is the resulting performance, and as far as that goes, it’s hard to ignore the fact that the RTX 4070 Ti is in fact one super-fast creator GPU. In rendering, it beats out the RTX 3090 easily, and it largely performs as well as we’d expect it to in most of the other tests.

With rendering, one of our core workloads, it’s much easier to justify the $799 price of the RTX 4070 Ti than it might be with gaming – the results are tangible. Unless you can find a last-gen Ampere GPU at a heavy discount, you may actually find it difficult to find a great alternative at the given price-point. If last-gen GPUs had plummeted in price, it’d be one thing, but from all we can find, whether used on eBay or new at etailers, last-gen pricing remains high.

As always, you need to do your due diligence to find the right GPU for your next upgrade. And, of course, you can post a comment here if you want a second opinion before you pull the trigger.
