AMD Athlon II X4 620 – Quad-Core at $99

Last month, AMD became the first company to bring a $99 quad-core processor to market: the Athlon II X4 620. The question, of course, is whether or not it delivers. At 2.60GHz, it looks to offer ample performance, but the lack of an L3 cache is sure to show in some of our tests. Luckily, the chip’s overclocking ability helps offset that issue.

Page 1 – Introduction

In the fall of 2006, Intel launched its Core 2 Extreme QX6700 processor, and as a result, it became the first company to deliver a consumer-targeted quad-core. Sure, the chip retailed for $999, and it was built using a “non-native” design, but it wasn’t until almost a full year later that AMD launched a quad-core of its own to compete. While Intel rightfully holds onto its bragging rights from its launch that fall, AMD, as of this month, has its own accomplishment to boast about.

With its Athlon II X4 620, AMD becomes the first company to release a desktop quad-core for under $100… $99 to be precise. Think about that for a moment. Less than three years ago, we saw the first-ever desktop quad-core, for $999, and today, we have one for $99. Isn’t the rapid progress of technology grand?

So… a $99 quad-core. Who’s it designed for? That’s the same question I asked myself when I first learned of the chip, and even now, I’m not quite sure who to recommend it to. To me, it seems like the X4 620 comes off as being a product with two contradicting goals. On one hand, it wants to offer great multi-threading capabilities, while on the other, it wants to be a budget offering, and it is just that, thanks to its lack of L3 cache and modest clock speed.

When I think of a multi-core processor, namely a quad or higher, I picture 3D rendering, video creation, scientific computing and more. But those scenarios don’t just benefit from a quad-core; they benefit from a fast quad-core. With no L3 cache at all, a lot of the high-performance multimedia goals are going to be thrown out the window. So if not for that, could the X4 620 be designed for mainstream-performance multi-tasking? It seems so.

Closer Look at the Athlon II X4 620

The X4 620 might be a budget offering, but don’t worry, it’s not based on ancient technology. Rather, it uses the Propus core, which is based on Deneb. The primary difference is the total lack of an L3 cache. It seems minor, but Phenoms make heavy use of that cache in many different scenarios, so removing it completely may take away some of the overall lustre that a $99 quad-core should seemingly have.

Of course, it’s a little too early to be drawing conclusions now, so instead of doing that, let’s take a quick look at AMD’s current processor line-up, consisting mostly of Phenom IIs and Athlon IIs:

| CPU Name | Cores | Clock | Cache (L2/L3) | HT Bus | Socket | TDP | 1Ku Price |
|---|---|---|---|---|---|---|---|
| AMD Phenom II X4 965 BE | 4 | 3.4GHz | 2+6MB | 4000MHz | AM3 | 140W | $245 |
| AMD Phenom II X4 955 BE | 4 | 3.2GHz | 2+6MB | 4000MHz | AM3 | 125W | $245 |
| AMD Phenom II X4 945 | 4 | 3.0GHz | 2+6MB | 4000MHz | AM3 | 125W | $225 |
| AMD Phenom II X4 905e | 4 | 2.5GHz | 2+6MB | 4000MHz | AM3 | 65W | $175 |
| AMD Phenom II X3 720 BE | 3 | 2.8GHz | 1.5+6MB | 4000MHz | AM3 | 95W | $145 |
| AMD Phenom II X3 705e | 3 | 2.5GHz | 1.5+6MB | 4000MHz | AM3 | 65W | $125 |
| AMD Phenom X4 9650 | 4 | 2.3GHz | 2+2MB | 3600MHz | AM2+ | 95W | $112 |
| AMD Phenom II X2 550 | 2 | 3.1GHz | 1+6MB | 4000MHz | AM3 | 80W | $105 |
| AMD Athlon II X4 630 | 4 | 2.8GHz | 2+0MB | 4000MHz | AM3 | 95W | $122 |
| AMD Athlon II X4 620 | 4 | 2.6GHz | 2+0MB | 4000MHz | AM3 | 95W | $99 |
| AMD Athlon II X2 250 | 2 | 3.0GHz | 2+0MB | 4000MHz | AM3 | 65W | $87 |
| AMD Athlon II X2 245 | 2 | 2.9GHz | 2+0MB | 4000MHz | AM3 | 65W | $66 |
| AMD Athlon II X2 240 | 2 | 2.8GHz | 2+0MB | 4000MHz | AM3 | 65W | $60 |

As you might notice, the X4 620 isn’t the only Athlon II quad-core. Rather, AMD has also launched an X4 630, which bumps the clock speed by 200MHz. This comes at a premium of $23, however, and without jumping too far ahead in this article, I can confidently say that picking up the X4 620 and overclocking it to 2.80GHz is more than feasible (just wait until you see what we managed for a stable overclock!).
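To put that $23 premium in perspective, here is a quick back-of-the-envelope sketch that compares the relative clock gain against the relative price increase. It uses only the stock clocks and 1Ku prices listed in the line-up above; the dictionary layout and the `percent_increase` helper are made up for illustration.

```python
# Stock clocks and 1Ku prices as listed in the article's line-up table.
chips = {
    "Athlon II X4 620": {"clock_ghz": 2.6, "price_usd": 99},
    "Athlon II X4 630": {"clock_ghz": 2.8, "price_usd": 122},
}


def percent_increase(old, new):
    """Return the relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


base = chips["Athlon II X4 620"]
step_up = chips["Athlon II X4 630"]

clock_gain = percent_increase(base["clock_ghz"], step_up["clock_ghz"])
price_gain = percent_increase(base["price_usd"], step_up["price_usd"])

print(f"Clock: +{clock_gain:.1f}%  Price: +{price_gain:.1f}%")
```

On these numbers, the X4 630 asks for a noticeably larger percentage jump in price than it gives back in clock speed, which is the case for buying the X4 620 if you are willing to overclock.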

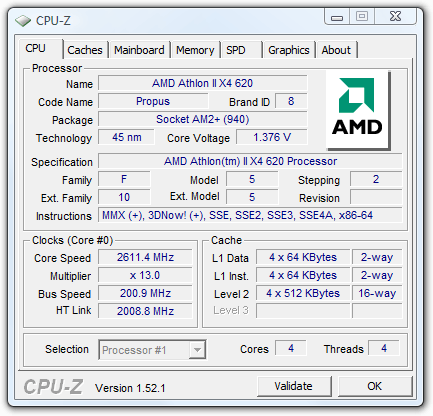

The current CPU-Z release doesn’t have the proper Athlon II X4 logo hard-coded in, so we’re given a generic AMD logo in its place. At stock speeds, you’ll notice that the CPU voltage is higher than you’d expect, at 1.375V. It seems a wee bit high, but in all of our tests, the temperatures were well within reason (<50°C at full load). As this is a non-Black Edition, our 13x multiplier is locked. Again though, there’s no reason for alarm, as that’s not going to hold back this chip’s overclocking ability.
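With the multiplier locked, the only way up is through the reference clock. As a rough sketch, assuming the usual relationship of core clock = multiplier × reference clock (the function name and constants below are illustrative, not taken from any tool), the reference clock needed for a given target works out like this:

```python
MULTIPLIER = 13          # locked multiplier reported by CPU-Z in the article
STOCK_CLOCK_MHZ = 2600   # the X4 620's 2.60GHz stock clock


def reference_clock_for(target_mhz, multiplier=MULTIPLIER):
    """Reference clock (MHz) needed to hit target_mhz at a fixed multiplier.

    Assumes core clock = multiplier * reference clock.
    """
    return target_mhz / multiplier


stock_ref = reference_clock_for(STOCK_CLOCK_MHZ)
target_ref = reference_clock_for(2800)  # matching the X4 630's 2.80GHz

print(f"Stock reference clock: {stock_ref:.1f}MHz")
print(f"Reference clock for 2.80GHz: {target_ref:.1f}MHz")
```

Under that assumption, matching the X4 630's clock speed means raising the reference clock from 200MHz to roughly 215MHz. Keep in mind that other clocks on the platform are typically derived from the same reference, so they may need their own multipliers or dividers adjusted to stay in range.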

Although the “Package” section in CPU-Z for AMD’s current CPUs tends to be wrong most of the time (it almost always says AM2+), it’s correct in this instance. The X4 620 will work in both AM2+ and AM3 motherboards, so if you want to save as much money as possible, picking up an older board is definitely an option (just make certain that the board has a recent BIOS capable of handling the “unknown” CPU).

At $99, Intel has nothing in the quad-core department to compete with it. Its Core 2 Quad Q8200 retails for around $149, although it should perform much better than the X4 620. On the company’s dual-core side, there’s still not much competition, although the Core 2 Duo E7400 comes very close, at around $110. Will AMD’s unique market position with its $99 quad make it a winner amongst “high-performance” budget computing? Let’s find out… as soon as we take a look at our test system and methodology.
