ASUS GeForce GTX 1060 6GB Strix Graphics Card Review

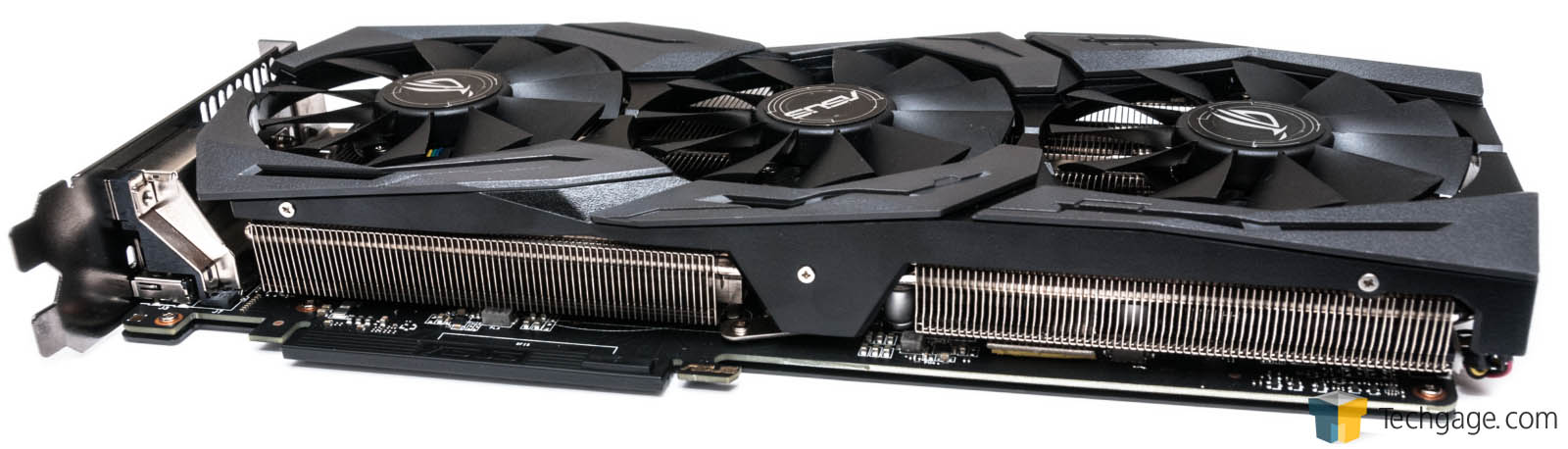

We discovered a couple of months ago that NVIDIA’s GeForce GTX 1060 delivers excellent 1080p performance and admirable 1440p performance, so what happens when ASUS straps on an even larger cooler and gives the card an overclock? Well, we get the Strix, an LED-equipped beast of a card that runs cool and quiet.

Page 1 – Introduction, About ASUS’ GTX 1060 Strix & Testing Notes

We discovered this past summer that NVIDIA’s GeForce GTX 1060 is quite an effective card at its suggested price of ~$249 (6GB model), delivering superb 1080p performance, and solid 1440p performance. As impressive as it was, though, there’s always a little room for improvement, right? ASUS thinks so, and it has its Strix edition to help prove it.

Whereas the reference Founders Edition of the GTX 1060 features a leaf-blower style fan under a closed metal shroud, ASUS offers a beefed-up fin array with its Strix and tops the open shroud with three fans. That makes the Strix edition bulkier overall versus the Founders Edition, but as we’ll see later, the trade-off of space is worth it.

The GTX 1060 Strix supports a couple of predefined modes, with the shipping one being “Gaming”. In that mode, the card has a GPU Boost clock of 1847MHz. When the GPU Tweak II software is installed, “OC Mode” can be used instead to raise the boost clock to 1873MHz. In the real world, the card will boost beyond either rated value, something I’ll take a look at on the final page. The important thing to note is that the Strix edition is an overclocked GTX 1060, so out of the gate it’ll perform much better than a Founders Edition – as we’ll see soon.
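To put the two modes in perspective, here is a minimal sketch of the arithmetic behind the rated clocks. The 1847MHz and 1873MHz figures come from the paragraph above; the helper name `percent_gain` is made up for illustration, and the result says nothing about real-world boost behavior.

```python
def percent_gain(base_mhz: float, boosted_mhz: float) -> float:
    """Return the relative increase of boosted_mhz over base_mhz, in percent."""
    return (boosted_mhz - base_mhz) / base_mhz * 100.0


GAMING_BOOST_MHZ = 1847  # rated boost clock in the shipping "Gaming" mode
OC_BOOST_MHZ = 1873      # rated boost clock in "OC Mode" (GPU Tweak II)

gain = percent_gain(GAMING_BOOST_MHZ, OC_BOOST_MHZ)
print(f"OC Mode over Gaming mode: {gain:.2f}%")  # prints "OC Mode over Gaming mode: 1.41%"
```

On paper, then, OC Mode adds only about one and a half percent over the out-of-the-box setting.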

Time for some hardware porn:

ASUS equips the GTX 1060 Strix with a DVI-D port, dual HDMI ports, and dual DisplayPorts. While NVIDIA’s design sports a 6-pin power connector, ASUS opts for an 8-pin, which could improve stability and overclocking potential.

As seen in one of the shots above, Strix also includes a backplate, and unlike most, it actually adds to the style of the card. Overall, the entire card is great-looking; it’s not flashy, and would look good in any build. OK – perhaps “not flashy” is incorrect phrasing, as under the shroud, a customizable LED can be found. With downloadable software called Aura, you can change the LED mode and color – great for those wanting to add a bit of pizzazz to their build. Don’t want color? You can turn the LED feature off entirely.

If you’re indifferent to the color of the LED, but have a windowed case that lets you see the card, you can also use a feature that adjusts the LED’s color based on temperature: green for modest temperatures, and yellow/red for peak temperatures.

| NVIDIA GeForce Series | Cores | Core MHz | Memory | Mem MHz | Mem Bus | TDP |
|---|---|---|---|---|---|---|
| TITAN X | 3584 | 1417 | 12288MB | 10000 | 384-bit | 250W |
| GeForce GTX 1080 | 2560 | 1607 | 8192MB | 10000 | 256-bit | 180W |
| GeForce GTX 1070 | 1920 | 1506 | 8192MB | 8000 | 256-bit | 150W |
| GeForce GTX 1060 | 1280 | ≤1700 | 6144MB | 8000 | 192-bit | 120W |

As seen in the table above, the GTX 1060 sits at the bottom of NVIDIA’s current line-up, although that’s not a bad thing considering it’s still a midrange card (the GTX 1050 is rumored to make an appearance soon). So what’s that mean in performance terms? In our opinion:

| NVIDIA GeForce Series | 1080p | 1440p | 3440×1440 | 4K |
|---|---|---|---|---|
| TITAN X | Overkill | Overkill | Excellent | Great |
| GeForce GTX 1080 | Overkill | Excellent | Excellent | Good |
| GeForce GTX 1070 | Excellent | Great | Good | Poor |
| GeForce GTX 1060 | Great | Good | Poor | Poor |

- Overkill: 60 FPS? More like 100 FPS. As future-proofed as it gets.
- Excellent: Surpass 60 FPS at high quality settings with ease.
- Great: Hit 60 FPS with high quality settings.
- Good: Nothing too impressive; it gets the job done (60 FPS will require tweaking).
- Poor: Expect real headaches from the awful performance.

As a “Great” 1080p card, the GTX 1060 will hit 60 FPS pretty effortlessly in most of today’s games, although it likely won’t be able to max out the graphics settings of future titles at that resolution. “Good” for 1440p means that compromises will have to be made in most current games in order to hit 60 FPS.

What that means for the Strix is that it should land a little higher within each of those ratings. It’d take a lot more than a clock boost to move the card up to the next rating, though.

Testing Notes

When we need to build a test PC for performance testing, “no bottleneck” is the name of the game. While we admit that few of our readers are going to be equipped with an Intel 8-core processor clocked to 4GHz, we opt for such a build to make sure our GPU testing is as apples-to-apples as possible, with as little variation as possible. Ultimately, the only thing that matters here is the performance of the GPUs, so the more we can rule out a bottleneck, the better.

That all said, our test PC:

| Graphics Card Test System | |
|---|---|
| Processors | Intel Core i7-5960X (8-core) @ 4.0GHz |
| Motherboard | ASUS X99 DELUXE |
| Memory | Kingston HyperX Beast 32GB (4x8GB) – DDR4-2133 11-12-11 |
| Graphics | AMD Radeon R9 Nano 4GB – Catalyst 16.5.3; AMD Radeon RX 480 8GB – Catalyst 16.6.2 Beta; NVIDIA GeForce GTX 980 4GB – GeForce 365.22; NVIDIA GeForce GTX TITAN X 12GB – GeForce 365.22; NVIDIA GeForce GTX 1060 6GB – GeForce 368.64 (Beta); NVIDIA GeForce GTX 1060 6GB – GeForce 372.90 (ASUS Strix); NVIDIA GeForce GTX 1070 8GB – GeForce 368.19 (Beta); NVIDIA GeForce GTX 1080 8GB – GeForce 368.25 |
| Audio | Onboard |
| Storage | Kingston SSDNow V310 1TB SSD |
| Power Supply | Cooler Master Silent Pro Hybrid 1300W |
| Chassis | Cooler Master Storm Trooper Full-Tower |
| Cooling | Thermaltake WATER3.0 Extreme Liquid Cooler |
| Displays | Acer Predator X34 34″ Ultra-wide; Acer XB280HK 28″ 4K G-SYNC; ASUS MG279Q 27″ 1440p FreeSync |
| Et cetera | Windows 10 Pro (10586) 64-bit |

Framerate information for all tests – with the exception of certain time demos and DirectX 12 tests – is recorded with the help of Fraps. For tests where Fraps isn’t ideal, I use the game’s built-in benchmark (the only option for DX12 titles right now). In the past, I’ve tweaked the Windows OS as much as possible to rule out test variations, but over time, such optimizations have proven fruitless. As a result, the Windows 10 installation I use is about as stock as possible, with minor modifications to suit personal preferences.
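For readers who log their own runs, the following is a rough sketch of how an average framerate can be derived from a frame-time log. It is not our actual tooling: it assumes the log has already been parsed into a list of cumulative timestamps in milliseconds, the function name `fps_summary` is hypothetical, and the sample values are invented purely for illustration.

```python
from typing import Dict, Sequence


def fps_summary(timestamps_ms: Sequence[float]) -> Dict[str, float]:
    """Summarize a run from cumulative frame timestamps (milliseconds).

    Assumption: each entry is the time at which a frame was presented,
    measured from the start of the capture.
    """
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two frame timestamps")

    # Time taken by each individual frame.
    frame_times = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    total_ms = timestamps_ms[-1] - timestamps_ms[0]
    if total_ms <= 0 or min(frame_times) <= 0:
        raise ValueError("timestamps must be strictly increasing")

    return {
        "frames": float(len(frame_times)),
        # Average FPS over the whole run: frames rendered per elapsed second.
        "avg_fps": 1000.0 * len(frame_times) / total_ms,
        # Instantaneous FPS of the single slowest frame.
        "min_fps": 1000.0 / max(frame_times),
    }


# Made-up sample: four frames, one of which took 20 ms instead of 16 ms.
sample = [0.0, 16.0, 32.0, 52.0, 68.0]
summary = fps_summary(sample)
print(f"{summary['avg_fps']:.1f} FPS average, {summary['min_fps']:.1f} FPS minimum")
```

Averaging over total elapsed time, rather than averaging each frame's instantaneous FPS, keeps a handful of very fast frames from inflating the result.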

In all, I use 8 different games for regular game testing, and 3 for DirectX 12 testing. That’s in addition to the use of three synthetic benchmarks. Because some games are sponsored, the list below notes each title’s affiliation so that any potential bias in our testing is out in the open.

(AMD) – Ashes of the Singularity (DirectX 12)

(AMD) – Battlefield 4

(AMD) – Crysis 3

(AMD) – Hitman (DirectX 12)

(NVIDIA) – Metro: Last Light Redux

(NVIDIA) – Rise Of The Tomb Raider (incl. DirectX 12)

(NVIDIA) – The Witcher 3: Wild Hunt

(Neutral) – DOOM

(Neutral) – Grand Theft Auto V

(Neutral) – Total War: ATTILA

If you’re interested in benchmarking your own configuration to compare to our results, you can download this file (5MB) and make sure you’re using the exact same graphics settings. I’ll briefly explain how I benchmark each test before I get into each game’s performance results.
