Taking a Deep Look at NVIDIA’s Fermi Architecture

It has taken a bit longer than we all had hoped, but last week, NVIDIA gathered members of the press together to dive deep into its upcoming Fermi architecture, where it divulged all the features that are set to give AMD a run for its money. The company also discussed PhysX, GPU Compute, developer relations and a lot more.

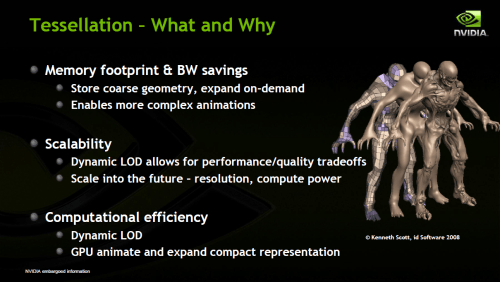

Page 2 – Tessellation, Parallel Design, CSAA/TMAA

The most common method of bringing geometric realism to light is to take advantage of both tessellation and displacement mapping. By now, you’re probably familiar with both, but for a quick recap, tessellation is the art of breaking up larger triangles in models into many smaller ones, while displacement mapping then shifts the resulting vertices into position, typically according to a height map.
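To make the recap concrete, here is a minimal Python sketch of the two steps on the CPU. It is only an illustration: the `tessellate`, `displace` and `height` helpers are hypothetical names, the height map is a made-up sine pattern standing in for a texture, and real hardware tessellation uses far more sophisticated subdivision schemes.

```python
import math

def midpoint(a, b):
    """Midpoint of two 3D points."""
    return tuple((x + y) / 2.0 for x, y in zip(a, b))

def tessellate(triangles, levels):
    """Split every triangle into four smaller ones, `levels` times over."""
    for _ in range(levels):
        finer = []
        for a, b, c in triangles:
            ab, bc, ca = midpoint(a, b), midpoint(b, c), midpoint(c, a)
            finer.extend([(a, ab, ca), (ab, b, bc), (ca, bc, c), (ab, bc, ca)])
        triangles = finer
    return triangles

def height(x, y):
    """Hypothetical height map: a smooth bump pattern standing in for a texture."""
    return 0.1 * math.sin(4.0 * x) * math.cos(4.0 * y)

def displace(triangles):
    """Push each vertex along the flat patch's normal (+z) by the height map."""
    return [tuple((x, y, z + height(x, y)) for x, y, z in tri) for tri in triangles]

# One flat input triangle lying in the z = 0 plane.
patch = [((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))]
detailed = displace(tessellate(patch, levels=3))
print(len(detailed))  # 4**3 = 64 triangles from one input triangle
```

Each extra level quadruples the triangle count, which hints at why the workload grows so quickly once tessellation is switched on.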

You might recall the Unigine DirectX 11 benchmark, “Heaven”, which allows the user to enable and disable tessellation on the fly. With it enabled, the scene becomes much more alive, with more sharply defined models and terrain throughout, whether it be the cobblestone walkway, the clay-tiled rooftops, or the defiant dragon which dominates the center of the scene. In general, tessellation, combined with displacement mapping, is designed to add realism to a scene and its models.

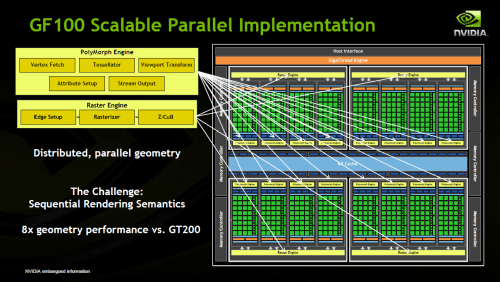

The process of accomplishing this in itself seems simple, but in reality, it requires a lot of horsepower. In the Unigine benchmark, turning on tessellation with an ATI HD 5000-series card can decrease the overall frames-per-second by almost 50%… and you thought enabling anti-aliasing was bad! To combat this issue, NVIDIA’s PolyMorph engines are brought into play. Each of these engines includes the hardware for this geometry processing, and because they’re designed for a single purpose (geometric realism), they could in some regards be considered accelerators, meaning that tessellation performance should be far improved compared to a current-gen ATI graphics processor.

But where do the “Raster” engines come into play? Once the data is processed by the PolyMorph engines, it’s passed along to the Raster engines, where it’s then converted into pixels for displaying on your screen. Other recent graphics architectures have also included Raster engines, but only one per GPU. For GF100, the count has been increased to four, as there’s a lot more data that will need to be processed thanks to tessellation.

Each PolyMorph engine consists of five parts: the Tessellator, Vertex Fetch, Viewport Transform, Attribute Setup and Stream Output. The Raster engine consists of three: the Rasterizer, Edge Setup and Z-Cull.

NVIDIA stresses that the biggest overarching gain of these enhancements is that GF100 can perform all of this geometry processing in parallel. The company says this matters both for rendering order, which in turn delivers much-needed extra performance, and for image quality and realism.

In short, geometric realism is important to the future of gaming, and it’s not only NVIDIA backing this idea, but ATI also, as shown by the fact that it’s been pushing tessellation as one of the biggest features of its DirectX 11-equipped HD 5000-series. Though NVIDIA’s GT200 doesn’t support DirectX 11 (and thus tessellation), the company states that GF100’s geometry performance is 8x that of the previous generation. That’s quite substantial.

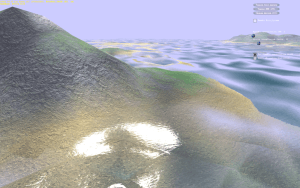

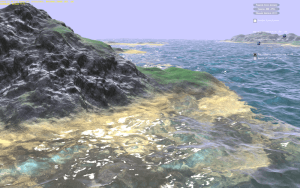

With all this talk of performance boosts, it was only fair that NVIDIA shared some initial data, and that it did. But before diving into metrics, the company first showed us two very cool demos that take huge advantage of both tessellation and compute, “Water” and “Hair” (I’m betting you can’t guess what these tests show off).

The first demo is water, and I have to honestly say that images do nothing to show off the level of detail. In motion, the scene looks incredible, and I’d be willing to vouch for this being some of the best water I’ve ever seen in either a tech demo or a game. With the level of detail very low, the scene is rather “meh”, and the water looks more like a translucent sheet of plastic than water. But with a maxed level of detail, the ripples and waves in the water come alive, and it looks far more realistic.

The subsequent hair demo was just as impressive as the water demo. Hair has always been difficult to render inside of a game, and most often, it’s the part of a scene that will stand out, and not in a good way. Even if the hair moves when the character does, it doesn’t move on a strand-by-strand basis, but rather in clumps or as a whole. That effect may work fine, but it’s nowhere near as realistic as what we saw here.

This interactive model, or rather stump, has a full head of beautiful hair that’s rendered with the help of GPU compute and tessellation. Each strand is independent of the others, or so it seemed, and moving the model around, shaking it, or blowing wind at it in a certain direction delivered the exact kind of effect we’d expect to see. I sound like a broken record, but this hair demo was the best I’ve ever seen. I have little doubt that the required computation for something like this in a game is too much for Fermi (the performance was good here, but imagine adding multiple models, along with the world, and all of the game mechanics), but we’re definitely peeking into the future here.

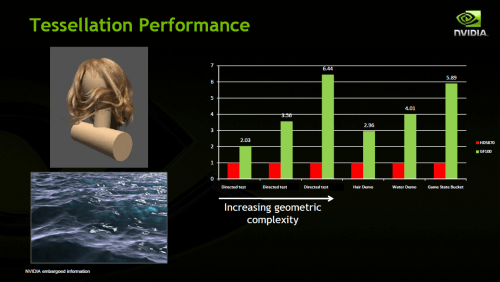

Going back to my earlier mention of performance, NVIDIA provided us with a few slides that directly compare GF100 to what we can assume is the equally high-end ATI Radeon HD 5870. As you can see below, the Fermi architecture far surpasses ATI’s, with the weakest gain showing a 2.03x improvement. But even there, it proves that as the detail is increased, Fermi’s architecture swings into action in a more noticeable way.

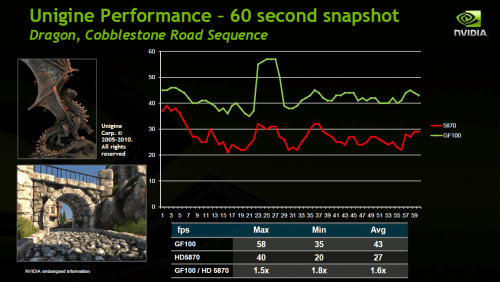

NVIDIA didn’t show us the Unigine DirectX 11 performance first-hand, but we were provided with another graph that once again compares GF100 to the HD 5870 in a 60-second sequence. Here, we can see that the ATI card’s maximum FPS almost hits the minimum of GF100, with an overall 1.6x boost for GF100 on the average FPS side of things.

For the sake of article length, I’m not going to tackle every last feature that was discussed during NVIDIA’s editor’s day in great detail, but I will discuss a few more before moving on to a look at non-GF100 information that was also discussed, such as PhysX and game development.

As mentioned in the intro, one focus of Fermi is to improve image quality, and to do this, NVIDIA introduces techniques such as Accelerated Jittered Sampling, which changes the sampling pattern randomly on a per-pixel basis in order to deliver a cleaner result. This is done with Gather4, which fetches four texels at a given offset within a 128×128 grid using a single texture instruction. This technique would help improve image quality with shadow mapping and ambient occlusion (AO), among others.
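As a rough illustration of the idea, the Python sketch below applies jittered sampling to a shadow-map lookup. Everything here is an assumption made for demonstration: `gather4` and `jittered_shadow` are hypothetical helpers rather than real graphics API calls, the offset window is only a few texels wide instead of the 128×128 grid mentioned above, and the per-pixel random pattern comes from a simple integer hash.

```python
import random

def gather4(texture, x, y, offset=(0, 0)):
    """Return the 2x2 block of texels whose top-left corner is (x, y) + offset.

    Coordinates are clamped to the texture edge. This mimics a single
    gather instruction returning four texels at once.
    """
    h, w = len(texture), len(texture[0])
    ox, oy = offset
    texels = []
    for dy in (0, 1):
        for dx in (0, 1):
            tx = min(max(x + ox + dx, 0), w - 1)
            ty = min(max(y + oy + dy, 0), h - 1)
            texels.append(texture[ty][tx])
    return texels

def jittered_shadow(shadow_map, x, y, depth, taps=4, radius=2, seed=0):
    """Fraction of lit samples around (x, y), using per-pixel jittered offsets."""
    # Seed the generator from the pixel position so each pixel gets its own pattern.
    rng = random.Random(x * 73856093 ^ y * 19349663 ^ seed)
    lit = 0
    for _ in range(taps):
        offset = (rng.randint(-radius, radius), rng.randint(-radius, radius))
        # A texel counts as lit when the receiver is no farther than the stored depth.
        lit += sum(1 for d in gather4(shadow_map, x, y, offset) if depth <= d)
    return lit / (4 * taps)

# Hypothetical 8x8 shadow map: an occluder (depth 0.3) covers the left half.
shadow_map = [[0.3] * 4 + [1.0] * 4 for _ in range(8)]
for px in (0, 3, 6):
    print(px, jittered_shadow(shadow_map, px, 4, depth=0.5))
```

Pixels deep inside the shadow or the light come out fully dark or fully lit, while pixels near the boundary get an in-between value that varies with their individual offset pattern, trading visible banding for fine noise.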

If you find 4xAA a bit boring, then NVIDIA’s new 8+24 CSAA (aka: 32x CSAA) mode might be just for you. It’s called 8+24 as it takes eight color samples along with 24 coverage samples in order to generate a much, much cleaner look. NVIDIA gave the specific example of foliage where 32x CSAA would deliver a noticeable improvement, so we could expect foliage, and things like mesh fences, to look much better in this mode. The best part, though? NVIDIA states that moving from 8xAA to the new CSAA mode will incur just a 7% loss in performance.
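To see why the extra coverage samples help, consider this simplified Python model of a single pixel crossed by a vertical edge. It assumes evenly spaced, one-dimensional sample positions and a plain two-color blend; the function names are hypothetical, and the real hardware sample patterns and resolve logic are not described in NVIDIA's presentation as covered here.

```python
def sample_positions(n):
    """Hypothetical sample positions spread evenly across the pixel's width."""
    return [(i + 0.5) / n for i in range(n)]

def edge_coverage(edge_x, n):
    """Fraction of n samples that fall left of a vertical edge at edge_x."""
    return sum(1 for x in sample_positions(n) if x < edge_x) / n

def resolve(fg, bg, coverage):
    """Blend foreground and background colors by the covered fraction."""
    return tuple(coverage * f + (1.0 - coverage) * b for f, b in zip(fg, bg))

COLOR_SAMPLES = 8
COVERAGE_SAMPLES = 24
TOTAL = COLOR_SAMPLES + COVERAGE_SAMPLES  # the "32x" in 32x CSAA

edge_x = 0.30  # assumed: a leaf covers the left 30% of the pixel
leaf, sky = (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)

with_8 = edge_coverage(edge_x, COLOR_SAMPLES)   # 0.25
with_32 = edge_coverage(edge_x, TOTAL)          # 0.3125
print(resolve(leaf, sky, with_8))
print(resolve(leaf, sky, with_32))
```

In this toy case the eight-sample estimate misses the true coverage by 0.05, while the 32-sample estimate misses by 0.0125, and only two colors ever need to be stored. That is the appeal of coverage samples: finer edge gradation without storing a full color for every sample.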

And speaking of mesh fences, TMAA (Transparency Multisample AA) is another upcoming feature to look for. Have you noticed that sometimes, when looking at a fence or something similar in the distance, parts of it may actually be invisible, depending on your perspective? This happens for various reasons, but TMAA is there to help prevent it. As the example below shows, on GT200, there are actually broken lines in the fence, but on GF100, everything is smoothed out, and nothing is broken at all.
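One way to picture the broken-fence problem is to compare a hard alpha test against an alpha-to-coverage approach, in which a texel's alpha value decides how many of a pixel's samples it covers. The Python sketch below assumes TMAA works along these lines; the function names, the 0.5 threshold and the alpha values are all illustrative, not taken from NVIDIA's material.

```python
def alpha_test(alpha, threshold=0.5):
    """Classic alpha test: the pixel is either fully kept or fully discarded."""
    return 1.0 if alpha >= threshold else 0.0

def alpha_to_coverage(alpha, samples):
    """Turn alpha into a fraction of covered samples, rounded to the nearest step."""
    covered = int(alpha * samples + 0.5)
    return min(covered, samples) / samples

# Hypothetical alpha values for a fence wire that thins out with distance.
wire_alphas = [0.9, 0.6, 0.3, 0.1]

for alpha in wire_alphas:
    print(alpha, alpha_test(alpha),
          alpha_to_coverage(alpha, 8), alpha_to_coverage(alpha, 32))
```

With the hard test, the wire simply vanishes once its alpha drops below the threshold, which is how gaps appear in a distant fence. With coverage-based transparency it fades gradually instead, and more samples mean more in-between levels.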

We’ve covered all of the bases for GF100’s architecture, but there’s a lot more that was discussed during our deep dive. These things included compute in gaming, PhysX, development, and much more.
