Taking a Deep Look at NVIDIA’s Fermi Architecture

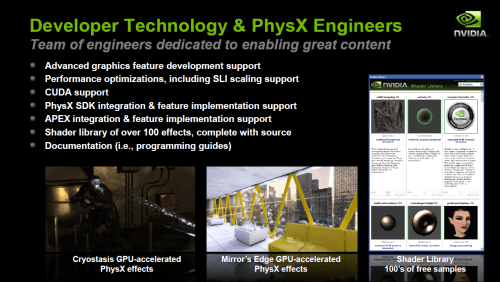

It has taken a bit longer than we all had hoped, but last week, NVIDIA gathered members of the press together to dive deep into its upcoming Fermi architecture, where it divulged all the features that are set to give AMD a run for its money. The company also discussed PhysX, GPU Compute, developer relations and a lot more.

Game Development

Over the course of the past few months, and perhaps even years, there have always been questions about NVIDIA's "The Way It's Meant to Be Played" (TWIMTBP) logo, and what exactly it means. Does it mean that NVIDIA pays off companies in order to achieve better performance on its cards? Or does it simply mean that NVIDIA aided the developer throughout the process of developing its game? NVIDIA wanted to make clear that it is wholeheartedly the latter.

In what has been dubbed "Batmangate", NVIDIA was accused a few months ago of using less-than-ethical means to kick ATI out of the anti-aliasing game in Batman: Arkham Asylum. Like many other sites, we posted news about it at the time. Because it's such a hot issue, the company spent a good deal of time discussing it in order to clear the air, and frankly, I'm glad it did, as it gave us a better understanding of NVIDIA's developer relations. Again, for the sake of article length, I won't delve too deeply into all of this, so excuse my rapid-fire delivery of this information.

NVIDIA's TWIMTBP program means a couple of things, but none of them involve encouraging a developer to produce a game that favors the company's graphics cards. What it does involve is offering the developer ideas to improve the game (content- and graphics-wise), and even going as far as helping to troubleshoot issues the developer may be experiencing. Of course, NVIDIA thinks little of providing hardware to developers who need it, although the company stresses that supplying hardware is a minor perk, because the program does so much more than that.

Part of TWIMTBP involves hosting demos, doing PR and making sure the games get reviewed, which explains a lot about why NVIDIA is always offering us copies of games for that purpose. If it can, the company will also help a developer get published if it hasn't managed to find a publisher up to that point.

We don't have to just take NVIDIA's word for things here, as the company has a lot of proof to back up its claims. For one, it offers a wide variety of developer tools on its website, along with an incredible amount of documentation. Believe it or not, the company also has a separate non-profit division dedicated to game developer relations. The lucky folks there not only get to play an absurd number of games (and actually get paid for it), but also work closely with developers to help them troubleshoot issues or to offer advice.

To further support its claims, NVIDIA gave two real examples of where it has recently aided developers. The first is a game called Star Tales, a reality title of sorts. Because it was built on Unreal Engine 3 (UE3), the game didn't support AA, so NVIDIA's own engineers coded up the support, which was later added to the game. Interestingly enough, the fix was simple (the engine lacked G16R16 support), and to help the developer implement it, NVIDIA supplied them with, get this… AMD hardware. Strange, but seemingly true.

Of course, could NVIDIA avoid the example of Batman: Arkham Asylum? Not bloody likely, and it didn't. The issue here was similar to the one in Star Tales, but a tad more complicated, as Batman is a DX10 game. Here, the problem was the R16G16F format, which wasn't supported with MSAA on ATI cards when the game launched. The final pre-launch build of the game was released on 04/29/09, and ATI didn't add the needed support until its Catalyst driver released on 07/22/09.

This is all very interesting, as the information that surfaced a couple of months ago made it look as though NVIDIA was the shadier of the two companies. AMD has admittedly been very quiet about the entire situation, at least as far as I can tell, so it seems that NVIDIA was being ganged up on by people without proper knowledge of the situation. That's understandable, since it's virtually impossible for us to know the inner workings of these developer relations, but the company cleared up a lot during our meeting.

Final Thoughts

One of the most interesting topics discussed during the Editor's Day is something I unfortunately can't talk much about right now, primarily due to time, but also due to a lack of backup information and screenshots. It has to do with game development, and the ease of both troubleshooting a game and adding PhysX to a model or scene.

NVIDIA announced its APEX platform last spring, which essentially gives artists, not coders, the ability to add and tweak PhysX effects on their character models. I saw this in action, and I have to say it's incredibly robust. It's a simple module that plugs right into common 3D modeling applications, such as 3ds Max, and gives the artist very fine control over every aspect of whichever PhysX effect they're applying to their model.

Then there's Nexus, which was mind-blowing due to the unbelievable control it affords the developer. The technology is super-advanced, but I'll tone it down as much as possible. For the best operation, two graphics cards and two OSes should be installed (the second OS can run in a virtual machine). The second graphics card is needed because the game you're developing is launched on it rather than on the primary card, which improves system stability.

Picture yourself coding your game in Visual Studio, then compiling it and launching the result in the second OS (which runs a Nexus server). You notice that the shading on your character's helmet is a bit off, which suggests you botched a bit of code. To find the culprit, you can pause on the exact frame (this literally freezes the entire GPU, hence the need for a second card), which then loads a screenshot of that frame on your main OS. From there, you click on the problematic area, and the development environment takes you straight to the bit of code that affects it. I'm explaining this in simple terms, but even as a non-game developer, I can say the sheer control here could be summed up with a "WOW". And for the record, no, this doesn't require a professional graphics card to work.

I'll post more about this at a later date, especially once I'm able to goof around with the technology first-hand. From what I've seen, I have little doubt that developers will want it, and want it soon. NVIDIA stated that the beta sign-up lists for both APEX and Nexus are constantly overloaded, so that's a good sign.

Believe it or not, despite this article being just over 4K words, there are still some aspects of our meeting that I didn't cover, such as the real-time ray tracing demo seen above. I won't tackle that here, but I might take the time to make a news post about it, and about other things I didn't cover, in the weeks to come. Overall, I'm impressed with what I saw during NVIDIA's Editor's Day, and it looks as though Fermi will be worth the wait, at least assuming pricing is kept reasonable (doesn't it always boil down to that?).

When I asked NVIDIA when we could expect our media samples of Fermi, which usually helps me figure out when the retail launch will be, I was told "After today", so you could say I wasn't exactly given a straight answer! Rumor has it that the launch will occur sometime in March, so stay tuned, as we'll be all over the new card when it hits our labs, ready to publish our findings once we're able.