XFX GeForce GTX 260 Black Edition

No matter your need for graphics power, the choice of GPUs right now is fantastic. Where high-end gamers are concerned, two popular options are the HD 4870 1GB and the GTX 260/216. We’re taking a look at XFX’s latest release of the latter, which features such an impressive factory overclock that it manages to keep up with the GTX 280.

Page 10 – Overclocking, Temperatures

Before tackling our overclocking results, let’s first clear up what we consider to be a real overclock and how we go about achieving it. If you’ve read our processor reviews, you might already be aware that I personally don’t care for an unstable overclock. It might look good on paper, but if it’s not stable, then it won’t be used. Very few people purchase a new GPU for the sole purpose of finding the maximum overclock, which is why we focus on finding what’s stable and usable.

To find the max stable overclock on an NVIDIA card, we use the latest available version of RivaTuner, which allows us to reach heights that are in no way stable – a good thing.

Once we find what we feel could be a stable overclock, the card is put through the stress of dealing with 3DMark Vantage’s “Extreme” test, looped three times. Although previous versions of 3DMark offered the ability to loop the test infinitely, Vantage for some reason doesn’t. It’s too bad, as it would be the ideal GPU-stress test.

If no artifacts or performance issues arise, we continue to test the card in multiple games from our test suite, at their maximum available resolutions and settings that the card is capable of handling. If no issues arise during our real-world gameplay, we can consider the overclock to be stable and then proceed with testing.
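For readers who like to see a procedure written down, here is a minimal sketch of that pass/fail flow in Python. It is only an illustration: the `is_stable` function and the hook callables are hypothetical names, and in practice each "hook" is a person watching the screen for artifacts, not an automated check.

```python
from typing import Callable, Iterable


def is_stable(
    run_vantage_extreme: Callable[[], bool],
    game_tests: Iterable[Callable[[], bool]],
    vantage_loops: int = 3,
) -> bool:
    """Return True only if every stress run and every game test comes back clean.

    Each callable is a hypothetical hook that returns True when the run showed
    no artifacts or performance issues, and False otherwise.
    """
    # Step 1: loop the 3DMark Vantage "Extreme" test (three times in the text).
    for _ in range(vantage_loops):
        if not run_vantage_extreme():
            return False
    # Step 2: real-world gameplay in multiple titles from the test suite.
    return all(test() for test in game_tests)


# Usage with stand-in hooks; a real session would report what was observed.
clean_run = lambda: True
glitchy_game = lambda: False
print(is_stable(clean_run, [clean_run, clean_run]))     # candidate passes
print(is_stable(clean_run, [clean_run, glitchy_game]))  # candidate fails
```

The point of the ordering is simply that the synthetic stress test acts as a quick filter before the slower game testing begins.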

Overclocking XFX’s GeForce GTX 260 Black Edition

Overclocking can sometimes be a huge part of a graphics card review, but mostly if the card doesn’t happen to be pre-overclocked. In the case that it is, we always start out rather skeptical, especially with this particular card since XFX did a masterful job of applying a factory overclock that’s actually substantial… not a measly 15MHz boost like some other companies try to pull.

But, were we able to push it even further? Believe it or not, yes. The “stock” clock for XFX’s card is 666MHz Core, 1404MHz Shader and 1150MHz Memory, and after a few hours of tweaking with RivaTuner, our max stable overclock proved to be 700MHz Core, 1475MHz Shader and 1200MHz Memory… a healthy boost.
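To put those numbers in perspective, the relative gain on each clock domain can be worked out with a few lines of Python. The clock values are the ones quoted above; the `percent_gain` helper is just an illustrative name.

```python
def percent_gain(base_mhz: float, oc_mhz: float) -> float:
    """Return the relative clock increase as a percentage."""
    return (oc_mhz - base_mhz) / base_mhz * 100.0


# (XFX factory clock, our max stable overclock), both in MHz, from the text.
clocks = {
    "Core": (666, 700),
    "Shader": (1404, 1475),
    "Memory": (1150, 1200),
}

gains = {name: percent_gain(base, oc) for name, (base, oc) in clocks.items()}
for name, gain in gains.items():
    print(f"{name}: +{gain:.1f}%")
```

That works out to roughly 5% on the Core and Shader and a little over 4% on the Memory, on top of the factory overclock.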

What was a little odd, though, was that we managed to go much higher than this, especially on the Core and Shader, but there were almost zero differences in the overall scores delivered. The main change was the temperature, so it made all the sense in the world to lower all clocks to a more modest level. If you have a superb after-market cooling system, you may be able to push the card higher than what we’ve achieved and see real benefits, but it’s not likely to happen using the stock cooler.

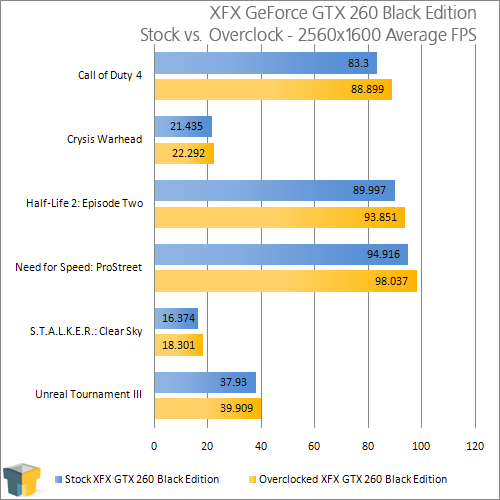

As we’d expect with such a nice boost in clocks, some real differences are seen, especially with CoD4 and NFS: ProStreet. I personally wouldn’t overclock for the sake of gaining an extra 4 FPS with a game that already scores well over 80 FPS, but if you would, then all the power to you.

GPU Temperatures

Regardless of whether or not you plan to overclock, reasonable system temperatures are always welcome. Not only will your machine be more reliable with cooler temps, it also won’t add any unneeded heat to the room you are in (unless it happens to be wintertime and you keep the windows open, in which case it might be a good thing).

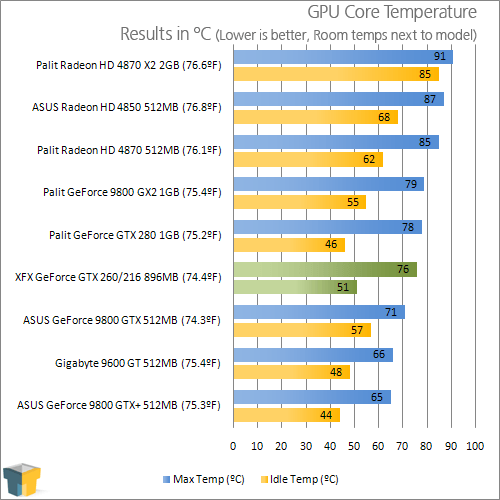

To test a GPU for idle and load temps, we do a couple of things. First, with the test system turned off for at least ten minutes, we measure the room temperature using a Type-K thermometer with a resolution of 0.1°F. The result is placed beside the GPU’s name in the graph. Since we don’t test in a temperature-controlled environment, the room temp can vary by a few degrees, which is why we include the information here.

Once the room temp is captured, the test system is booted up and left idle for ten minutes, at which point GPU-Z is loaded up to grab the current GPU Core temperature. Then, a full run of 3DMark Vantage is performed to help warm the card up, followed by another run of the same benchmark using the Extreme mode (1920×1200). Once the test is completed, we refer to the GPU-Z log file to find the maximum temperature hit. Please note that this is not an average. Even if the highest point was only hit once, it’s what we keep as a result.
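Pulling the peak value out of a sensor log is easy to script. The sketch below assumes the log is comma-separated text with a header row and a column whose name starts with "GPU Temperature"; that layout and column name are assumptions, so check them against your own log file. The sample rows are invented purely to show the function working and are not readings from this review.

```python
import csv
import io


def max_temperature(log_text: str, column_prefix: str = "GPU Temperature") -> float:
    """Return the single highest reading in the temperature column (not an average)."""
    reader = csv.reader(io.StringIO(log_text))
    header = [cell.strip() for cell in next(reader)]
    # Locate the temperature column by name prefix (assumed column name).
    index = next(
        (i for i, name in enumerate(header) if name.startswith(column_prefix)), None
    )
    if index is None:
        raise ValueError(f"no column starting with {column_prefix!r}")
    temps = []
    for row in reader:
        if len(row) <= index:
            continue  # skip short or blank lines
        try:
            temps.append(float(row[index].strip()))
        except ValueError:
            continue  # skip cells that are not numeric
    if not temps:
        raise ValueError("no temperature readings found")
    return max(temps)


# Invented sample data with placeholder timestamps, for illustration only.
sample_log = (
    "Date , GPU Core Clock [MHz] , GPU Temperature [°C] ,\n"
    "t0 , 300.0 , 48.0 ,\n"
    "t1 , 666.0 , 71.0 ,\n"
    "t2 , 666.0 , 69.0 ,\n"
)
print(max_temperature(sample_log))
```

Taking the maximum instead of the mean matches the approach described above: a peak that was hit only once still counts as the result.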

Compared directly to the GTX 280, XFX’s card ran a little warmer while sitting idle, but oddly enough, it proved 2°C cooler at full load. I was a little surprised at this, given the GTX 260/216 is running much higher clocks, but the proof is in the pudding.
