A Look At AMD’s Radeon RX Vega 64 Workstation & Compute Performance

After months and months of anticipation, AMD’s RX Vega series has arrived. The first model out-of-the-gate is the RX Vega 64, going up against the GTX 1080 in gaming. In lieu of a look at gaming to start our Vega coverage, we decided to go the workstation route – and we’re glad we did. Prepare yourself to be decently surprised.

Page 7 – Power, Temperatures & Final Thoughts

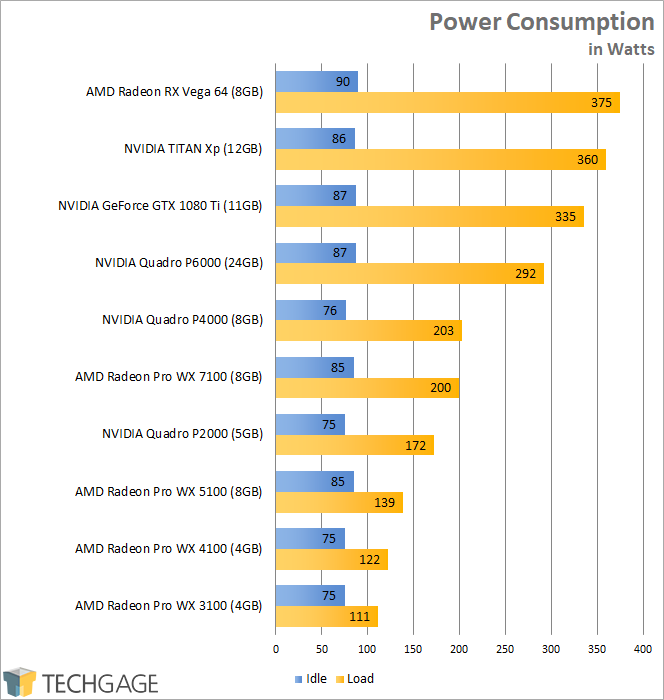

To test workstation GPUs for both their power consumption and temperature at load, I utilize a couple of different tools. On the hardware side, I use a trusty Kill-a-Watt power monitor which our GPU test machine plugs into directly. For software, I use LuxMark to stress the card for a wattage reading, and then start Unigine Superposition to stress the card in a gaming scenario to gauge the worst-case with temperatures (recorded with GPU-Z).

To test, the area around the chassis is checked with a temperature gun, with the average temp recorded. Once that’s established, the PC is turned on and left to sit idle for a few minutes. At this point, GPU-Z is run along with LuxMark. I immediately choose the Hotel render after start, and then OpenCL GPU rendering. Peak power draw is monitored, and then Superposition is kicked off to push the card as hard as it can for temperature’s sake.

Unfortunately, the current version of GPU-Z doesn’t record the temperatures of RX Vega, and I couldn’t find any quick replacements. Still, I don’t think it will be hard to imagine that it would find itself on the top of the chart, based on what I’ve seen from this reference cooler.

The one graph I am able to provide backs up our assumptions that RX Vega 64 is a market leader in power consumption – but not in a good way. I will note, however, that AMD provides different power profiles in the Radeon software that will help you drop overall wattage at the expense of a little performance. I did not have a chance to explore this too heavily in time for this article, but I did conduct this quick test:

- Default: 412W @ Load; 3DMark 4K score of 5171

- Power Save: 335W @ Load; 3DMark 4K score of 4916

Based on this one test, it would seem that using the power save profile would be wise – at least, if you believe shaving 77W from your total power draw is more important than +5% performance. AMD ships Vega with multiple power profiles, so you can spend some time eking as much performance out of your card as possible. I’ll do more testing on this as the week goes on, and report on my findings as soon as I can.
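For anyone who wants to redo that arithmetic with their own readings, here is a minimal sketch in Python. It uses only the two data points listed above; the names (`ProfileResult`, `compare_profiles`, `points_per_watt`) are made up for illustration, and the wattages are assumed to be total system draw at the wall, not the GPU alone.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProfileResult:
    """One power-profile test run (hypothetical container for illustration)."""
    name: str
    load_watts: float  # total system draw at the wall, under load
    score_4k: int      # 3DMark 4K score


# The two readings from the quick test above.
DEFAULT = ProfileResult("Default", load_watts=412, score_4k=5171)
POWER_SAVE = ProfileResult("Power Save", load_watts=335, score_4k=4916)


def compare_profiles(faster: ProfileResult, leaner: ProfileResult) -> dict:
    """Return what the faster profile costs and gains relative to the leaner one."""
    return {
        "extra_watts": faster.load_watts - leaner.load_watts,
        "extra_power_pct": (faster.load_watts / leaner.load_watts - 1) * 100,
        "extra_perf_pct": (faster.score_4k / leaner.score_4k - 1) * 100,
    }


def points_per_watt(result: ProfileResult) -> float:
    """3DMark points per watt of total system draw (a rough efficiency figure)."""
    return result.score_4k / result.load_watts


if __name__ == "__main__":
    delta = compare_profiles(DEFAULT, POWER_SAVE)
    print(f"Default draws {delta['extra_watts']:.0f}W more "
          f"({delta['extra_power_pct']:.1f}% more power) "
          f"for {delta['extra_perf_pct']:.1f}% more performance.")
    for run in (DEFAULT, POWER_SAVE):
        print(f"{run.name}: {points_per_watt(run):.2f} points per watt")
```

Because the wattage covers the whole PC, the points-per-watt figure understates how much the GPU's own efficiency changes between profiles. Treat it as a rough comparison from a single test, not a definitive efficiency measurement.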

Final Thoughts

When I decided to defy all logic and make my debut look at AMD’s RX Vega one that treats it like a Radeon Pro card, I started to feel regret as I put more time into testing, because I just wasn’t seeing much worth reporting on. In fact, I almost decided to write a much more succinct article mostly looking at the areas where RX Vega shines.

I can honestly tell you that when I decided to suck it up and follow through with testing the entire workstation suite on RX Vega, I didn’t expect it to perform so well in so many different areas. In talking to site friends, all of whom have their looks at RX Vega gaming today, Vega 64 is going to slot under GTX 1080, matching it in some cases, but never besting it. I began to feel like a look at a non-workstation card in workstation scenarios where the card would fall behind its competition would be pointless. At least up until the point when I compiled all of the results, and began to see impressive performance all over the place.

While it didn’t dominate the ProRender benchmark as much as I expected it to, the RX Vega 64 reigned supreme in LuxMark, matching the Quadro P6000 in the Hotel Lobby render, and storming past it in the Neumann and LuxBall renders. In video encoding, RX Vega 64 delivered performance close to on par with NVIDIA’s, and performance that far exceeded that of AMD’s WX Polaris line, including the WX 7100.

In crypto, the RX Vega 64 simply killed it, beating out every single GPU outside of the TITAN Xp in SHA2-256 Hashing (and even then, it was pretty damn close). In CATIA and SolidWorks, AMD’s latest top-end card managed to beat out NVIDIA’s GeForce GTX 1080 Ti. And, last but not least, it delivered market-leading performance in science and finance, while gaining a major advantage in double-precision performance (about 2x NVIDIA).

All in all, this is extremely impressive. If you’re a workstation user wanting a GPU that will give you good performance in both workstation and gaming workloads, the RX Vega 64 is, surprisingly enough, a pretty attractive choice…

…but that all said, there are some (maybe obvious) caveats that work against the RX Vega 64.

To reiterate what I said on the first page, the cooler design of the RX Vega 64 we were given doesn’t do any favors to its performance. I couldn’t record temperatures due to lacking monitoring software, but given how loud the fan could get in gaming, it struck me as pretty obvious that some throttling had to have been occurring. In the power section above, I mentioned that AMD includes multiple power profiles with Vega, and in my opinion, one that’s not the default should be.

In rendering, total PC power draw hit 375W with RX Vega 64, and in gaming, that rose to 412W. At its default profile, the card delivers a 3DMark score about 5% better than the power save profile will dish out, but the power save profile shaves 77W off of the power draw. +77W for +5% performance is ridiculous; multiply that +77W by every single Vega gamer out there who will simply use their GPU at its stock configuration. I think it’s great that AMD is giving people a choice, but I really think its default choice should be different.

I plan to delve more into how Vega handles its different power profiles later in the week. Following this review will be a full look at the gaming performance of the card, which will then be followed by a look at the “Best Playable” settings for our current arsenal of games at 4K and 3440×1440.

Ultimately, for gaming, RX Vega 64 sits near GTX 1080, but NVIDIA’s card comes out ahead overall. That’s really saying something considering the GTX 1080 came out 15 months ago. In compute, however, which has been the overall focus of this article, RX Vega 64 struck back, surpassing even the GTX 1080 Ti (and sometimes TITAN Xp) in select tests. That aspect of RX Vega is downright impressive. It seems very likely to me that sooner rather than later, mining performance will also see a boost on these cards, because the compute advantage is there, just waiting to be exploited.

I won’t have my final conclusions on the gaming aspect of RX Vega for another day at least, but you can read my initial opinions to satiate your appetite in the meantime. For compute workloads? If you use any one of the tools that RX Vega excels at running, you can definitely reap some nice rewards with this GPU. I’d just recommend holding out for vendor cards with improved coolers.

You can check out Amazon for prices, but be warned that the prices will be a bit silly to begin with, and it’s likely best to wait for AIB cards with better coolers to hit the market first. Even the Vega FE cards are a little pricey right now.

Pros

- Cryptographic, scientific, and financial performance is top-rate.

- Solid video encode performance, beating the heck out of WX’s Polaris cards, and about matching NVIDIA’s top-flight offerings.

- CATIA and SolidWorks performance bested the GTX 1080 Ti. V-Ray performance almost matches Quadro P6000.

- Offers good double-precision and unparalleled half-precision performance for a gaming GPU.

Cons

- Default profile draws a lot of excess power for very little performance gain.

- Reference card runs too hot, and too loud. Compute tasks are not as brutal as gaming.

- HBM2 feels like it adds nothing but cost on Vega.
