Blender 2.80 Viewport & Rendering Performance

With Blender 2.80 now launched, we’re taking a fresh look at performance across current hardware, including AMD’s latest Ryzen 3000-series CPUs and Navi GPUs, as well as NVIDIA’s SUPER cards. That includes both CPU and GPU testing with the Cycles renderer, GPU testing with Eevee, and viewport performance with LookDev.

Page 2 – Blender 2.80 Viewport Performance

Good rendering performance is imperative in an efficient workflow, but equally (or more so) important is viewport performance – you know, the window where all of your work is done. If performance there is lacking, you’re going to get an actual headache. You don’t necessarily need 60 FPS, mind you, but you definitely want to avoid jerky movement.

For the default Solid and Wireframe modes, you generally won’t need to worry about whether you’re going to get suitable performance. If you’re building a PC today, you’re undoubtedly going to get quality performance with those modes at 1080p at the very least (most often you will be able to do more). It’s with Eevee’s new LookDev mode that GPUs start to get nervous.

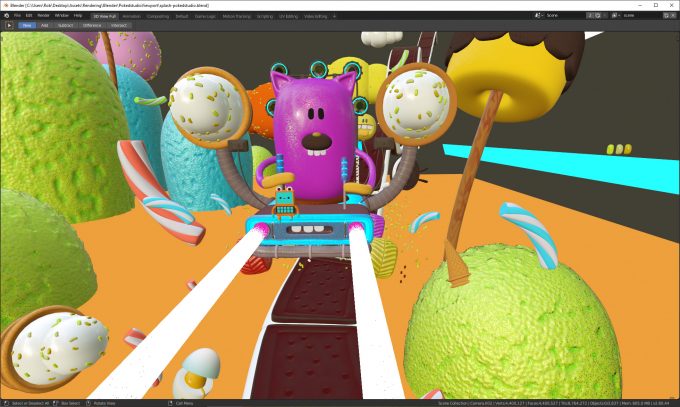

LookDev loads a scene’s assets and enhances the lighting effects to deliver a result that’s much closer to a final render than what you will see in the Solid mode. You can see an example of LookDev in action here:

This scene might not look too demanding, but for LookDev viewport tests, it’s actually very grueling. We’ve tested many free scenes from around the web, and ultimately found that this one provided by Blender (authored by Pokedstudio) offers a realistic stress test that doesn’t go into overkill territory (one scene we tested hit single-digit frame rates on top-end hardware, so it was a bit too aggressive).

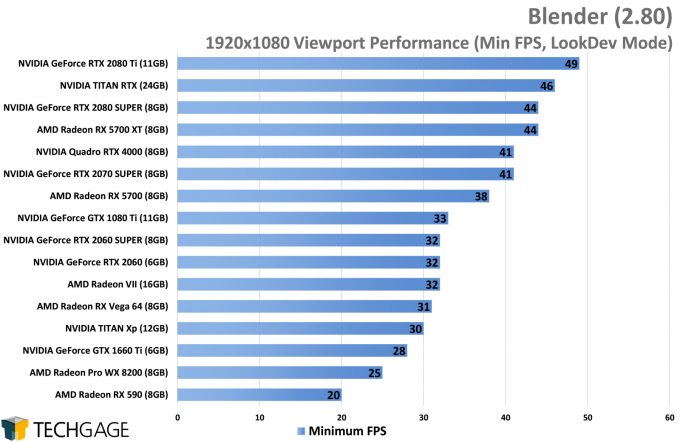

At 1080p, we have a number of GPUs that perform quite well. The RX 5700 XT didn’t particularly grab our attention in the render tests, but it performs very well here, keeping up with some of the best of them. NVIDIA’s Turing-based GPUs seem to have the ultimate advantage, but it’s not bad for AMD that it takes a $500 GeForce to beat out its $400 5700 XT.

To be clear, LookDev doesn’t need to run as fast as a game should. 30 FPS here is completely suitable. You don’t need extreme fluidity; you just need it to not give you a headache, and be “good enough”. If 30 FPS is good enough for Destiny 2 on console, it’s probably enough for your design work. Of course, if you can afford better, you wouldn’t regret it.

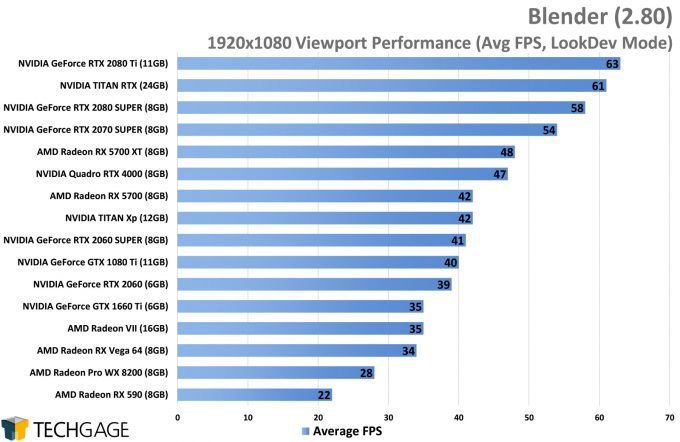

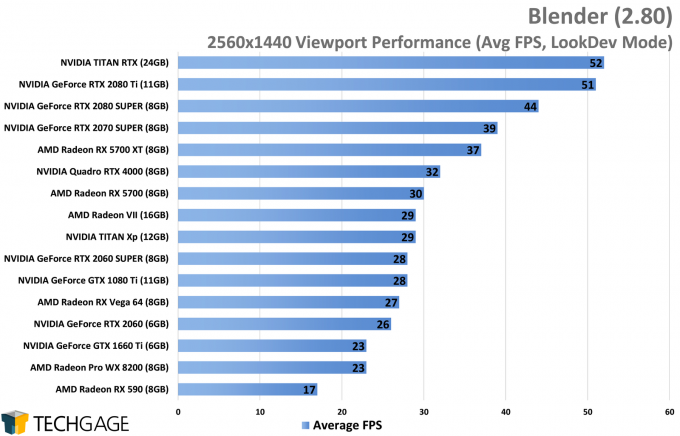

How do things change with a slightly higher 1440p resolution?

With 1440p, the pain increases to the point where some previously acceptable cards should almost be ignored. Granted, this is a rather hardcore scene (in terms of complexity) we’re using for our viewport tests, so your projects may not prove to be quite as grueling on hardware. But, when the going gets tough, there is definitely some separation between the top and bottom SKUs going on.

NVIDIA’s Turing architecture once again leaps to the top of these charts. The RX 5700 XT also performs quite well, but NVIDIA has a huge advantage with its higher-end parts. Still – the 5700 XT even manages to beat out the Radeon VII here, something that carries over to our 4K test. And speaking of:

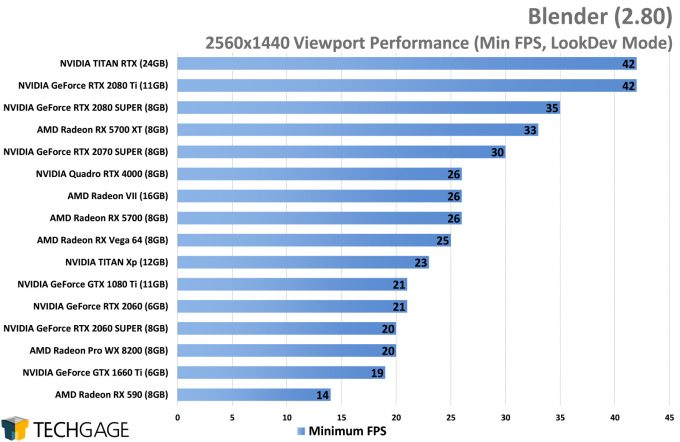

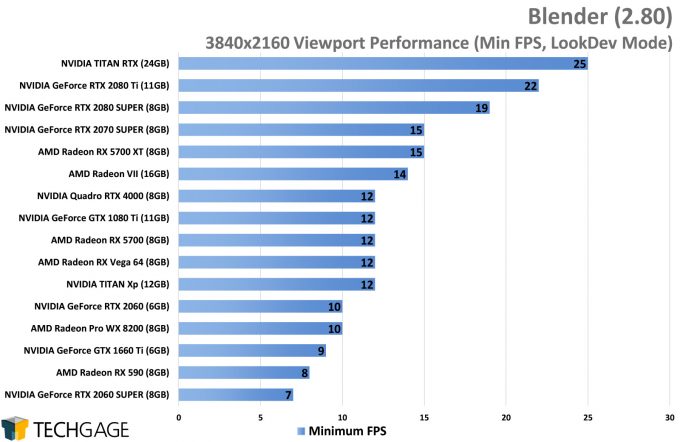

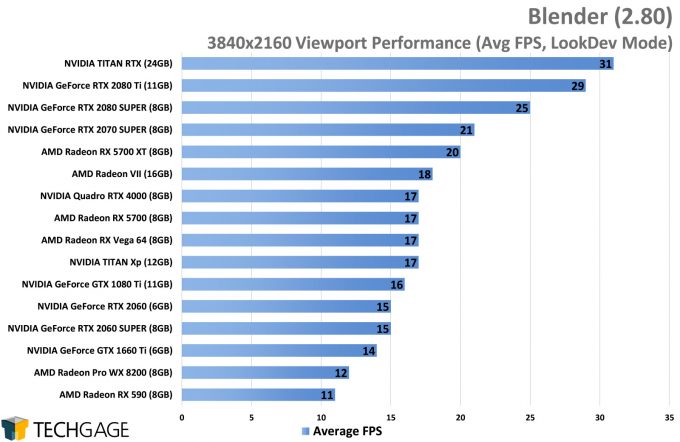

4K resolution might be common nowadays, but for Blender / LookDev work, you are going to need some serious GPU horsepower to enjoy working with complex scenes. Even the top-end TITAN RTX struggles to get much past 30 FPS. That’s still suitable enough, but lower means you will be using LookDev on occasion rather than regularly.

To be clear about one thing, though: all of this testing represents a full-screen viewport. If your viewport only takes up a portion of your overall UI, then its resolution is the effective resolution. So, even if you run a 4K display, a viewport scaled much smaller will perform better than these 4K charts suggest. The smaller the viewport window, the better the performance, effectively. It’s not rocket science, but it’s important to note.
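To put rough numbers on that effective-resolution point, here is a minimal Python sketch that compares how many pixels a partial viewport has to draw against a full-screen 1080p baseline. The function names are our own for illustration, and the idea that GPU load tracks pixel count is a simplifying assumption, not something we measured; real scaling will vary by scene and GPU.

```python
# Full-screen resolutions used in the charts (width, height in pixels).
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def pixel_count(width, height):
    """Total number of pixels in a width x height area."""
    return width * height


def viewport_pixels(screen, width_fraction, height_fraction):
    """Pixels drawn by a viewport covering a fraction of the given screen.

    Hypothetical helper: the fractions describe how much of the display's
    width and height the 3D viewport occupies in the UI layout.
    """
    screen_w, screen_h = RESOLUTIONS[screen]
    return pixel_count(round(screen_w * width_fraction),
                       round(screen_h * height_fraction))


def relative_load(pixels, baseline="1080p"):
    """Pixel count relative to a full-screen baseline resolution.

    Assumption: treats load as proportional to pixels, which is only a
    first-order estimate.
    """
    return pixels / pixel_count(*RESOLUTIONS[baseline])


if __name__ == "__main__":
    for name, (w, h) in RESOLUTIONS.items():
        print(f"{name} full-screen: {relative_load(pixel_count(w, h)):.2f}x the pixels of 1080p")

    # A viewport spanning half the width and half the height of a 4K display.
    quarter_4k = viewport_pixels("4K", 0.5, 0.5)
    print(f"4K, half-width/half-height viewport: {relative_load(quarter_4k):.2f}x the pixels of 1080p")
```

By this estimate, a viewport covering half the width and half the height of a 4K display draws the same number of pixels as a full-screen 1080p viewport, so the 1080p charts are the closer reference for that layout.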

Wrapping Up

With all of the charts found in this article, we hope that we’ve helped you figure out what hardware you should be looking at going forward. Your choices will largely depend on your budget constraints, but fortunately, getting good performance out of Blender 2.80 won’t have to be expensive. We saw modest CPUs and GPUs perform spectacularly well.

NVIDIA’s new Turing architecture seems to bring optimizations with it, while AMD’s RX 5700 XT performed well, too. On the CPU side, the more cores you have, the faster either straight CPU or hybrid rendering will be – though you will definitely reach a point of diminishing returns before long with top-end GPUs.

A couple of weeks ago, Blender and NVIDIA jointly announced that RTX ray tracing and denoising support would be coming to Blender. Currently, this is more of an alpha than a beta, requiring some hands-on work to get it up and running. Once things stabilize a bit, or we catch a whiff that it’s nearing beta testing or the stable branch, we’ll dive in and generate some performance results.

Another thing to note is that we didn’t conduct multi-GPU testing in this article, but our previous testing with dual TITAN Xps showed a great uplift. If you have multiple GPUs from the same vendor (AMD or NVIDIA), you should consider keeping the older one in your machine after an upgrade to see if it improves performance further. You don’t need identical GPUs to take advantage of multi-GPU rendering, but the benefit will be greatest with matching hardware. Similarly, if you are interested in how tile sizes impact Cycles performance, that same previous testing covers it.

If you are still left with questions, please feel free to post them below.
