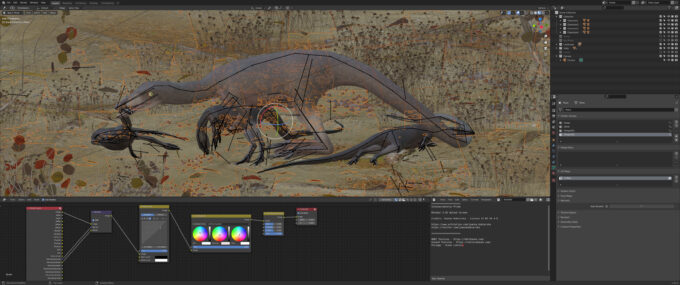

Blender 2.92 Linux & Windows Performance: Best CPUs & Graphics Cards

Blender’s latest version, 2.92, has just been released, and as usual, we’re going to dig into its performance and see which CPUs and GPUs reign supreme. For something a bit different this go-around, we’re adding Linux results to our rendering and viewport tests, and not surprisingly, the results are interesting!

Page 1 – Blender 2.92 Rendering Tests: CPU, GPU, Hybrid & NVIDIA OptiX

Get the latest GPU rendering benchmark results in our more up-to-date Blender 3.6 performance article.

It’s time once again to take an in-depth look at CPU and GPU performance in Blender, something we’ve been doing more frequently lately as major Blender releases have accelerated. While a new version doesn’t always mean that we’ll see substantially different results from the previous one, things do change over time, so it’s good to retest.

You can read about Blender’s 2.92 features in our recent news post, where you can click through to an official video that will give you an even fuller run-down. While there are plenty of new features and improvements in 2.92, Geometry Nodes is easily one of the coolest. Be sure to check out some of the official Geometry Nodes demo files to experience it quickly. Likewise, you can grab the latest version of Blender in its many forms on the official downloads page. Lastly, if you want to check out Radeon ProRender performance in Blender, you can refer to our recent article investigating it.

When a new major version of Blender releases, we typically retest all of our hardware in Windows, and only Windows. After hearing your requests loud and clear, we’re also covering Linux performance in this article. Given the amount of time that it takes to test both OSes, we can’t promise that we’ll do this with every major release, but this certainly won’t be the last time.

This article is going to tackle rendering to the CPU, the GPU, as well as mixed rendering with CPU and GPU combined. Our initial GPU render testing showed that Windows and Linux perform virtually the same, so we opted to show only Windows for the GPU results. There are, however, notable differences in performance with regards to CPU rendering when it comes to Windows vs. Linux, so CPUs were tested on both OSes.

Our viewport tests will be found on the next page, where we will use two projects to see how our collection of graphics cards scale from one viewport mode to the next, again in both OSes.

Without further ado, let’s get to it. Here’s a quick run-down of the hardware tested for this article:

CPUs & GPUs Tested in Blender 2.92

| Processor | Cores | Clock | Price |
| --- | --- | --- | --- |
| AMD Ryzen Threadripper 3990X | 64 | 2.9 GHz | $3,990 |
| AMD Ryzen Threadripper 3970X | 32 | 3.7 GHz | $1,999 |
| AMD Ryzen Threadripper 3960X | 24 | 3.8 GHz | $1,399 |
| AMD Ryzen 9 5950X | 16 | 3.4 GHz | $799 |
| AMD Ryzen 9 5900X | 12 | 3.7 GHz | $549 |
| AMD Ryzen 7 5800X | 8 | 3.8 GHz | $449 |
| AMD Ryzen 5 5600X | 6 | 3.7 GHz | $299 |
| Intel Core i9-10980XE | 18 | 3.0 GHz | $999 |
| Intel Core i9-10900K | 10 | 3.7 GHz | $499 |
| Intel Core i5-10600K | 6 | 3.8 GHz | $269 |

| Graphics Card | Memory | Price |
| --- | --- | --- |
| AMD Radeon RX 6900 XT | 16GB | $999 |
| AMD Radeon RX 6800 XT | 16GB | $649 |
| AMD Radeon RX 6800 | 16GB | $579 |
| AMD Radeon RX 5700 XT | 8GB | $399 |
| AMD Radeon RX 5700 | 8GB | $349 |
| AMD Radeon RX 5600 XT | 6GB | $279 |
| AMD Radeon RX 5500 XT | 8GB | $199 |
| NVIDIA RTX 3090 | 24GB | $1,499 |
| NVIDIA RTX 3080 | 10GB | $699 |
| NVIDIA RTX 3070 | 8GB | $499 |
| NVIDIA RTX 3060 Ti | 8GB | $399 |
| NVIDIA RTX 3060 | 12GB | $329 |
| NVIDIA GeForce RTX 2080 Ti | 11GB | $1,199 |
| NVIDIA GeForce RTX 2080 SUPER | 8GB | $699 |
| NVIDIA GeForce RTX 2070 SUPER | 8GB | $499 |
| NVIDIA GeForce RTX 2060 SUPER | 8GB | $399 |
| NVIDIA GeForce RTX 2060 | 6GB | $349 |
| NVIDIA GeForce GTX 1660 Ti | 6GB | $279 |

| Graphics Driver | Linux | Windows |
| --- | --- | --- |
| AMD Radeon | 20.45 | 21.2.3 |
| NVIDIA GeForce | 460.39 | 461.40 |
| NVIDIA GeForce (RTX 3060 only) | 460.56 | 461.72 |

GPU test platform (Intel Core i9-10900K) used 8GBx4 DDR4-3200 memory. CPU and CPU+GPU test platforms used 16GBx4 DDR4-3200 memory. OS and chipset drivers are fully updated on each platform before testing.

All product links in this table are affiliated, and support the website.

Each system was tested in a largely stock state, aside from our memory XMP being enabled. In the event that enabling XMP likewise enables a non-reference automatic overclock (e.g. ASUS MultiCore Enhancement), we enforce default CPU clock values. In addition to the latest graphics drivers being used for testing, motherboard chipset drivers were updated as well (if an update was available).

Our Windows 10 testing was performed in the latest 20H2 release, which has been fully updated through Windows Update. For our Linux testing, we’re using Ubuntu 20.04.2, again fully updated. We also upgraded Ubuntu’s Linux kernel from 5.8 to 5.11, as certain GPUs (namely AMD’s RDNA2 series) require it to unlock their full performance potential.
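If you want to confirm that your own Linux install meets the same kernel baseline before benchmarking, a small check along these lines could help. This is only a sketch: it assumes the kernel release string starts with `major.minor` (as `uname -r` typically prints on Ubuntu), and the function names are hypothetical helpers made up for illustration, not part of any tool used for this article.

```python
import platform
import re
from typing import Tuple

# Kernel baseline mentioned above for the Linux testing (5.11).
REQUIRED_KERNEL = (5, 11)


def parse_kernel_release(release: str) -> Tuple[int, int]:
    """Extract (major, minor) from a release string such as '5.11.0-generic'."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    return int(match.group(1)), int(match.group(2))


def kernel_is_new_enough(release: str,
                         required: Tuple[int, int] = REQUIRED_KERNEL) -> bool:
    """Compare numerically, so that 5.8 correctly sorts below 5.11."""
    return parse_kernel_release(release) >= required


if __name__ == "__main__":
    # Only meaningful on Linux; other systems report unrelated release strings.
    if platform.system() == "Linux":
        release = platform.release()
        status = "ok" if kernel_is_new_enough(release) else "older than 5.11"
        print(release, status)
```

Comparing the version as a tuple of integers matters here, because a plain string or float comparison would rank 5.8 above 5.11.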

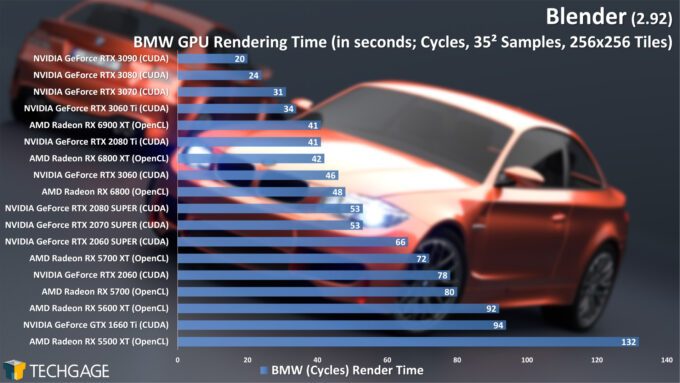

GPU: CUDA, OptiX & OpenCL Rendering

If you’ve read any one of our recent Blender in-depth performance looks, you’re likely aware that GPU rendering is probably the direction you’re going to want to go. CPUs have their place, but for the price of a huge CPU that would make a significant difference to rendering performance, you could purchase multiple mid-range GPUs and likely greatly outperform it. Well – you know, assuming you can find a GPU to buy (grumble grumble).

With a CUDA render time of 20 seconds on the beefy RTX 3090 with the BMW scene, it’s clear that we’ll have to retire this project at some point, or at least crank its sample count. More than likely, though, we’ll eventually replace all of our projects with more recent demo files for a fresh coat of paint.

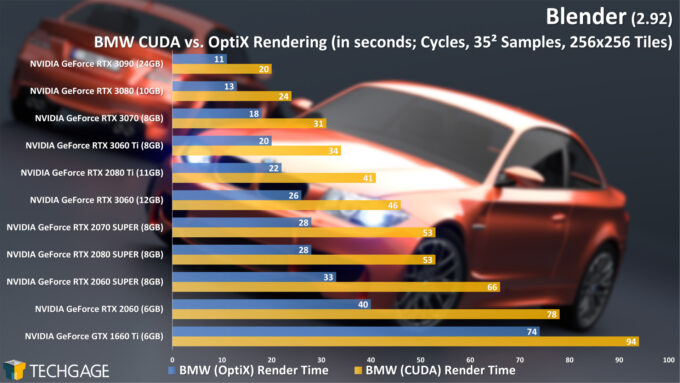

If 20 seconds makes it seem as though there’s still a fair bit of breathing room, let’s not forget the trick NVIDIA pulled out of its sleeve a couple of years ago: OptiX ray tracing acceleration. With that API enabled, and the RTX series’ ray tracing cores engaged, render times can be slashed. The RTX 3090, for example, cuts its 20 second render time down to 11 seconds:

When we take a look at the totality of NVIDIA’s current- and last-gen RTX cards, the just-released RTX 3060 sits in the middle, outperforming last-gen’s RTX 2080 SUPER. The RTX 3060 Ti with OptiX renders this BMW project in as much time as the RTX 3090 does with CUDA, so that card seems quite powerful for its (ideally uninflated) price.

A happy accident occurred during our testing, where our testing scripts thought the GTX 1660 Ti was an RTX card, and proceeded to run the OptiX tests. Since the GTX series doesn’t include RT (or Tensor) cores, that API was typically unavailable. At some point, that changed, because OptiX works on this RT-coreless GTX 1660 Ti, and actually improves performance by doing so. If you have an NVIDIA card, but one that’s not RTX-enabled, you should try out the OptiX option as well and see if performance improves. We unfortunately didn’t see improvement on our older Pascal-based GeForce GTX 1080 Ti, but it’s still worth testing out for yourself in case your results differ.

Note that as of Blender 2.92, OptiX supports ambient occlusion and bevels, so the list of what the new API doesn’t support is becoming seriously thin.
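For anyone who wants to compare CUDA and OptiX on their own card, one rough approach is to time a background render from the command line with each device. The sketch below is not our benchmarking script; it simply builds the command using Blender’s documented `-b`, `-E`, and `-f` options plus the Cycles add-on’s `--cycles-device` option (passed after `--`). The file name `scene.blend`, the helper names, and the assumption that `blender` is on your PATH are all placeholders.

```python
import subprocess
import time
from typing import List


def build_render_command(blend_file: str, device: str, frame: int = 1,
                         blender: str = "blender") -> List[str]:
    """Build a background Cycles render command for one device setting."""
    return [
        blender,
        "-b", blend_file,          # run in the background, without the UI
        "-E", "CYCLES",            # render with the Cycles engine
        "-f", str(frame),          # render a single frame
        "--",                      # arguments after this go to the add-on
        "--cycles-device", device, # e.g. CUDA, OPTIX, OPENCL, or CUDA+CPU
    ]


def time_render(blend_file: str, device: str) -> float:
    """Return wall-clock seconds for one render (includes scene load time)."""
    start = time.perf_counter()
    subprocess.run(build_render_command(blend_file, device), check=True)
    return time.perf_counter() - start


if __name__ == "__main__":
    # Print the commands rather than running them, since this is only a sketch.
    for device in ("CUDA", "OPTIX"):
        print(" ".join(build_render_command("scene.blend", device)))
```

Calling `time_render("scene.blend", "CUDA")` and then `time_render("scene.blend", "OPTIX")` would give two numbers to compare. Because the timing wraps the whole process, it includes scene loading and kernel compilation, so it is worth repeating each run and discarding the first result.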

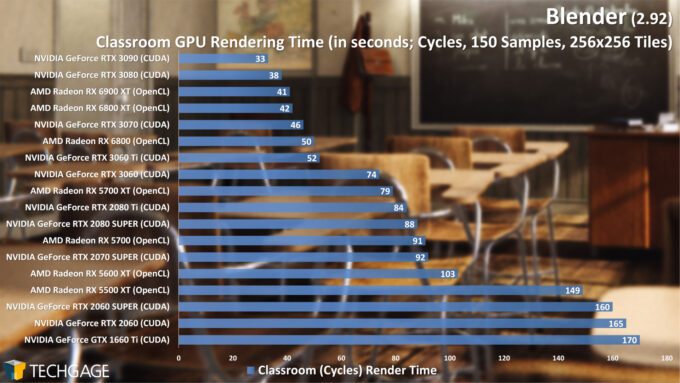

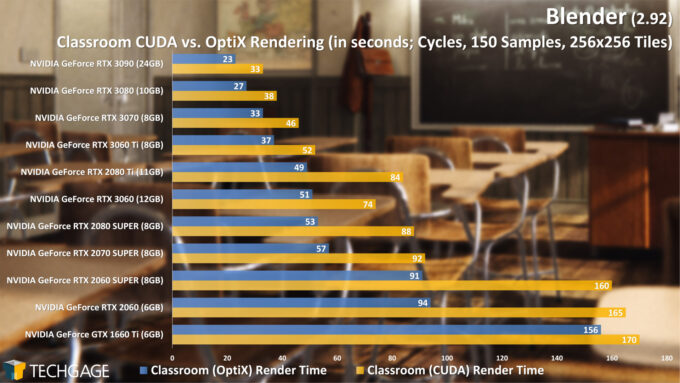

Let’s see how the Classroom project behaves:

The Classroom project has historically scaled a little differently from the BMW one, and that tradition has been upheld with 2.92. AMD’s Radeons once again perform really well in this project when compared to the BMW one, with the RX 6900 XT creeping up on the RTX 3080 – until OptiX acceleration is once again introduced, that is.

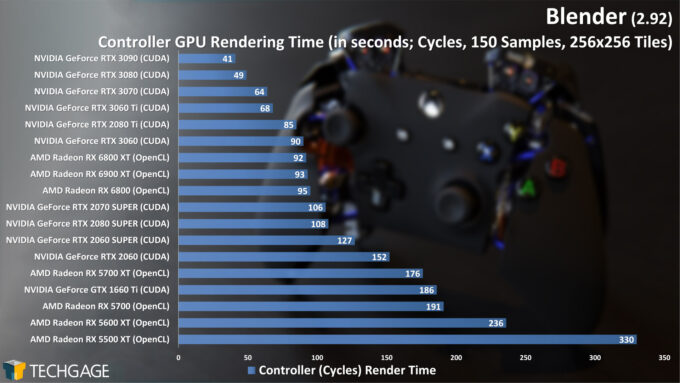

This third Cycles project, of an Xbox controller, yet again shakes things up, with NVIDIA’s new RTX 3060 performing on par with the RX 6800 XT. This project is a perfect example of one that wouldn’t have rendered in OptiX in 2.91, but now does in 2.92 thanks to the API’s improvements (we didn’t officially benchmark it, but did verify that it rendered correctly.)

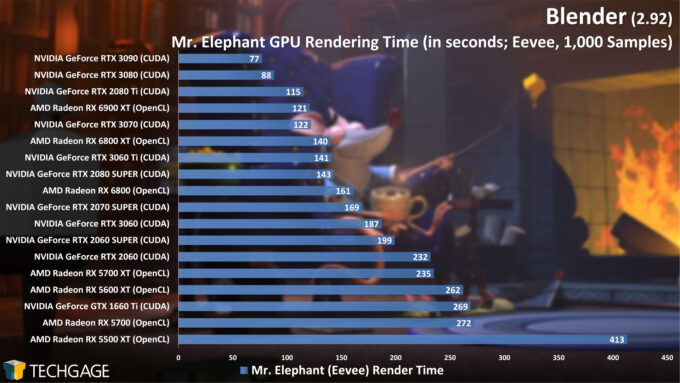

Cycles isn’t the only render engine in Blender. The new Eevee seems to be growing in popularity really quickly, which is great to see, and pretty much expected, because it’s powerful, and getting better all of the time. It’s also an engine that doesn’t take advantage of NVIDIA’s RT cores, and the result is a playing field that’s a bit more even:

One of the highlights featured on the Blender 2.92 features page is improved Cycles rendering performance, but interestingly enough, we didn’t actually see much change at all between 2.91 and 2.92. Where we did see an obvious improvement was with Eevee.

Between 2.91 and 2.92, Eevee render times improved from 111s to 77s on the RTX 3090, from 144s to 121s on the RX 6900 XT, and from 179s to 122s on the RTX 3070. What’s really impressive is how much performance has changed on lower-end hardware. The RX 5500 XT’s render time dropped from 646s to 413s, while the RTX 2060 SUPER dropped from 269s to 199s. It’s really great to see this kind of improvement from one Blender version to the next, so the developers who made it happen deserve some kudos!
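To put those Eevee numbers on a common scale, the short calculation below converts each pair of render times into a percentage reduction and a speedup factor. The times are the ones quoted above; the dictionary and function names are just illustrative.

```python
# Eevee render times in seconds: (Blender 2.91, Blender 2.92), as quoted above.
EEVEE_TIMES = {
    "RTX 3090": (111, 77),
    "RX 6900 XT": (144, 121),
    "RTX 3070": (179, 122),
    "RX 5500 XT": (646, 413),
    "RTX 2060 SUPER": (269, 199),
}


def percent_reduction(old_s: float, new_s: float) -> float:
    """Share of the old render time that was saved, in percent."""
    return (old_s - new_s) / old_s * 100


def speedup(old_s: float, new_s: float) -> float:
    """How many times faster the new render is than the old one."""
    return old_s / new_s


if __name__ == "__main__":
    for gpu, (old_s, new_s) in EEVEE_TIMES.items():
        print(f"{gpu}: {percent_reduction(old_s, new_s):.1f}% less time, "
              f"{speedup(old_s, new_s):.2f}x faster")
```

Run this way, the reductions range from roughly 16% on the RX 6900 XT to roughly 36% on the RX 5500 XT, which lines up with the observation that lower-end hardware saw some of the biggest gains.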

CPU Rendering

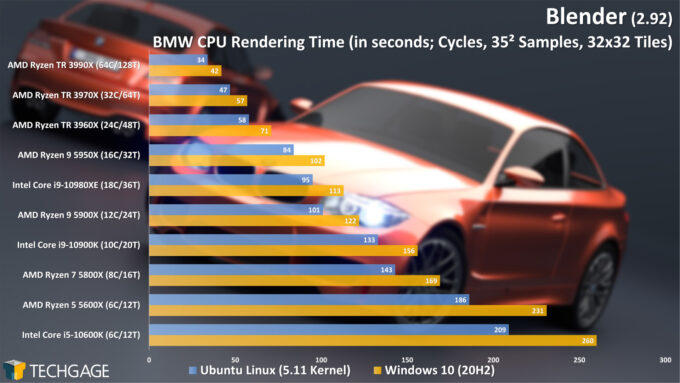

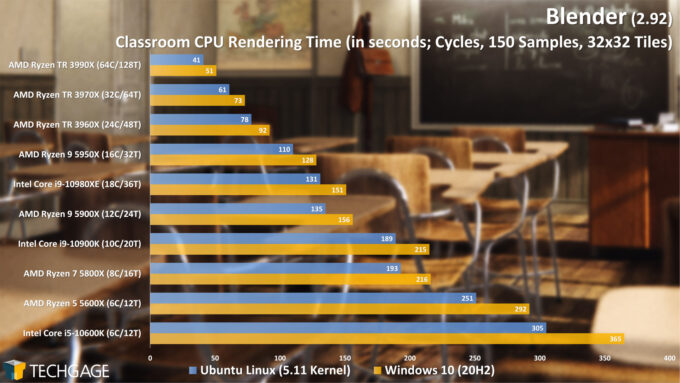

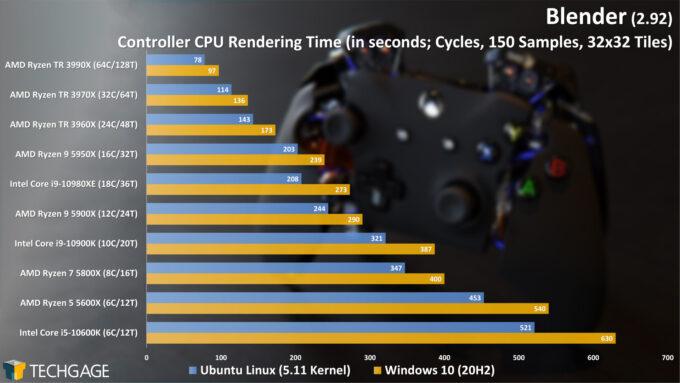

Every time we publish one of our Blender performance reports, someone inevitably asks us to run the same tests in Linux – and it’s for good reason. Throughout our many CPU performance articles, we’ve seen plenty of cases where Linux had the upper hand, and rendering is often among them.

If your workstation is blessed with a many-core CPU, you will enjoy dramatic rendering performance improvements over smaller chips. When AMD first unveiled Ryzen’s first-generation CPUs a few years ago, the company talked at length about its rendering benefits in Blender. Later, we even saw the company boast about it on its 256-thread servers. Fortunately, Cycles really does scale that well.

Not surprisingly, the 64-core Ryzen Threadripper 3990X takes the top spot, with well defined performance deltas between all of the chips. In every single case, renders done in Linux were speedier than Windows, with gains that would be appreciated by someone who owns either a low-end or top-end CPU. For most chips, the total render time is cut down by about 20%.

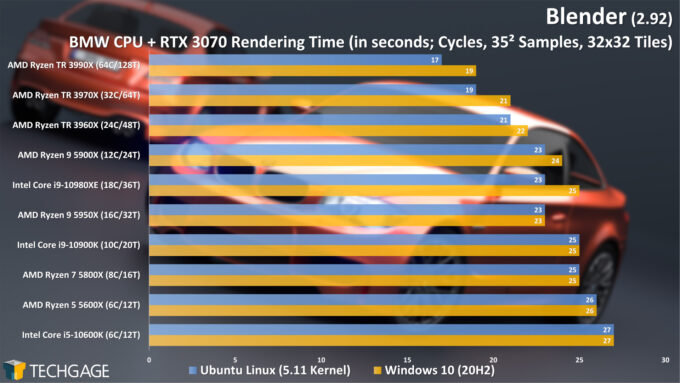

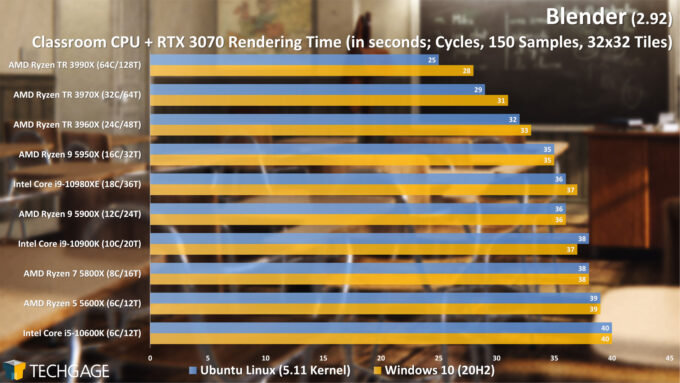

As we’ve likely conveyed already, modern GPUs are so powerful that you’re likely to find the best rendering value there. But, if you do happen to have a great CPU, it may just complement your GPU quite well. We’re going to explore that next, but do want to mention up front that a good CPU can also improve things like baking, physics, and compositing – so you probably don’t want to go too low-end, either. Another factor at play is memory, and as projects get larger, the GPU will end up using more system memory to compensate for its smaller framebuffer.

CPU + GPU Rendering

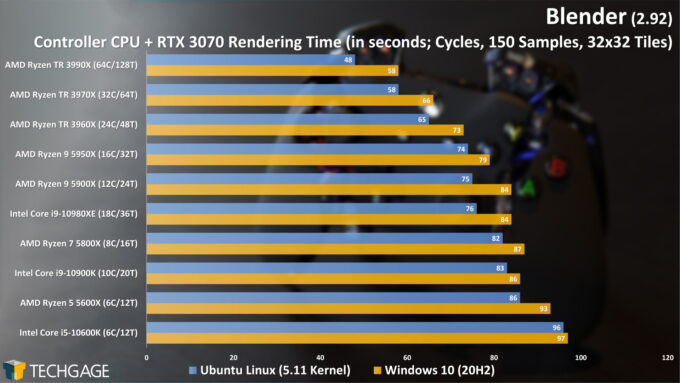

We mentioned earlier in the article that we stuck to Windows for our GPU rendering tests, as both OSes delivered virtually identical performance. Because the GPU is so powerful, the advantages on Linux when using heterogeneous rendering are not nearly as notable, but there are still some gains to be seen.

Ideally, if your workstation has a many-core (e.g. 16+ cores) CPU, it’ll be because you have other workloads that need it, or you’re a huge multi-tasker. For most Blender users, we’d sooner recommend a more modest core count for your CPU, assuming that the chip is going to be clocked higher. CPUs with higher clock speeds, better IPC (instructions-per-clock), and generally higher single-threaded performance will make the OS and software snappier, even if the differences are hard to perceive through benchmarks. This is something we’ll talk about a bit more on the next page, where we dive into viewport performance.
