
Mid-2021 GPU Rendering Performance: Arnold, Blender, KeyShot, LuxCoreRender, Octane, Radeon ProRender, Redshift & V-Ray

It’s been six months since we last took an in-depth look at GPU rendering performance, and with NVIDIA having just released two new GPUs, we felt now was a great time to get up-to-date. With AMD’s and NVIDIA’s current-gen stack in-hand, along with a few legends of the past, we’re going to investigate rendering performance in Blender, Octane, Redshift, V-Ray, and more.

Page 2 – Octane, V-Ray, Redshift, Arnold & KeyShot Performance; Power Consumption

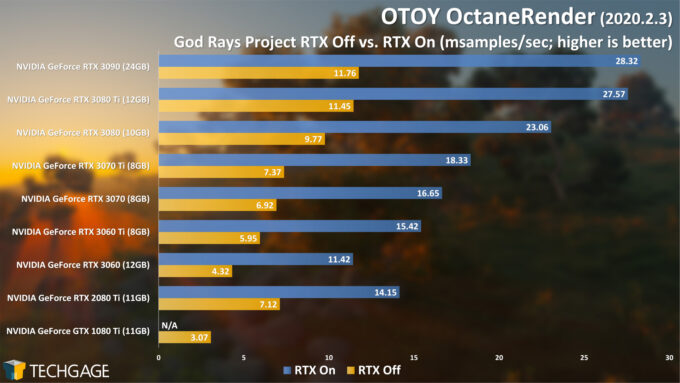

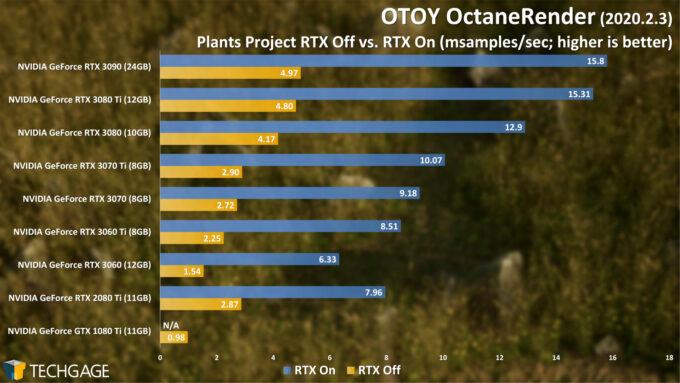

OTOY OctaneRender 2020

OctaneRender was one of the first NVIDIA-focused renderers that implemented OptiX ray tracing acceleration, and the company is clearly fond of it, considering there’s a special menu option for testing RTX on and off with any scene you have loaded. Across both of these projects, it’s clear that OptiX (or “RTX”) can dramatically improve rendering performance, to an even greater degree than most of the others. We’re talking performance that’s more than doubled.

The GeForce GTX 1080 Ti is still a great GPU in its own right, but we feel that gamers would get more use out of it than creators today, because the current crop of NVIDIA cards are simply unmatched. That’s even without RT acceleration; even the RTX 3060 proves close to 50% more competent than the GTX 1080 Ti. But, turn RTX on, and that $329 (SRP) GPU turns into a screamer.
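For readers who want to work out a "percent faster" figure like the one above from their own render times, here is a minimal sketch. It assumes performance is compared by throughput (the inverse of render time); the function name and the timings are placeholders for illustration, not our measured results.

```python
def percent_faster(baseline_seconds: float, candidate_seconds: float) -> float:
    """Return how much faster the candidate renders than the baseline.

    The result is a percentage of throughput: a candidate that finishes
    the same scene in half the time is reported as 100% faster.
    """
    if baseline_seconds <= 0 or candidate_seconds <= 0:
        raise ValueError("render times must be positive")
    return (baseline_seconds / candidate_seconds - 1.0) * 100.0


# Placeholder timings (seconds) for the same scene on two GPUs.
baseline_s = 150.0   # hypothetical older card
candidate_s = 100.0  # hypothetical newer card

print(f"{percent_faster(baseline_s, candidate_s):.0f}% faster")
```

Note that "50% faster" by this definition means the render takes about two-thirds of the time, not half of it.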

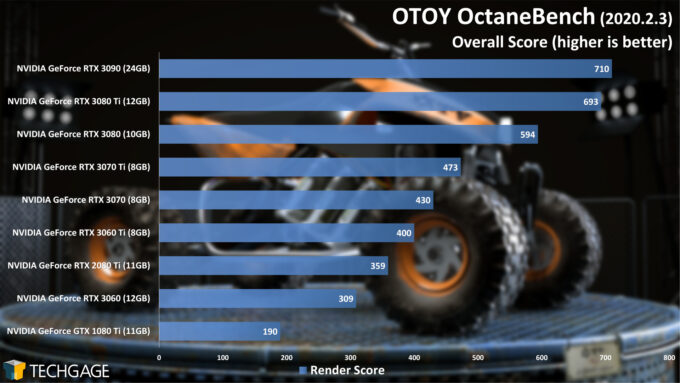

Fortunately, the standalone OctaneBench benchmark backs up our real-world tests pretty well:

As NVIDIA’s Jensen Huang loves to say, the “more you buy, the more you save”. In Octane’s case, the GPU you choose really does make an incredible difference to how many frames you can render in a given amount of time. Naturally, if your budget allows, adding multiple GPUs will accelerate the rendering further. Isn’t that just what you want to hear during ongoing chip shortages?

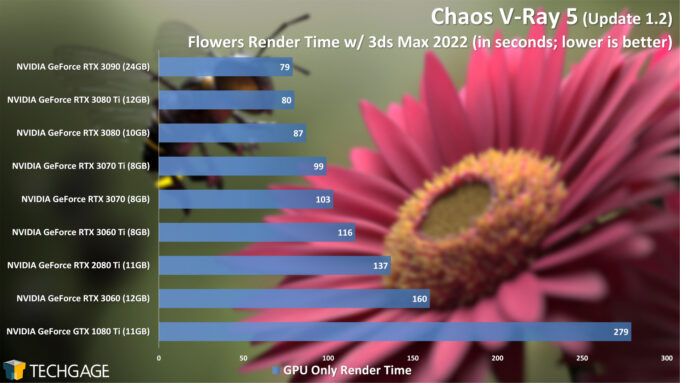

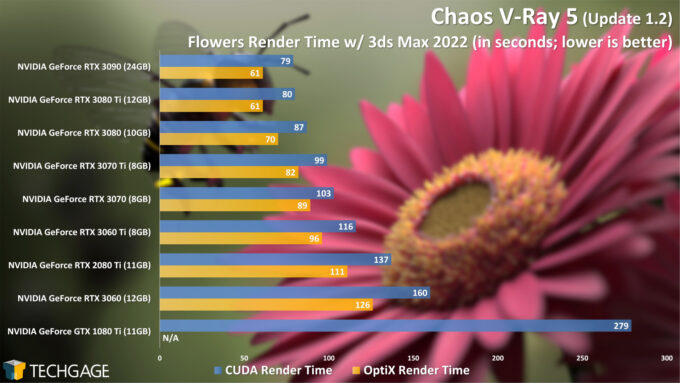

Chaos V-Ray 5

With our first V-Ray render, using CUDA exclusively, we’re seeing great scaling all around, and more proof that the GTX 1080 Ti is getting up there in age. We can also see that the 3080 Ti, despite costing hundreds less than the RTX 3090, effectively delivers the same performance. That even carries over to OptiX testing:

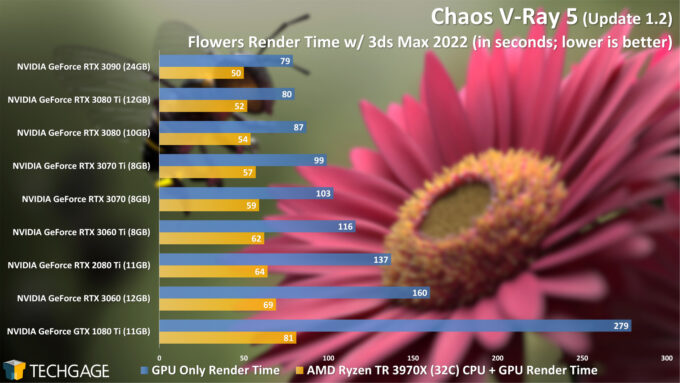

It’s interesting that the gains seen from OptiX in V-Ray are not quite as distinct as they are in a renderer like Octane. That said, you’ll definitely want to keep the RTX option enabled, since any performance improvement is going to be appreciated. Because OptiX doesn’t make quite as large a difference in V-Ray as some others, heterogeneous rendering stands to benefit even more – if you have a monster CPU:

Using a CPU as large as the Ryzen Threadripper 3970X might not be the best idea for a test like this, because it’s obviously not representative of a standard workstation choice. However, it still shows that big CPUs can in fact deliver a great benefit. With the 3970X and GTX 1080 Ti tag-teaming, we see render times on par with the RTX 3070 Ti running OptiX.

If you’re a creator, you’ll always want the latest GPU you can get your hands on, but it’s still nice to see the CPU contributing so much here, especially if you happen to have a many-core CPU in your own rig and want to better utilize it.
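As a rough way to reason about what heterogeneous rendering could buy you, the sketch below estimates a best-case combined render time by adding up each device's throughput. It assumes the work splits perfectly between devices with no scheduling or transfer overhead, which real renderers won't fully achieve; it is not a description of how V-Ray distributes work, and the timings are placeholders rather than our results.

```python
def combined_render_time(*device_seconds: float) -> float:
    """Best-case render time when several devices share one job.

    Each device contributes a rate of 1 / (its solo render time);
    the combined time is the inverse of the summed rates.
    """
    if not device_seconds:
        raise ValueError("at least one device time is required")
    if any(t <= 0 for t in device_seconds):
        raise ValueError("render times must be positive")
    total_rate = sum(1.0 / t for t in device_seconds)
    return 1.0 / total_rate


# Placeholder solo render times (seconds) for the same scene.
gpu_only_s = 120.0  # hypothetical GPU rendering alone
cpu_only_s = 240.0  # hypothetical many-core CPU rendering alone

estimate = combined_render_time(gpu_only_s, cpu_only_s)
print(f"Best-case combined time: {estimate:.1f} s")
```

If your measured CPU+GPU time lands well above the estimate, the gap is the overhead of splitting the work; the slower the CPU is relative to the GPU, the less it can contribute.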

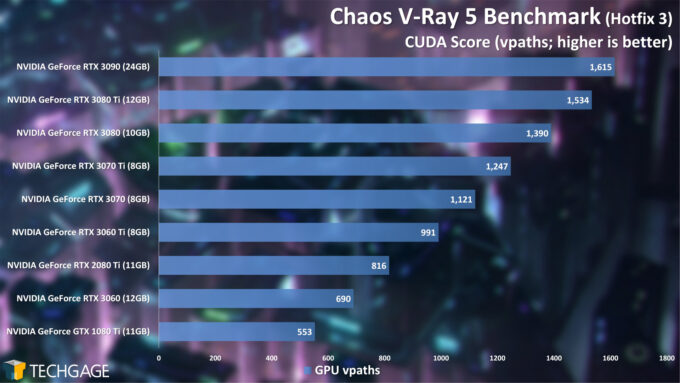

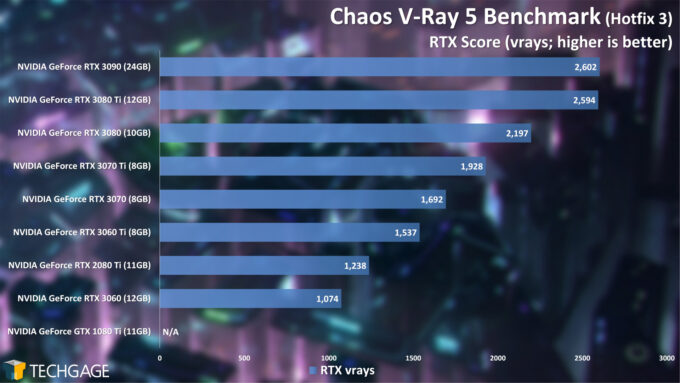

Let’s see what the standalone V-Ray benchmark has to say:

Ultimately, the V-Ray benchmark doesn’t scale identically to our real-world project, but that’s to be expected, since no two projects are generally going to scale exactly the same. Still, in some regards, the GTX 1080 Ti falls even further behind the rest in our real-world tests than it does when it’s summed up as a score with this dedicated benchmark. One thing the benchmark definitely agrees on is the fact that the RTX 3080 Ti and RTX 3090 perform effectively the same – they just have different frame buffer sizes.

Maxon Redshift 3

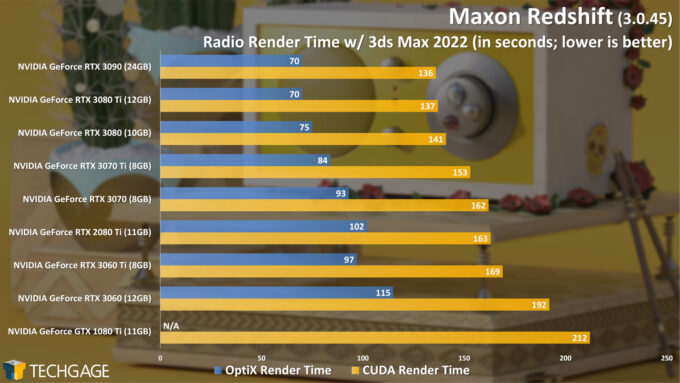

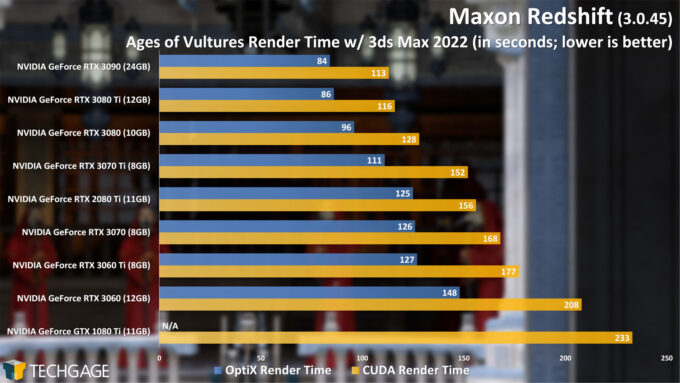

We’ve said before that two different projects are very unlikely to scale the same way in performance charts, and the two projects used here prove that in spades. With our simpler Radio project, enabling OptiX makes an enormous difference, while the gain in Age of Vultures is milder. If you’re a Redshift user stuck with a GTX 1080 Ti, you should feel happy about the performance you’re getting, although it will still be hard to ignore the newer GPUs that support RT acceleration.

There are a couple of specific results that stand out to us here. In the Radio project, the RTX 2080 Ti somehow falls behind the RTX 3060 when using OptiX, but the roles reverse with the Age of Vultures scene. Further, enabling OptiX almost mimics a GPU upgrade. The RTX 3060, with OptiX enabled, closely matches the performance of the RTX 3070 Ti using CUDA.

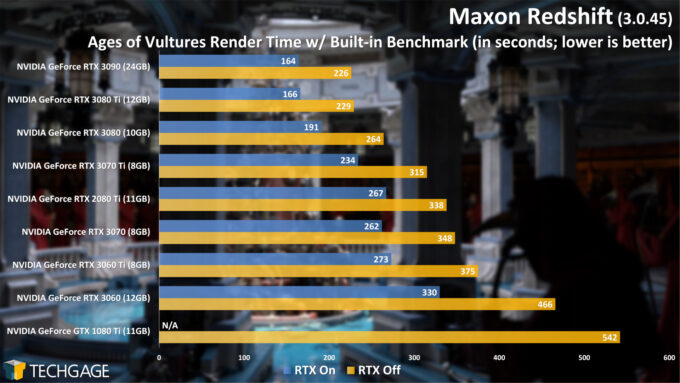

Here’s the take from the standalone benchmark, using the same 3.0.45 version:

Considering this benchmark uses the same scene as one of our real-world tests, the fact that it seems to scale identically isn’t too surprising, but even so, it’s nice to see.

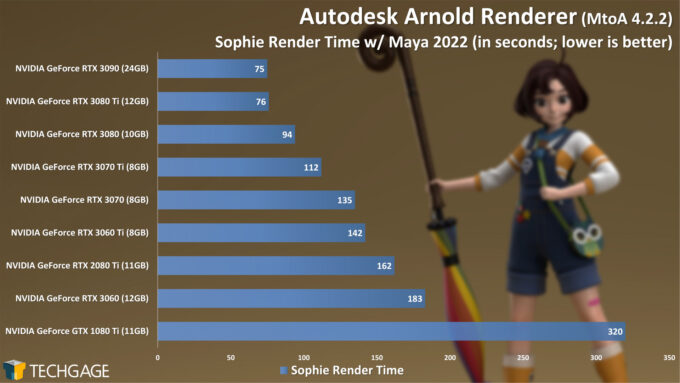

Autodesk Arnold 6

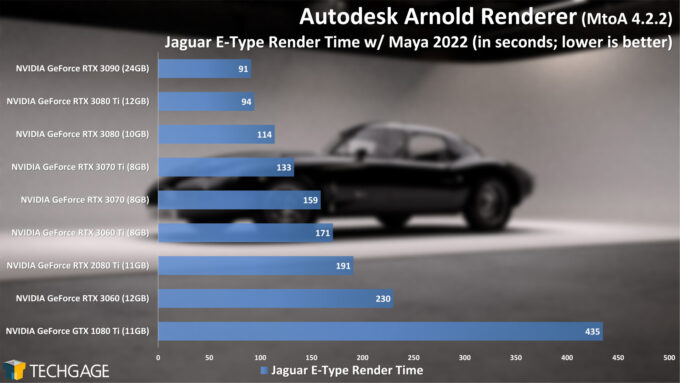

In many of our tests so far, we’ve run both CUDA and OptiX rendering to highlight the differences, so if you’ve been wondering why we don’t do that with Arnold, it’s because OptiX is the default. If you have a GPU with RT acceleration capabilities, you’ll simply see improvements to performance automatically.

We’re given yet another clear-as-day example of how the GTX 1080 Ti isn’t the rendering powerhouse it once was, thanks in large part to lacking RT acceleration. That acceleration allows a low-end current-gen GPU, like the RTX 3060, to leap far ahead of the Pascal-based top-end GTX 1080 Ti. Funnily enough, the RTX 3060 even manages to bring more memory to the table.

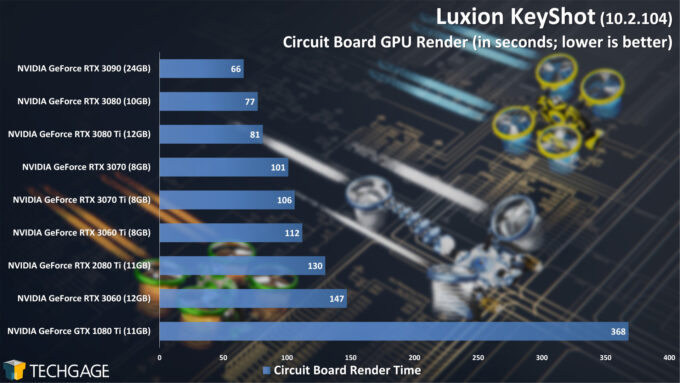

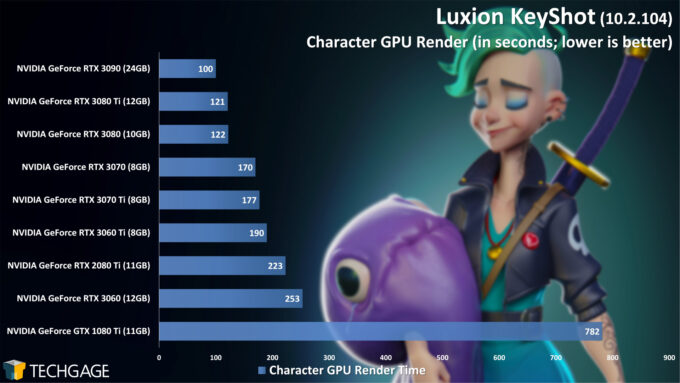

Luxion KeyShot 10

Like Arnold, KeyShot will automatically take advantage of RT acceleration if a GPU offers it, and also like Arnold, KeyShot highlights that it’s time to upgrade your GTX 1080 Ti.

There are a couple of odd results in both of the graphs above worth highlighting. In both projects, the RTX 3070 Ti fell behind the RTX 3070, which is yet another thing that forced us to reinstall both GPUs in order to sanity check (surprise: the results held). We can’t explain why these anomalies happen, but we’re well over being surprised when they creep up. Similarly, with the Circuit Board scene, the RTX 3080 Ti somehow fell behind the RTX 3080 (yes, we retested that, too).

It could be that future KeyShot versions will fix those flip-flopped results, but as it stands today, we’d suggest the RTX 3070 over the 3070 Ti if KeyShot is your primary design tool, since it does prove faster at this point in time. If the 3070 Ti had a bigger frame buffer, it’d be a no-brainer. But no one wants to pay more for less performance in their tool of choice. Last summer, we saw the same thing with the RTX 2070 SUPER performing as well as (or better than) the RTX 2080 SUPER. Truly odd, and annoying, since it leads us to spend time retesting!

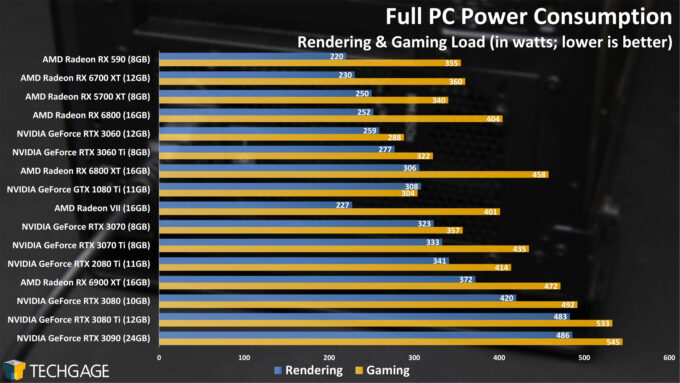

Power Consumption

To test this collection of GPUs for power and temperatures, we use both rendering and gaming workloads. On the rendering side, we run the Still Life project released alongside Blender 2.93; for gaming, we use 3DMark’s Fire Strike 4K stress-test. Both workloads are run for five minutes, with safe peak values recorded (e.g., ignoring spikes that happen for a split second because Windows decides to do something). Power is recorded with a Kill-A-Watt meter, measuring full system power draw.
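If you log power readings digitally instead of reading them off a meter, one way to approximate a "safe peak" is to only count a level that is sustained across several consecutive samples. The sketch below is an assumption about how that could be automated, not a description of our own process; the function name, window size, and sample values are all placeholders.

```python
from typing import Sequence


def sustained_peak(samples: Sequence[float], window: int = 3) -> float:
    """Return the highest power level held for `window` consecutive samples.

    For every run of `window` readings we take the minimum (the level that
    was actually sustained), then report the largest of those minimums.
    A spike shorter than `window` samples cannot raise the result.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    if len(samples) < window:
        raise ValueError("need at least `window` samples")
    return max(
        min(samples[i:i + window])
        for i in range(len(samples) - window + 1)
    )


# Placeholder full-system readings in watts, one per second.
readings = [300, 305, 302, 410, 304, 306, 303]

print(max(readings))             # raw peak, includes the one-sample spike: 410
print(sustained_peak(readings))  # spike ignored: 304
```

A larger window is stricter about what counts as sustained; with `window=1` the function simply returns the raw maximum.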

The reason we power test both rendering and gaming workloads is probably made obvious by the big deltas seen above. In every single case (aside from the GTX 1080 Ti), gaming draws far more power than rendering. With either metric, NVIDIA’s top-end RTX 3080 Ti and RTX 3090 prove to be the power-hungriest in our lineup, but they also happen to deliver the best performance, so it’s hard to complain.

While its performance makes the Radeon VII not as relevant today as it was at launch, we feel compelled to point out that we have no idea why its power consumption is so modest in comparison to the rest of the GPUs here. We’ve seen this same behavior in previous looks, where rendering uses far less power than gaming, although the delta between them is far more pronounced today. We ran the same GPU on the Classroom project as a sanity check, and it does use more power there, but still not as much as we’d expect (260W) for a top-end Vega. Either way, it’s just too bad that GPU doesn’t offer outstanding rendering performance given the modest power it pulls down.

Final Thoughts

By now, you should have a good idea about which GPU you’d like to go with in your new build, or upgrade to in your current build. Because of the ongoing chip shortages, and cryptocurrency miners hogging a huge chunk of the models that make it into people’s hands, it’s really hard to guarantee that you’ll even be able to find the exact model you want. The situation is even worse if you’re trying for a specific model from any one of the vendors that thrive on offering 10 versions of the same GPU.

We can’t help with the chip shortage issue, but we can wish you good luck in finding the right one for your needs, sooner rather than later. If you typically go the prebuilt route, you may end up having better luck than someone trying to upgrade their current rig. You may also be price-gouged less on the GPU itself, but you will have to expect a longer-than-ideal lead time.

That all aside, like our many performance articles of the past, this one highlighted well the fact that not all workloads are alike. If you’re a KeyShot user, for example, you can’t move into Arnold or V-Ray and expect the exact same scaling. Ultimately, though, one thing is clear: it’s hard to beat NVIDIA.

Ignoring the tests that support only NVIDIA GPUs, the tested GeForce cards prove seriously strong in the tests that AMD’s Radeons also support. That even includes AMD’s own Radeon ProRender. For Blender, weaker viewport performance on Radeon makes going with NVIDIA a no-brainer, because this is hardly the first major Blender release where we’ve seen poor Radeon performance. Add OptiX ray tracing acceleration to the mix, or even the still-better GeForce performance in EEVEE, and it’s hard to look away from NVIDIA.

It’s our hope that AMD’s RDNA3 (or whatever it’s called) GPUs bring some hefty surprises, and not only keep up with the competition better, but also force certain renderer companies to begin rolling out Radeon support in Windows. We’ve heard “no comment” from select renderer developers when asked about Radeon, but we can’t help but think that if NVIDIA weren’t so dominant, we’d see greater support for the red team by now.

If you are still left with any questions, you know where to post them (↓).
