AMD Radeon R9 280X Graphics Card Review

Most next-gen GPU launches are a simple affair: Launch one model, then another, and then another. AMD’s latest series is a bit different. In advance of its forthcoming flagship R9 290X, the company decided to push all of its mainstream parts off of the truck at once. So, let’s get started, first with a look at the $299 R9 280X.

Page 1 – Introduction

Can you smell that? No, not me! I’m talking about that fresh stack of AMD GPUs sitting on the desk over there *points to the right*. Sheesh.

That’s right; it’s GPU launch time again, and AMD sure had no intention of easing into things. For the first time in quite a while (if not ever; my memory is horrible), AMD isn’t releasing just one or two GPUs today, but four: Radeon R9 280X, R9 270X, R7 260X and R7 250. Today, we’re going to be taking a look at the R9 280X, with looks at the others to come soon.

You might recall that AMD held a “Tech Day” in Hawaii a couple of weeks ago. Much was discussed there, so we’re not going to rinse and repeat what I’m sure you already know. Instead, we’ll wait for some of the technologies to become viable in the real world so that we can test them and relay our experiences.

AMD’s Radeon R9 280X

AMD held its event in Hawaii because “Hawaii” is the codename for its next high-end part, which is bringing quite a bit to the table (not all of which we can talk about at this point). The R9 290X, codenamed Hawaii XT, will be AMD’s highest-end card for this new generation. The R9 290, codenamed Hawaii Pro, will sit just below it.

AMD’s current and upcoming lineup can be seen in the table below:

| AMD Radeon | Cores | Core MHz | Memory | Mem MHz | Mem Bus | TDP | Price |
| --- | --- | --- | --- | --- | --- | --- | --- |
| R9 290X | ??? | ??? | ??? | ??? | ??? | ??? | ??? |
| R9 290 | ??? | ??? | ??? | ??? | ??? | ??? | ??? |
| R9 280X | 2048 | <1000 | 3072MB | 6000 | 384-bit | 250W | $299 |
| R9 270X | 1280 | <1050 | 2048MB | 5600 | 256-bit | 180W | $199 |
| R7 260X | 896 | <1100 | 2048MB | 6500 | 128-bit | 115W | $139 |
| R7 250 | 384 | <1050 | 1024MB | 4600 | 128-bit | 65W | $??? |
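If you want to turn the memory columns into a single comparable number, peak memory bandwidth is the usual one. The sketch below is a rough calculation, assuming the “Mem MHz” column is the effective data rate per pin; the function name and the dictionary layout are my own, made up for illustration.

```python
# Rough peak memory bandwidth from the table's memory columns.
# Assumption: "Mem MHz" is the effective data rate per pin.

def memory_bandwidth_gbs(effective_mhz: int, bus_bits: int) -> float:
    """Return peak bandwidth in GB/s (1 GB = 10^9 bytes)."""
    # MHz * bits = megabits per second; / 8 -> megabytes; / 1000 -> gigabytes
    return effective_mhz * bus_bits / 8 / 1000

# (effective memory MHz, bus width in bits), copied from the table
CARDS = {
    "R9 280X": (6000, 384),
    "R9 270X": (5600, 256),
    "R7 260X": (6500, 128),
    "R7 250": (4600, 128),
}

if __name__ == "__main__":
    for name, (mhz, bits) in CARDS.items():
        print(f"{name}: {memory_bandwidth_gbs(mhz, bits):.1f} GB/s")
```

By this estimate, the R9 280X’s wider 384-bit bus gives it a sizable lead over the R9 270X even though their memory clocks are close.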

AMD is throttling the amount of information that can be revealed about the 290 and 290X, but what I can say is that they’re going to be pretty interesting in a couple of different ways. Whether what those cards bring to the table will make NVIDIA quiver in its boots, I’m not quite sure. We’ll have to wait and see. One thing’s for sure: AMD is damned confident about its product.

While AMD sent us reference versions of its R7 260X and R9 270X to take a look at, the R9 280X we received came courtesy of PowerColor. Except for the PCB color and the back ports, the cooler on the card I received is identical to the one seen below. The particular card I have isn’t even listed on PowerColor’s site, but it runs at reference clocks. Instead of 2x DVI + HDMI + DP, my sample has 1x DVI + HDMI + 2x mini-DP.

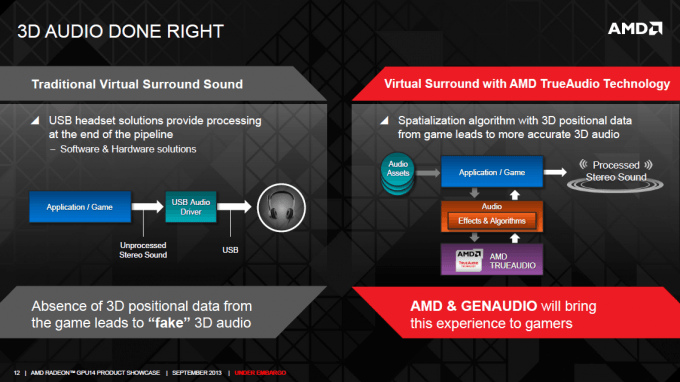

It’s not often that we hear (no pun intended) a GPU vendor talk about audio, but that was one of AMD’s major focuses at its event in Hawaii. With its DSP “TrueAudio”, the company (partnered with others) hopes to redefine our expectations of gaming audio. A common goal with audio is to allow us to pinpoint where a sound is coming from; that’s one of AMD’s goals here, but what makes it all the more interesting is that we’re meant to get that benefit even from stereo output. Color me intrigued.

I am not an audio guy – far from it – so there’s not a whole lot I can talk about yet. There’s no way for anyone outside of AMD and its partner companies to test TrueAudio at this point, so for now, it’s just a waiting game until support gets here. Here’s the takeaway, though: Game developers have long had programmable shaders to work with; picture the same sort of flexibility with audio.

While not an issue on everyone’s mind, AMD is also taking advantage of this launch to bolster its 4K capabilities. The company is pushing for a new VESA standard that allows two outputs to drive two separate streams to a single 4K display. This would allow us to get over the hurdle of being stuck at 30Hz at 4K, a typical problem at the moment (and an obvious issue for gaming). Down the road, more advanced connectors should make this kind of work-around unnecessary.
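To see why splitting the picture across two streams helps, it’s worth running the numbers. The sketch below is back-of-the-envelope only: it assumes “4K” means 3840×2160, assumes the two streams each carry one half of the screen (a left/right split is my guess, not something AMD spelled out), and counts visible pixels only, ignoring blanking intervals. The function name is made up for illustration.

```python
# Back-of-the-envelope pixel rates for a 4K panel.
# Assumptions: 4K = 3840x2160, visible pixels only (no blanking),
# and each of the two streams carries one 1920x2160 half.

def pixel_rate(width: int, height: int, refresh_hz: int) -> int:
    """Visible pixels that must be delivered per second."""
    return width * height * refresh_hz

full_30hz = pixel_rate(3840, 2160, 30)  # one stream, whole panel at 30Hz
full_60hz = pixel_rate(3840, 2160, 60)  # one stream, whole panel at 60Hz
half_60hz = pixel_rate(1920, 2160, 60)  # one of two streams at 60Hz

if __name__ == "__main__":
    print(f"Full panel @ 30Hz: {full_30hz:,} pixels/s")
    print(f"Full panel @ 60Hz: {full_60hz:,} pixels/s")
    print(f"Half panel @ 60Hz: {half_60hz:,} pixels/s")
```

Under those assumptions, each half-panel stream at 60Hz has to move exactly as many pixels per second as the whole panel does at 30Hz, so a link that tops out at 4K/30 on its own can handle one half at 60Hz.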

Also on the display front, AMD’s latest GPUs continue to support 3 monitors off the same card, or 6 when a DisplayPort extender is used.

While there is much more we could talk about, such as AMD’s game push, this has been a rather intensive article to get out, and there are still other GPUs to be benchmarked. So, that said, let’s get right into a look at our (revised) GPU testing methodology, and then tackle our first results.
