AMD HD 6950 1GB vs. NVIDIA GTX 560 Ti Overclocking

AMD and NVIDIA released $250 GPUs last week, and both proved to deliver a major punch for modest cash. After testing, we found AMD to have a slight edge in overall performance, so to see if things change when overclocking is brought into the picture, we pushed both cards hard, and then pitted the results against our usual suite.

Page 1 – Introduction

Catering to those with appetites for a ~$250 graphics card, both AMD and NVIDIA released appropriate models last week. AMD was responsible for the less interesting of the two, simply knocking its Radeon HD 6950 2GB down to 1GB, while NVIDIA released what it hopes will become a legendary offering, the GeForce GTX 560 Ti.

Although we’ve posted articles taking a look at each card in some depth, one area we haven’t tackled is overclocking, so that’s the purpose of this article. When NVIDIA first briefed us on its GTX 560 Ti, it touted the major overclocking potential that the card offered. From AMD, “overclocking” wasn’t uttered even once.

In our review of NVIDIA’s card, we had to give props to AMD for having the slightly more appealing offering, because while it costs about $20 more, it proved to be a bit faster, and boasts improved efficiency overall. But with the GTX 560 Ti’s potential for overclocking, we thought we’d pit both cards against each other in that regard, to see if our opinions would change on which is better.

So, I sat down with both cards, installed the respective overclocking tools, and then got to work. For the AMD card, I used Sapphire’s TriXX, as it offers a great deal of flexibility. For the same reason, I used MSI’s Afterburner for the NVIDIA card. Voltages were not touched during overclocking, as neither program would allow us that privilege.

For us to deem an overclock “stable”, it must first pass 30 minutes of 3DMark 11’s Extreme test, followed by real gameplay through both Dirt 2 and Metro 2033. If we can’t force the card to crash via these means, we consider it stable.

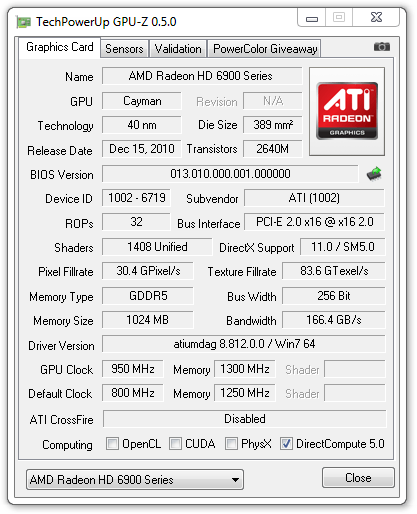

First up, AMD’s Radeon HD 6950 1GB. Like its 2GB bigger brother, the 1GB model features core clock speeds of 800MHz and memory clocks of 1250MHz.

The overclock I managed to reach with this card, 950MHz core and 1300MHz memory, impressed me quite a bit. I haven’t had the best of luck overclocking AMD’s previous HD 6000 series cards, so I didn’t expect much here, but as you can see, this is not a minor overclock… the core alone saw a boost of nearly 19%.

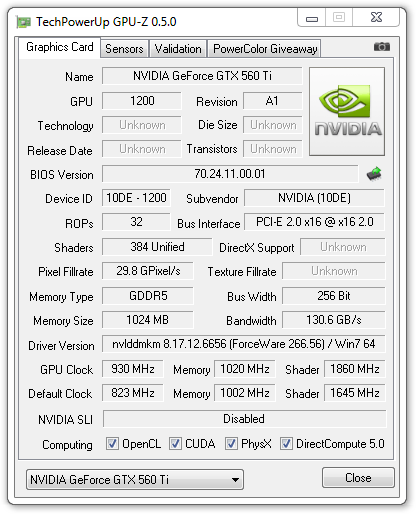

What about NVIDIA? Strangely enough, even though this was the card I had expected to reach unbelievable heights, it couldn’t. During our press briefing, the company floated the idea of overclocks as high as 970MHz, but we were unable to even get close. Instead, we had to settle on 930MHz core (from 822MHz), 1860MHz shader (from 1645MHz) and 1020MHz memory (from 1002MHz).
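To put both overclocks on the same footing, the clocks quoted above can be converted into percentage gains over stock. The snippet below is just a quick sketch of that arithmetic; the `pct_gain` helper and the `clocks` table are names made up for illustration, and the figures are the stock and overclocked values stated in this article.

```python
def pct_gain(stock_mhz, oc_mhz):
    """Return the overclock as a percentage increase over the stock clock."""
    return (oc_mhz - stock_mhz) / stock_mhz * 100.0

# (stock MHz, overclocked MHz), as quoted in the text above
clocks = {
    "HD 6950 1GB core": (800, 950),
    "HD 6950 1GB memory": (1250, 1300),
    "GTX 560 Ti core": (822, 930),
    "GTX 560 Ti shader": (1645, 1860),
    "GTX 560 Ti memory": (1002, 1020),
}

gains = {name: pct_gain(stock, oc) for name, (stock, oc) in clocks.items()}

for name, gain in gains.items():
    print(f"{name}: +{gain:.1f}%")
```

By this math, the HD 6950 1GB gains 18.75% on the core and 4% on the memory, while the GTX 560 Ti gains roughly 13% on both core and shader, and just under 2% on the memory.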

Overall, I’m not super-pleased with this overclock, since I was expecting much better. Even with a core clock of 940MHz (just 10MHz above our “stable” setting), Dirt 2 would artifact rather starkly during a single race, and the game crashed on me twice.

In looking around the Web, I’ve seen others achieve higher overclocks than this, some as high as 1,000MHz, which leads me to believe that most people should be able to get a clock much higher than 930MHz. Unfortunately, I only had one GPU sample to test, so I’m hoping to get more in the near future to see if I can reach anything higher.

As much as I wish we could have pushed the clocks a bit higher, we couldn’t, so what you see above is what we had to stick with. With that said, let’s take a look at the actual performance gains from each card throughout our usual suite.
