Core i7-5960X Extreme Edition Review: Intel’s Overdue Desktop 8-Core Is Here

In late 2011, I wagered that Intel would follow up its i7-3960X with an eight-core model within the year. That didn’t happen. Instead, we have had to wait nearly three years since that release to finally see an eight-core Intel desktop chip become a reality. Now for the big question: Was the company’s Core i7-5960X worth the wait?

Page 1 – Introduction

It was a glorious thing when Intel released its Gulftown-based Core i7-980X in early 2010. It was the first desktop CPU out of the company that featured six cores, and it felt like a relative boon to those, like me, who run virtual machines, encode video, compile software, and more. In the end, I was left seriously impressed with that chip.

At that time, I naively believed that an eight-core chip was in our near future. In fact, I outright expected Sandy Bridge-E, which released in late 2011, to have an eight-core model. It didn’t. I didn’t let anything dampen my spirits though, as at the end of my look at the Core i7-3960X, I said something to the effect of, “I can’t imagine that eight-core models will not be released within the next six months or so.”

I’m not sure what put that into my head. A staggering 1,020 days have passed since the 3960X’s announcement, and we finally have an eight-core chip at our disposal. Admittedly, I wasn’t exactly enthralled by what the Core i7-4960X brought to the table, but with eight cores, I think the i7-5960X has a great chance of earning a different conclusion.

Welcome to Haswell-E:

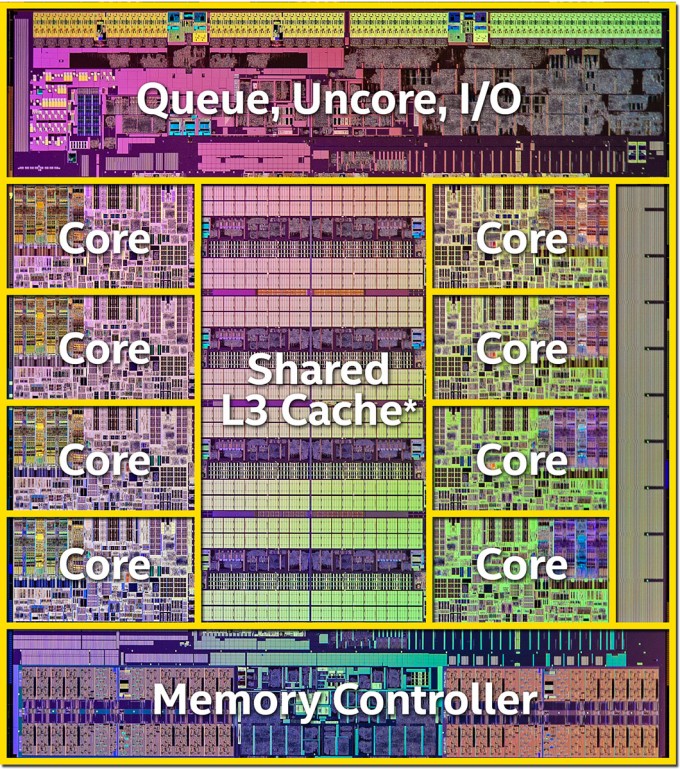

That’s a chip that means business. All Haswell-E processors are built on a 22nm process, but the eight-core of course tops the transistor count charts, with 2.6 billion – 800 million more than the Core i7-4960X. As expected, these transistors are built using Intel’s much-touted Tri-Gate 3D design.

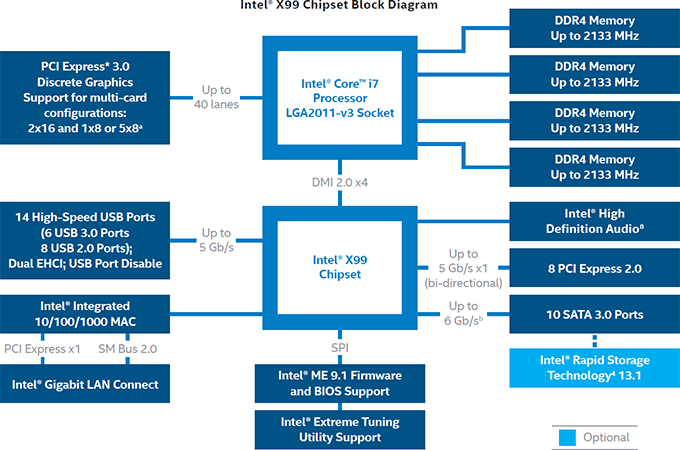

Like Sandy Bridge-E and Ivy Bridge-E, Haswell-E has 2011 contact points on its belly, but its processors will only support the latest socket revision, called LGA2011-3. At the moment, those are only going to be found on motherboards featuring Intel’s X99 chipset.

As far as the functional layout goes, nothing has really changed between Ivy Bridge-E and Haswell-E, and that’s because the design isn’t broken. All of the cores still sandwich the cache, and other key functions are found at the top and bottom.

The X99 chipset is the first desktop offering from Intel (or AMD, for that matter) to support DDR4 memory, and somewhat humorously, it officially supports very modest 2133MHz speeds. Chances are good that if you buy into this platform, you’ll wind up with memory faster – or perhaps much faster – than this.

One important thing to note about this block diagram is the mention of “up to” 40 PCIe lanes. Last-gen, that didn’t matter too much, but this gen, it does. Intel’s decided to separate its smallest LGA2011-3 chip, the i7-5820K, further from the others by limiting its PCIe lanes to 28. Both the i7-5960X and i7-5930K have the expected 40, so for those who are building the highest-end gaming PCs possible, the i7-5820K may not be an ideal choice.
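To put the lane difference in concrete terms, here is a minimal Python sketch of a lane-budget check. The CPU lane counts come from the paragraph above; the slot layouts are hypothetical examples, and the `fits` helper is made up for illustration. How a given X99 board actually wires its slots is up to the motherboard vendor, so treat this as back-of-the-envelope arithmetic only.

```python
# Rough PCIe lane-budget check.
# Lane counts are taken from the article; the slot layouts below are
# hypothetical examples, not the wiring of any particular motherboard.
CPU_LANES = {"i7-5960X": 40, "i7-5930K": 40, "i7-5820K": 28}


def fits(cpu: str, slot_widths: list[int]) -> bool:
    """Return True if the requested slot widths fit in the CPU's lane count."""
    return sum(slot_widths) <= CPU_LANES[cpu]


if __name__ == "__main__":
    layouts = ([16, 16], [16, 8], [16, 16, 8])
    for cpu in CPU_LANES:
        for layout in layouts:
            verdict = "fits" if fits(cpu, layout) else "does not fit"
            print(f"{cpu}: x{'/x'.join(map(str, layout))} {verdict}")
```

Under these assumptions, two cards at a full x16 each need 32 lanes, which is more than the i7-5820K's 28, while both 40-lane chips have room to spare.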

Intel’s sticking to the “3 model” scheme it started with Sandy Bridge-E for this launch, with the bottom CPU set to sell for $389 in quantities of 1,000. That should result in prices at e-tail of about $420 or so. The middle model bumps the price to $583, which nets you slightly faster stock speeds, as well as the full 40 PCIe lanes. The big gun is the i7-5960X, Intel’s debut eight-core. As is tradition for Intel’s top-end parts, this chip is priced at $999.

| Model | Cores | Threads | Clock | Turbo | Cache | PCIe Lanes | TDP | 1,000-Unit Price |
|---|---|---|---|---|---|---|---|---|
| Core i7-5960X | 8 | 16 | 3.0GHz | 3.5GHz | 20MB | 40 | 140W | $999 |
| Core i7-5930K | 6 | 12 | 3.5GHz | 3.7GHz | 15MB | 40 | 140W | $583 |
| Core i7-5820K | 6 | 12 | 3.3GHz | 3.6GHz | 15MB | 28 | 140W | $389 |
| Core i7-4790K | 4 | 8 | 4.0GHz | 4.4GHz | 8MB | 16 | 88W | $339 |
| Core i5-4690K | 4 | 4 | 3.5GHz | 3.9GHz | 6MB | 16 | 88W | $242 |

All 4th-gen Core processors are built on a 22nm process, utilizing 3D tri-gate transistors.

Intel’s Core i7-5820K, despite its fewer PCIe lanes, is quite an attractive chip compared to the i7-4790K. It has a much lower clock speed, but it has 50% more cores, and far more L3 cache. For the most part, it’s similar to last-gen’s i7-4930K. That chip was clocked 100MHz higher at stock, and 300MHz higher at Turbo, but had 3MB less L3 cache and cost about $200 more.
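For a rough value comparison, the core counts and 1,000-unit prices from the table can be turned into a price per core and per thread. The Python sketch below does only that; the `price_per_core` and `price_per_thread` helpers are made up for illustration, and the metric deliberately ignores clock speed, cache, and platform cost, so it is a crude yardstick rather than a verdict.

```python
# Specs copied from the table above: (cores, threads, 1,000-unit price in USD).
CHIPS = {
    "Core i7-5960X": (8, 16, 999),
    "Core i7-5930K": (6, 12, 583),
    "Core i7-5820K": (6, 12, 389),
    "Core i7-4790K": (4, 8, 339),
    "Core i5-4690K": (4, 4, 242),
}


def price_per_core(model: str) -> float:
    """1,000-unit price divided by physical core count."""
    cores, _threads, price = CHIPS[model]
    return price / cores


def price_per_thread(model: str) -> float:
    """1,000-unit price divided by hardware thread count."""
    _cores, threads, price = CHIPS[model]
    return price / threads


if __name__ == "__main__":
    for model in CHIPS:
        print(f"{model}: ${price_per_core(model):.2f}/core, "
              f"${price_per_thread(model):.2f}/thread")
```

By this measure alone, the i7-5820K costs less per core than the i7-4790K, while the i7-5960X carries the steepest per-core price of the five.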

As for the other two models, there’s quite a bit to talk about. For starters, the i7-5960X has a glaring flaw: Its stock speed is 3.0GHz. I don’t recall the last time any Intel enthusiast chip dipped that low, and it’s hard to see that spec next to a $999 price tag. But on account of the fact that the chip has eight cores – something none of the other chips do, and something no AMD chip is going to hold a candle to – that drop in clock can be forgiven. Still, it doesn’t look right.

I haven’t put even two seconds into overclocking the i7-5960X as of the time of writing, but after talking to a couple of different vendors, I’ve come to the conclusion that a 4.5GHz overclock is going to be somewhat of a pipe dream. 4.0GHz, however, should be no problem at all to hit, especially if you don’t mind giving the chip a bit of extra voltage.

Even if you were to boost the chip to a static 3.7GHz or 3.8GHz across all of the cores, that dramatically enhances the appeal of the chip, and negates the fact that the i7-5930K is clocked a bit higher at stock.
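One crude way to picture this is to multiply core count by clock speed. The Python sketch below assumes perfect multi-threaded scaling, ignores Turbo entirely, and treats every core-GHz as equal, none of which holds in real workloads, so the figures are a theoretical ceiling and not a benchmark result. The `core_ghz` helper is made up for illustration.

```python
def core_ghz(cores: int, clock_ghz: float) -> float:
    """Aggregate 'core-GHz': cores times clock, assuming perfect scaling."""
    return cores * clock_ghz


if __name__ == "__main__":
    # Stock clocks are from the spec table; 3.7GHz is the all-core
    # overclock discussed above, not a measured result.
    scenarios = {
        "i7-5930K @ 3.5GHz (stock)": core_ghz(6, 3.5),
        "i7-5960X @ 3.0GHz (stock)": core_ghz(8, 3.0),
        "i7-5960X @ 3.7GHz (all-core OC)": core_ghz(8, 3.7),
    }
    for label, total in scenarios.items():
        print(f"{label}: {total:.1f} core-GHz")
```

At a static 3.7GHz, each of the eight cores is also running faster than the i7-5930K's 3.5GHz stock clock, which is the sense in which the overclock cancels out the six-core's clock advantage.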

Above is a shot of our i7-5960X sample cuddling with ASUS’ X99-DELUXE motherboard. This board is the first X99 offering I’ve touched, and so far, I’m beyond impressed with it. As you can probably imagine, this board will be the focus of my first X99 review, so stay tuned for that – there’s a lot to talk about.

Before moving on, there’s something interesting in that shot above: If you look closely at the CPU, you’ll see it say “USA”. I couldn’t help but ask Intel about this, since all of the CPUs I’ve received from the company have largely come from Costa Rica or Malaysia, and I was told that the USA label will not be carrying over to the retail products. So now that I’ve wasted your time with this information, let’s talk about DDR4.

Corsair was kind enough to send along a kit of its Vengeance LPX for our testing. This 16GB kit is clocked at DDR4-2800 speeds, although as I quickly found out, that speed is a little hard to hit at this point in time, and even after talking to ASUS and Corsair, I’m not entirely sure why. At 2800MHz speeds, my bandwidth score in Sandra was actually less than it was with the sticks clocked at 2400 or 2666. It could be that I have a bunk memory controller in my chip, or ASUS’ EFI needs some further tweaking. What I do know for sure is that there are some definite launch quirks here.

Desktop DDR4 at this point is bleeding-edge, and its pricing reflects it. I tackled this a couple of times recently in our news section, so I’m going to borrow a table from a post I wrote earlier this week to help illustrate things here.

| Configuration | DDR3 | DDR4 | Premium |
|---|---|---|---|
| 2133MHz 2x8GB | $163 (G.SKILL, 1.5V, CL 11) | $220 (Crucial, 1.2V, CL 15) | +35% |
| 2133MHz 4x8GB | $315 (G.SKILL, 1.65V, CL 10) | $440 (Crucial, 1.2V, CL 15) | +40% |
| 2400MHz 2x8GB | $153 (ADATA, 1.65V, CL 11) | $230 (Crucial, 1.2V, CL 16) | +50% |
| 2400MHz 4x8GB | $320 (Mushkin, 1.65V, CL 11) | $460 (Crucial, 1.2V, CL 15) | +44% |

CL = CAS Latency. All DDR3 kits were the least-expensive non-sale options on Newegg as of the time of this post.
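The premium column is simply the DDR4 price relative to the DDR3 price, rounded to the nearest percent. As a quick check, this Python sketch recomputes it from the prices in the table; the `premium_pct` helper name is made up for illustration.

```python
# (DDR3 price, DDR4 price) in USD, copied from the table above.
PRICES = {
    "2133MHz 2x8GB": (163, 220),
    "2133MHz 4x8GB": (315, 440),
    "2400MHz 2x8GB": (153, 230),
    "2400MHz 4x8GB": (320, 460),
}


def premium_pct(ddr3: float, ddr4: float) -> int:
    """Percentage by which the DDR4 kit costs more than the DDR3 kit."""
    return round((ddr4 / ddr3 - 1) * 100)


if __name__ == "__main__":
    for kit, (ddr3, ddr4) in PRICES.items():
        print(f"{kit}: +{premium_pct(ddr3, ddr4)}%")
```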

If you’ll be building an X99-based PC, you’re quite obviously going to be shelling out a fair bit of money to get up and running. I think I’m safe to assume that 16GB is going to be the amount of memory most people will go for, and at the moment, that will set you back just over $200 for a modest kit. Couple that with a $300 motherboard and at least the $400 i7-5820K, and that becomes a $900 base to your new PC.

Performance Expectations

Hey, Intel! I think I see an AMD engineer snooping around outside!

Alright, with Intel not looking, I have this to say: You probably don’t need an eight-core Intel processor. It could be that such a thing doesn’t even need to be said, but I just feel better by saying it anyway.

The fact of the matter is, even six cores is overkill for most people. It takes specific scenarios to take proper advantage of the resources that such a chip provides, and at the moment, that doesn’t include gaming. Intel does make mention of 3DMark’s Physics test benefiting from the extra cores, but that’s not exactly representative of actual gameplay.

The people who’d benefit most from such a big CPU are those who encode lots of video, render a lot of 3D projects, compile large applications, run multiple virtual machines, and in general, do some pretty hardcore stuff with their PC.

Because the i7-5960X is clocked at a mere 3.0GHz, the i7-5930K stands out. It might lose two cores, but it’s 500MHz faster at stock, and of course, more than $400 cheaper than the eight-core.

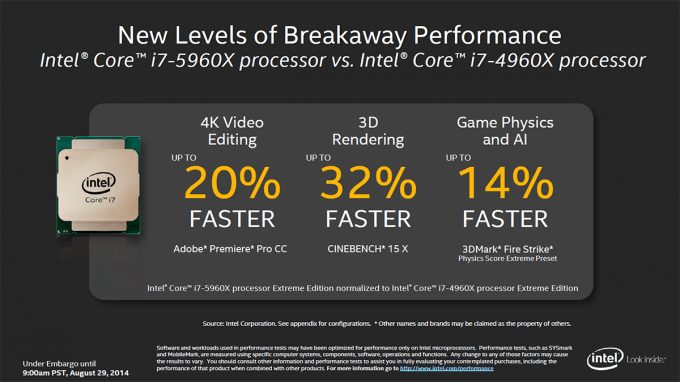

That all being said, let’s tackle a couple of quick numbers. Intel claims that its eight-core Haswell-E will prove up to 32% faster in 3D rendering (based on Cinebench scores), and up to 20% faster in 4K video editing (based on Adobe Premiere Pro CC). Further, in its test of converting a 4K source video to 1080p using HandBrake, the i7-5960X proved 69% faster than the quad-core i7-4790K, and up to 34% faster than the i7-4960X.

With that all taken care of, we can now move on to a look at our own performance tests, to see what the i7-5960X is truly made of. For the sake of this review, I’m going to be comparing the i7-5960X directly to the i7-4960X. I would have loved to have been able to include i7-4770K results in this review, but due to time constraints, I was unable to re-test the chip using our updated test suite. Fortunately, the real comparison involves the chips that are included, so let’s get a move on and get to comparing – well, after a quick look at our testing system and methodologies.
