NVIDIA GeForce RTX 3080 Performance In Blender, Octane, V-Ray, & More

NVIDIA’s first Ampere GeForce has landed, and it’s proving itself to be quite a beast. For our first look at the performance of NVIDIA’s new RTX 3080, we’re tackling rendering with the help of Octane, Blender, V-Ray, KeyShot, Arnold, and Redshift, comparing it against the previous generation of RTX cards, and also the $699 GPU from two generations ago: the GTX 1080 Ti.

Don’t miss our newer results featuring RTX 3090!

The GeForce RTX 3080 has arrived, and for creators, NVIDIA’s latest offers a lot to love. Whereas the Turing generation introduced ray tracing and deep-learning cores, the Ampere architecture supercharges each of those. Now, if you think back to some of the jaw-dropping performance we saw with RTX-enabled design software, the thought of an (up to) doubling of performance is pretty exciting.

We’re not afraid to say up front that the RTX 3080 is one hell of a graphics card – either for creation or gaming. We’re starting our launch coverage with more of a creative twist, looking specifically at CUDA and OptiX-specific renderers, in addition to Blender 2.90, which will have results enhanced with competitor cards.

Priced at $699, the RTX 3080 follows in the footsteps of the 2080 SUPER, but targets the 2080 Ti in performance. Even the TITAN RTX is about to be taught a harsh lesson. For creators, the biggest limitation of the RTX 3080 is going to be the 10GB frame buffer, although for most users, that will still be sufficient for a while, something helped by the fact that Ampere’s GDDR6X memory architecture is really efficient.

For top-end users, the RTX 3090 is likely to be a no-brainer. In a single generation, NVIDIA took a card like the TITAN RTX, vastly improved its performance, kept the 24GB frame buffer, and dropped the price by $1,000. We’re not sure if there will be a TITAN for this Ampere generation, but a 40GB option priced at $2,500~$2,999 released at some point seems plausible (to us).

Here’s a breakdown of NVIDIA’s current lineup:

NVIDIA’s GeForce Gaming GPU Lineup

| GPU | Cores | Base MHz | Peak FP32 | Memory | Bandwidth | TDP | SRP |
| --- | --- | --- | --- | --- | --- | --- | --- |
| RTX 3090 | 10,496 | 1,400 | 35.6 TFLOPS | 24GB ¹ | 936 GB/s | 350W | $1,499 |
| RTX 3080 | 8,704 | 1,440 | 29.7 TFLOPS | 10GB ¹ | 760 GB/s | 320W | $699 |
| RTX 3070 | 5,888 | 1,500 | 20.4 TFLOPS | 8GB ² | 512 GB/s | 220W | $499 |
| TITAN RTX | 4,608 | 1,770 | 16.3 TFLOPS | 24GB ² | 672 GB/s | 280W | $2,499 |
| RTX 2080 Ti | 4,352 | 1,350 | 13.4 TFLOPS | 11GB ² | 616 GB/s | 250W | $1,199 |
| RTX 2080 S | 3,072 | 1,650 | 11.1 TFLOPS | 8GB ² | 496 GB/s | 250W | $699 |
| RTX 2070 S | 2,560 | 1,605 | 9.1 TFLOPS | 8GB ² | 448 GB/s | 215W | $499 |
| RTX 2060 S | 2,176 | 1,470 | 7.2 TFLOPS | 8GB ² | 448 GB/s | 175W | $399 |
| RTX 2060 | 1,920 | 1,365 | 6.4 TFLOPS | 6GB ² | 336 GB/s | 160W | $299 |
| GTX 1660 Ti | 1,536 | 1,500 | 5.5 TFLOPS | 6GB ² | 288 GB/s | 120W | $279 |
| GTX 1660 S | 1,408 | 1,530 | 5.0 TFLOPS | 6GB ² | 336 GB/s | 125W | $229 |
| GTX 1660 | 1,408 | 1,530 | 5 TFLOPS | 6GB ⁴ | 192 GB/s | 120W | $219 |
| GTX 1650 S | 1,280 | 1,530 | 4.4 TFLOPS | 4GB ² | 192 GB/s | 100W | $159 |
| GTX 1650 | 896 | 1,485 | 3 TFLOPS | 4GB ⁴ | 128 GB/s | 75W | $149 |
| GTX 1080 Ti | 3,584 | 1,480 | 11.3 TFLOPS | 11GB ³ | 484 GB/s | 250W | $699 |

Notes: ¹ GDDR6X; ² GDDR6; ³ GDDR5X; ⁴ GDDR5; ⁵ HBM2. GTX 1080 Ti = Pascal; GTX/RTX 2000 = Turing; RTX 3000 = Ampere.
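To put the lineup table above in perspective, here is a minimal sketch that derives theoretical throughput per dollar and per watt from the table’s own Peak FP32, TDP, and SRP columns. The function names are ours, and peak FP32 is only a paper figure, so treat the output as a rough guide rather than a prediction of render performance.

```python
# Figures copied from the lineup table: (peak FP32 TFLOPS, TDP in watts, SRP in USD).
LINEUP = {
    "RTX 3090": (35.6, 350, 1499),
    "RTX 3080": (29.7, 320, 699),
    "RTX 3070": (20.4, 220, 499),
    "TITAN RTX": (16.3, 280, 2499),
    "RTX 2080 Ti": (13.4, 250, 1199),
    "RTX 2080 S": (11.1, 250, 699),
    "GTX 1080 Ti": (11.3, 250, 699),
}


def gflops_per_dollar(name):
    """Theoretical peak FP32 GFLOPS per dollar of SRP (illustrative helper)."""
    tflops, _tdp, price = LINEUP[name]
    return tflops * 1000 / price


def gflops_per_watt(name):
    """Theoretical peak FP32 GFLOPS per watt of TDP (illustrative helper)."""
    tflops, tdp, _price = LINEUP[name]
    return tflops * 1000 / tdp


if __name__ == "__main__":
    for name in LINEUP:
        print(f"{name:12s} {gflops_per_dollar(name):6.1f} GFLOPS/$ "
              f"{gflops_per_watt(name):6.1f} GFLOPS/W")
```

On paper, the RTX 3080 offers well over twice the FP32 throughput per dollar of the identically priced 2080 SUPER, which is the comparison that matters most for the rest of this article.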

While the RTX 3080 is going to prove itself to be a lot faster than the 2080 Ti, or even TITAN RTX, its TDP has been boosted, so you can expect a higher power drain from the wall. Something tells us that the performance gain will make that a non-issue.

The Founders Edition of the RTX 3080 can be seen in the photos above. The card features a new 12-pin power connector that will require an adapter to be used for any current PC. As time goes on, more power supply vendors are expected to adopt this connector, though that’s something that may happen quicker if AMD decides to also adopt it.

On this particular card, the positioning of the power connector on the GPU seems a bit strange, since the fact that it’s front-facing means that you won’t be able to avoid having a cable hanging in front of the card. The card PCB underneath is much shorter than the shroud, so NVIDIA couldn’t have moved it to the end like on most designs.

To learn more about what the RTX 3000 series brings to the table, you should refer to our announcement article. As mentioned above, this article is going to focus exclusively on rendering with CUDA or OptiX-infused renderers, but we will have plenty more performance coming for gaming, including RTX-specific testing.

An important note on general creator performance: We quickly tested things like SPECviewperf, Adobe Premiere Pro, MAGIX Vegas Pro, and so on, but didn’t find the performance to be so notably different on the RTX 3080 (vs. 2080 Ti) that it warranted full retesting of the stack. We’ll run those other benchmarks in the meantime for a fuller look down the road. For video encoding, don’t expect any significant gains right now unless you heavily use GPU-accelerated filters.

| Techgage Workstation Test System | |
| --- | --- |
| Processor | Intel Core i9-10980XE (18-core; 3.0GHz) |
| Motherboard | ASUS ROG STRIX X299-E GAMING |
| Memory | G.SKILL FlareX (F4-3200C14-8GFX) 4x8GB; DDR4-3200 14-14-14 |
| Graphics | NVIDIA RTX 3080 (10GB, GeForce 456.16); NVIDIA TITAN RTX (24GB, GeForce 452.06); NVIDIA GeForce RTX 2080 Ti (11GB, GeForce 452.06); NVIDIA GeForce RTX 2080 SUPER (8GB, GeForce 452.06); NVIDIA GeForce RTX 2070 SUPER (8GB, GeForce 452.06); NVIDIA GeForce RTX 2060 SUPER (8GB, GeForce 452.06); NVIDIA GeForce RTX 2060 (6GB, GeForce 452.06); NVIDIA GeForce GTX 1080 Ti (11GB, GeForce 452.06) |
| Audio | Onboard |
| Storage | Kingston KC1000 960GB M.2 SSD |
| Power Supply | Corsair 80 Plus Gold AX1200 |
| Chassis | Corsair Carbide 600C Inverted Full-Tower |
| Cooling | NZXT Kraken X62 AIO Liquid Cooler |
| Et cetera | Windows 10 Pro build 19041.329 (2004) |


OTOY OctaneRender 2020

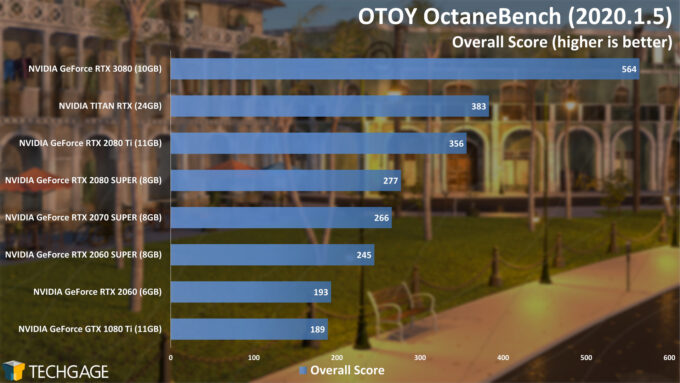

OTOY released the latest version of its OctaneBench benchmark a couple of days ago, and like OctaneRender itself, it supports NVIDIA’s latest graphics series. In fact, if you follow OTOY on Twitter, you’ve probably noticed that the company has been really excited for this release. And looking at these results, it’s not hard to see why.

When we wrote our RTX 3080 launch article, we mentioned that it was a “2080 Ti Killer for $700”, but the truth is, this new GPU even has the TITAN RTX in its sights. Versus that, the RTX 3080 is 47% faster, but in comparison to the previous-gen $699 2080 SUPER, it’s 103% faster. It’s 3x faster than the 1080 Ti, also released at $699 a few years ago.
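For anyone who prefers multipliers to percentages, the quick sketch below converts the OctaneBench figures quoted above. It assumes “X% faster” means a score ratio of 1 + X/100 on a higher-is-better benchmark, and the helper name is ours. Dividing one multiplier by the other also gives the implied gap between the TITAN RTX and the 2080 SUPER.

```python
def multiplier(percent_faster):
    """Convert 'X% faster' into a ratio, assuming ratio = 1 + X/100."""
    return 1 + percent_faster / 100


# Percentages quoted in the text for the RTX 3080 in OctaneBench.
rtx3080_vs_titan = multiplier(47)    # vs. TITAN RTX
rtx3080_vs_2080s = multiplier(103)   # vs. 2080 SUPER

# Implied TITAN RTX lead over the 2080 SUPER from those two figures.
titan_vs_2080s = rtx3080_vs_2080s / rtx3080_vs_titan

if __name__ == "__main__":
    print(f"RTX 3080 vs TITAN RTX:    {rtx3080_vs_titan:.2f}x")
    print(f"RTX 3080 vs 2080 SUPER:   {rtx3080_vs_2080s:.2f}x")
    print(f"TITAN RTX vs 2080 SUPER:  {titan_vs_2080s:.2f}x")
```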

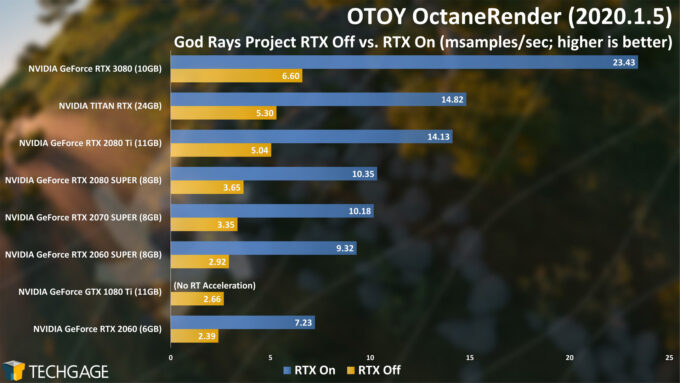

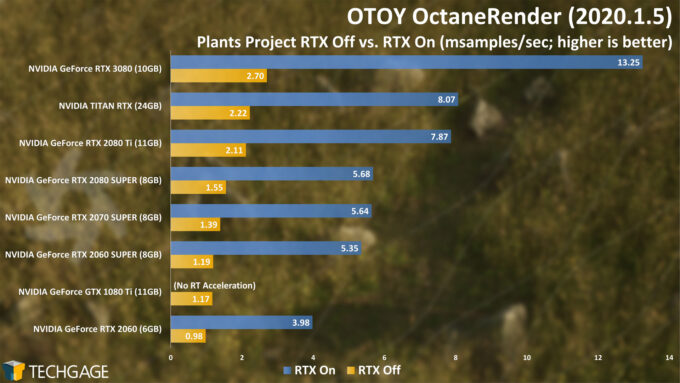

With a couple of projects in hand, we also tested OctaneRender itself, which now has an RTX benchmarking option built-in:

These OctaneRender results paint an even better picture of the RTX 3080 vs. any other card in the lineup. With God Rays, it’s shown to be 58% faster than TITAN RTX, and more than twice as fast as the 2080 SUPER. You could say things are going well so far, so let’s move onto Blender:

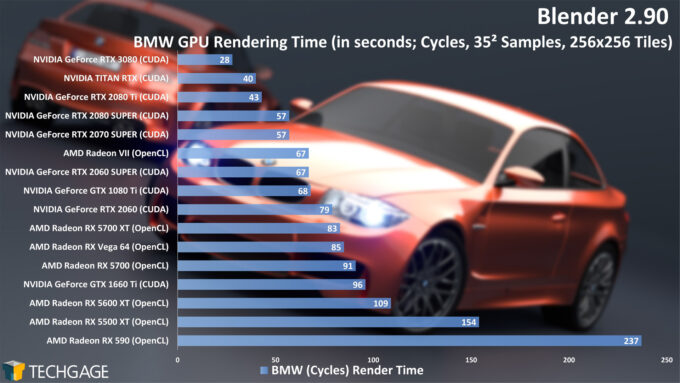

Blender 2.90

While we expected huge performance gains with rendering in particular, we were immediately blown away when we saw the result above in Blender’s ubiquitous BMW test. The TITAN RTX just brushed 40 seconds before, whereas the RTX 3080 comfortably breaks the 30-second mark, and without OptiX. With this first project, NVIDIA managed to cut the render time in half between last-gen’s 2080 SUPER and the new RTX 3080.

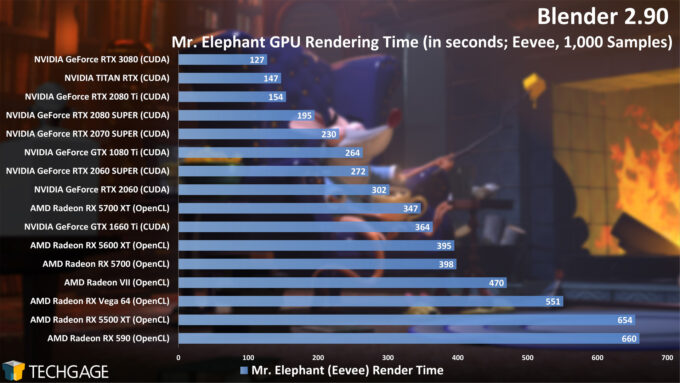

Cycles rendering absolutely loves OptiX, but Eevee isn’t paying any attention to it right now, so those gains are not quite as impressive:

Even without RTX acceleration, 14% faster for the RTX 3080 over the TITAN RTX is nothing to scoff at, especially when the TITAN RTX isn’t even the card the RTX 3080 is targeting. Compared to the 2080 SUPER, the RTX 3080 is 35% faster in Eevee. While we’re testing a single still here, this performance scales directly to animation renders with smaller iteration counts.

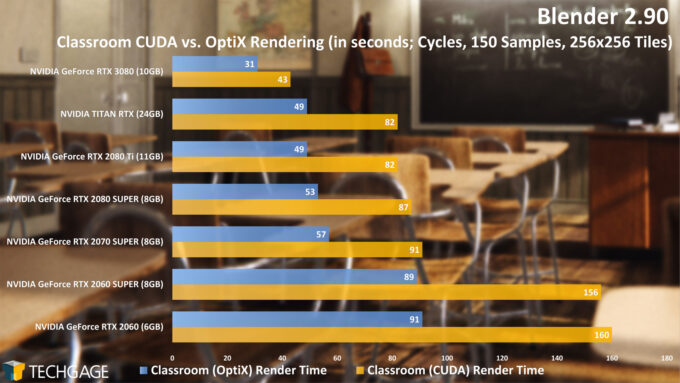

Back to Cycles, where we introduce NVIDIA’s OptiX ray tracing acceleration to the mix:

What can be said that isn’t obvious? With CUDA itself, the gains are huge gen-over-gen, and then OptiX improves that further. It’s actually incredible in a way how the $700 RTX 3080 stomps the previous-gen $2,499 TITAN RTX.

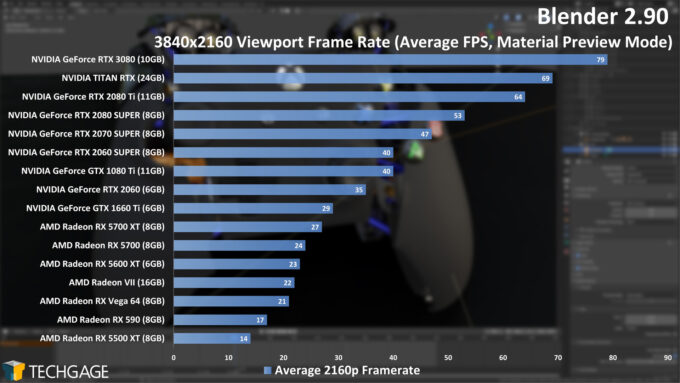

With our viewport test, we’re seeing another healthy gain with the RTX 3080 over the TITAN RTX, or in the most reasonable comparison, a nearly 50% gain over the previous-gen $699 2080 SUPER. We’re going to be posting this and more performance in a dedicated Blender 2.90 article soon, but what we’ll see there is that 1440p and 1080p will require huge models/scenes to show an improvement on top-end GPUs. We have six GPUs in our 1080p test that all perform the same thanks to a CPU bottleneck.

Chaos Group V-Ray 5

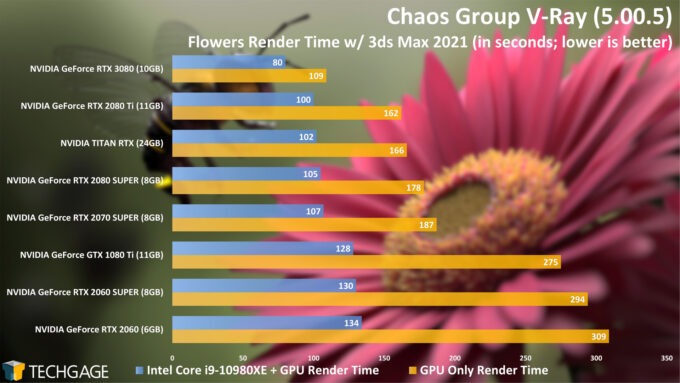

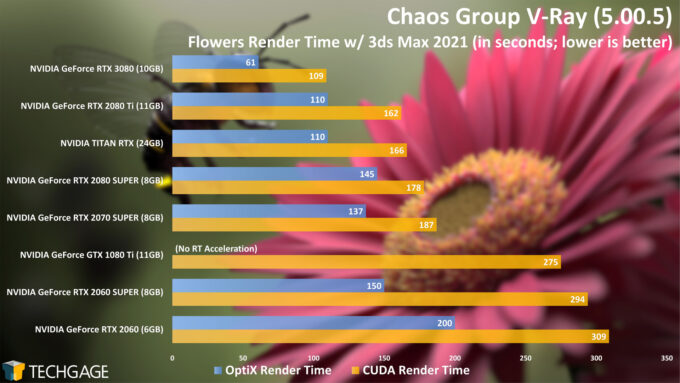

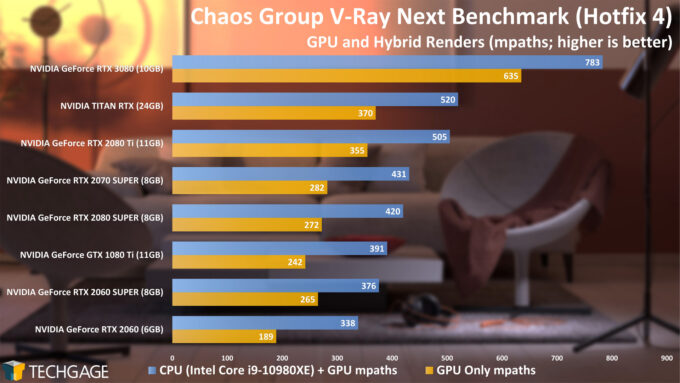

We’ll start our look at the V-Ray results with a GPU and CPU+GPU comparison. As you’d expect (or at least hope for), adding the CPU to a render can cut the final render time quite a bit, but that assumes you’re using a processor that can offer enough cores to be useful (our test PC has 18, although that’s far from necessary for a good workstation).

Looking at CUDA only, we see the RTX 3080 performing significantly faster than the TITAN RTX. Among the last-gen cards, the improvement in render times tapered off as we moved higher up the stack, but the RTX 3080 effectively sets a new bar. It’s going to be really interesting to see what some of the more budget-oriented Ampere cards will look like.

Like many of the other renderers here, V-Ray offers an OptiX option, so we also tested that out:

With these results, we’re seeing more impressive gains on the RTX 3080, with it proving to be 45% faster than the TITAN RTX with OptiX, and 58% faster than 2080 SUPER. The odd OptiX results between the 2070S and 2080S held true after retesting. Both of those GPUs have given us strange results in other tests, and we just have to chalk them up to being the oddities that they are.

Another interesting angle to look at these results from is the 1080 Ti using CUDA versus the RTX 3080 using OptiX. While CUDA alone proves 60% faster than the 1080 Ti, OptiX bolsters that gain further. Interestingly, thanks to the beefier CPU in our test PC, only the RTX 3080 delivers a better OptiX result than it does in the heterogeneous test.

With what we’re sure will be an outgoing benchmark (assuming Chaos Group is going to release a newer version), we see the RTX 3080 continuing to dominate.

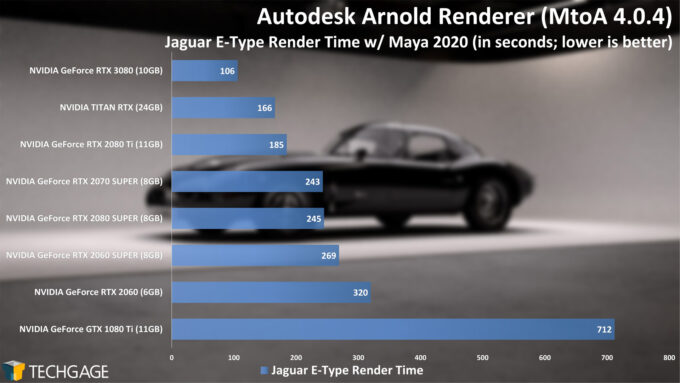

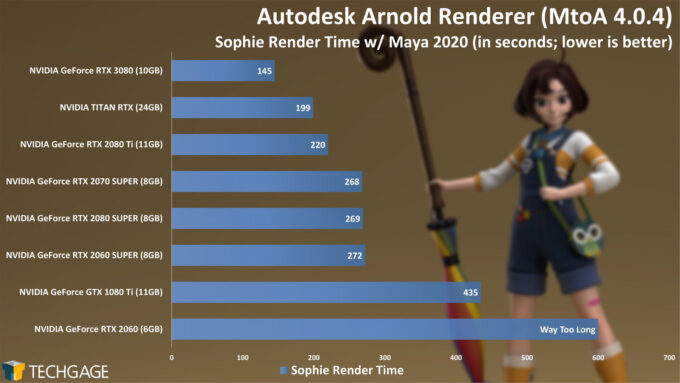

Autodesk Arnold 6

Autodesk’s Arnold uses OptiX by default, which means any GPU that has RT cores will automatically have them exercised, leading to some great performance out of the top of the stack. Like a few others in this article, these charts really highlight the improvements seen since the 2080 Ti. In the E-Type render, the RTX 3080 was much quicker than TITAN RTX, and dramatically faster than the 2080 SUPER.

Not that it’s relevant to this review, per se, but 6GB GPUs don’t seem to fare too well in Arnold, as the RTX 2060 and the Sophie test can attest. The 6GB GeForce GTX 1660 Ti gives us the same issue (this is what’s referred to as an out-of-core problem, where the GPU’s frame buffer is too small for the scene and it cannot access system memory to compensate, resulting in a very slow or failed render). As for the 1080 Ti, it’s looking long in the tooth, with the RTX 3080 proving 77% faster in Sophie, and an even more impressive 85% faster in the E-Type render. Or, in other math, the 3080 took just 15% of the time the 1080 Ti needed to render E-Type, and the 1080 Ti was a drool-worthy GPU not too long ago.
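The percentages in the paragraph above read as reductions in render time (85% faster lining up with 15% of the time), so the sketch below spells out that conversion. That reading is our assumption, and the helper names are ours; no measured render times are used, only the two percentages quoted against the 1080 Ti.

```python
def time_fraction(percent_less_time):
    """Fraction of the old render time that remains after an X% reduction."""
    return 1 - percent_less_time / 100


def speedup(percent_less_time):
    """Equivalent throughput multiplier for an X% reduction in render time."""
    return 1 / time_fraction(percent_less_time)


if __name__ == "__main__":
    # Percentages quoted for the RTX 3080 vs. the GTX 1080 Ti in Arnold.
    for scene, pct in (("Sophie", 77), ("E-Type", 85)):
        print(f"{scene}: {time_fraction(pct):.0%} of the time, "
              f"{speedup(pct):.2f}x the throughput")
```

Note how quickly the multiplier grows near the top of the scale: the step from 77% to 85% less time moves the equivalent speedup from roughly 4.3x to roughly 6.7x.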

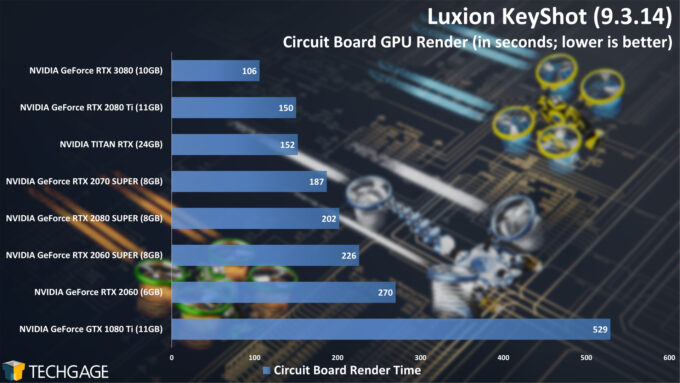

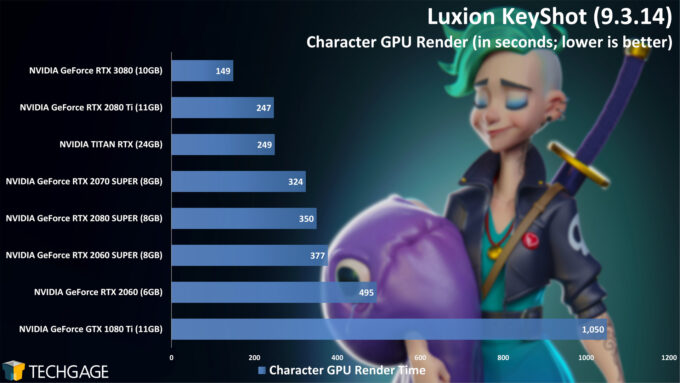

Luxion KeyShot 9

It’s beginning to look like every CUDA/OptiX renderer will see a huge gain on Ampere, isn’t it? KeyShot continues the pattern of the first test earlier, with the RTX 3080 proving 40~50% faster than the 2080S. For an even more impressive match-up: it’s 80% faster than the older Pascal-based 1080 Ti in the Circuit Board render, and 86% faster with the Character render.

The gains seen at the top-end are also really impressive, with the RTX 3080 cutting the Character render to three-fifths of the total time vs. the 2080 Ti. That 2080 Ti cost $1,199 when it launched in 2018, so the RTX 3080 continues to look seriously strong here.

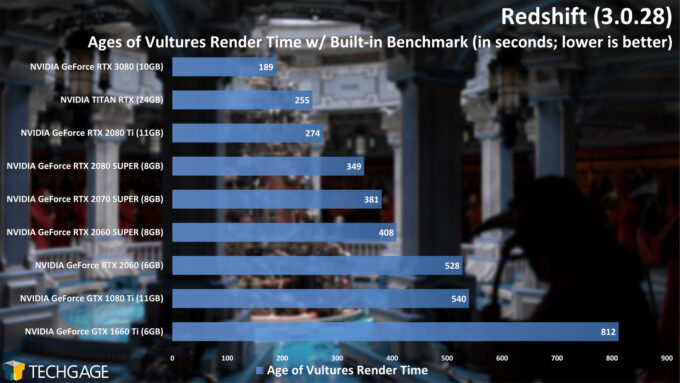

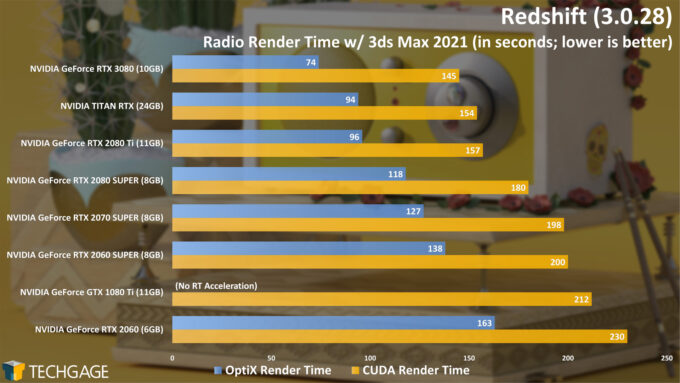

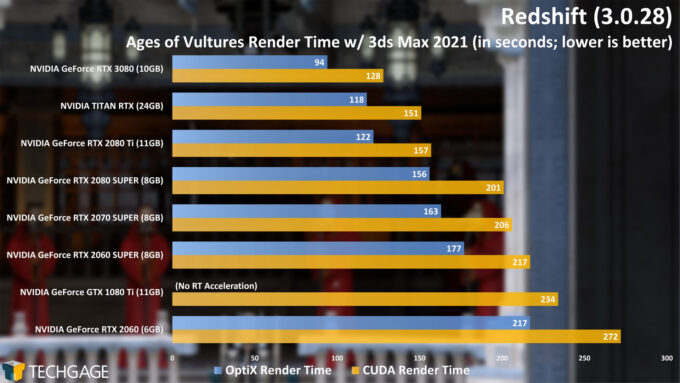

Maxon Redshift 3

With Redshift’s built-in benchmark, we immediately see some nice gains over the TITAN RTX, but not to the same extent as some of the other tests. But, it’s worth remembering that the better comparison is the 2080 SUPER, which has been sufficiently obliterated here.

Projects like those used for our manual Redshift tests really highlight the fact that not every project will render the same way (though, coincidentally, KeyShot does give us that impression sometimes). With the simpler Radio render, the RTX 3080 only proved slightly faster than the TITAN RTX with CUDA, but OptiX bumped that to a much healthier gain, evidence of the much stronger RT core setup in Ampere.

With the much more complex Age of Vultures scene, the RTX 3080 improved its performance significantly in both CUDA and OptiX compared to the TITAN RTX. It feels weird to compare this $700 GPU to last-gen’s $2,499 GPU, so if we bring the $699 2080 SUPER into the mix, the RTX 3080 looks to be 40% faster when OptiX is used.

Sept 17 Addendum: Standalone benchmark results added due to popular demand.

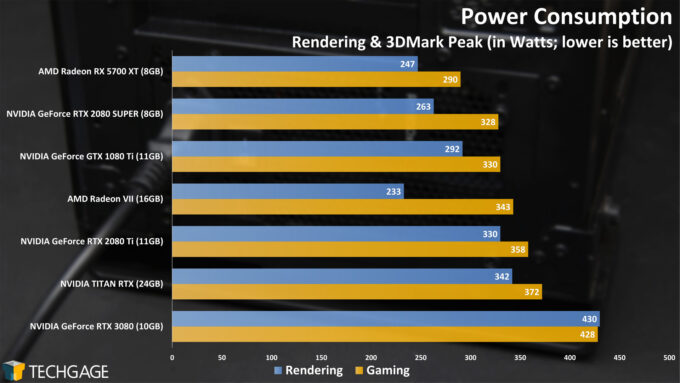

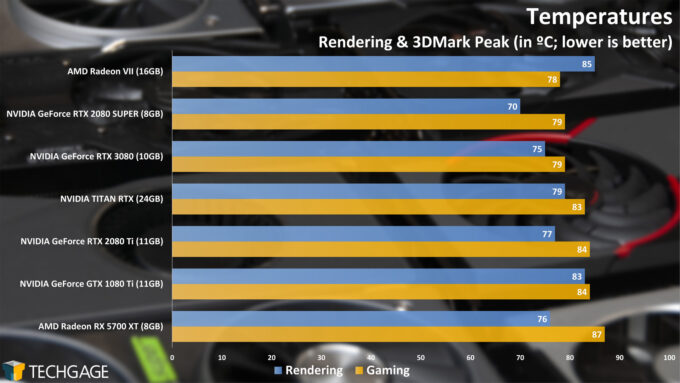

Power & Temperatures

Our power testing revealed a few interesting results, primarily because we’ve taken care of both rendering and gaming performance at the same time. The RTX 3080 drew around the same amount of power regardless of whether we were running the render or gaming stress. Interestingly, the Radeon VII used far less power while rendering than we expected (sanity checked, of course), yet it managed to peak with the highest temperature during the same test.

Clearly, the RTX 3080 is power hungry compared to the rest of the stack, but the level of performance gained negates that issue pretty easily. Faster renders also mean the power draw won’t sit at its peak for as long as it would on an older-generation card.
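The point about faster renders can be made concrete with a little arithmetic, since energy is simply power multiplied by time. The sketch below is an illustration under stated assumptions, not a measurement. It uses TDP from the lineup table as a stand-in for real draw (320W for the RTX 3080, 250W for the 2080 SUPER), takes the halved render time from the Blender BMW comparison, and plugs in a hypothetical 60-second baseline render.

```python
def energy_wh(power_watts, render_seconds):
    """Energy used by a render in watt-hours: power x time."""
    return power_watts * render_seconds / 3600


# Assumptions: TDP as a proxy for draw; 60 s baseline is hypothetical.
BASELINE_SECONDS = 60
rtx2080s_wh = energy_wh(250, BASELINE_SECONDS)
rtx3080_wh = energy_wh(320, BASELINE_SECONDS / 2)  # render time cut in half

if __name__ == "__main__":
    print(f"2080 SUPER: {rtx2080s_wh:.2f} Wh per render")
    print(f"RTX 3080:   {rtx3080_wh:.2f} Wh per render")
    print(f"Ratio:      {rtx3080_wh / rtx2080s_wh:.2f}")
```

Under those assumptions, the RTX 3080 uses about 64% of the energy per render despite the higher TDP, and the ratio holds regardless of the baseline time chosen.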

Final Thoughts

When NVIDIA released its Turing-based graphics cards in late 2018, the addition of dedicated ray tracing and AI cores felt really exciting to us. The only issue at the time was the fact that we had to endure a bit of a wait before software developers augmented their solutions with the RT acceleration. Today, we’re at the point where you can easily trip over a supported solution when roaming around.

Once Blender added its OptiX option, we really began to see what could be gained through accelerated ray tracing cores. While OptiX isn’t used in Eevee, it offers major performance advantages in Cycles. Fortunately, even the minor gains seen in Eevee are still appreciated – we’re still talking about a $699 graphics card that’s faster than last-gen’s 2080 Ti and TITAN RTX.

As we saw across most of these results, the performance gains seen with the new generation Ampere GeForces are simply incredible. There’s no other way to say it. The strong performance seen because of the RT cores makes AMD’s next move an important one. We’ve already known for ages that the new consoles all use ray tracing, and those are of course built with AMD Radeon GPUs. How that will all carry over to the desktop, we’re not sure, but we will gain a better understanding in late October when AMD makes its RDNA2 “Big Navi” announcement.

Even in the most modest of cases, the RTX 3080 outperformed the last-gen TITAN RTX by around 10%, and that’s not even the comparison card we should be choosing. Nor is the 2080 Ti, which NVIDIA has said the RTX 3080 would easily beat. The best comparison is the 2080 SUPER, which also cost $699 ahead of this launch. Compared to that card, the RTX 3080 simply slays. We do not see gains like these come around to GPUs all too often.

As mentioned before, the only limitation we can think of with this card on the creator side is the 10GB frame buffer, but we don’t see that being a common complaint anytime soon. For those with the biggest needs, the 24GB frame buffer on the RTX 3090 should solve that quandary. Hopefully NVIDIA has other SKUs planned that will help fill that 10GB~24GB void (of course it does).

While this article took care of the ProViz aspect of the new GeForce RTX 3080, a forthcoming article will take an in-depth look at gaming, which will include a number of new RTX-infused titles. Stay tuned.
