Radeon Pro vs. Quadro: A Fresh Look At Workstation GPU Performance

There hasn’t been a great deal of movement on the ProViz side of the graphics card market in recent months, so now seems like a great time to get up to speed on the current performance outlook. Equipped with 12 GPUs, one multi-GPU config, current drivers, and a gauntlet of tests, let’s find out which cards deserve your attention.

Page 8 – Power & Final Thoughts

The performance information found in this article is outdated. We’d recommend looking through our recent GPU performance content for up-to-date results, benchmarks, and graphics cards.

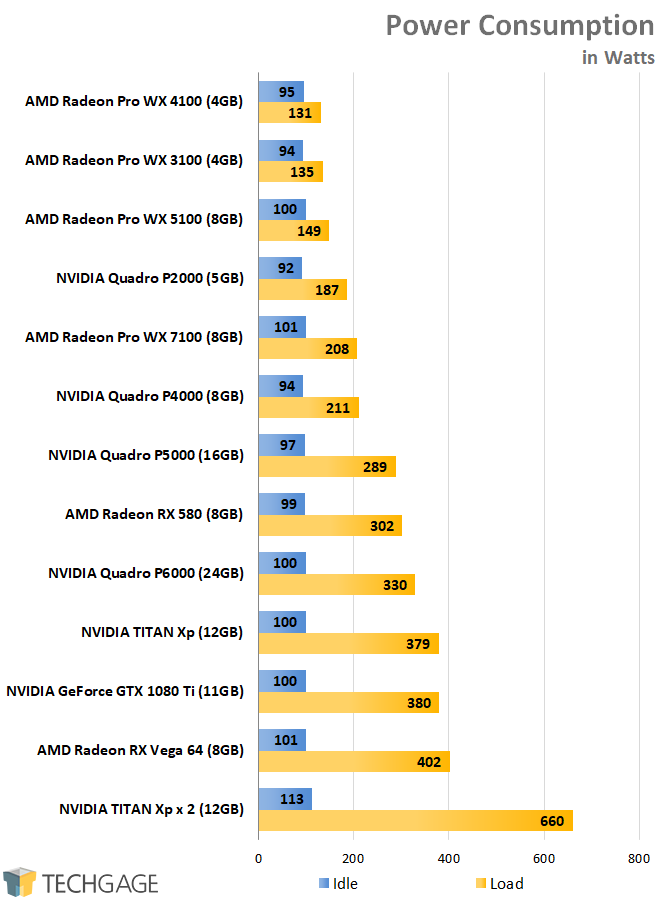

To test the power consumption of our workstation graphics card fleet, we use a combination of hardware and software tools: a Kill-a-Watt monitor that plugs into the wall, and SiSoftware’s Sandra, whose GPU Processing test helps push the GPUs to their limit.

Once the test PC is booted to the desktop, it’s left to sit until the CPU and memory are effectively idle. At this point, the idle wattage is recorded off of the Kill-a-Watt, provided the reading is likewise stable. Sandra’s GPU Processing test is then kicked off to stress the GPU, and it’s so effective that it only takes 30 to 60 seconds to reach a stable peak.
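For readers who want to repeat the idle-then-load procedure at home, the "wait until the reading is stable" step can be sketched in a few lines of Python. This is only an illustration, assuming wall-meter readings are noted by hand at regular intervals; the function name, the window size, the 2 W tolerance, and the sample wattages are all hypothetical and are not measurements from this article.

```python
from statistics import mean


def stable_reading(samples, window=5, tolerance=2.0):
    """Return the mean of the first run of `window` consecutive samples
    whose spread (max - min) is within `tolerance` watts, else None."""
    for start in range(len(samples) - window + 1):
        chunk = samples[start:start + window]
        if max(chunk) - min(chunk) <= tolerance:
            return mean(chunk)
    return None


# Made-up wattages for illustration only (not results from our testing).
idle_samples = [62, 58, 55, 54, 54, 53, 54, 54]
load_samples = [120, 180, 231, 236, 237, 236, 237, 236]

idle = stable_reading(idle_samples)
load = stable_reading(load_samples)

if idle is not None and load is not None:
    print(f"idle: {idle:.1f} W, load: {load:.1f} W, delta: {load - idle:.1f} W")
```

Because the meter sits at the wall, both figures are for the whole system, so the delta between them is a rough guide to what the GPU adds under load rather than an exact card-only number.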

To tackle the obvious “what?” in the chart above, the WX 4100 does in fact use less power than the WX 3100 when running Sandra’s GPU processing test. The 1080 Ti and TITAN Xp are effectively equal in power draw, even though the TITAN Xp has additional cores. The power-hungriest card is AMD’s RX Vega 64, which draws considerably more power than the GTX 1080-esque P5000.

Meanwhile, the WX 7100 and P4000 class cards (and lower) basically sip power from the socket in comparison to the bigger cards. Overall, pretty expected results here.

Final Thoughts

A performance look at workstation graphics cards is far more difficult to summarize than one covering gaming options. Gaming GPUs typically scale fairly predictably from game to game, but on the professional side of the market, the right optimizations can make a low-performance card outperform the competition’s high-end card. As I like to say, it pays to know your workload.

Both AMD and NVIDIA had many strengths, but to keep things simple, let’s tackle them one at a time.

With its GPUs, especially Vega, AMD delivers some explosive cryptography performance, pretty much matching the same kind of domination the company gives us with Zen (Threadripper is a real crypto beast). The company also exhibited great ray tracing performance with LuxMark and V-Ray on Vega, and perhaps not surprisingly, it slayed the Radeon ProRender test.

Overall, NVIDIA has more performance leads than AMD, thanks in part to the company’s aggressive and broad R&D driver efforts. In some cases, certain optimizations let the Quadro line perform an order of magnitude better than GeForce. Siemens NX is one example: it performs 22x better on the TITAN Xp, and 25x better on the Quadro P6000, than on the GTX 1080 Ti.

Both Autodesk’s 3ds Max and Maya tend to run better on NVIDIA, but in many cases, AMD’s (~$649 SRP) Radeon RX Vega 64 kept pretty close to the NVIDIA competition. Still, these are among the applications where lower-end Quadros (eg: P4000) can perform better than the technically superior Vega 64. Again, “know your workload”.

On the deep-learning page, we saw what is made possible with Tensor cores, and while the entire focus of that page was on training and the like, those cores can also be used in rendering when using AI denoisers. NVIDIA has talked a lot about this technology since last fall, and recently, many renderer developers, such as Chaos Group, have announced plans to support it. As with the DL/AI tests, Tensor cores can increase rendering denoising performance at least fivefold; it’s truly impressive to see.

Ultimately, there’s no such thing as a one-size-fits-all solution on the workstation side of the GPU market. In some cases, AMD performs better than NVIDIA, and vice versa. For general overall performance, NVIDIA offers the fastest GPU on the planet with its $3,000 TITAN V, which is 20-25% faster than the $1,200 TITAN Xp in single-precision workloads. If money is no obstacle for you, it’d be hard to go wrong.
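To put the TITAN V’s premium into perspective, the quick arithmetic below compares single-precision performance per dollar using only the figures quoted above (a 20-25% lead, $3,000 vs. $1,200). It’s a rough sketch: the function name is made up for illustration, and street pricing or other workloads would change the picture.

```python
def perf_per_dollar_ratio(speedup, price_new, price_base):
    """Performance per dollar of the newer card relative to the baseline.

    `speedup` is the newer card's performance as a multiple of the
    baseline (1.20 means 20% faster). A result below 1.0 means the
    newer card delivers less performance for each dollar spent.
    """
    return speedup * price_base / price_new


# Figures quoted in the text: TITAN V ($3,000) vs. TITAN Xp ($1,200).
low = perf_per_dollar_ratio(1.20, 3000, 1200)
high = perf_per_dollar_ratio(1.25, 3000, 1200)

print(f"TITAN V perf per dollar vs. TITAN Xp: {low:.2f}x to {high:.2f}x")
```

By this measure, the TITAN V delivers roughly half the single-precision performance per dollar of the TITAN Xp, which is why it only makes sense when outright speed matters more than budget.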

On the AMD side, even though we didn’t have one for testing, the Frontier Edition, at about $900, seems like great value for workstation users. It’s effectively a Vega 64, but with twice as much memory (16GB), which, as we found out on the deep-learning page, is important for those kinds of workloads. And since Vega has uncapped half-precision performance (but lacks Tensor cores), it’s an alluring choice, especially while the Vega 64 is experiencing inflated pricing; that almost makes the FE a no-brainer.
