SIGGRAPH 2016: A Look At NVIDIA’s Upcoming Pascal GP102 Quadros, Iray VR, DGX-1 & mental ray Advancements

With SIGGRAPH 2016 in full swing, NVIDIA introduces its plans for GPU-based ray tracing, updates to Iray and mental ray, and extended support for SDKs and APIs. Virtual reality gets special treatment with ray tracing and spherical composite video. And finally, NVIDIA begins scaling compute in the visual world with its Tesla P100-powered DGX-1 clusters, and introduces a monstrous 12 TFLOP Quadro, the P6000.

Page 1 – NVIDIA At SIGGRAPH 2016 – Pascal Quadros & Iray

If coffee doesn’t do it for you first thing in the morning, maybe this will. Late last week, NVIDIA dropped the mic after announcing its latest GPU, the 11 TFLOP beast that is the TITAN X. Utilizing the Pascal architecture, the new TITAN X showed enormous gains over the previous generation, with a clear lead over even the other Pascal GPUs, the GTX 1080 and 1070.

However, it isn’t gaming GPUs on the launch plate for NVIDIA today at SIGGRAPH, but workstation graphics cards from the Quadro line. If you thought the TITAN X was a powerhouse, then be prepared, as the latest generation Quadros are something special indeed.

NVIDIA Pascal Quadro

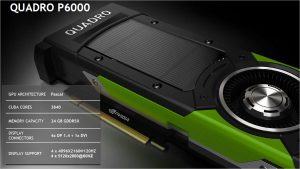

In a very unusual turn of events, the top-end Quadro GPU, the P6000, looks to be more powerful than the Pascal-powered TITAN X. We’re not talking a little more powerful either: while clock speeds are not known right now, we do know the P6000 has 256 more cores at its disposal, and twice the memory. While the TITAN X hit 11 TFLOPs, the P6000 will be 12 TFLOPs of FP32, a truly staggering number considering the old Maxwell TITAN X was 6.1 TFLOPs.

The latest TITAN X was announced at an AI research meet-up at Stanford University, rather than the gaming-themed press events we’re used to. In fact, no one even suspected the launch until it happened. The reason, though, is likely to do with the target audience. Surprising as it might seem, a lot of TITAN cards find their way not into gaming systems, but into workstations, servers, render farms and research stations. They’re a middle ground between a GTX and a Quadro, resulting in an expensive gaming card or a cheap workstation card, depending on needs.

However, the TITAN X lacks a few key features that come with the Quadro range, namely ECC memory and specialized software support licenses and APIs. This time around, the gap is even bigger, since the P6000’s extra cores allow for faster viewport rendering or CUDA processing. Let’s have a quick look at how the latest P6000 and P5000 compare to the previous Maxwell-based M6000 and M5000, as well as the new TITAN X.

| NVIDIA GeForce & Quadro Roundup | Cores | Core MHz | Memory | Mem MHz | Mem Bus | TDP |
| --- | --- | --- | --- | --- | --- | --- |
| Quadro P6000 | 3840 | TBC | 24GB | 10000 | TBC | TBC |
| Quadro P5000 | 2560 | TBC | 16GB | 10000 | TBC | TBC |
| TITAN X (Pascal) | 3584 | 1531 | 12GB | 10000 | 384-bit | 250W |
| GeForce GTX 1080 | 2560 | 1607 | 8GB | 10000 | 256-bit | 180W |
| Quadro M6000 | 3072 | 988 | 12/24GB | 6612 | 384-bit | 250W |
| Quadro M5000 | 2048 | 861 | 8GB | 6612 | 256-bit | 150W |

Not a lot to go on at the moment, but those blanks will be filled in soon. The important thing is the positioning of the new P6000. From what we understand, the TITAN X isn’t using the full GP102 core, while the P6000 is; this could be due to yield issues, with the fully functioning chips being binned for the top-line Quadros. The P5000, on the other hand, looks to be the same chip as the GTX 1080, and sports a similar compute score of 8.9 TFLOPs to the 1080’s 9 TFLOPs (likely just rounding).
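For readers who want to sanity-check these figures, here is a rough sketch of the spec-sheet arithmetic. It rests on two assumptions that are not stated in the article: that each CUDA core retires one fused multiply-add (two FLOPs) per clock, and that the "Mem MHz" column is the effective per-pin data rate. The function names are our own, and the clocks it back-solves for the P6000 and P5000 are only what the quoted TFLOP figures would imply, not confirmed specifications.

```python
# Back-of-the-envelope spec arithmetic for the cards in the roundup table.
# Assumptions (ours, not NVIDIA's): 2 FLOPs per CUDA core per clock (one FMA),
# and "Mem MHz" is the effective data rate per pin in MT/s.


def peak_fp32_tflops(cores: int, clock_mhz: float) -> float:
    """Theoretical peak FP32 throughput in TFLOPs."""
    return 2 * cores * clock_mhz / 1e6


def implied_clock_mhz(cores: int, tflops: float) -> float:
    """Clock (MHz) that a quoted TFLOP figure would imply for a core count."""
    return tflops * 1e6 / (2 * cores)


def memory_bandwidth_gbs(effective_mhz: float, bus_bits: int) -> float:
    """Theoretical memory bandwidth in GB/s."""
    return effective_mhz * bus_bits / 8 / 1000


if __name__ == "__main__":
    # Rows with known clocks and bus widths, taken from the table.
    known = {
        "TITAN X (Pascal)": (3584, 1531, 10000, 384),
        "GeForce GTX 1080": (2560, 1607, 10000, 256),
        "Quadro M6000": (3072, 988, 6612, 384),
        "Quadro M5000": (2048, 861, 6612, 256),
    }
    for name, (cores, clock, mem, bus) in known.items():
        print(f"{name}: {peak_fp32_tflops(cores, clock):.2f} TFLOPs, "
              f"{memory_bandwidth_gbs(mem, bus):.0f} GB/s")

    # Clocks are still TBC for the new Quadros, so back-solve from the
    # quoted TFLOP figures instead.
    print(f"P6000 implied clock: {implied_clock_mhz(3840, 12.0):.0f} MHz")
    print(f"P5000 implied clock: {implied_clock_mhz(2560, 8.9):.0f} MHz")
```

Under these assumptions the TITAN X’s listed 1531MHz works out to roughly 11 TFLOPs, matching the quoted figure. The 1607MHz listed for the GTX 1080 gives only about 8.2 TFLOPs, so the 9 TFLOPs quoted for it presumably reflects a higher boost clock than the one in the table.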

However, comparing the P6000 and P5000 to their respective predecessors, the M6000 and M5000, things become more interesting. The two Maxwell Quadros were released only a year ago, and they’ve already been superseded. Going by our previous tests of the GTX 1080’s performance gains over the GTX 980, we can safely estimate a direct 30-40% performance boost in most compute tasks, and that excludes additional architecture improvements, as well as software updates, which we’ll get to a little later.

While it’s no surprise VR is big business in the gaming world, VR is being taken seriously in the professional market too. This ties into the architectural reshuffle of the display handling capabilities of Pascal.

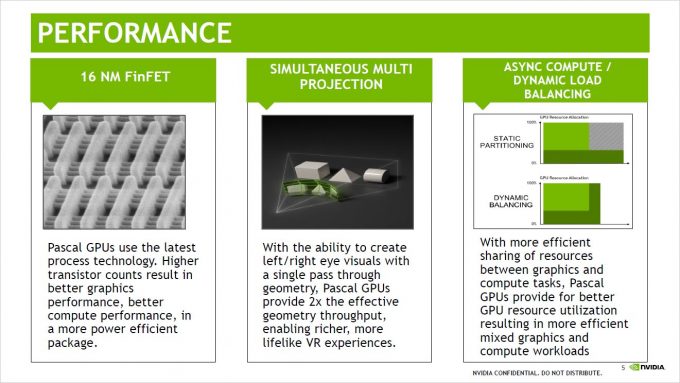

Back with the original launch of Pascal, we covered some of the new features, and one of the unsung heroes was Simultaneous Multi-Projection (SMP). This has a two-fold impact on rendering performance, helping with both multiple-display handling and VR perspective correction. In VR mode, two views from two perspectives need to be rendered, then distorted to correct for the optics in head-mounted displays. SMP allows both perspectives to be rendered in a single pass and, on top of that, with the distortion already applied, which reduces the amount of geometry that needs to be rendered and removes the need for a second pass with a post-processor.

These two factors combined mean that Pascal is 50-70% faster than Maxwell when it comes to VR rendering. According to NVIDIA’s own internal VR benchmarks, the P6000, with its much faster hardware, is 80% faster than the M6000 for VR rendering, and the P5000 is 70% faster than the M5000 in the same test. With additional rendering techniques such as Perceptually-Based Foveated VR, there are some big gains to be made for real-time rendering.
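To put those percentages in frame-time terms, the small sketch below converts an "X% faster" claim into time per frame. It assumes "80% faster" means 1.8x the throughput, and the 18ms baseline is a purely hypothetical figure chosen for illustration, not a measured result for either Maxwell card.

```python
def frame_time_ms(baseline_ms: float, percent_faster: float) -> float:
    """Frame time after a speedup, reading 'N% faster' as (1 + N/100)x throughput."""
    return baseline_ms / (1 + percent_faster / 100)


if __name__ == "__main__":
    baseline = 18.0  # hypothetical Maxwell frame time in ms, for illustration only
    print(f"P6000 vs M6000 (80% faster): {frame_time_ms(baseline, 80):.2f} ms")
    print(f"P5000 vs M5000 (70% faster): {frame_time_ms(baseline, 70):.2f} ms")
```

The point to take away is that an 80% throughput gain cuts frame time by a little under half rather than by 80%.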

SMP isn’t just for VR though; it helps with multi-monitor setups as well. If your business handles very large, high-resolution displays, the new Pascal Quadros can support up to four 5K displays simultaneously. When combined with a Quadro Sync 2 card, up to 8 GPUs can be synchronized for high-density displays.

Iray Scaling & VR

Speaking of real-time rendering, Iray now has support from 3ds Max, Maya, Rhino and Cinema 4D, with spherical panorama snapshots and stereoscopic VR coming soon. For those unfamiliar with Iray, it’s a physically based progressive rendering engine, originally built on top of mental ray, that can perform either real-time viewport renders or production renders using NVIDIA’s Material Definition Language (MDL).

It’s an impressive technology that can leverage the rendering power of any connected hardware, be it CPU or GPU, local or server farm. We took a quick look at Iray last year, but over the last six months it’s matured into a stand-alone plugin compatible with multiple rendering engines, and gained the new VR features listed above.

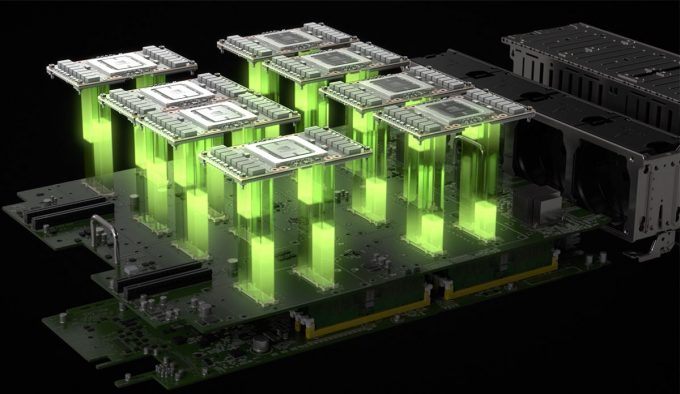

Taking Iray further is integration with Quadro VCA (Visual Computing Appliance) and the new DGX-1 clusters powered by 8x Tesla P100 compute units, which we mentioned a few months back. These DGX-1 clusters will house NVIDIA’s top-tier parts: Pascal-powered Tesla cards built on GP100 cores, complete with HBM2, similar to what was expected from the latest TITAN X GPU.

While DGX-1 was originally meant for compute, it’s quickly finding a home in render farms. Using the OptiX 4 API and NVLink, four of the DGX-1 Tesla cards can be pooled to create a 64GB interactive GPU memory pool for large data sets – perfect for database crunching and complex render scenes.

The VR capabilities of Iray open up a number of possibilities. Showroom-floor walk-through renders of cars are an often-cited example (realistic physically based renders with changes being made to the vehicle on the spot), but films can benefit as well.

While there’s more imagination and green screen used in modern films, it’s sometimes difficult to get a different perspective on what you are immersing your audience in when a 30″ monitor in a studio is your only view into the virtual world. Iray VR will allow you to move around the scene and make changes in real-time, even finding new angles for the shot. Further to this, it’s possible to render full VR videos, allowing the viewer to move their head around a scene while guided through a story.
