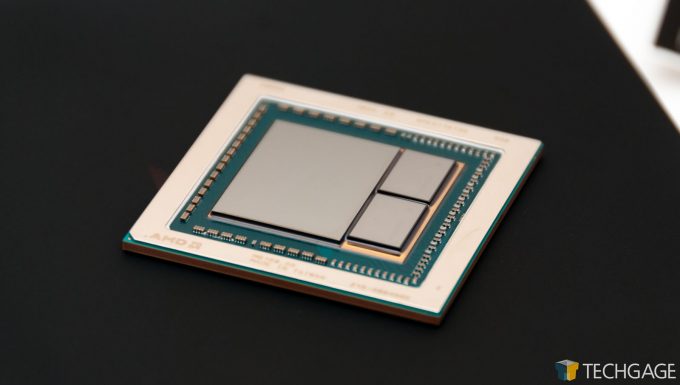

A Look At AMD’s Radeon RX Vega 64 Workstation & Compute Performance

After months and months of anticipation, AMD’s RX Vega series has arrived. The first model out-of-the-gate is the RX Vega 64, going up against the GTX 1080 in gaming. In lieu of a look at gaming to start our Vega coverage, we decided to go the workstation route – and we’re glad we did. Prepare yourself to be decently surprised.

Page 1 – Introduction, A Look At AMD’s Radeon RX Vega 64

April 30, 2018 Addendum: Updated performance can be found here.

When most people receive the latest top-end graphics card from AMD or NVIDIA, they get straight to testing its gaming performance. Me? Well, I’m not most people. I am hoping that there is some method to my madness, though. Hear me out.

AMD’s Radeon RX Vega is one of the worst-kept secrets in the history of the industry. Well before embargo-abiding press could release details, leakers around the globe told us everything we needed to know. That includes its performance relative to NVIDIA’s GeForce GTX 1080, the fact that it’s going to run hotter and draw more power than the competition, and that overall, it’s not the launch AMD was hoping for.

As we were allowed to reveal a couple of weeks ago, the $499 USD RX Vega 64 is designed to go up against NVIDIA’s GeForce GTX 1080, also priced at $499 USD. At the moment, though, the least expensive GTX 1080 I can find on Amazon is ~$539 USD. It’s not expected that Vega 64 (and 56) will launch with their SRPs intact (current listings have had Vega at well over $1000), so the sad reality is, you’re going to be paying a premium on any GPU solution right now, unless you happen to get lucky.

Back to the story at hand, based on the myriad leaks that occurred surrounding RX Vega, which have made it sound like Vega 64 will never actually be able to beat the GTX 1080 at gaming, I decided to take a look at this card first from a workstation / compute perspective, to see if any unexpected advantages could be seen. Perhaps surprisingly, there are indeed some, and some of those are downright impressive.

Note: You can check out our preliminary gaming results here; more will come later when we get the full article prepared.

Admittedly, another reason I decided to take a look at the compute performance first is that our workstation test rig was still hooked up, wrapping up testing that had been under way since I posted my look at AMD’s Radeon Pro WX 3100. In that review, six GPUs were tested in total; for this one, that’s been bumped to ten.

This is going to be the first of at least three articles surrounding workstation and gaming performance of RX Vega. This article sees the Vega 64 tackle the usual gauntlet of workstation tests, whereas the next article will take an in-depth look at gaming performance in our apples-to-apples tests. For those wanting quick and dirty gaming results, I have published a couple here. You can have a look at the reviewer’s kit we received right here.

| AMD Radeon Series | Cores | Core Base MHz | Core Boost MHz | FP32 (TFLOPS) | FP16 (TFLOPS) | Memory | Bandwidth | TDP |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Radeon RX VEGA 64 LCE | 4096 | 1406 | 1677 | 13.7 | 27.5 | 8192 MB | 484 GB/s | 345W |
| Radeon RX VEGA 64 | 4096 | 1247 | 1546 | 12.66 | 25.3 | 8192 MB | 484 GB/s | 295W |
| Radeon RX VEGA 56 | 3584 | 1156 | 1471 | 10.5 | 21 | 8192 MB | 410 GB/s | 210W |
| Radeon R9 Fury X | 4096 | 1050 | – | 8.6 | – | 4096 MB | 512 GB/s | 275W |
| Radeon R9 Fury | 3584 | 1000 | – | 7.16 | – | 4096 MB | 512 GB/s | 275W |
| Radeon R9 Nano | 4096 | 1000 | – | 8.19 | – | 4096 MB | 512 GB/s | 175W |
| Radeon RX 580 | 2304 | 1257 | 1340 | 6.17 | – | 8192 MB | 256 GB/s | 185W |
| Radeon RX 480 | 2304 | 1120 | 1266 | 5.83 | – | 8192 MB | 256 GB/s | 150W |
| Radeon RX 570 | 2048 | 1168 | 1244 | 5.1 | – | 4096 MB | 224 GB/s | 150W |
| Radeon RX 470 | 2048 | 926 | 1206 | 4.94 | – | 4096 MB | 211 GB/s | 120W |
| Radeon RX 560 | 1024 | 1175 | 1275 | 2.61 | – | 4096 MB | 112 GB/s | 80W |
| Radeon RX 460 | 896 | 1090 | 1200 | 2.15 | – | 4096 MB | 112 GB/s | 75W |
| Radeon RX 550 | 512 | 1100 | 1183 | 1.21 | – | 4096 MB | 112 GB/s | 50W |
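The FP32 and FP16 figures in the table can be reproduced from the core count and boost clock alone. Here is a minimal sketch, assuming the common convention that each shader core completes one fused multiply-add (two floating-point operations) per clock, and that Vega's packed FP16 doubles that rate. The function name `peak_tflops` is made up for illustration.

```python
def peak_tflops(cores: int, clock_mhz: float, ops_per_clock: int = 2) -> float:
    """Theoretical peak throughput in TFLOPS.

    Assumes every core completes `ops_per_clock` floating-point
    operations per cycle (2 = one fused multiply-add).
    """
    return cores * ops_per_clock * clock_mhz * 1e6 / 1e12


# (cores, boost clock in MHz), taken from the spec table
vega_cards = {
    "Radeon RX Vega 64 LCE": (4096, 1677),
    "Radeon RX Vega 64": (4096, 1546),
    "Radeon RX Vega 56": (3584, 1471),
}

for name, (cores, boost) in vega_cards.items():
    fp32 = peak_tflops(cores, boost)
    # Assumption: packed FP16 runs at twice the FP32 rate on Vega
    fp16 = peak_tflops(cores, boost, ops_per_clock=4)
    print(f"{name}: {fp32:.2f} TFLOPS FP32, {fp16:.2f} TFLOPS FP16")
```

These are theoretical peaks at the rated boost clock; a card that throttles under load will sit below them.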

I mentioned at the outset that Vega’s higher-than-desired power consumption hasn’t been a secret, and the rated spec of 295W TDP for Vega 64 confirms that (RX 580 is 185W, by contrast). This unfortunately leads me to the first major complaint about Vega 64, or at least this particular Vega 64. The “reference” cooler (if you want to call it that) looks nice, but it’s far from being an ideal solution. The card will run hot, as 295W would suggest, but to the point where throttling can take place.

The existence of the liquid-cooled Vega 64 highlights that the GPU itself can achieve far greater performance than this RX 480-style cooler allows. Sometimes, AMD and NVIDIA release new GPUs to reviewers without a reference version; companies like ASUS, GIGABYTE, MSI, PowerColor, or others, will send us custom designs. The GTX 1050 and 1050 Ti were sampled like this, and I truly feel Vega 64 should have been, too.

The fact of the matter is, reviewers can’t show Vega 64 or 56 in the light they should actually be shown. Some reviewers may have received the liquid-cooled version of the card, and I’d expect to see huge gains in performance there. And, better still, the card is sure to run cooler, with reduced chance of ever throttling. If these reference designs mimicked an ASUS STRIX, with three large fans, I am sure that would have aided in performance as well.

The moral of the story is, at some point soon, we need to look at vendor cards once they begin to hit the market, and hopefully at that time, drivers will be even better optimized, giving us a nice overall uptick in performance. Still, this acts as a good first test – just don’t treat the performance as gospel. Flat out, I would not recommend buying a Vega card with this reference cooler. It can’t handle the heat, and as a result, throttling can occur, and noise levels will rise.

Testing AMD’s Radeon RX Vega 64

Alright, from the get-go, a couple of things need to be made clear. The performance seen on RX Vega 64 is not meant to be representative of AMD’s workstation and compute performance on Vega in general. The upcoming WX 9100 and the preexisting Vega Frontier Edition are going to be equipped with Pro-specific optimized drivers, so while some of the results here should carry over to those cards, others won’t.

AMD didn’t send out Vega FE review samples, so this is my very first look at Vega in this context. I will be able to add some Vega FE results to our charts in the weeks ahead, nonetheless, and likewise, a Quadro P5000 is currently en route to help complete the overall look at current WS GPU options.

On the following pages, I’ll be putting AMD’s Radeon RX Vega 64 through a gauntlet of real-world and synthetic tests, utilizing apps from Autodesk, Adobe, SPEC, SiSoftware, and a handful of others (including light gaming tests for good measure).

All tests are run at least twice to produce an accurate result, and if for some reason an odd result creeps up, I do a third run. In the case of this particular review, no tests had to go that route, as most of the benchmarks are very good at delivering similar results with each repeated run.
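The repeat-run policy described above can be sketched roughly as follows. This is only an illustration: the 3% tolerance, the `run_once` callable, and the choice to report a mean or median are all assumptions, not the actual procedure used for this review.

```python
from statistics import mean, median
from typing import Callable, List


def benchmark(run_once: Callable[[], float], tolerance: float = 0.03) -> float:
    """Run a test twice; add a third run if the first two disagree.

    `tolerance` is a hypothetical threshold: the relative spread beyond
    which a result counts as "odd".
    """
    results: List[float] = [run_once(), run_once()]
    # Guard against dividing by zero if both runs report 0
    baseline = max(abs(r) for r in results) or 1.0
    spread = abs(results[0] - results[1]) / baseline
    if spread > tolerance:
        # Odd result: a third run acts as the tie-breaker
        results.append(run_once())
        return median(results)
    return mean(results)


# Usage with canned scores standing in for a real benchmark
scores = iter([100.0, 101.0])
print(benchmark(lambda: next(scores)))  # two consistent runs are averaged
```

Taking the median after a third run means a single outlier cannot drag the reported figure up or down.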

The Windows 10 Pro (Creators Update) install used for testing has a couple of things disabled: User Account Control, Firewall, Search Indexer, OneDrive, and all notifications. During the install, everything on the Customize screen was disabled. All testing is conducted at 2560×1440 resolution (with the exception of 4K 3ds Max testing), with driver Vsync options left default.

Techgage’s workstation GPU test PC is built to reflect a high-end desktop that rules out as many bottlenecks as it can. Intel’s top-end Core i9-7900X is used here, giving us a ton of breathing room on the CPU side. Kingston’s super-fast KC1000 M.2 SSD and 64GB of its HyperX FURY DRAM give us the same breathing room on the storage and memory side.

Here’s the full list of specs:

| Techgage Workstation Test System | |
| --- | --- |
| Processor | Intel Core i9-7900X (10-core; 3.3GHz) |
| Motherboard | GIGABYTE X299 AORUS Gaming 7 |
| Memory | Kingston HyperX FURY (4x16GB; DDR4-2666 16-18-18) |
| Graphics | AMD Radeon RX Vega 64 8GB (Radeon 17.30.1051 Beta); AMD Radeon Pro WX 7100 8GB (Radeon 17.Q2.1); AMD Radeon Pro WX 5100 8GB (Radeon 17.Q2.1); AMD Radeon Pro WX 4100 4GB (Radeon 17.Q2.1); AMD Radeon Pro WX 3100 4GB (Radeon 17.Q2.1); NVIDIA TITAN Xp 12GB (GeForce 385.12); NVIDIA GeForce GTX 1080 Ti 11GB (GeForce 385.12); NVIDIA Quadro P6000 24GB (Quadro 385.12); NVIDIA Quadro P4000 8GB (Quadro 384.76); NVIDIA Quadro P2000 4GB (Quadro 384.76) |
| Audio | Onboard |
| Storage | Kingston KC1000 960GB M.2 SSD |
| Power Supply | Corsair 80 Plus Gold AX1200 |
| Chassis | Corsair Carbide 600C Inverted Full-Tower |
| Cooling | Corsair Hydro H100i V2 AIO Liquid Cooler |
| Et cetera | Windows 10 Pro (64-bit; build 15063) |

For an in-depth pictorial look at this build, head here.

The benchmark results are categorized and spread across the next six pages. On page 2, AMD’s ProRender plugin is used in Autodesk’s 3ds Max 2017 to render two scenes, while two de facto benchmarking tools, as well as a newbie, wrap it up: Cinebench, LuxMark, and V-Ray Benchmark. Page 3 is home to an encode and CAD test, thanks to Adobe’s Premiere Pro CC 2017 and two 4K projects, and also Autodesk’s AutoCAD 2016, exercised through the use of the excellent Cadalyst benchmark.

SPEC produces so many benchmarks worthy of inclusion in our workstation GPU content that it’s earned its own page. So on page 4, SPECviewperf helps us gain an understanding of viewport performance across 9 different applications. SPECapc 3ds Max 2015 and Maya 2012 finish things up with exhaustive tests in their namesake Autodesk products.

Like SPEC, Sandra’s test suite is large, so page 5 is dedicated to four of its tests: Cryptography, Financial Analysis, Scientific Analysis, as well as memory bandwidth. Two quick and dirty gaming benchmarks are featured on page 6: Futuremark’s 3DMark, and Unigine’s Superposition. Finally, the last page includes power results (sadly, no temperatures this go around), as well as the final thoughts.

So without further ado, let’s get this train moving.
